Best applicant tracking system

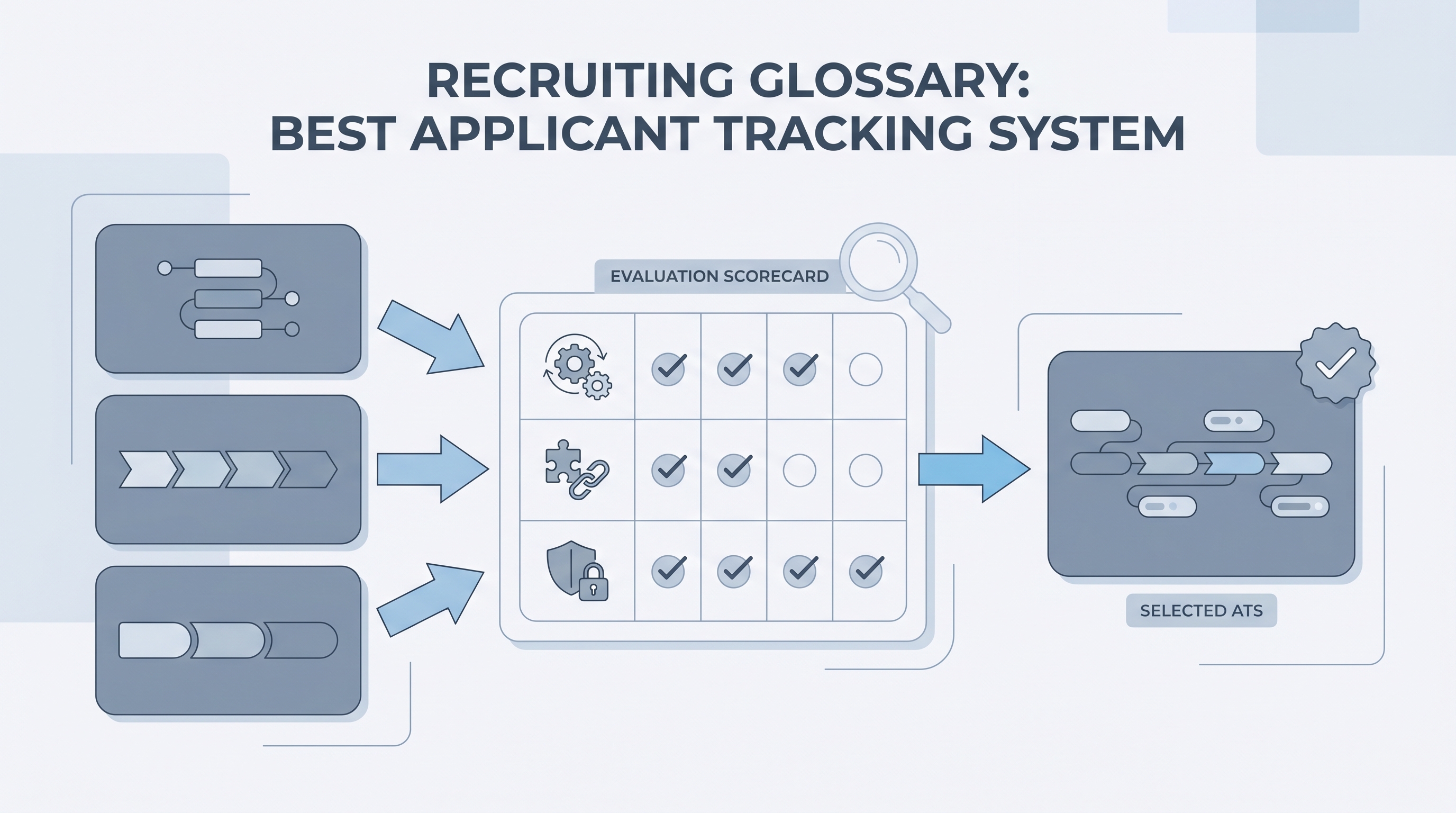

The applicant tracking system that fits your team best is the one that matches your stage logic, integration requirements, and compliance posture, evaluated through realistic pilot workflows rather than feature comparison grids alone.

Michal Juhas · Last reviewed May 4, 2026

What is the best applicant tracking system?

There is no universal winner. The best applicant tracking system is the one your recruiters can run without heroic spreadsheets, where integrations keep candidate identities clean across tools, and where compliance questions have a documented answer. Buyers compare ATS cores, career site capabilities, CRM layers, and analytics modules, then test each vendor honestly against the workflows that broke their current system.

In practice

- A TA director says "we outgrew Lever" or "Greenhouse works for us because engineering owns their own reqs." Both are stage logic and ownership decisions, not just feature comparisons.

- TA ops teams talk about the ATS as the system of record: if stages are vague or fields inconsistently filled, pipeline reports and AI scoring layers inherit the same mess.

- Vendors pitch "best applicant tracking system" in RFPs; practitioners translate that to webhook reliability, dedupe rules, and how quickly support responds on a Friday before a campaign launch.
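Dedupe rules are easier to compare across vendors once you have written your own down. The sketch below is a minimal, hypothetical version in Python. It assumes each record has an email and an optional phone number, treats trimmed, case-insensitive email matches as duplicates, and falls back to digits-only phone matching. The `Candidate` type and key format are illustrative, not any ATS vendor's schema. Lowercasing the whole address is itself a policy choice, and a single key per record will miss some matches that a real rule (with manual review) would catch.

```python
import re
from dataclasses import dataclass
from typing import Optional


@dataclass
class Candidate:
    # Hypothetical minimal record; real ATS schemas carry many more fields.
    candidate_id: str
    email: str = ""
    phone: str = ""


def dedupe_key(c: Candidate) -> Optional[str]:
    """Return a match key, or None when the record has nothing to match on."""
    # Trim and case-fold email; provider-specific rules (dots, +tags) are left out on purpose.
    email = c.email.strip().lower()
    if email:
        return "email:" + email
    # Fall back to digits only, so "+1 (555) 010-0000" equals "15550100000".
    digits = re.sub(r"\D", "", c.phone)
    return "phone:" + digits if digits else None


def find_duplicates(candidates: list[Candidate]) -> dict[str, list[str]]:
    # Group candidate IDs by key and keep only keys shared by two or more records.
    groups: dict[str, list[str]] = {}
    for c in candidates:
        key = dedupe_key(c)
        if key is not None:
            groups.setdefault(key, []).append(c.candidate_id)
    return {k: ids for k, ids in groups.items() if len(ids) > 1}


records = [
    Candidate("c1", "Ana.Novak@example.com"),
    Candidate("c2", " ana.novak@example.com "),
    Candidate("c3", phone="+1 (555) 010-0000"),
    Candidate("c4", phone="15550100000"),
    Candidate("c5", "other@example.com"),
]
print(find_duplicates(records))
# {'email:ana.novak@example.com': ['c1', 'c2'], 'phone:15550100000': ['c3', 'c4']}
```

Bringing a rule like this to a demo gives you a concrete question for each vendor: which of these records would your system merge, flag, or leave alone?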

Quick read, then how hiring teams use it

This is for recruiters, sourcers, TA, and HR partners who need the same vocabulary in debriefs, vendor calls, and policy reviews. Skim the first section when you need a fast shared picture. Use the second when you are deciding how the choice plays out in your ATS configuration, sourcing tools, or candidate communications.

Plain-language summary

- What it means for you: The best ATS for your team is the one that matches how your specific hiring process actually runs, not the most popular brand name.

- How you would use it: You evaluate vendors by running your real hiring scenarios through each finalist, not by reading feature comparison grids.

- How to get started: Write down five moments last month when your current ATS slowed the team down. Turn those into required demo scenarios every finalist must pass.

- When it is a good time: When contract renewal approaches, when duplicate candidate records or GDPR requests spike, or when AI modules need a more reliable data foundation.
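The five slowdown moments can become a pass/fail gate that every finalist faces in the same way. Below is a minimal sketch in Python, assuming you record one result per scenario after each demo. The scenario names are hypothetical placeholders for your own list, and a scenario the vendor skipped counts as a failure rather than as "pending".

```python
from dataclasses import dataclass, field

# Hypothetical scenarios built from "moments the current ATS slowed us down".
# Replace these with your own five before the first demo.
REQUIRED_SCENARIOS = [
    "merge a candidate who applied to two reqs",
    "hiring manager advances a candidate without recruiter help",
    "GDPR deletion request across ATS and CRM",
    "bulk reject with a templated email",
    "export last quarter's pipeline by stage",
]


@dataclass
class Finalist:
    name: str
    # Scenario -> True only if it worked live, on your data, during the demo.
    results: dict[str, bool] = field(default_factory=dict)


def failed_scenarios(finalist: Finalist, required: list[str]) -> list[str]:
    # A scenario the vendor never ran counts as a failure, not as "pending".
    return [s for s in required if not finalist.results.get(s, False)]


def passes_gate(finalist: Finalist, required: list[str]) -> bool:
    # Every required scenario must pass; there is no partial credit.
    return not failed_scenarios(finalist, required)


vendor_a = Finalist("Vendor A", {s: True for s in REQUIRED_SCENARIOS})
vendor_b = Finalist("Vendor B", {REQUIRED_SCENARIOS[0]: True, REQUIRED_SCENARIOS[1]: False})
for vendor in (vendor_a, vendor_b):
    print(vendor.name, passes_gate(vendor, REQUIRED_SCENARIOS))
```

Keeping the gate strictly binary is a deliberate choice: it stops a polished tour of unrelated features from averaging out a failure on a workflow that broke your current system.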

When you are running live reqs and tools

- What it means for you: Your ATS stage definitions set the quality floor for all downstream tools, from resume parsing to analytics to AI scoring features.

- When it is a good time: Before signing a multiyear contract, after a failed integration audit, or when hiring managers stop trusting pipeline reports.

- How to use it: Run parallel exports from the current system, involve legal and security in vendor evaluations early, and maintain a single scorecard that owners update weekly during trials.

- How to get started: Freeze net-new shadow IT integrations for ninety days while you document what currently moves candidate data and under what legal basis.

- What to watch for: AI modules without documented model versioning, opaque pricing for integrations, and vendors who cannot show error budgets or how they handle a webhook failure at 11 p.m.
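When a vendor explains how it handles a webhook failure, two behaviors are worth asking about: whether it retries with increasing delays, and whether each event carries a stable ID so your side can ignore redeliveries. The sketch below models both in Python under stated assumptions: the `id` field, the event shape, and the 1s/2s/4s backoff schedule are hypothetical, and the set of seen IDs would need durable storage in a real integration.

```python
import time
from typing import Callable


class WebhookReceiver:
    """Applies each event at most once, keyed on a vendor-supplied event ID (assumed)."""

    def __init__(self) -> None:
        self.seen_ids: set[str] = set()  # a real integration would persist this
        self.applied: list[dict] = []

    def handle(self, event: dict) -> str:
        if event["id"] in self.seen_ids:
            # Redelivery of an event already processed: acknowledge, do nothing.
            return "duplicate"
        self.applied.append(event)  # stand-in for updating the candidate record
        self.seen_ids.add(event["id"])
        return "applied"


def deliver_with_retry(send: Callable[[], bool], max_attempts: int = 5,
                       base_delay: float = 1.0,
                       sleep: Callable[[float], None] = time.sleep) -> int:
    """Sender-side retry with exponential backoff; returns the attempt that succeeded."""
    for attempt in range(1, max_attempts + 1):
        if send():
            return attempt
        if attempt < max_attempts:
            sleep(base_delay * 2 ** (attempt - 1))  # 1s, 2s, 4s, ...
    raise RuntimeError(f"delivery failed after {max_attempts} attempts")


receiver = WebhookReceiver()
event = {"id": "evt_123", "type": "candidate.stage_changed", "stage": "onsite"}
print(receiver.handle(event))  # applied
print(receiver.handle(event))  # duplicate

outcomes = iter([False, False, True])  # fails twice, then succeeds
delays: list[float] = []
attempts = deliver_with_retry(lambda: next(outcomes), sleep=delays.append)
print(attempts, delays)  # 3 [1.0, 2.0]
```

The two halves depend on each other: retries mean the same stage change can arrive more than once, and without an idempotent receiver a redelivered event could, for example, trigger a second rejection email.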

Where we talk about this

In AI with Michal live sessions, the AI in recruiting and sourcing automation workshops cover realistic ATS vendor evaluation, integration mapping, and when to walk away from a promising roadmap. Bring your current stack and integration list to Workshops so peers can pressure-test your reasoning before you sign anything.

Around the web (opinions and rabbit holes)

Third-party creators move fast. Treat these as starting points, not endorsements, and double-check anything before you wire candidate data.

YouTube

- Search "how to choose an ATS for recruiters" for buyer walkthroughs that show admin settings and stage configuration, not only marketing slides.

- Search "ATS demo evaluation script" for practitioner question lists teams use during finalist rounds.

Reddit

- r/recruiting and r/HRIS host active migration and switching threads; check post dates because ATS features change frequently.

Quora

- Search "ATS selection criteria growing team" for practitioner answers on specific pain points; treat as conversation starters, not buying guides.

ATS evaluation: brand-first versus workflow-first

| Criteria | Brand-first approach | Workflow-first approach |
|---|---|---|
| Starting point | Analyst reports, peer brand recognition | Your five hardest hiring scenarios |
| Demo format | Vendor-controlled tour | Candidate-supplied payloads |
| AI features | Included in pitch | Evaluated separately for bias and versioning |
| Integration audit | Post-contract | Pre-signature |

Related on this site

- Glossary: Applicant tracking software, Best recruitment platform, Talent acquisition metrics, Workflow automation, Resume parsing, Candidate data enrichment

- Blog: AI sourcing tools for recruiters

- Course: Starting with AI: the foundations in recruiting

- Live cohort: Workshops

- Membership: Become a member