Candidate data enrichment

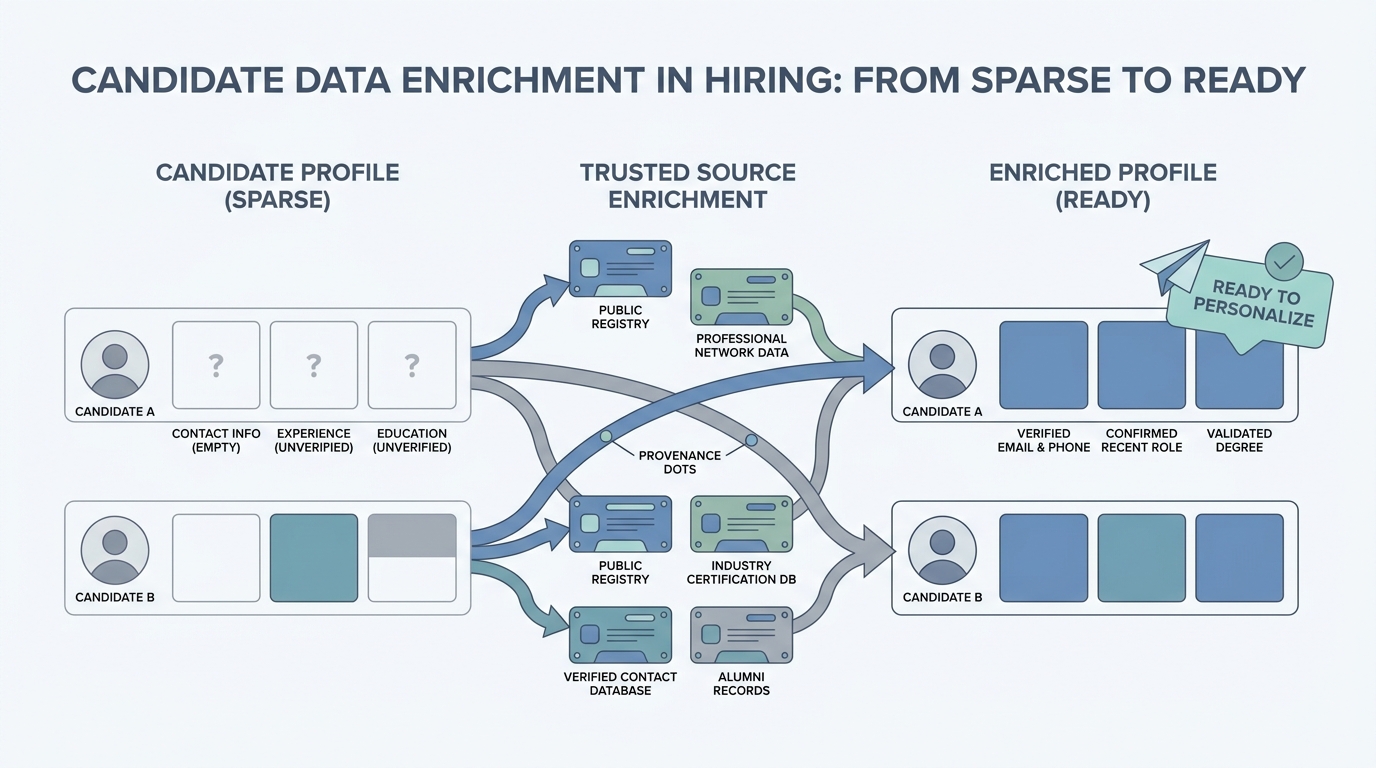

Adding structured fields (email, employer, skills signals, project links) to a candidate record from public or licensed sources so recruiters can personalize outreach or score fit without retyping research.

Michal Juhas · Last reviewed May 2, 2026

What is candidate data enrichment?

Candidate data enrichment means filling in missing profile details from trusted sources, like email, phone, or employer history, before you reach out. You should always note where each fact came from and follow privacy rules.

In practice

- A sourcer drops a LinkedIn URL into a tool that suggests a work email so they can send a tailored note; the button often says "reveal" or "enrich." RecOps slides talk about "enrichment vendors" when they compare data budgets.

- Before an onsite, someone adds current title and location from the web into the ATS because the application was thin. That manual cleanup is low-tech enrichment people do every week.

- Legal reviews ask "where did this phone number come from" after a GDPR note; enrichment as a topic shows up in vendor questionnaires more than in candidate-facing copy.

Quick read, then how hiring teams use it

This is for recruiters, sourcers, TA, and HR partners who need the same vocabulary in debriefs, vendor calls, and policy reviews. Skim the first section when you need a fast shared picture. Use the second when you are deciding how it shows up in the ATS, sourcing tools, or candidate communications.

Plain-language summary

- What it means for you: Enrichment is filling in missing phone, title, or company facts from a second source so your message is not embarrassingly blank.

- How you would use it: You only add fields you are allowed to store, you note where the fact came from, and you still ask the candidate to confirm anything sensitive.

- How to get started: Pick one provider, run five known profiles you hired already, and compare outputs to reality before you wire automation.

- When it is a good time: After sourcing strings are stable, not before, so you enrich the right people, not the whole internet.
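The "five known profiles" check in the list above can be sketched in a few lines of Python. This is a minimal illustration, not a vendor integration: the field names, the dictionary shape, and the sample data are all assumptions made up for the example. It simply counts, per field, how often the provider was right, wrong, or silent.

```python
# Hypothetical field names; use whatever your ATS actually stores.
FIELDS = ("email", "title", "company")


def normalize(value):
    """Trim and lowercase so 'Acme ' and 'acme' count as the same answer."""
    return (value or "").strip().lower()


def score_provider(known, enriched):
    """Count correct, wrong, and missing answers per field.

    Both arguments map a profile id to a dict of field -> value.
    That shape is an assumption for this sketch, not a vendor format.
    """
    report = {f: {"correct": 0, "wrong": 0, "missing": 0} for f in FIELDS}
    for profile_id, truth in known.items():
        guess = enriched.get(profile_id, {})
        for field in FIELDS:
            got = normalize(guess.get(field))
            if not got:
                report[field]["missing"] += 1
            elif got == normalize(truth.get(field)):
                report[field]["correct"] += 1
            else:
                report[field]["wrong"] += 1
    return report


# Made-up sample data: two past hires and what a provider returned for them.
known = {
    "p1": {"email": "ana@example.com", "title": "Data Engineer", "company": "Acme"},
    "p2": {"email": "raj@example.com", "title": "Recruiter", "company": "Globex"},
}
enriched = {
    "p1": {"email": "Ana@Example.com ", "title": "Data Engineer", "company": "Acme Corp"},
    "p2": {"email": None, "title": "Recruiter", "company": "Globex"},
}

for field_name, counts in score_provider(known, enriched).items():
    print(field_name, counts)
```

Exact string matching is deliberately strict here, so "Acme Corp" against "Acme" counts as wrong. Whether that is the right call depends on how your team treats near matches; a human should look at the "wrong" rows either way.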

When you are running live reqs and tools

- What it means for you: Enrichment moves personal data between systems, so GDPR, retention, and vendor subprocessors matter as much as match rates. Pair it with workflow automation hygiene.

- When it is a good time: When CRM hygiene blocks campaigns, or when hiring managers demand firmographics you do not store yet.

- How to use it: Log source per field, cap refresh cadence, and keep a human inbox for mismatches. Read AI sourcing tools for recruiters before you chain vendors.

- How to get started: Pilot on internal alumni lists where consent is clear, then widen.

- What to watch for: Vendors that cannot explain where their data comes from (the "dark-web vibes" kind), duplicate rows in the ATS, and models that "guess" emails.
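"Log source per field, cap refresh cadence, and keep a human inbox for mismatches" can be sketched as a small data structure. Everything named here is hypothetical: the `FieldValue` and `CandidateRecord` classes, the source labels, the 90-day interval, and the in-memory `review_queue` standing in for a real inbox. Treat it as one possible shape under those assumptions, not how any particular ATS or vendor works.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta, timezone

# Assumed policy for this sketch: re-check a field at most once every 90 days.
MIN_REFRESH_INTERVAL = timedelta(days=90)


@dataclass
class FieldValue:
    value: str
    source: str            # hypothetical label, e.g. "vendor_a" or "candidate_confirmed"
    fetched_at: datetime


@dataclass
class CandidateRecord:
    candidate_id: str
    fields: dict = field(default_factory=dict)  # field name -> FieldValue


# Stands in for the "human inbox"; a real setup would use a ticket or task queue.
review_queue = []


def apply_enrichment(record, name, new_value, source, now=None):
    """Store a value with its source, or hand a conflict to a human.

    Returns "stored", "skipped" (checked too recently), "unchanged", or "queued".
    """
    now = now or datetime.now(timezone.utc)
    current = record.fields.get(name)
    if current is None:
        record.fields[name] = FieldValue(new_value, source, now)
        return "stored"
    if now - current.fetched_at < MIN_REFRESH_INTERVAL:
        return "skipped"
    if current.value.strip().lower() == new_value.strip().lower():
        # Same fact seen again: keep the original source, refresh the timestamp.
        current.fetched_at = now
        return "unchanged"
    # Conflicting fact: never overwrite silently, let a person decide.
    review_queue.append({
        "candidate_id": record.candidate_id,
        "field": name,
        "current": current.value,
        "current_source": current.source,
        "proposed": new_value,
        "proposed_source": source,
    })
    return "queued"
```

The design choice worth copying is that a conflicting value never replaces the stored one automatically. The stored value and its source stay put until someone reviews the queue entry.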

Where we talk about this

Sourcing automation workshops treat enrichment as the moment personal data leaves one API and lands in another: keys, logs, and failure alerts get real fast. AI in recruiting blocks ask who reviews enriched facts before outreach. Bring vendor contracts to Workshops if you want peer pressure on the boring parts.

Around the web (opinions and rabbit holes)

Third-party creators move fast. Treat these as starting points, not endorsements, and double-check anything before you wire candidate data.

YouTube

- Introduction to Large Language Models (Google Cloud Tech) helps you explain to finance why "just add AI" does not replace data governance.

- Generative AI in 9 minutes (Fireship) is a fast stack-level reset before you evaluate enrichment startups.

- The AI Adoption Curve Explained (IBM Technology) is useful when leadership wants a maturity story tied to spend.

Reddit

- Best tool to get full candidate and company data? in r/Recruitment compares providers with blunt pros and cons.

- Candidates Enrichment & Pre-qualification in r/Recruitment walks through workflow questions from people shipping sequences.

- What sourcing methods and tools do you use EXCEPT LinkedIn? in r/recruiting often mentions enrichment alongside alternate channels.

Quora

- What is data enrichment? is generic B2B context; translate carefully to recruiting and privacy.

Enrichment in a responsible stack

| Step | Human accountability |
|---|---|
| Choose vendor or API | Legal + procurement |
| Map fields | TA ops |
| Model draft | Recruiter review before send |
| Retention | HR systems owner |
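The "recruiter review before send" row in the table can be enforced in code rather than left to habit. Below is a minimal sketch, assuming a hypothetical `OutreachDraft` object and a `deliver` callable that stands in for whatever email or sequencing tool you use. Neither is a real product API.

```python
from dataclasses import dataclass
from typing import Callable, Optional, Tuple


@dataclass
class OutreachDraft:
    candidate_id: str
    body: str
    enriched_fields_used: Tuple[str, ...]  # enriched facts the draft relies on
    approved_by: Optional[str] = None      # set only by a human reviewer


def approve(draft: OutreachDraft, reviewer: str) -> None:
    """Record which recruiter checked the enriched facts in this draft."""
    if not reviewer.strip():
        raise ValueError("An approval needs a named reviewer.")
    draft.approved_by = reviewer.strip()


def send(draft: OutreachDraft, deliver: Callable[[str, str], None]) -> None:
    """Refuse to send until a recruiter has approved the draft.

    deliver is a hypothetical stand-in for your outreach tool.
    """
    if draft.approved_by is None:
        raise PermissionError(
            f"Draft for {draft.candidate_id} uses enriched fields "
            f"{list(draft.enriched_fields_used)} and has no reviewer yet."
        )
    deliver(draft.candidate_id, draft.body)
```

Storing the reviewer's name on the draft also gives you something concrete to show when legal asks who checked a fact before it went out.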

Related on this site

- Glossary: Workflow automation, Semantic search, Hallucination

- Blog: Boolean search vs AI sourcing, AI sourcing tools for recruiters

- Guides: Sourcers
