Time to fill

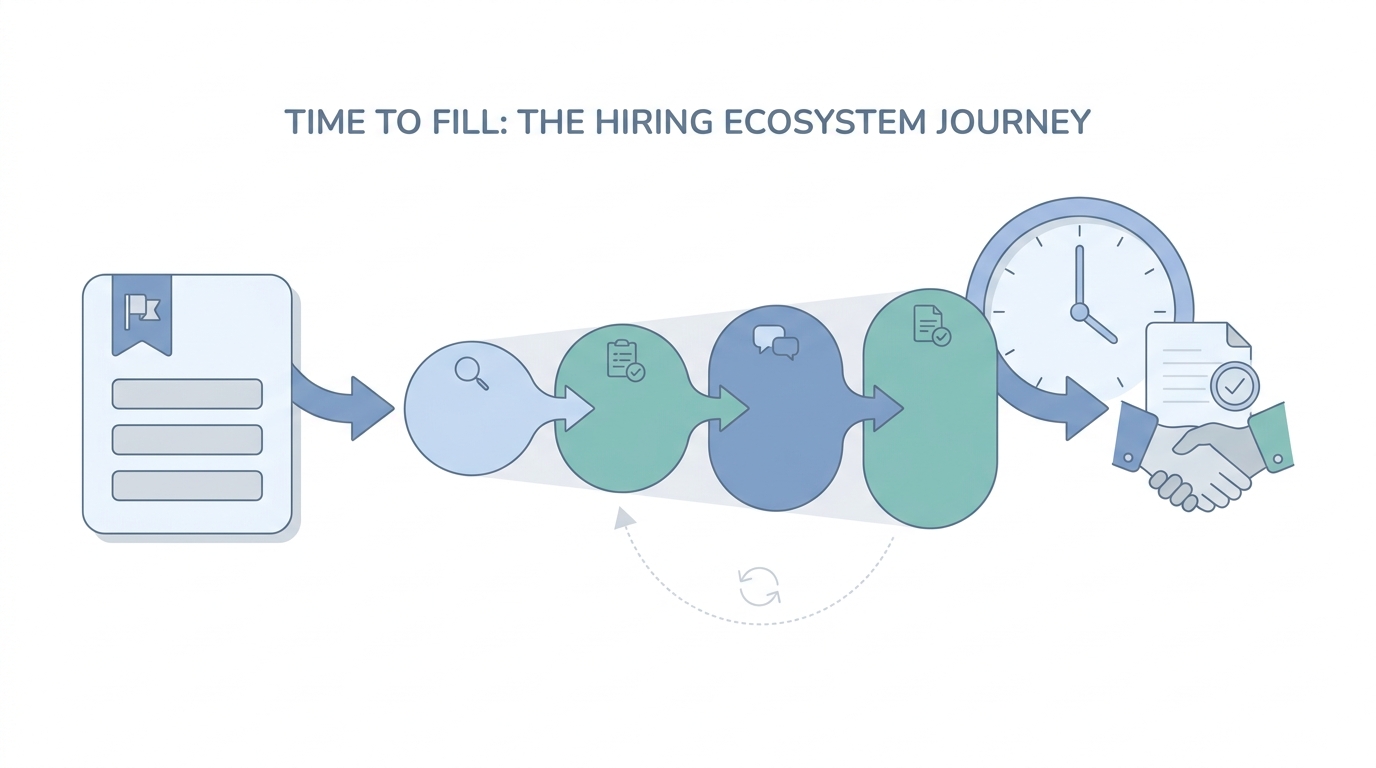

The elapsed calendar time from the moment a requisition is approved until a candidate accepts an offer for that role. It is used to diagnose funnel speed, capacity, and hiring manager responsiveness.

Michal Juhas · Last reviewed May 3, 2026

What is time to fill?

Time to fill counts how long a role stays open, from the moment leadership approves hiring until someone accepts the offer. Teams use it to spot bottlenecks in approvals, interviews, and paperwork, not only sourcing speed.
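As a minimal sketch of that definition, the calculation for one role is a date subtraction. The function name, argument names, and dates below are hypothetical, and the sketch assumes your ATS export gives one "req approved" date and one "offer accepted" date per requisition.

```python
from datetime import date


def time_to_fill_days(req_approved: date, offer_accepted: date) -> int:
    """Calendar days from requisition approval to offer acceptance."""
    if offer_accepted < req_approved:
        # A stop date before the start date usually means a bad export or a reopened req.
        raise ValueError("offer_accepted precedes req_approved; check the export")
    return (offer_accepted - req_approved).days


# Hypothetical requisition: approved 3 March 2025, offer accepted 17 April 2025.
print(time_to_fill_days(date(2025, 3, 3), date(2025, 4, 17)))  # 45
```

This counts calendar days, weekends and holidays included, which matches the "calendar stopwatch" reading of the metric. If your team counts business days instead, that is a different definition and should be written down as such.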

In practice

- TA reviews "median time to fill by department" slides every Monday even when recruiters feel the real story is interview load, not sourcing.

- Hiring managers say "your time to fill is too high" when they mean offers sat in comp approval, not that the first screen was slow.

- Vendors promise "reduce time to fill with AI"; practitioners ask which stage timestamp changed after the pilot.
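One way to answer "which stage changed" is to split a requisition's timeline into the days spent between consecutive stage timestamps. The sketch below is illustrative only: the stage names and dates are invented, and a real ATS will use its own stage labels.

```python
from datetime import date

# Hypothetical stage names and dates for one requisition; real ATS exports will differ.
STAGE_TIMESTAMPS = [
    ("req_approved", date(2025, 3, 3)),
    ("first_screen", date(2025, 3, 12)),
    ("onsite_complete", date(2025, 3, 28)),
    ("comp_approved", date(2025, 4, 14)),
    ("offer_accepted", date(2025, 4, 17)),
]


def stage_durations(timestamps):
    """Return calendar days spent between each consecutive pair of stage timestamps."""
    durations = {}
    for (prev_name, prev_day), (next_name, next_day) in zip(timestamps, timestamps[1:]):
        durations[f"{prev_name} -> {next_name}"] = (next_day - prev_day).days
    return durations


durations = stage_durations(STAGE_TIMESTAMPS)
slowest = max(durations, key=durations.get)

for stage, days in durations.items():
    print(f"{stage}: {days} days")
print("Slowest stage:", slowest)
```

In this made-up example the longest wait is the stretch ending in comp approval, not the first screen, which is the kind of distinction a single headline number cannot show.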

Quick read, then how hiring teams use it

This is for recruiters, sourcers, TA, and HR partners who need the same vocabulary in debriefs, vendor calls, and policy reviews. Skim the first section when you need a fast shared picture. Use the second when you are deciding how it shows up in the ATS, sourcing tools, or candidate communications.

Plain-language summary

- What it means for you: It is the calendar stopwatch from "req approved" to "offer accepted" for one role.

- How you would use it: You compare sites, quarters, and role families using the same start and stop rules.

- How to get started: Export last quarter with stage timestamps. Write your definitions on one page everyone signs.

- When it is a good time: Before QBRs, after process changes, or when a new executive asks why hiring feels slow.
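To make "same start and stop rules" concrete, here is a rough sketch that computes median time to fill per group from an exported list of requisitions. The field names (`department`, `req_approved`, `offer_accepted`) and the rows are assumptions for illustration, not a real ATS schema. The sketch also assumes open requisitions are skipped, which is itself a definition your one-page document should state.

```python
from collections import defaultdict
from datetime import date
from statistics import median

# Hypothetical export rows; offer_accepted is None while the req is still open.
ROWS = [
    {"department": "Engineering", "req_approved": date(2025, 1, 6), "offer_accepted": date(2025, 2, 25)},
    {"department": "Engineering", "req_approved": date(2025, 1, 13), "offer_accepted": date(2025, 2, 12)},
    {"department": "Engineering", "req_approved": date(2025, 1, 20), "offer_accepted": date(2025, 4, 10)},
    {"department": "Sales", "req_approved": date(2025, 1, 8), "offer_accepted": date(2025, 2, 7)},
    {"department": "Sales", "req_approved": date(2025, 1, 15), "offer_accepted": date(2025, 2, 24)},
    {"department": "Sales", "req_approved": date(2025, 2, 3), "offer_accepted": None},
]


def median_time_to_fill(rows, group_key):
    """Median calendar days from approval to acceptance, grouped by group_key."""
    days_by_group = defaultdict(list)
    for row in rows:
        if row["offer_accepted"] is None:
            continue  # Assumption: open reqs are excluded because they have no stop date yet.
        days = (row["offer_accepted"] - row["req_approved"]).days
        days_by_group[row[group_key]].append(days)
    return {group: median(days) for group, days in days_by_group.items()}


print(median_time_to_fill(ROWS, "department"))
```

Passing a different `group_key`, such as a site or role family column, reuses the same start and stop rules for every comparison.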

When you are running live reqs and tools

- What it means for you: Your ATS configuration decides what counts as open, paused, or filled. Garbage in, leadership panic out.

- When it is a good time: When you change approval chains, add AI stages, or merge ATS instances after acquisition.

- How to use it: Pair headline numbers with stage medians and a few anonymized stories so the team trusts the chart.

- How to get started: Align with finance on whether internal transfers stop the clock. Document the answer beside the metric.

- What to watch for: Averages hiding long tails, inconsistent tags, and vendors who redefine metrics each quarter.
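The "averages hiding long tails" warning is easy to see with numbers. The sketch below uses an invented list of time-to-fill values where a single requisition took far longer than the rest, and reports the mean next to the median and an approximate 90th percentile. The data and the choice of percentile are assumptions for illustration.

```python
from statistics import mean, median, quantiles

# Hypothetical time-to-fill values in calendar days; one req dragged on for 150 days.
DAYS_TO_FILL = [28, 30, 31, 33, 35, 36, 38, 40, 41, 150]


def summarize(days):
    """Return mean, median, and an approximate 90th percentile for a list of durations."""
    # quantiles(n=10) returns nine cut points; the last one approximates the 90th percentile.
    p90 = quantiles(days, n=10, method="inclusive")[-1]
    return {"mean": mean(days), "median": median(days), "p90": p90}


stats = summarize(DAYS_TO_FILL)
for name, value in stats.items():
    print(f"{name}: {value:.1f} days")
```

Here the single 150-day requisition pulls the mean to about 46 days while the median stays near 35, so a slide that shows only the average would overstate how long a typical role takes.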

Where we talk about this

AI in recruiting workshops use time to fill when discussing capacity models and realistic AI wins. Bring your stage dictionary to Workshops so the room can sanity-check it.

Around the web (opinions and rabbit holes)

Third-party creators move fast. Treat these as starting points, not endorsements, and double-check anything before you wire up candidate data.

YouTube

- Search "time to fill vs time to hire" for TA ops explainers that walk through ATS timestamps and executive slides.

- Search "recruiting funnel metrics dashboard" for examples of pairing speed with quality indicators.

Reddit

- r/recruiting and r/humanresources regularly debate metric definitions when vendors publish benchmarks; read dates and sample sizes critically.

Quora

- Search "time to fill recruiting definition" for mixed practitioner answers; verify any claim against your own ATS export.

Related on this site

- Glossary: Talent acquisition metrics, Workflow automation, Scorecard

- Blog: How to use AI in recruiting

- Workshops: Workshops