Structured output

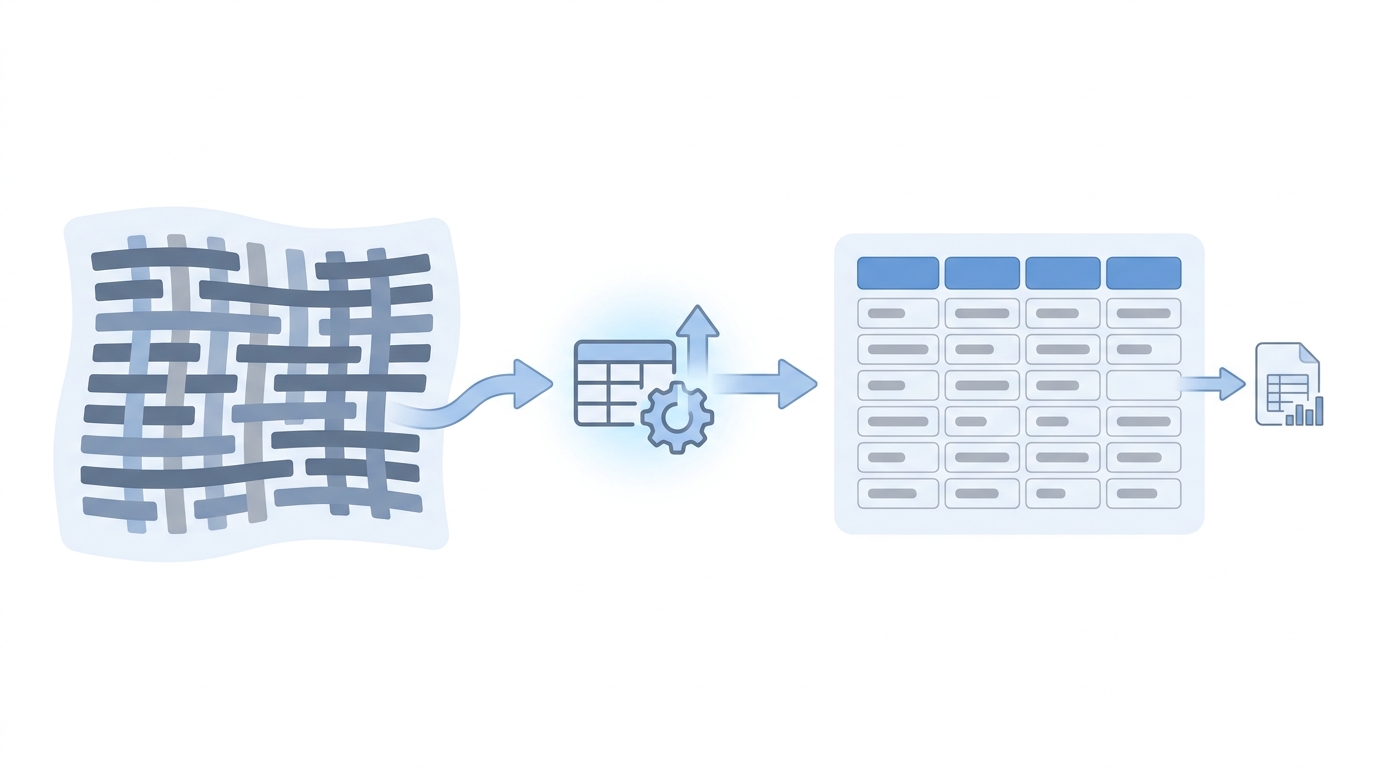

Asking a language model to return machine-parseable shapes (JSON, CSV columns, or rigid tables) instead of prose alone, so you can sort, filter, and automate the next step reliably.

Michal Juhas · Last reviewed May 2, 2026

What is structured output?

Structured output is when you ask the AI to answer in a fixed shape, like JSON fields or clear table columns. That makes it easy to sort, filter, or send the data to a sheet or another tool without parsing a long paragraph.

In practice

- You ask the assistant for candidate notes as a small table with columns for name, fit note, and next step so you can paste into a tracker. Spreadsheet-friendly output is the daily face of structured output, even when nobody says JSON.

- IT or ops tools sometimes show "export as CSV or JSON" for automations; recruiters meet that wording when someone wires a sheet to email alerts.

- A hiring manager might say "three bullets max per answer," which is the same idea in lighter form, with no schema talk at the kickoff.

Quick read, then how hiring teams use it

This is for recruiters, sourcers, TA, and HR partners who need the same vocabulary in debriefs, vendor calls, and policy reviews. Skim the first section when you need a fast shared picture. Use the second when you are deciding how it shows up in the ATS, sourcing tools, or candidate communications.

Plain-language summary

- What it means for you: You ask the computer to answer in a form with boxes (JSON or a table) instead of a rambling paragraph, so the next step does not have to pick the facts out of free text.

- How you would use it: Ask for five bullets, each under twelve words, with a yes or no at the end.

- How to get started: Copy a JSON example from your vendor docs, fill it with fake data, run ten real profiles through it, then fix the schema where it breaks.

- When it is a good time: When spreadsheets, ATS fields, or automations consume model answers.

When you are running live reqs and tools

- What it means for you: Structured output constrains what the model can return, through a JSON schema, function calling, or typed tools, so that downstream code can validate it. It lowers some hallucination modes by shrinking the output space.

- When it is a good time: When prompt chains hand off between systems or when compliance wants machine-checkable fields.

- How to use it: Version schemas, reject malformed passes, and never treat numeric "scores" as science without calibration.

- How to get started: Pair with scorecard anchors and human review for anything candidate-facing.

- What to watch for: Pretty JSON that hides wrong enums, and vendors implying "structured" equals "fair."
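As a rough illustration of "version schemas, reject malformed passes," the sketch below parses one model response and refuses it if the JSON is broken, the schema version is unknown, a field is missing, or an enum value is off the list. The `SCHEMAS` registry, the `screening-v1` label, and the field names are assumptions made for this example, not any vendor's API.

```python
import json

# Hypothetical schema registry: version label -> required fields and allowed enum values.
SCHEMAS = {
    "screening-v1": {
        "required": {"fit_score": int, "confidence": str, "next_question": str},
        "enums": {"confidence": {"low", "medium", "high"}},
    },
}


def validate_pass(raw_text: str) -> dict:
    """Parse one model response; raise ValueError if it does not match its declared schema."""
    try:
        record = json.loads(raw_text)
    except json.JSONDecodeError as exc:
        raise ValueError(f"not valid JSON: {exc}") from exc
    if not isinstance(record, dict):
        raise ValueError("top-level value must be a JSON object")

    version = record.get("schema_version")
    if not isinstance(version, str) or version not in SCHEMAS:
        raise ValueError("unknown or missing schema_version")
    schema = SCHEMAS[version]

    for name, expected_type in schema["required"].items():
        if name not in record:
            raise ValueError(f"missing field: {name}")
        value = record[name]
        # bool is a subclass of int in Python, so exclude it explicitly.
        if isinstance(value, bool) or not isinstance(value, expected_type):
            raise ValueError(f"wrong type for field: {name}")

    # Pretty JSON can still carry a wrong enum, so check values, not just shape.
    for name, allowed in schema["enums"].items():
        if record[name] not in allowed:
            raise ValueError(f"unexpected value for {name}: {record[name]!r}")
    return record
```

A response with `"confidence": "very high"` is well-formed JSON but would be rejected here, which is the "pretty JSON that hides wrong enums" case from the list above.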

Where we talk about this

Sourcing automation workshops like structured passes because webhooks love JSON. AI in recruiting workshops warn that scores still need human meaning. See both at Workshops.

Around the web (opinions and rabbit holes)

Third-party creators move fast. Treat these as starting points, not endorsements, and double-check anything before you wire candidate data.

YouTube

- JSON explained in 10 Minutes (Web Dev Simplified) helps recruiters read the schemas engineers hand you before you sign off on automation.

- Introduction to Large Language Models (Google Cloud Tech) mentions tool and format patterns vendors copy.

- LangChain Explained in 13 Minutes (TechWorld with Nana) shows where structured tool calls sit in chains.

Reddit

- JSON mode vs function calling in r/OpenAI compares API styles you will see in RFPs.

- How do you chain multiple LLM calls? in r/LangChain shows structured handoffs in the wild.

- Few shot examples vs long prompt? in r/ChatGPT relates to keeping schemas short.

Quora

- What is structured output from an LLM? is still forming as a FAQ topic (read critically).

Prose versus structured handoff

| Output | Human read | Automation read |
|---|---|---|
| Long paragraph | Easy | Fragile |
| Table in Markdown | Medium | Medium |
| JSON / CSV | Harder skim | Robust |
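To make the last row of the table concrete, here is a minimal sketch that turns a JSON array into CSV text a tracker or spreadsheet can import. The records and column names (`name`, `fit_note`, `next_step`) are made up for illustration; it uses only Python's standard `json` and `csv` modules.

```python
import csv
import io
import json

# Made-up records standing in for model output; the field names are illustrative.
model_output = """[
  {"name": "Candidate A", "fit_note": "Strong SQL, no people management", "next_step": "Phone screen"},
  {"name": "Candidate B", "fit_note": "Meets must-haves", "next_step": "Send to hiring manager"}
]"""

COLUMNS = ["name", "fit_note", "next_step"]


def json_to_csv(raw_json: str, columns: list[str]) -> str:
    """Convert a JSON array of flat objects into CSV text with a fixed column order."""
    rows = json.loads(raw_json)
    buffer = io.StringIO()
    # extrasaction="raise" makes an unexpected key fail loudly instead of being dropped.
    writer = csv.DictWriter(
        buffer, fieldnames=columns, extrasaction="raise", lineterminator="\n"
    )
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


csv_text = json_to_csv(model_output, COLUMNS)
print(csv_text)
```

Because the input has named fields, the comma inside the first fit note is quoted automatically and does not shift the columns, which is the kind of breakage that makes prose and hand-built tables fragile for automation.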

Related on this site

- Blog: AI candidate screening

- Blog: How to write better AI prompts

- Tools: n8n

- Guides: Recruiters

- Course: Starting with AI: the foundations in recruiting

Frequently asked questions

Why bother with JSON for recruiting if we are not engineers?

What is a minimal JSON shape for screening assist?

A minimal shape has five fields: fit_score (bounded integer), confidence (enum: low, medium, high), must_have_hits, gaps, and next_question. Together they keep reviewers oriented. Keep enums small so humans spot nonsense fast and automation can branch safely. Version the schema in the same place you version prompts. Add source_excerpt pointers when policy requires showing why a label appeared. Document how each field maps to ATS picklist IDs so imports do not create orphan values on Monday morning. Add reviewer_id and model_version fields even if leadership thinks it is overkill; auditors and product teams will thank you after the first dispute.
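One way to pin that shape down is a small typed record, sketched below under stated assumptions. The field names come from the answer above; the 1 to 5 score range, the `screening-v1` version label, and the `PL-00x` picklist IDs are placeholders invented for the example, so swap in whatever your scorecard and ATS actually use.

```python
from dataclasses import dataclass
from enum import Enum


class Confidence(str, Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


# Hypothetical mapping from enum values to ATS picklist IDs; real IDs come from your ATS.
ATS_CONFIDENCE_PICKLIST = {
    Confidence.LOW: "PL-001",
    Confidence.MEDIUM: "PL-002",
    Confidence.HIGH: "PL-003",
}

FIT_SCORE_RANGE = (1, 5)  # assumed bounds; the text only says "bounded integer"


@dataclass
class ScreeningAssist:
    fit_score: int
    confidence: Confidence
    must_have_hits: list[str]
    gaps: list[str]
    next_question: str
    reviewer_id: str
    model_version: str
    schema_version: str = "screening-v1"  # assumed label, versioned alongside prompts

    def __post_init__(self) -> None:
        low, high = FIT_SCORE_RANGE
        if isinstance(self.fit_score, bool) or not isinstance(self.fit_score, int):
            raise ValueError("fit_score must be an integer")
        if not low <= self.fit_score <= high:
            raise ValueError(f"fit_score must be between {low} and {high}")
        # Enum lookup raises ValueError for any label outside low/medium/high.
        self.confidence = Confidence(self.confidence)

    def to_ats_row(self) -> dict:
        """Flatten the record for import, using picklist IDs instead of free text."""
        return {
            "fit_score": self.fit_score,
            "confidence_picklist_id": ATS_CONFIDENCE_PICKLIST[self.confidence],
            "must_have_hits": "; ".join(self.must_have_hits),
            "gaps": "; ".join(self.gaps),
            "next_question": self.next_question,
            "reviewer_id": self.reviewer_id,
            "model_version": self.model_version,
            "schema_version": self.schema_version,
        }
```

Building the record from a parsed response, for example `ScreeningAssist(fit_score=4, confidence="high", ...)`, fails immediately on an out-of-range score or an unknown confidence label, so orphan picklist values never reach the import.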