AI-based resume screening

A category of hiring software that applies machine learning or large language models to score and rank incoming CVs against job criteria, going beyond keyword matching to evaluate meaning and context, and returning a ranked shortlist or tier flags rather than a raw pile of documents.

Michal Juhas · Last reviewed May 9, 2026

What is AI-based resume screening?

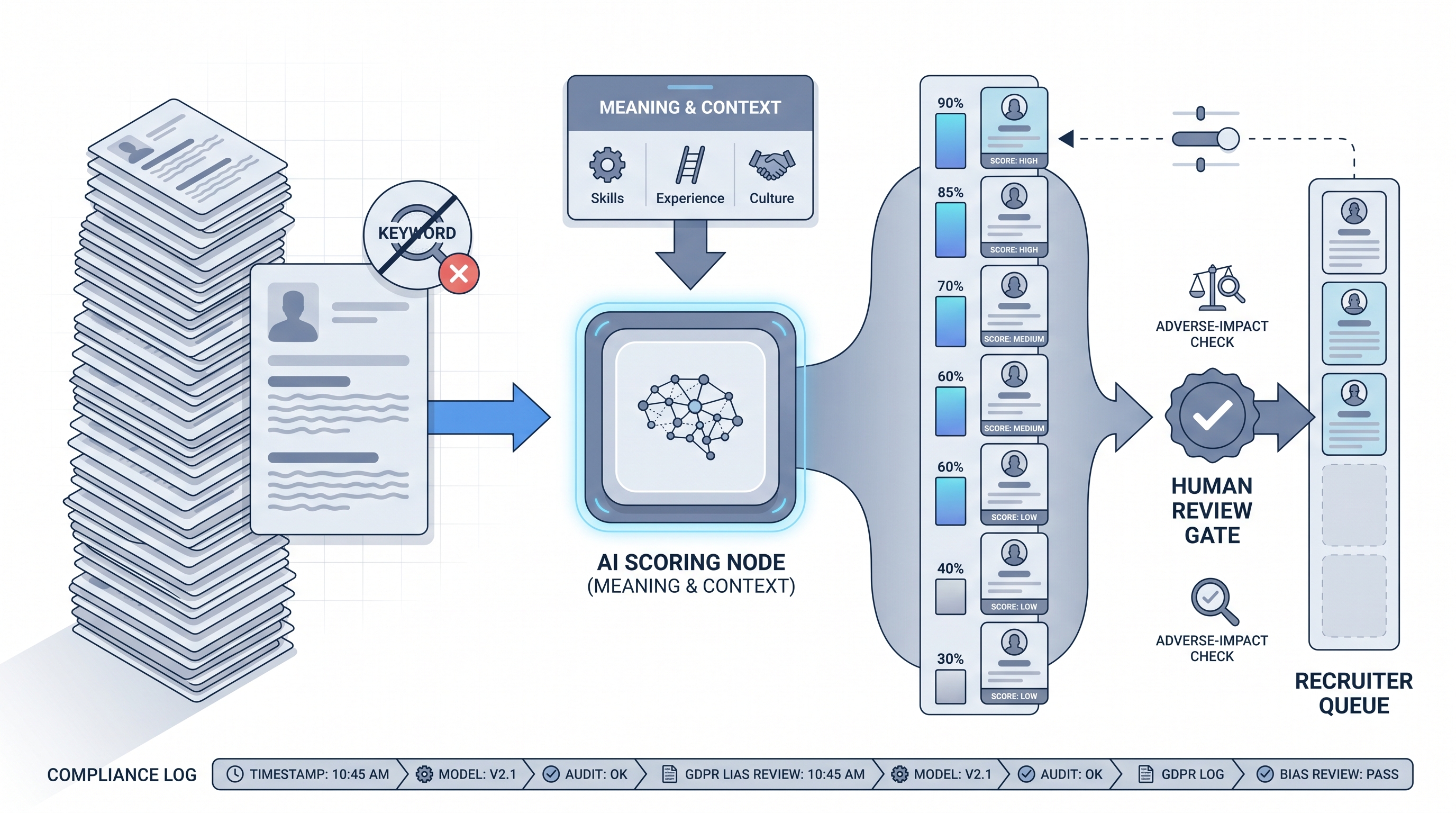

AI-based resume screening is a category of hiring software that evaluates CVs on meaning and context rather than keyword presence. The tool scores each application against the job criteria and returns a ranked shortlist or tier labels for the recruiter to review. It replaces the first manual sort pass, not the hiring decision.

In practice

- A TA manager at a 500-person company switches from keyword filtering to an AI-based screening tool and notices it surfaces candidates with non-traditional backgrounds who perform as well as the usual profile. The model found fit signals the keyword filter was missing entirely.

- A recruiter asks why a strong candidate landed in tier two. The vendor cannot explain which criteria drove the score. That gap is the difference between a tool you can defend to legal and one you cannot.

- In a team debrief, "the AI screened them out" starts a conversation about whether the criteria given to the model actually matched what the hiring manager said mattered. Garbage in, garbage out still applies.

Quick read, then how hiring teams use it

This is for recruiters, sourcers, TA, and HR partners who need the same vocabulary in debriefs, vendor calls, and policy reviews. Skim the first section when you need a fast shared picture. Use the second when you are deciding how AI-based screening fits your ATS, your legal exposure, and your review workflow.

Plain-language summary

- What it means for you: Instead of reading every CV yourself or relying on keyword rules, software scores each application for meaning and context against your criteria and hands you a ranked shortlist. You review the ranking, not the pile.

- How you would use it: You define the criteria (must-have skills, minimum tenure, role type), the model scores each CV, and you adjust the threshold if it is too tight or too loose after the first batch.

- How to get started: Run the model on CVs where you already know the outcome. Compare its ranking to your past decisions. Fix the gap before it touches live candidates.

- When it is a good time: After you have at least thirty applications per cycle, criteria stable enough to survive two weeks without changes, and a completed legal review of the specific tool.
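The back-test in the "How to get started" step (score CVs whose outcome you already know, compare with past decisions, then loosen or tighten the threshold) can be sketched roughly as below. This is a minimal illustration, not any vendor's API: the `ScoredCV` record, the 0-to-1 score scale, and the sample data are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class ScoredCV:
    candidate_id: str
    model_score: float       # assumed scale: 0.0 (poor fit) to 1.0 (strong fit)
    advanced_by_human: bool  # what the recruiter decided for this CV in the past


def backtest(cvs: list[ScoredCV], threshold: float) -> dict[str, object]:
    """Compare the model's pass/fail at a threshold with past human decisions."""
    # People the recruiter advanced but the model would have screened out.
    missed = [c.candidate_id for c in cvs
              if c.advanced_by_human and c.model_score < threshold]
    # People the recruiter rejected but the model would have passed.
    extra = [c.candidate_id for c in cvs
             if not c.advanced_by_human and c.model_score >= threshold]
    agreed = len(cvs) - len(missed) - len(extra)
    return {
        "agreement_rate": agreed / len(cvs) if cvs else 0.0,
        "missed": missed,
        "extra": extra,
    }


# Hypothetical historical batch with known outcomes.
history = [
    ScoredCV("c1", 0.91, True),
    ScoredCV("c2", 0.62, True),
    ScoredCV("c3", 0.70, False),
    ScoredCV("c4", 0.30, False),
]

# Try a tight and a loose threshold and see which disagreements each produces.
for threshold in (0.8, 0.6):
    print(threshold, backtest(history, threshold))
```

The `missed` list is usually the one to read first: those are candidates a human advanced that the model would have hidden, which is the gap to fix before the tool touches live candidates.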

When you are running live reqs and tools

- What it means for you: AI-based screening changes candidate state. Scores and tiers follow the record into your ATS and influence every downstream decision. That is different from a recruiter making a personal note in a spreadsheet.

- How to use it: Pair screening output with a human-in-the-loop gate. The model ranks; a recruiter reviews the top cut before any candidate advances or receives a rejection. Log the model version and criteria used for each run so you can replay decisions.

- How to get started: Run an adverse impact check on your first live batch. Compare pass rates across gender, age, and ethnicity proxies. If any group passes at less than four-fifths of the rate of the highest-passing group (an impact ratio below 0.8), pause and investigate before continuing.

- What to watch for: Silent vendor model updates that change scoring without notice, proxy variables such as university name or location that correlate with protected groups, and roles where criteria shift so often the model is always one JD version behind.
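The four-fifths comparison from the adverse impact step is simple arithmetic, and a small sketch can make it concrete. The function name, the group labels, and the sample counts below are hypothetical; a real check would read group membership and screening outcomes from your own ATS export.

```python
from collections import Counter


def adverse_impact(records: list[tuple[str, bool]],
                   floor: float = 0.8) -> dict[str, dict[str, object]]:
    """Flag groups whose pass rate is below `floor` times the best group's rate.

    `records` holds (group_label, passed_screen) pairs for one batch.
    """
    totals = Counter(group for group, _ in records)
    passes = Counter(group for group, passed in records if passed)
    rates = {group: passes[group] / totals[group] for group in totals}
    best = max(rates.values(), default=0.0)

    report = {}
    for group, rate in rates.items():
        # If nobody passed at all there is no reference rate to compare with,
        # so the ratio is treated as 1.0 (an assumption of this sketch).
        ratio = rate / best if best > 0 else 1.0
        report[group] = {
            "pass_rate": rate,
            "impact_ratio": ratio,
            "flagged": ratio < floor,  # strictly below four-fifths
        }
    return report


# Hypothetical batch: group_a passes 10 of 20, group_b passes 7 of 20.
batch = ([("group_a", True)] * 10 + [("group_a", False)] * 10
         + [("group_b", True)] * 7 + [("group_b", False)] * 13)

report = adverse_impact(batch)
for group, row in report.items():
    print(group, row)
```

In this made-up batch, group_b passes at 35% against 50% for group_a, an impact ratio of about 0.7, so it falls under the 0.8 floor and would trigger the pause-and-investigate step.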

Where we talk about this

In AI with Michal live sessions, AI-based resume screening comes up in both the AI in recruiting and the sourcing automation tracks, specifically around how to pass criteria to a model, how to audit the first batch, and what legal language your policy team will ask about. If you want the full room conversation with real ATS names and sample criteria, start at Workshops.

Around the web (opinions and rabbit holes)

Third-party creators move fast. Treat these as starting points, not endorsements, and double-check anything before you wire candidate data.

YouTube

- Search "AI resume screening ATS demo" for current practitioner walkthroughs that show real integrations and the edge cases vendor demos usually skip.

- Search "AI resume screening bias audit" for sessions covering group-level pass-rate testing and what teams found when they ran their first retrospective.

Reddit

- r/recruiting threads on AI screening surface real recruiter frustrations: missed candidates, synonym problems, and vendor claims that did not survive production.

- Search "AI resume screening" in r/humanresources for HR leader perspectives on policy and legal exposure before rollout.

Quora

- "How do companies use AI to screen resumes?" collects practitioner answers ranging from tactical to skeptical (quality varies, so read critically).

AI-based versus keyword-based screening

| Dimension | Keyword or rule-based | AI-based |
|---|---|---|
| Matching method | Exact phrase or field presence | Meaning and semantic similarity |
| Handles synonyms | Rarely | Usually |
| Explainability | One-line rule | Requires logged scores and criteria |
| Bias exposure | Explicit in rules | Encoded in model training |
| Setup effort | Minutes | Hours to days including calibration |

Related on this site

- Glossary: Artificial intelligence resume screening, Resume parsing, Adverse impact, AI bias audit, Human-in-the-loop (HITL), Scorecard, ATS API integration

- Blog: Boolean search vs AI sourcing

- Guides: Sourcers

- Live cohort: Workshops

- Course: Starting with AI: the foundations in recruiting
