Human-in-the-loop (HITL)

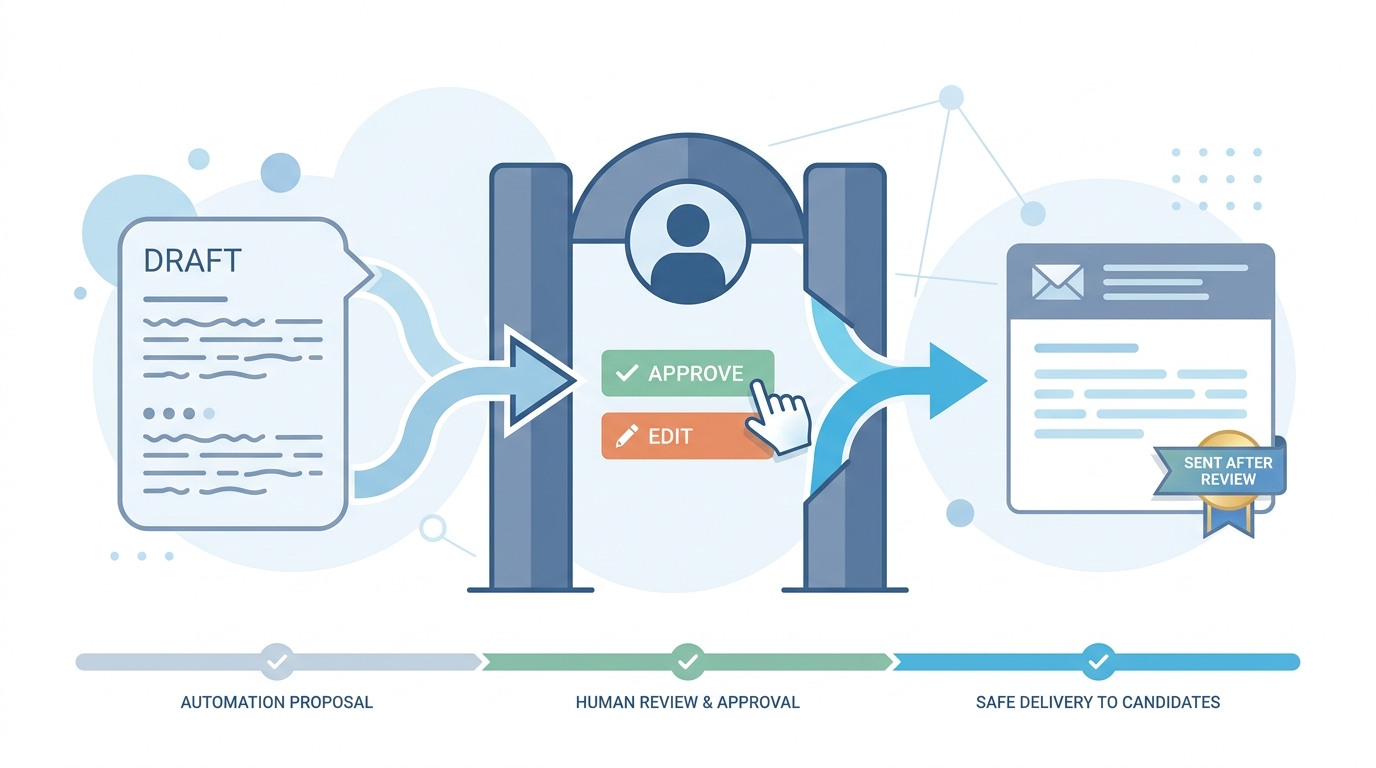

A designed step where a named person approves, corrects, or rejects model or automation output before it changes candidate-facing state, money, or compliance clocks, with logging you could show in an audit.

Michal Juhas · Last reviewed May 2, 2026

What is human-in-the-loop (HITL)?

Human-in-the-loop means a person is required in the path before software or models finalize something that would change a candidate experience, legal record, or money. The loop is intentional: models propose, humans decide, systems log enough to prove the step happened.
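To make the propose, decide, log loop concrete, here is a minimal Python sketch. The `Proposal`, `Decision`, `AuditEntry`, and `decide` names, the in-memory `audit_log` list, and the field layout are hypothetical illustrations, not any ATS vendor's API. A real system would write entries to durable storage.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from enum import Enum
from typing import Optional


class Decision(Enum):
    APPROVED = "approved"
    CORRECTED = "corrected"
    REJECTED = "rejected"


@dataclass
class Proposal:
    """A model draft waiting for a human; nothing has been sent or written yet."""
    proposal_id: str
    action: str          # hypothetical action label, e.g. "send_email"
    payload: str         # draft text or target stage proposed by the model
    model_version: str


@dataclass
class AuditEntry:
    proposal_id: str
    model_version: str
    reviewer: str
    decision: Decision
    final_payload: Optional[str]
    decided_at: str      # UTC timestamp in ISO 8601


audit_log: list[AuditEntry] = []  # in-memory stand-in for a durable audit store


def decide(proposal: Proposal, reviewer: str, decision: Decision,
           corrected_payload: Optional[str] = None) -> Optional[str]:
    """Record a named person's decision; return what may be executed, or None."""
    if not reviewer:
        raise ValueError("a named reviewer is required")
    if decision is Decision.CORRECTED and corrected_payload is None:
        raise ValueError("a correction needs the corrected text")
    if decision is Decision.APPROVED:
        final = proposal.payload
    elif decision is Decision.CORRECTED:
        final = corrected_payload
    else:
        final = None  # rejected: nothing moves forward
    audit_log.append(AuditEntry(
        proposal_id=proposal.proposal_id,
        model_version=proposal.model_version,
        reviewer=reviewer,
        decision=decision,
        final_payload=final,
        decided_at=datetime.now(timezone.utc).isoformat(),
    ))
    return final
```

In use, a recruiter's click would call something like `decide(proposal, reviewer="jane.recruiter", decision=Decision.CORRECTED, corrected_payload=edited_text)`, and only a non-`None` return value would be handed to the sending step.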

In practice

- A send button that stays disabled until a recruiter checks a draft is HITL at the smallest scale; a webhook that writes ATS stages only after a sheet row is marked reviewed is the same idea in automation.

- TA ops might say "we are HITL on outreach" when marketing wants full automation but legal insists on a named reviewer before bulk mail.

- Interview debriefs use the phrase when discussing who can override model scores on a scorecard and whether that override is tracked.
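The webhook pattern from the first bullet can be sketched as a guard in front of the ATS write. This assumes a spreadsheet export arrives as a list of dicts with hypothetical column names, and `write_stage` is a stand-in for whatever ATS client call your stack actually uses.

```python
from typing import Callable


def sync_reviewed_rows(rows: list[dict],
                       write_stage: Callable[[str, str], None]) -> list[str]:
    """Push stage changes to the ATS only for rows a named person marked reviewed.

    Column names ("candidate_id", "proposed_stage", "reviewed", "reviewer")
    are hypothetical. Returns the candidate IDs that were held back.
    """
    held = []
    for row in rows:
        # Both a reviewed flag and a reviewer name are required before writing.
        if row.get("reviewed") is True and row.get("reviewer"):
            write_stage(row["candidate_id"], row["proposed_stage"])
        else:
            held.append(row["candidate_id"])
    return held
```

Rows missing either the flag or the name are returned rather than silently dropped, so they can be surfaced again in the review queue.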

Quick read, then how hiring teams use it

This is for recruiters, sourcers, TA, and HR partners who need the same vocabulary in debriefs, vendor calls, and policy reviews. Skim the first section when you need a fast shared picture. Use the second when you are deciding where review belongs in the ATS, sourcing stack, or candidate communications.

Plain-language summary

- What it means for you: A real person must say yes or fix the draft before the computer sends the email, moves the stage, or pays the bonus. The system remembers who clicked.

- How you would use it: You pair AI speed with a clear review inbox or approval queue, so nobody sends outreach they have not actually read.

- How to get started: List the three worst things that could go wrong if a robot acted alone this week. Put a human stop sign immediately before each of those actions.

- When it is a good time: Always for candidate-facing sends and compliance-sensitive steps; lighter for private scratch notes once trust is high.
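One way to put a "human stop sign" in front of a risky action in code is a wrapper that refuses to run without a named approver and remembers who clicked. This is a minimal sketch: `human_stop_sign`, `ApprovalRequired`, the in-memory `approvals` list, and `send_offer_bonus` are hypothetical names chosen for illustration.

```python
import functools
from typing import Callable, Optional


class ApprovalRequired(Exception):
    """Raised when a gated action is called without a named approver."""


approvals: list[tuple[str, str]] = []  # (action name, approver) records


def human_stop_sign(action: Callable) -> Callable:
    """Wrap a risky action so it only runs when a named person approves it."""
    @functools.wraps(action)
    def gated(*args, approved_by: Optional[str] = None, **kwargs):
        if not approved_by:
            raise ApprovalRequired(f"{action.__name__} needs a named approver")
        # Record who clicked before the action runs.
        approvals.append((action.__name__, approved_by))
        return action(*args, **kwargs)
    return gated


@human_stop_sign
def send_offer_bonus(candidate_id: str, amount: int) -> str:
    # Hypothetical action; a real one would call your payroll or ATS system.
    return f"bonus {amount} queued for {candidate_id}"
```

Calling `send_offer_bonus("c9", 500)` raises `ApprovalRequired`, while `send_offer_bonus("c9", 500, approved_by="lee")` runs and records `("send_offer_bonus", "lee")`.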

When you are running live reqs and tools

- What it means for you: HITL is operations, not a PDF promise. You need owners, backups when people are on leave, and metrics on queue time so volume spikes do not erase review.

- When it is a good time: Before you scale workflow automation, after you see repeated hallucination patterns, and whenever regulators or customers ask how humans stay in control.

- How to use it: Combine model drafts with structured output checks, then require human sign-off on uncertain fields. Log model version and reviewer ID the way you log offer approvals.

- How to get started: Pilot one high-blast-radius path (outbound or stage change), measure edit rates, then widen only when error and skip rates look boring for a month.

- What to watch for: Rubber-stamping under SLA pressure, invisible bypass flags in admin panels, and policies that claim HITL while runtime defaults auto-send.
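The "structured output checks, then human sign-off on uncertain fields" step might look like this. It assumes the model was prompted to return JSON with a value and a self-reported confidence per field; the field names and the 0.8 `CONFIDENCE_FLOOR` are assumptions to replace with your own schema and thresholds. Treat a model's self-reported confidence as a routing hint, not a guarantee.

```python
import json
from typing import Any

# Hypothetical schema and threshold; replace with your own.
REQUIRED_FIELDS = {"candidate_name", "role", "proposed_stage"}
CONFIDENCE_FLOOR = 0.8


def _is_confident(entry: Any) -> bool:
    """True only for a {"value": ..., "confidence": number} entry at or above the floor."""
    if not isinstance(entry, dict):
        return False
    conf = entry.get("confidence")
    return isinstance(conf, (int, float)) and conf >= CONFIDENCE_FLOOR


def triage_model_output(raw: str, model_version: str) -> dict[str, Any]:
    """Check a model's JSON draft and list the fields that need human sign-off."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        data = None
    if not isinstance(data, dict):
        # Unparseable or wrongly shaped output never auto-passes.
        return {"model_version": model_version, "valid": False,
                "needs_review": sorted(REQUIRED_FIELDS)}
    missing = REQUIRED_FIELDS - set(data)
    uncertain = {name for name in REQUIRED_FIELDS - missing
                 if not _is_confident(data[name])}
    return {
        "model_version": model_version,  # kept so it is logged with the reviewer
        "valid": not missing,
        "needs_review": sorted(missing | uncertain),
    }


def sign_off(triage: dict[str, Any], reviewer_id: str) -> dict[str, Any]:
    """Attach the reviewer ID, the way an offer approval records its approver."""
    if triage["needs_review"] and not reviewer_id:
        raise ValueError("fields flagged for review need a named reviewer")
    return {**triage, "reviewer_id": reviewer_id or None}
```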
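For the queue-time, edit-rate, and skip-rate signals in this list, a small summary over review records is often enough to start. The `ReviewRecord` shape, the decision labels, and the 5-second "fast approval" threshold (a rough rubber-stamping signal) are assumptions for illustration, not an established standard.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class ReviewRecord:
    queued_at: float   # seconds since epoch when the draft entered the queue
    opened_at: float   # when the reviewer opened it
    decided_at: float  # when it was approved, corrected, rejected, or skipped
    decision: str      # "approved" | "corrected" | "rejected" | "skipped"


def review_health(records: list[ReviewRecord],
                  fast_approval_s: float = 5.0) -> dict[str, float]:
    """Summarize edit rate, skip rate, median queue time, and very fast approvals."""
    if not records:
        return {}
    total = len(records)
    reviewed = [r for r in records if r.decision != "skipped"]
    approvals = [r for r in records if r.decision == "approved"]
    corrected = sum(1 for r in reviewed if r.decision == "corrected")
    # Approvals decided within a few seconds of opening may be rubber stamps.
    fast = sum(1 for r in approvals if r.decided_at - r.opened_at < fast_approval_s)
    return {
        "edit_rate": corrected / len(reviewed) if reviewed else 0.0,
        "skip_rate": (total - len(reviewed)) / total,
        "median_queue_minutes": median(r.decided_at - r.queued_at for r in records) / 60,
        "fast_approval_share": fast / len(approvals) if approvals else 0.0,
    }
```

Watching these week over week is one way to tell whether error and skip rates have actually gone quiet before widening a pilot.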

Where we talk about this

On AI with Michal live sessions we rehearse verify-before-send, credential hygiene, and what happens when webhooks spike volume. HITL shows up whenever we wire prompt chains into real stacks. Start at Workshops if you want the room conversation, not only this page.

Around the web (opinions and rabbit holes)

Third-party creators move fast. Treat these as starting points, not endorsements, and double-check anything before you wire candidate data.

YouTube

- What is Human-in-the-Loop? (Google Cloud Tech) ties HITL to production review, confidence scores, and escalation paths.

- What is Human in the Loop in Machine Learning? (Levity) is a compact definition video you can share with hiring managers.

- Introduction to AI and Machine Learning on Google Cloud (Google Cloud Tech) frames where supervised learning and review layers sit in a broader AI stack.

Reddit

- How is AI changing recruiting? in r/recruiting includes trust and verification themes alongside stories from the hiring floor.

- Automation workflow with Humans in loop - Need suggestions in r/n8n is a practical thread on approval UI patterns next to routers.

- Human-in-the-Loop for Tool Calls is out! (2.6.0) in r/n8n tracks native HITL features in newer releases.

Quora

- What is human-in-the-loop in machine learning? mixes definitions and opinions (read critically).

HITL placement by blast radius

| Change | Typical blast radius | HITL posture |
|---|---|---|
| Private research note | Low | Sample review |
| Sheet row before ATS write | Medium | Lock row, then sync |
| Bulk candidate email | High | Hard gate, log reviewer |
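The table can also live in code so automations are checked against it, which helps catch policies that claim HITL while runtime defaults auto-send. In this sketch the change-type keys and the `auto_execute` and `log_reviewer` settings are hypothetical; map them to however your workflow tool actually stores its configuration.

```python
from enum import Enum


class Posture(Enum):
    SAMPLE_REVIEW = "sample review"
    LOCK_THEN_SYNC = "lock row, then sync"
    HARD_GATE = "hard gate, log reviewer"


# One entry per table row: change type -> (blast radius, HITL posture).
POSTURE_BY_CHANGE = {
    "private_research_note": ("low", Posture.SAMPLE_REVIEW),
    "sheet_row_before_ats_write": ("medium", Posture.LOCK_THEN_SYNC),
    "bulk_candidate_email": ("high", Posture.HARD_GATE),
}


def config_violations(runtime_config: dict[str, dict]) -> list[str]:
    """List automations whose runtime settings are looser than their posture."""
    problems = []
    for change, settings in runtime_config.items():
        if change not in POSTURE_BY_CHANGE:
            problems.append(f"{change}: no HITL posture defined")
            continue
        _radius, posture = POSTURE_BY_CHANGE[change]
        # Anything stricter than sample review must not execute without a person.
        if posture is not Posture.SAMPLE_REVIEW and settings.get("auto_execute", False):
            problems.append(f"{change}: auto-execute is on but posture is '{posture.value}'")
        if posture is Posture.HARD_GATE and not settings.get("log_reviewer", False):
            problems.append(f"{change}: reviewer is not logged")
    return problems
```

Running a check like this on every config change turns the table from a policy statement into something the runtime has to agree with.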

Related on this site

- Glossary: Workflow automation, Hallucination, Structured output, Prompt chain

- Blog: Boolean search vs AI sourcing

- Live cohort: Workshops

- Course: Starting with AI: the foundations in recruiting

- Membership: Become a member