Adverse impact

A statistically significant gap in selection rates between a protected group and others that results from a hiring procedure, test, or AI tool, even when no bias was intended.

Michal Juhas · Last reviewed May 3, 2026

What is adverse impact?

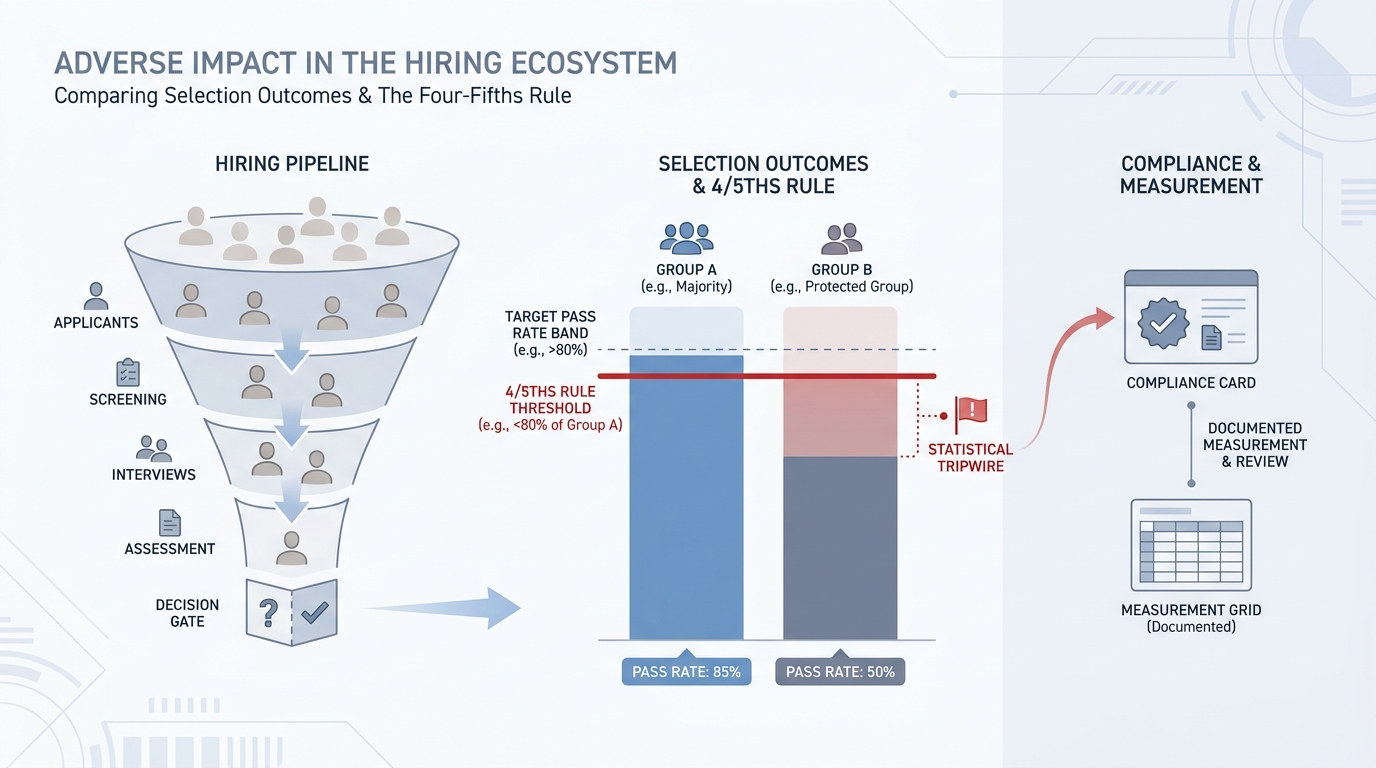

Adverse impact happens when a selection procedure produces outcomes that are statistically worse for a protected group than for others, even when no discriminatory intent exists. In AI-assisted hiring that means a resume ranker, assessment platform, or screening algorithm that produces different pass rates across protected categories can create legal and reputational risk regardless of what the vendor promised in the demo.

In practice

- After a TA ops team ran a 90-day analysis on their AI screen, they found female applicants passed at 68% versus 85% for male applicants. That is a ratio of 0.80, right at the 4/5ths threshold, and nothing in the algorithm was labeled "gender."

- A hiring manager hears "the AI flagged this resume" and approves a rejection without reading it. When fifty such decisions stack up across a quarter and fall disproportionately on one protected group, the pattern is adverse impact even if no individual decision was intentional.

- Legal or DEIB partners may ask "did you run a disparate impact analysis on that tool before we signed the vendor contract?" and the answer reveals whether the team has operational controls or only policy slides.

Quick read, then how hiring teams use it

This is for recruiters, sourcers, TA, and HR partners who need the same vocabulary in debriefs, vendor calls, and policy reviews. Skim the first section when you need a fast shared picture. Use the second when you are deciding how it shows up in the ATS, sourcing tools, or candidate communications.

Plain-language summary

- What it means for you: If your screen passes one group at 85% and another at 65%, the ratio is about 0.76, below the 4/5ths threshold. That gap may be unlawful even if you never thought about group membership. The rule measures outcomes, not intentions.

- How you would use it: Count who passes each stage, split by protected group, then divide each group's pass rate by the highest group's pass rate. Check that every ratio stays at or above 0.80. Flag any shortfall before anyone else does.

- How to get started: Pull your last 90 days of funnel data. Ask IT or the vendor for a group-rate breakdown. If they cannot produce one, that is already a risk signal worth documenting.

- When it is a good time: Before you go live with any new AI screening tool, then quarterly after that, and any time rejection rates spike on a particular req type.
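
The counting step in the summary above can be sketched in Python. This is a minimal illustration, not a legal test: the function name `impact_ratios`, the group labels, and the counts are hypothetical, and it assumes the common convention of comparing each group's selection rate to the highest group's rate.

```python
from typing import Dict, Tuple

FOUR_FIFTHS = 0.80  # rule-of-thumb threshold from the 4/5ths rule


def impact_ratios(counts: Dict[str, Tuple[int, int]]) -> Dict[str, float]:
    """Return each group's selection rate divided by the highest group's rate.

    counts maps a group label to (passed, applied). Labels and numbers
    here are hypothetical; use your own funnel export.
    """
    rates = {}
    for group, (passed, applied) in counts.items():
        if applied <= 0:
            raise ValueError(f"no applicants recorded for group {group!r}")
        rates[group] = passed / applied
    top_rate = max(rates.values())
    if top_rate == 0:
        raise ValueError("no group passed anyone; ratios are undefined")
    return {group: rate / top_rate for group, rate in rates.items()}


# Hypothetical stage counts mirroring an 85% versus 65% pass-rate gap.
stage_counts = {"group_a": (170, 200), "group_b": (130, 200)}
ratios = impact_ratios(stage_counts)
flagged = [group for group, ratio in ratios.items() if ratio < FOUR_FIFTHS]
print(ratios)
print("below 4/5ths:", flagged)
```

Run it once per funnel stage and keep the raw counts next to the ratios, since a ratio on its own says nothing about how many candidates it rests on.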

When you are running live reqs and tools

- What it means for you: Every AI ranker, filter, or score that moves candidates in or out of the funnel is a selection procedure under Title VII as enforced by the EEOC. Group-rate monitoring is not optional for tools that touch protected class, whether directly or through a proxy.

- When it is a good time: Before vendor contract signature, before each new model version reaches production, and after any spike in rejection rate for a specific req type or demographic segment.

- How to use it: Log candidate IDs, stage decisions, and model version together. Run 4/5ths ratios by protected group quarterly at minimum. Keep results with owner names and audit dates. Cross-link to structured output logging patterns if you are automating the export.

- How to get started: Ask your current AI vendors for their most recent bias audit results and which protected classes they tested. If no audit exists, that is your first compliance conversation and a useful vendor differentiator.

- What to watch for: Vendors who use proxy features (zip code, university name, employment gap) that correlate with protected class without disclosing it. Low-sample-size results that look clean but are statistically meaningless. Silent model updates that change scoring without notifying your compliance team.
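
The logging and monitoring advice above might look like the following in Python. Treat it as a sketch under stated assumptions: the `Decision` record, its field names, the `make` helper, and the `MIN_GROUP_SIZE` cutoff of 30 are all hypothetical, not a regulatory standard or a vendor schema. It groups logged decisions by model version so a silent update shows up as its own row, and it reports "low sample" instead of a clean-looking ratio when a group is too small.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, List, Optional

FOUR_FIFTHS = 0.80
MIN_GROUP_SIZE = 30  # assumed cutoff for illustration; agree on yours with legal


@dataclass(frozen=True)
class Decision:
    candidate_id: str
    stage: str
    passed: bool
    model_version: str
    group: str  # protected-group label, stored according to your own policy


def ratios_by_version(log: List[Decision]) -> Dict[str, Dict[str, Optional[float]]]:
    """Impact ratio per group for each model version.

    None means the group had fewer than MIN_GROUP_SIZE decisions,
    so no ratio is reported for it.
    """
    # counts[version][group] = [passed, total]
    counts = defaultdict(lambda: defaultdict(lambda: [0, 0]))
    for d in log:
        cell = counts[d.model_version][d.group]
        cell[0] += int(d.passed)
        cell[1] += 1

    report = {}
    for version, groups in counts.items():
        rates = {g: p / n for g, (p, n) in groups.items() if n >= MIN_GROUP_SIZE}
        top = max(rates.values(), default=0.0)
        report[version] = {
            g: (rates[g] / top if g in rates and top > 0 else None)
            for g in groups
        }
    return report


def make(version: str, group: str, passed: int, total: int) -> List[Decision]:
    """Build hypothetical log rows: the first `passed` of `total` pass."""
    return [
        Decision(f"{version}-{group}-{i}", "ai_screen", i < passed, version, group)
        for i in range(total)
    ]


# Hypothetical data: v2 changes scoring and group_b's pass rate drops.
log = (
    make("v1", "group_a", 34, 40) + make("v1", "group_b", 32, 40)
    + make("v2", "group_a", 34, 40) + make("v2", "group_b", 24, 40)
    + make("v2", "group_c", 5, 5)
)
report = ratios_by_version(log)
for version, groups in report.items():
    for group, ratio in groups.items():
        status = "low sample" if ratio is None else ("FLAG" if ratio < FOUR_FIFTHS else "ok")
        print(version, group, ratio, status)
```

Keying the report on model version is a design choice for this sketch: it keeps a quarter of blended data from hiding a change that only began with the newer model.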

Where we talk about this

On AI with Michal live sessions, adverse impact comes up in the AI in recruiting track because it is one of the first objections hiring managers and legal partners raise when evaluating AI tools. We walk through the 4/5ths calculation, a vendor audit question checklist, and the memo format that gets legal sign-off. If you want the full room conversation with real funnel data, start at Workshops and bring your vendor contracts.

Around the web (opinions and rabbit holes)

Third-party creators move fast. Treat these as starting points, not endorsements, and double-check anything before you wire candidate data.

YouTube

- Search "adverse impact analysis 4/5ths rule" on YouTube for spreadsheet walkthroughs from HR practitioners and employment law educators. Several law firm channels (Seyfarth, Ogletree) post accessible explainers when new state rules pass.

- The EEOC official YouTube channel publishes public-sector guidance useful for building shared vocabulary before vendor calls.

- Search "NYC Local Law 144 AI hiring" for compliance-focused updates from employment lawyers walking through the audit requirements and candidate notification rules.

Reddit

- r/humanresources threads on "adverse impact" and "AI screening" mix practitioner experience with compliance questions; the comment sections surface the real objections legal teams raise.

- r/recruiting has recurring threads on AI resume screeners where bias risk comes up organically alongside vendor comparisons.

- r/legaladvice covers candidate-side adverse impact questions that train recruiter empathy for what applicants actually experience.

Quora

- Searching "adverse impact hiring AI" on Quora surfaces a mix of HR practitioners, employment lawyers, and researchers explaining the gap between statistical and legal significance (read critically; quality varies).

Adverse impact versus adverse treatment

| | Adverse impact | Adverse treatment |
|---|---|---|
| Also called | Disparate impact | Disparate treatment |
| Intent required | No | Usually yes |
| Legal trigger | Statistical outcome gap (below 4/5ths) | Evidence of intentional bias |
| AI example | Resume ranker passes one group at a lower rate | Recruiter explicitly filters by protected trait |
| Primary fix | Audit and validate or replace the tool | Policy, discipline, training |

Related on this site

- Glossary: Human-in-the-loop (HITL), Hallucination, Structured output, Scorecard, AI adoption ladder

- Blog: AI candidate screening, How to use AI in recruiting

- Guides: Hiring managers

- Live cohort: Workshops
