AI-based recruitment tools

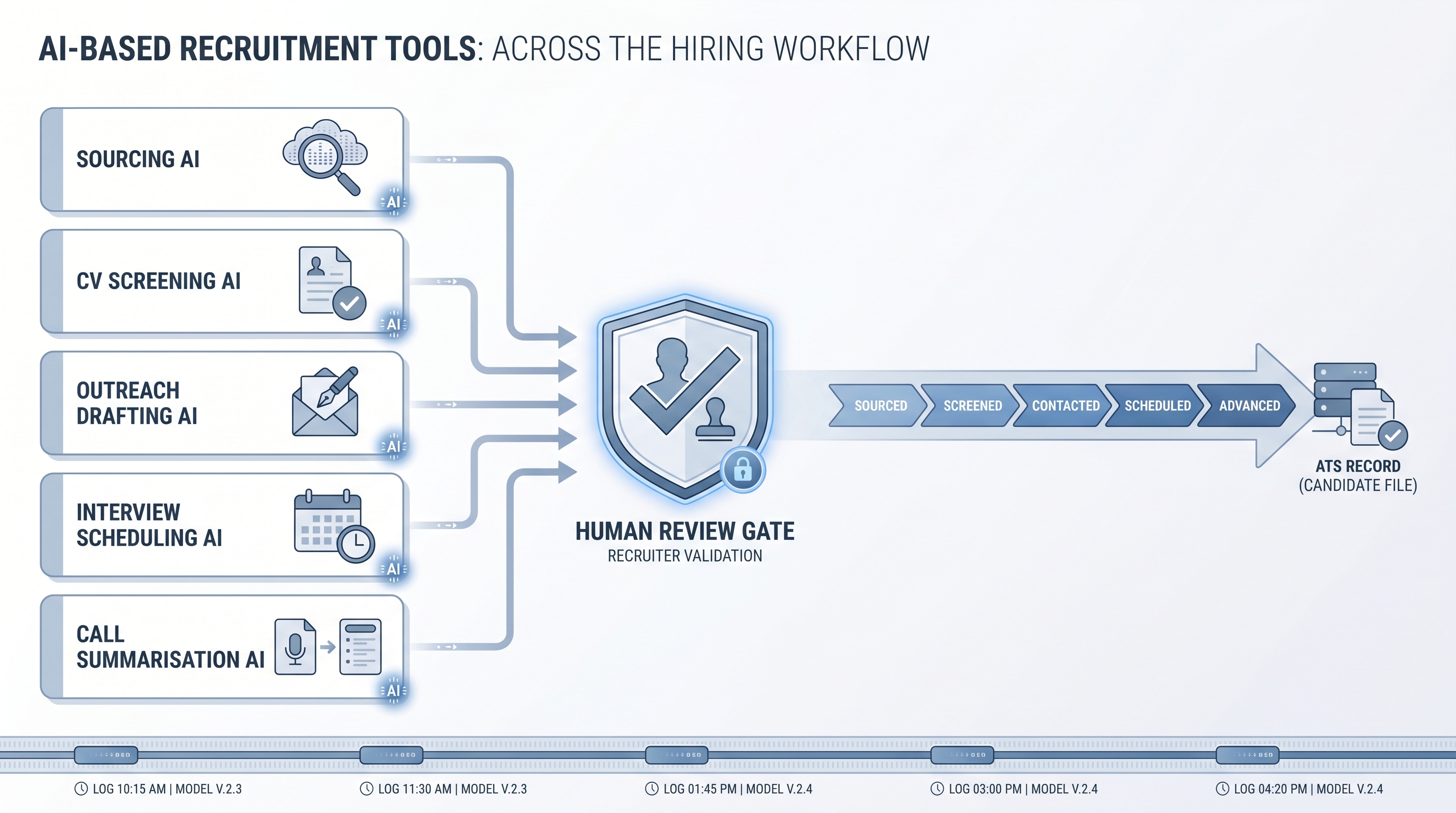

Recruitment software where AI is the operative layer for a specific hiring task: sourcing candidates from signal-rich profiles, screening CVs against job criteria, drafting personalised outreach, scheduling interviews from calendar availability, or summarising call notes into structured records.

Michal Juhas · Last reviewed May 9, 2026

What are AI-based recruitment tools?

AI-based recruitment tools are software products where AI inference is the operative mechanism for a specific hiring task, not a summary button or drafting helper bolted onto a rules-based engine. When a sourcing tool takes a job brief and returns a ranked candidate list based on semantic signal rather than keyword match, that is an AI-based tool. When a screening tool reads a CV and produces a score that changes with subtle context differences rather than a count of matched terms, that is an AI-based tool.

The distinction matters because many tools marketed as AI are rules-based engines with an AI-sounding interface. The practical test: give the tool two candidates, one with a non-linear career path that fits the role and one with a clean title match that does not. An AI-based tool should surface the stronger fit. A rules engine will surface the title match.

In practice

- A sourcer at a Series B fintech uses an AI-based sourcing tool to translate a senior backend engineer brief into a ranked candidate list from three platforms. The tool surfaces a candidate whose title is "Platform Reliability Lead" because the semantic model connects their skill signals to the role, not because the title matched. A keyword-based tool would have missed them.

- A TA team running 40 reqs a quarter uses an AI-based screening tool to produce a structured summary and a recommended stage for each inbound application. A recruiter reviews every recommendation before moving a candidate. The tool saves an average of eight minutes per application; the review gate catches misfits the model confidently ranked too high.

- In a vendor review, two tools describe themselves as "AI-powered screening." One returns the same score for a CV regardless of which job description it is scored against. The other returns meaningfully different scores and can explain in plain language why a candidate ranked low. The first is a rules engine with AI branding. The second is AI-based.
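The vendor-review check in the last example can be scripted. The sketch below is a minimal illustration, not any vendor's API: it assumes a hypothetical scoring function that takes a CV and a job description and returns a number, and the two scorers are toy stand-ins (simple word overlap, not AI) that only show what "same score regardless of job description" looks like in data.

```python
from typing import Callable, Sequence

# Hypothetical vendor interface (an assumption): (cv_text, job_description) -> score
Scorer = Callable[[str, str], float]


def score_spread(score_fn: Scorer, cv_text: str, job_descriptions: Sequence[str]) -> float:
    """Return max minus min score for one CV across several job descriptions."""
    if not job_descriptions:
        raise ValueError("need at least one job description")
    scores = [score_fn(cv_text, jd) for jd in job_descriptions]
    return max(scores) - min(scores)


def ignores_job_description(
    score_fn: Scorer,
    cv_text: str,
    job_descriptions: Sequence[str],
    tolerance: float = 1e-9,
) -> bool:
    """True when the score does not move across unrelated job descriptions."""
    return score_spread(score_fn, cv_text, job_descriptions) <= tolerance


def static_scorer(cv_text: str, job_description: str) -> float:
    """Toy stand-in: counts fixed keywords in the CV and never reads the job description."""
    keywords = {"python", "sql", "aws"}
    words = set(cv_text.lower().split())
    return len(keywords & words) / len(keywords)


def jd_aware_scorer(cv_text: str, job_description: str) -> float:
    """Toy stand-in: share of job-description words that also appear in the CV."""
    cv_words = set(cv_text.lower().split())
    jd_words = set(job_description.lower().split())
    return len(cv_words & jd_words) / len(jd_words) if jd_words else 0.0


CV = "python sql aws platform reliability lead"
JOBS = [
    "senior backend engineer python aws",
    "payroll accountant excel reporting",
]

if __name__ == "__main__":
    print("static scorer ignores JD:", ignores_job_description(static_scorer, CV, JOBS))
    print("JD-aware scorer ignores JD:", ignores_job_description(jd_aware_scorer, CV, JOBS))
```

In a real review you would replace the stand-ins with calls to each shortlisted tool and use deliberately unrelated job descriptions. A spread of zero is a signal to ask the vendor what the score is actually based on.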

Quick read, then how hiring teams use it

This is for recruiters, TA leads, and HR ops partners who need to evaluate recruitment software, explain trade-offs to procurement, or understand what a vendor means when they describe their product as AI-based. Skim the summary for shared vocabulary. Use the operational section when comparing tools or scoping an implementation.

Plain-language summary

- What it means for you: An AI-based recruitment tool does the task through AI inference, so it picks up on context that a keyword filter or rule engine misses, including non-linear career paths, adjacent skills, and timing signals.

- How you would use it: You set the task, review the output, and own the decision gate. The tool handles the first pass; you handle the judgment calls and the escalation path when the tool gets it wrong.

- How to get started: Pick one low-stakes workflow (outreach drafting is the lowest risk) and run the tool output in parallel with your manual process for two weeks before reducing the manual step. Score output quality before you scale.

- When it is a good time: After you have named an owner for errors, documented the lawful basis for the candidate data you are processing, and written down what happens when the tool produces a result you would not have chosen.
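The two-week parallel run described under "How to get started" is easier to judge if each tool output is scored as it is reviewed. The sketch below is one possible way to record that, assuming a simple yes/no rating ("would the recruiter have used this output unchanged?"). The class name, field names, and the thresholds `min_rate` and `min_items` are illustrative assumptions, not recommended values.

```python
from dataclasses import dataclass
from typing import List, Sequence


@dataclass(frozen=True)
class ParallelRunItem:
    """One tool output reviewed next to the manual version (hypothetical record shape)."""
    item_id: str
    accepted_as_is: bool       # recruiter would have used the tool output unchanged
    reviewer_note: str = ""


def acceptance_rate(items: Sequence[ParallelRunItem]) -> float:
    """Share of reviewed outputs the recruiter accepted without edits."""
    if not items:
        raise ValueError("no parallel-run items recorded yet")
    return sum(item.accepted_as_is for item in items) / len(items)


def ready_to_reduce_manual_step(
    items: Sequence[ParallelRunItem],
    min_rate: float = 0.9,     # assumed threshold, set your own
    min_items: int = 30,       # assumed minimum sample, set your own
) -> bool:
    """True only when enough items were reviewed and enough were accepted."""
    return len(items) >= min_items and acceptance_rate(items) >= min_rate


def needs_follow_up(items: Sequence[ParallelRunItem]) -> List[ParallelRunItem]:
    """Outputs the recruiter rejected or had to rewrite, for the error owner to read."""
    return [item for item in items if not item.accepted_as_is]


sample_run = [
    ParallelRunItem("outreach-001", True),
    ParallelRunItem("outreach-002", True),
    ParallelRunItem("outreach-003", False, "wrong seniority in opening line"),
    ParallelRunItem("outreach-004", True),
]

if __name__ == "__main__":
    print(f"acceptance rate: {acceptance_rate(sample_run):.2f}")
    print("ready to reduce manual step:", ready_to_reduce_manual_step(sample_run))
    for item in needs_follow_up(sample_run):
        print("follow up:", item.item_id, "-", item.reviewer_note)
```

Requiring a minimum number of reviewed items alongside the acceptance rate keeps a handful of good early outputs from ending the parallel run too soon.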

When you are running live reqs and tools

- What it means for you: AI-based tools compute their output from the specific input rather than from a static rule. That is the leverage; it is also where the risk concentrates. A tool that ranks candidates differently based on demographic proxies embedded in language patterns will not announce that it is doing so.

- When it is a good time: When criteria are agreed and stable, when a human review gate sits between AI output and any candidate-facing action, and when you have a quarterly demographic pass-rate review on the calendar before you wire a consequential decision to the AI layer.

- How to use it: Pair every AI tool with a review queue before candidate-facing actions. Log which model version scored which candidate. Run an adverse impact analysis quarterly. Read the sub-processor list before signing the data processing agreement.

- How to get started: Start with outreach drafting, then add sourcing signal tools, then screening tools. Each category increases employment-consequence risk; add governance steps before you add tool categories, not after the first incident.

- What to watch for: Silent pass-rate drift across demographic groups, integration breaks that empty a queue without alerting anyone, model updates from the vendor that change scoring logic without a public changelog, and candidate data retained by the vendor after contract end. See workflow automation for the same failure modes in the automation layer beneath these tools.
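The quarterly pass-rate review and the model-version log mentioned above can share one record format. The sketch below is a minimal illustration under stated assumptions: the record shape and function names are hypothetical, and the 0.8 threshold follows the four-fifths rule of thumb from the US EEOC Uniform Guidelines, which is a screening heuristic and not a legal determination. Your own counsel or auditor may require a different test.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, NamedTuple


class ScreeningOutcome(NamedTuple):
    """One logged screening decision (hypothetical record shape)."""
    candidate_id: str
    model_version: str   # which model version produced the recommendation
    group: str           # demographic group label used for the audit
    passed: bool         # candidate moved past the screening stage


def outcomes_for_version(outcomes: Iterable[ScreeningOutcome], version: str) -> List[ScreeningOutcome]:
    """Keep only decisions scored by one model version, so vendor updates can be compared."""
    return [o for o in outcomes if o.model_version == version]


def selection_rates(outcomes: Iterable[ScreeningOutcome]) -> Dict[str, float]:
    """Pass rate per group: passes divided by candidates screened."""
    totals: Dict[str, int] = defaultdict(int)
    passes: Dict[str, int] = defaultdict(int)
    for outcome in outcomes:
        totals[outcome.group] += 1
        if outcome.passed:
            passes[outcome.group] += 1
    return {group: passes[group] / totals[group] for group in totals}


def impact_ratios(rates: Dict[str, float]) -> Dict[str, float]:
    """Each group's pass rate divided by the highest group's pass rate."""
    if not rates:
        raise ValueError("no outcomes to analyse")
    top_rate = max(rates.values())
    if top_rate == 0:
        raise ValueError("no group has any passes, so the ratio is undefined")
    return {group: rate / top_rate for group, rate in rates.items()}


def flagged_groups(outcomes: Iterable[ScreeningOutcome], threshold: float = 0.8) -> List[str]:
    """Groups whose impact ratio falls below the threshold (four-fifths heuristic by default)."""
    ratios = impact_ratios(selection_rates(outcomes))
    return sorted(group for group, ratio in ratios.items() if ratio < threshold)


# Made-up illustration data: group A passes 5 of 10, group B passes 3 of 10.
sample_outcomes = (
    [ScreeningOutcome(f"a{i}", "v1", "A", i < 5) for i in range(10)]
    + [ScreeningOutcome(f"b{i}", "v1", "B", i < 3) for i in range(10)]
)

if __name__ == "__main__":
    rates = selection_rates(sample_outcomes)
    print("selection rates:", rates)
    print("impact ratios:", impact_ratios(rates))
    print("flagged groups:", flagged_groups(sample_outcomes))
```

Running the same analysis per model version, via `outcomes_for_version`, is one way to notice when a vendor update has shifted pass rates without a changelog entry. Small groups produce noisy ratios, so treat a flag as a prompt for human review rather than a conclusion.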

Where we talk about this

On AI with Michal live sessions, tool evaluation runs through two tracks: the AI in recruiting track covers how specific tool categories fit into a hiring workflow and what questions to ask vendors before signing, and the sourcing automation track connects tool outputs to the automation layer that moves data between them. If you are comparing shortlisted vendors or are mid-RFP, start at Workshops and bring the tool names, your integration requirements, and the person who would own the error inbox.

Around the web (opinions and rabbit holes)

Third-party creators move fast here. Treat these as starting points, not endorsements, and verify compliance postures and data handling practices directly with vendors before signing anything or wiring candidate data.

YouTube

- How to Automate Your Entire Hiring Process with n8n and Notion (Michele Torti) shows a practitioner-built hiring workflow useful for understanding where AI tools slot into an automated stack.

- n8n Tutorial: Build an AI HR Assistant That Shortlist... demonstrates AI scoring logic built from scratch, which helps you ask better questions of vendors describing similar logic as proprietary.

- Boost Your Productivity: Mastering the Power of Workflow Automation (DottoTech) covers the automation vocabulary that connects AI tool outputs to the rest of the stack.

Reddit

- How are you actually using AI in your recruiting workflow right now? in r/recruiting separates genuine AI tool adoption from bolt-on feature use, from practitioners who have used both.

- Has anyone used Zapier? in r/recruiting shows the integration workarounds that a well-connected AI-based tool stack is supposed to eliminate.

- I want to make some recruitment automated workflows but... in r/RecruitmentAgencies is a frank starting-point thread from practitioners working through the build-versus-buy decision.

Quora

- What are the best AI tools for recruitment and hiring? collects practitioner answers with varying depth; read for the evaluation criteria people mention alongside the platform names.

AI-based recruitment tools versus traditional recruitment software

| Dimension | Traditional tool | AI-based tool |
|---|---|---|
| Matching logic | Keyword or rules engine | AI semantic inference |
| Context sensitivity | Fixed criteria, same output | Output varies with subtle input changes |
| Explainability | Shows matched fields | Can explain ranking reasons |
| Bias risk surface | Lower (transparent rules) | Higher (opaque inference) |
| Governance burden | Lower (human initiates each step) | Higher (AI initiates, human audits outcomes) |
| Compliance requirement | Standard DPA and data retention | Plus bias audit, pass-rate monitoring, Article 22 review path |

Related on this site

- Glossary: AI recruiting tools, AI tools for recruitment, AI hiring tools, AI-based recruitment platform, AI-based recruiting, Adverse impact, AI bias audit, Human-in-the-loop, Workflow automation

- Blog: AI sourcing tools for recruiters

- Guides: Sourcers

- Workshops: AI in recruiting and sourcing automation

- Courses: Starting with AI: the foundations in recruiting

- Membership: Become a member