AI-based recruitment platform

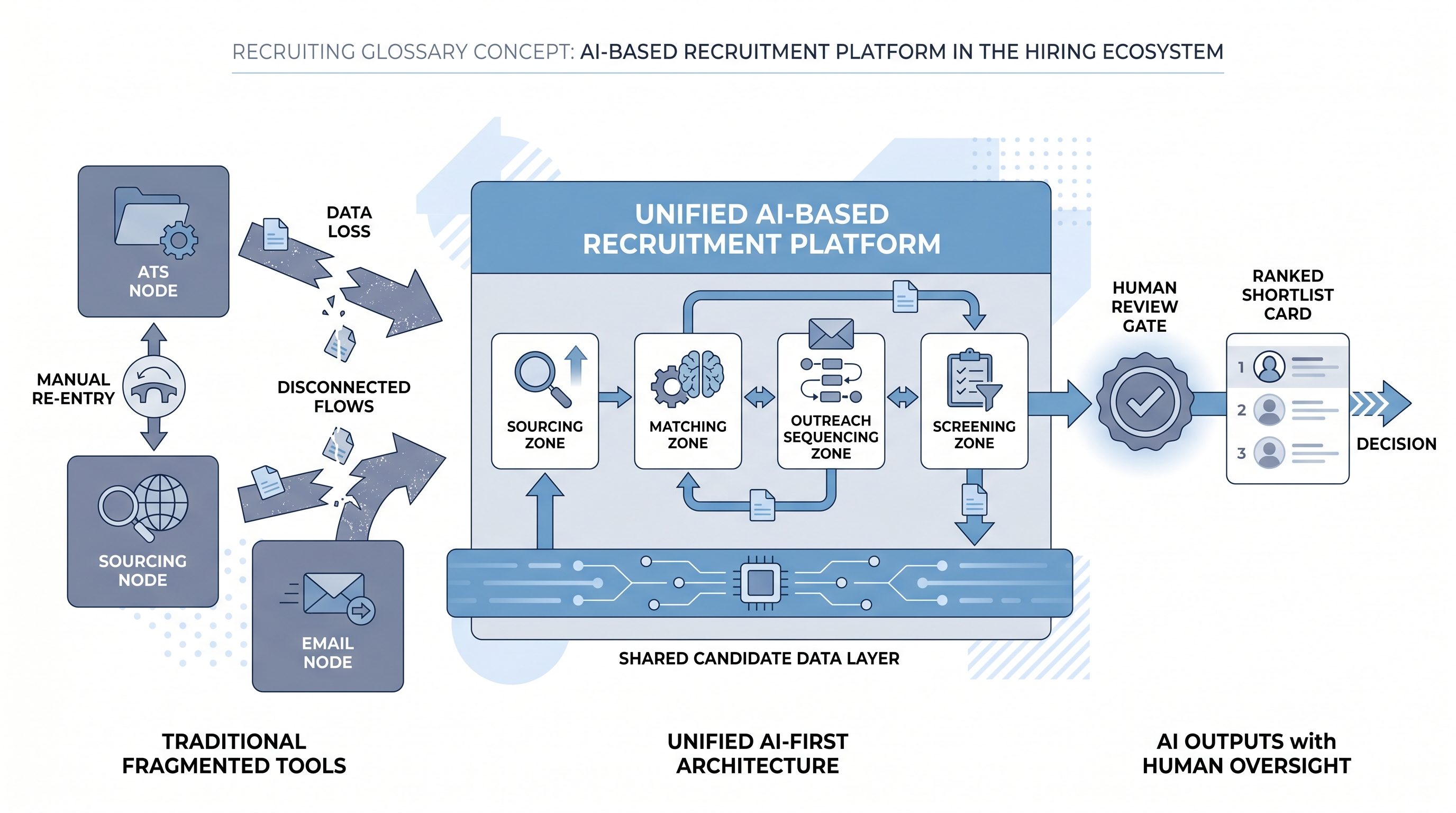

Recruitment software where AI is the foundational architecture rather than a bolt-on feature layer: sourcing signals, candidate matching, outreach sequencing, screening, and pipeline analytics share a unified data model so AI-driven recommendations flow continuously without manual re-entry between modules.

Michal Juhas · Last reviewed May 9, 2026

What is an AI-based recruitment platform?

An AI-based recruitment platform is recruitment software where AI is the foundational architecture rather than a set of features applied on top. Candidate records, job descriptions, sourcing signals, and evaluation outputs share a unified data model that AI queries and updates continuously. Matching, ranking, outreach timing, and deduplication happen through AI inference. The pipeline view a recruiter sees is the output of those decisions, not the mechanism driving them.

This differs from a traditional recruitment platform, which puts an applicant tracking system at the center and adds AI capabilities as helpers inside that workflow: a resume summary here, a draft message there, a scheduling assistant at one stage. In that model, a recruiter still drives each step. In an AI-based platform, the system determines when candidates are surfaced, when outreach is timed, and which shortlists are presented. The recruiter owns the review and decision gates.

The architecture test is practical, not rhetorical. Ask a vendor to show a candidate who applied nine months ago being re-surfaced automatically for a new req, with an outreach timing recommendation. If finding that candidate requires a manual search, the AI is a product feature. If the platform surfaces the candidate without prompting, the AI is the infrastructure.

In practice

- A recruiter opening a new senior marketing req on an AI-based recruitment platform does not start with a Boolean search. The platform surfaces ten warm candidates from past sourcing campaigns and previous applicants, ranked by fit score and outreach timing recommendation. The recruiter reviews, removes two, and sends personalised outreach to the remaining eight from within the platform in under twenty minutes.

- An RPO team using an AI-based recruitment platform for three client accounts benefits from shared candidate data that does not require re-sourcing the same profiles for similar roles. When a fintech client opens a compliance analyst req, the platform flags two candidates from a previous banking search at a different client, with permissions set so each client sees only their own data.

- In a vendor demo, an AI-based recruitment platform and an ATS with AI add-ons can look identical. The difference surfaces when you ask to see how a candidate who applied twice (once inbound, once sourced) appears in the system. A unified data model shows one record with a full history. Two records, or a merge requiring manual intervention, indicates bolt-on AI, not a foundational architecture.
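The "one record with a full history" test can be made concrete with a small sketch. It assumes a hypothetical schema (`Touchpoint`, `CandidateRecord`) and swaps in a simple rule-based match key (a normalised email) for the AI-driven entity resolution a platform would claim. The sketch shows only the target outcome: an inbound application and a sourced touchpoint for the same person collapse into one record, and neither one is lost.

```python
from dataclasses import dataclass, field


@dataclass
class Touchpoint:
    """One interaction with a candidate (hypothetical schema)."""
    source: str  # e.g. "inbound" or "sourced"
    req_id: str
    email: str
    name: str


@dataclass
class CandidateRecord:
    """A single unified record holding every touchpoint for one person."""
    key: str
    name: str
    history: list[Touchpoint] = field(default_factory=list)


def match_key(tp: Touchpoint) -> str:
    # Rule-based key: trimmed, lower-cased email. This is an assumption;
    # real entity resolution would weigh many more signals.
    return tp.email.strip().lower()


def unify(touchpoints: list[Touchpoint]) -> dict[str, CandidateRecord]:
    """Group touchpoints into one record per match key, preserving order."""
    records: dict[str, CandidateRecord] = {}
    for tp in touchpoints:
        key = match_key(tp)
        if key not in records:
            records[key] = CandidateRecord(key=key, name=tp.name)
        records[key].history.append(tp)
    return records


# The same person applied inbound once and was sourced once.
events = [
    Touchpoint("inbound", "REQ-101", "Ana.Novak@example.com", "Ana Novak"),
    Touchpoint("sourced", "REQ-207", "ana.novak@example.com ", "Ana Novak"),
]
records = unify(events)
print(len(records))  # 1
print([tp.source for tp in records["ana.novak@example.com"].history])  # ['inbound', 'sourced']
```

If a vendor demo shows two records here, or needs a manual merge, the deduplication is happening outside the core data model.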

Quick read, then how hiring teams use it

This is for recruiters, TA leads, and HR ops partners who need to evaluate recruitment software, explain trade-offs to procurement, or understand what a vendor means when they describe their product as AI-based. Skim the summary for a shared vocabulary. Use the operational section when comparing platforms or scoping an implementation.

Plain-language summary

- What it means for you: An AI-based recruitment platform surfaces the right candidates at the right moment from every previous interaction, rather than waiting for a recruiter to rebuild the same search for each new role.

- How you would use it: You set intake criteria and review AI-curated shortlists. You own the review gates, the error inbox, and the pass-rate audit schedule.

- How to get started: Run a scorecard-based vendor review before any demo. Score data portability, explainability, bias monitoring, API stability, and data residency in writing before the first vendor meeting.

- When it is a good time: When your team has enough structured, reviewed historical hiring data that a model is not simply encoding past mistakes, and when a named owner is responsible for error monitoring and demographic pass-rate review before go-live.
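A demographic pass-rate review usually starts with a simple ratio check. The sketch below uses the four-fifths rule of thumb from the US Uniform Guidelines on Employee Selection Procedures. A group is flagged when its pass rate falls below 80% of the highest group's pass rate. The function names and the (group, passed) input shape are hypothetical, and a flag is a prompt for closer review, not a legal finding.

```python
from collections import Counter
from typing import Iterable


def selection_rates(outcomes: Iterable[tuple[str, bool]]) -> dict[str, float]:
    """Pass rate per group from (group, passed) pairs."""
    totals: Counter = Counter()
    passed: Counter = Counter()
    for group, ok in outcomes:
        totals[group] += 1
        if ok:
            passed[group] += 1
    return {g: passed[g] / totals[g] for g in totals}


def flag_adverse_impact(outcomes: Iterable[tuple[str, bool]], threshold: float = 0.8) -> list[str]:
    """Groups whose pass rate is below `threshold` times the highest group's rate."""
    rates = selection_rates(outcomes)
    best = max(rates.values())
    if best == 0:
        return []  # nobody passed, so there is no ratio to compare
    return sorted(g for g, r in rates.items() if r / best < threshold)


# Illustrative numbers only: group A passes 50 of 100, group B passes 30 of 100.
outcomes = [("A", True)] * 50 + [("A", False)] * 50 + [("B", True)] * 30 + [("B", False)] * 70
print(selection_rates(outcomes))      # {'A': 0.5, 'B': 0.3}
print(flag_adverse_impact(outcomes))  # ['B'] (0.3 / 0.5 = 0.6, below 0.8)
```

Small groups make these ratios noisy, so the owner of the review should also decide how many candidates a group needs before a flag is acted on.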

When you are running live reqs and tools

- What it means for you: AI-based means the platform updates candidate state, changes ranking signals, and times outreach without a recruiter triggering each step manually. When automation silently fails, the error is in the pipeline before anyone notices.

- When it is a good time: When the criteria you are optimising for are stable and agreed, when compliance with GDPR and applicable state AI employment laws is documented before you wire candidate-facing decisions to the AI layer, and when one person owns the pass-rate audit cadence.

- How to use it: Pair platform outputs with structured human review gates before any candidate-facing action. Log which model version scored which candidate. Run an adverse impact review at least quarterly.

- How to get started: Start with one module, sourcing or screening, not the full stack. For two weeks, compare AI shortlist quality against what a recruiter would have chosen manually before removing the manual step. Read the sub-processor list before signing the DPA.

- What to watch for: Pass-rate drift across demographic groups that surfaces slowly, outreach timing decisions that conflict with opt-out preferences stored in a disconnected CRM, candidate deduplication failures that create duplicate outreach, and model drift after a platform update that changes scoring logic without a public changelog.
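The two-week validation step can be tracked with a small comparison log. This is a sketch under stated assumptions. `ShortlistComparison`, `recall`, and `ready_to_drop_manual_step` are hypothetical names, and the 0.8 recall bar is an illustrative threshold, not a recommendation. The sketch measures how many of the recruiter's manual picks the AI shortlist also contained. Each comparison records the model version, and the check refuses to pass if the version changed mid-window, which covers the silent scoring change described above.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class ShortlistComparison:
    """One req's AI shortlist versus the recruiter's manual picks (hypothetical schema)."""
    req_id: str
    model_version: str          # logged so scoring changes can be traced
    ai_shortlist: list[str]     # candidate IDs proposed by the platform
    recruiter_picks: list[str]  # candidate IDs the recruiter chose manually


def recall(c: ShortlistComparison) -> float:
    """Share of the recruiter's picks that the AI shortlist also contained."""
    manual = set(c.recruiter_picks)
    if not manual:
        return 1.0  # the recruiter picked nobody, so there was nothing to miss
    return len(manual & set(c.ai_shortlist)) / len(manual)


def ready_to_drop_manual_step(comparisons: list[ShortlistComparison], min_recall: float = 0.8) -> bool:
    """True only if all comparisons used one model version and mean recall clears the bar."""
    if not comparisons:
        return False
    if len({c.model_version for c in comparisons}) != 1:
        return False  # the model changed mid-window: restart validation
    return mean(recall(c) for c in comparisons) >= min_recall


history = [
    ShortlistComparison("REQ-1", "v1", ["c1", "c2", "c3"], ["c1", "c2"]),
    ShortlistComparison("REQ-2", "v1", ["c4", "c5"], ["c4", "c6"]),
]
print(ready_to_drop_manual_step(history))                  # False (mean recall 0.75)
print(ready_to_drop_manual_step(history, min_recall=0.7))  # True
```

Recall alone ignores how many extra candidates the AI adds. A team that cares about shortlist length could also track the share of AI picks the recruiter kept.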

Where we talk about this

On AI with Michal live sessions, platform evaluation is a recurring topic in the sourcing automation and AI in recruiting tracks: what questions to ask vendors, how to read a data processing agreement, and what governance responsibilities the hiring team holds when the vendor runs the model. If you are comparing shortlisted vendors or are in the middle of an RFP, start at Workshops and bring the platform names, your integration requirements, and the name of the person who would own the error inbox.

Around the web (opinions and rabbit holes)

Third-party creators move fast here. Treat these as starting points, not endorsements, and verify compliance postures and data handling practices directly with vendors before signing anything.

YouTube

- How to Automate Your Entire Hiring Process with n8n and Notion (Michele Torti) shows a practitioner-built hiring workflow that illustrates the difference between a stack of bolted-on tools and an integrated flow, useful context before evaluating all-in-one platforms.

- n8n Tutorial: Build an AI HR Assistant That Shortlist... demonstrates how AI scoring logic works when built from scratch, which helps you ask better questions of platform vendors describing similar logic as proprietary.

- Boost Your Productivity: Mastering the Power of Workflow Automation (DottoTech) covers automation vocabulary before you enter a vendor conversation about triggers, actions, and AI orchestration layers.

Reddit

- How are you actually using AI in your recruiting workflow right now? in r/recruiting is a thread where practitioners who have used both separate genuine AI platform adoption from bolt-on feature use.

- Has anyone used Zapier? in r/recruiting shows the integration workarounds that a well-connected AI-based recruitment platform is supposed to eliminate.

- I want to make some recruitment automated workflows but... in r/RecruitmentAgencies is an honest starting-point thread from practitioners working through the build-versus-buy decision.

Quora

- What are the best AI tools for recruitment and hiring? collects practitioner answers with varying depth; read for the evaluation criteria people mention alongside the platform names.

AI-based recruitment platform versus traditional tools

| Dimension | Traditional ATS | AI-based recruitment platform |
|---|---|---|
| Data model | Stage-centric pipeline | Candidate-centric AI data model |
| AI role | Feature helper inside workflow | Engine driving workflow logic |
| Candidate deduplication | Rule-based or manual | AI-resolved across all sources |
| Historical reactivation | Manual search required | AI-surfaced by intent and timing signals |
| Pass-rate monitoring | On-request from vendor dashboard | Continuous alerts built into the platform |
| Governance burden | Lower (human initiates each step) | Higher (AI initiates, human audits outcomes) |

Related on this site

- Glossary: AI-based hiring platform, AI recruitment platform, AI hiring platform, AI-based recruiting, AI powered recruiting, AI recruiting solutions, Adverse impact, AI bias audit, Human-in-the-loop, Applicant tracking software

- Blog: AI sourcing tools for recruiters

- Guides: Sourcers

- Workshops: AI in recruiting and sourcing automation

- Courses: Starting with AI: the foundations in recruiting

- Membership: Become a member