AI interview intelligence

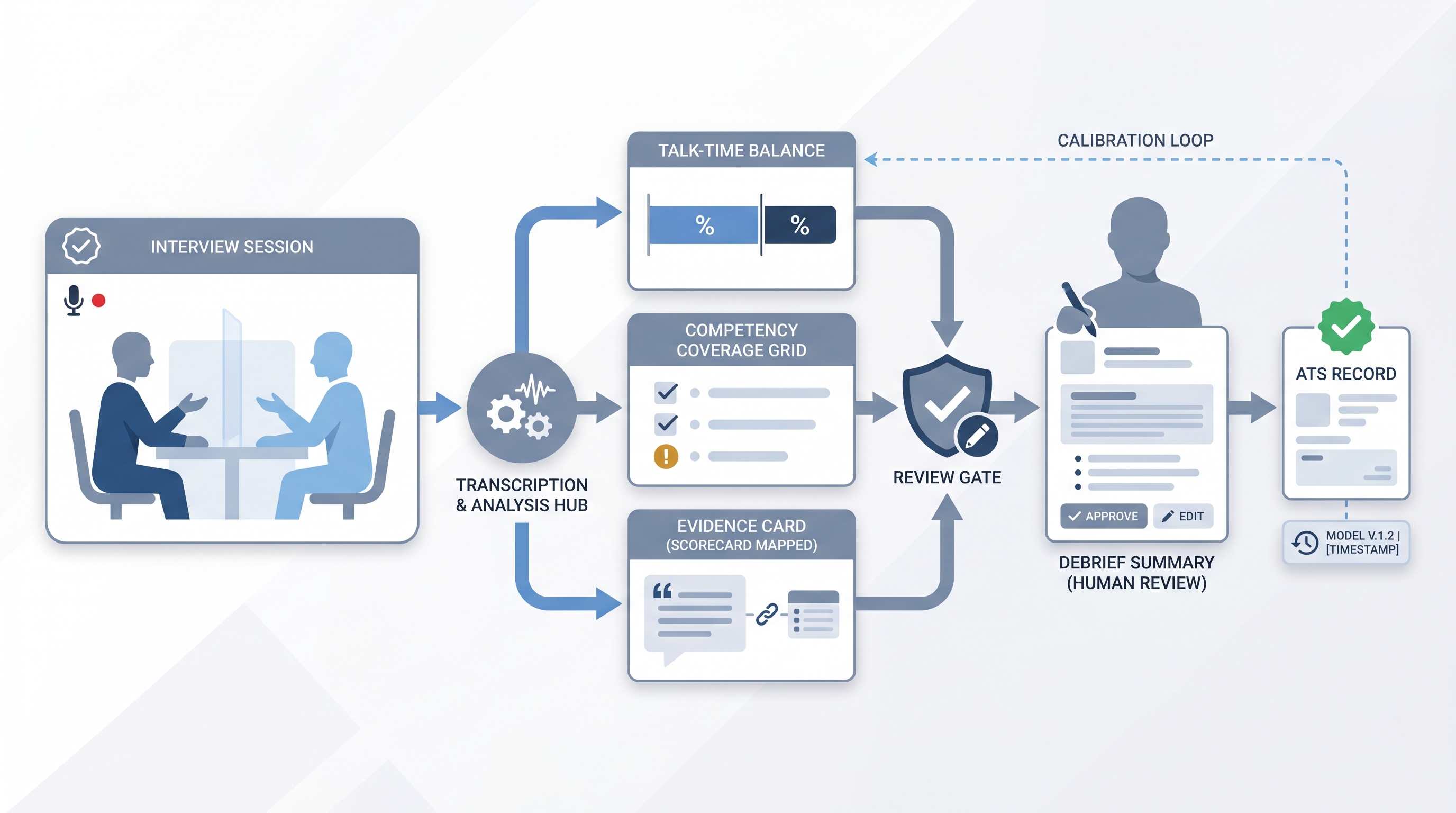

Software that captures, transcribes, and analyzes interview conversations to surface structured signals: talk-time ratios, question coverage gaps, scorecard-relevant evidence, and debrief-ready summaries for the hiring team.

Michal Juhas · Last reviewed May 15, 2026

What is AI interview intelligence?

AI interview intelligence software captures, transcribes, and analyzes interviews so hiring teams get structured debrief data instead of raw recordings. It maps what was said to your scorecard criteria, flags coverage gaps, and drafts a summary the interviewer reviews and approves before it enters the official record.

In practice

- A recruiter notices that one hiring manager always asks the same two questions and then runs out of time before covering competencies four and five on the scorecard. Interview intelligence surfaces this pattern after three interviews, not three months.

- A sourcing-focused team adopts an interview intelligence tool and discovers that candidates speak for only 38 percent of the interview on average, well below the 70 percent commonly cited as guidance for structured behavioral interviews. That number starts a real conversation.

- During a compliance review, TA ops exports structured debrief data from the platform to show regulators that every hiring decision was tied to a named criterion with a named human approving the final assessment.
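The two signals in the examples above, candidate talk-time and scorecard coverage, are simple to compute once a transcript has been split by speaker and tagged by competency. The sketch below is illustrative only: the `Segment` shape, the speaker labels, and the competency names are assumptions, not the data model of any particular vendor.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Segment:
    """One speaker turn from a diarized transcript (hypothetical shape)."""
    speaker: str                      # assumed labels: "candidate" or "interviewer"
    start: float                      # seconds from the start of the call
    end: float
    competency: Optional[str] = None  # scorecard criterion tagged on this turn, if any


def candidate_talk_ratio(segments: List[Segment]) -> float:
    """Share of total speaking time that belongs to the candidate (0.0 to 1.0)."""
    total = sum(s.end - s.start for s in segments)
    if total == 0:
        return 0.0
    candidate = sum(s.end - s.start for s in segments if s.speaker == "candidate")
    return candidate / total


def coverage_gaps(segments: List[Segment], scorecard: List[str]) -> List[str]:
    """Scorecard competencies that no segment was tagged with, in scorecard order."""
    covered = {s.competency for s in segments if s.competency}
    return [c for c in scorecard if c not in covered]


if __name__ == "__main__":
    interview = [
        Segment("interviewer", 0, 60, "ownership"),
        Segment("candidate", 60, 150, "ownership"),
        Segment("interviewer", 150, 200, "collaboration"),
        Segment("candidate", 200, 300, "collaboration"),
    ]
    print(f"Candidate talk-time: {candidate_talk_ratio(interview):.0%}")
    print("Gaps:", coverage_gaps(interview, ["ownership", "collaboration", "prioritization"]))
```

Real tools derive the speaker labels and competency tags from the model, so the quality of both numbers depends on transcription and tagging accuracy, which is why the human review step stays in the loop.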

Quick read, then how hiring teams use it

This is for recruiters, sourcers, TA, and HR partners who need the same vocabulary in debriefs, vendor calls, and policy reviews. Skim the first section when you need a fast shared picture. Use the second when you are wiring interview intelligence into your ATS, scorecard process, and compliance posture.

Plain-language summary

- What it means for you: Instead of relying on memory or rushed handwritten notes, you get a structured draft of what was covered in the interview, mapped to the criteria you set in advance. You still approve the record.

- How you would use it: Connect the tool to your video interview platform or invite it as a meeting participant. After the call, review the AI-generated note against your own memory. Correct anything that landed wrong, then submit.

- How to get started: Pick one role with a defined scorecard. Run five interviews in parallel with your current manual process. Only adopt AI notes as the primary record after the parallel run shows acceptable accuracy for your team.

- When it is a good time: When your panel consistently submits feedback late, in wildly different formats, or with competency gaps the debrief cannot recover from. Not when your scorecard is still being debated.

When you are running live reqs and tools

- What it means for you: Interview intelligence changes data lineage. The audit trail now includes the model version, input source, and the name of the human who approved each note. That is useful for compliance and essential to document before a challenge arises.

- When it is a good time: After your scorecard is finalized, after candidate consent and disclosure flows are in place, and after a parallel run has confirmed acceptable transcription quality on your actual call stack.

- How to use it: Gate visibility so no panelist sees another's AI-drafted note until all have reviewed and submitted their own. Set the system prompt or template to your scorecard criteria explicitly. Flag low-confidence transcript segments for manual review rather than silently including them in the debrief summary.

- How to get started: Audit your current feedback bottlenecks first. If the problem is late submission, AI drafting helps. If the problem is poor calibration on what good evidence looks like, fix the scorecard before you wire automation. Read your vendor DPA carefully: what a vendor says in a demo and what it commits to in a contract are not the same thing.

- What to watch for: Hallucinated quotes attributed to candidates, panel anchoring when drafts are visible too early, model drift when the transcription vendor quietly updates its engine, and GDPR consent gaps if the recording disclosure was not in the interview confirmation email.
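Two of the controls above, gating note visibility and flagging low-confidence transcript segments, come down to small rules that are worth spelling out with your vendor or ops team. The following is a minimal sketch under stated assumptions: the 0.80 threshold, the `TranscriptSegment` shape, and the function names are hypothetical, and real platforms expose these settings in their own way.

```python
from dataclasses import dataclass
from typing import List, Set, Tuple

# Assumed cutoff for illustration; calibrate it against your own parallel run.
CONFIDENCE_THRESHOLD = 0.80


@dataclass
class TranscriptSegment:
    text: str
    confidence: float  # 0.0 to 1.0, as reported by the transcription engine


def split_for_review(
    segments: List[TranscriptSegment],
    threshold: float = CONFIDENCE_THRESHOLD,
) -> Tuple[List[TranscriptSegment], List[TranscriptSegment]]:
    """Return (usable, needs_review) so low-confidence text is routed to a human
    instead of being silently included in the debrief summary."""
    usable = [s for s in segments if s.confidence >= threshold]
    needs_review = [s for s in segments if s.confidence < threshold]
    return usable, needs_review


def can_view_other_notes(panelist: str, submitted: Set[str], panel: Set[str]) -> bool:
    """A panelist may see colleagues' notes only once every panelist has submitted."""
    return panelist in panel and panel <= submitted


if __name__ == "__main__":
    usable, flagged = split_for_review([
        TranscriptSegment("I led the migration over two quarters.", 0.95),
        TranscriptSegment("[crosstalk] budget was about fifteen?", 0.52),
    ])
    print(len(usable), "usable,", len(flagged), "flagged for manual review")
    print(can_view_other_notes("ana", submitted={"ana"}, panel={"ana", "ben"}))
```

The gate checks the whole panel rather than only the requesting panelist, because the anchoring risk comes from anyone seeing a draft before their own assessment is on record.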

Where we talk about this

On AI with Michal live sessions, interview intelligence comes up in the AI in recruiting track when discussing how AI fits the evaluation pipeline without replacing human judgment. The debrief sequencing conversation, including anchoring risk and scorecard alignment, is a recurring live topic. Start at Workshops and bring your current scorecard, ATS name, and any vendor shortlist so the session is specific to your stack.

Around the web (opinions and rabbit holes)

Third-party creators move fast. Treat these as starting points, not endorsements, and double-check anything before you wire candidate data through a new platform.

YouTube

- Search "Metaview interview intelligence" or "Aspect AI recruiting" for product walkthroughs showing how structured debrief data flows into common ATS platforms.

- Search "structured interviewing debrief bias" for practitioner and academic sessions on how note-format inconsistency introduces evaluation error across panels.

Reddit

- r/recruiting has threads on whether AI interview notes are trustworthy and how interviewers feel about having a draft prepared before they write their own.

- r/humanresources covers disclosure and compliance questions that HR leaders raise when AI enters the formal evaluation record.

Quora

- Search "AI interview intelligence recruiter" for a range of practitioner answers on adoption friction, accuracy concerns, and where teams have found genuine time savings.

Manual notes versus AI-assisted debrief summaries

| Dimension | Manual notes | AI interview intelligence |
|---|---|---|
| Time to submit | Often 24 to 72 hours | Often under 30 minutes with a draft |
| Format consistency | Varies by interviewer | Consistent when scorecard is the template |
| Coverage tracking | Manual spot-check | Automated gap detection |
| Anchoring risk | Present if notes shared early | Present if drafts visible before submission |
| Audit trail | ATS timestamp | Model version, input source, human approver |
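
The audit trail row is the easiest one to make concrete. As a rough sketch, a record that ties an approved note to a criterion, a model version, an input source, and a named approver could look like the following. The field names and the example values are assumptions for illustration, not an export format from any specific ATS or vendor.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class DebriefAuditRecord:
    """One approved note, with the lineage fields an audit would ask for (hypothetical schema)."""
    candidate_id: str
    criterion: str       # the named scorecard criterion the note supports
    model_version: str   # version of the model that drafted the note
    input_source: str    # e.g. which recording or transcript the draft came from
    approved_by: str     # the named human who reviewed and approved the note
    approved_at: str     # ISO 8601 timestamp in UTC


def approve_note(candidate_id: str, criterion: str, model_version: str,
                 input_source: str, approver: str) -> DebriefAuditRecord:
    """Create the audit record; refuse to record an approval without a named human."""
    if not approver.strip():
        raise ValueError("a named human approver is required")
    return DebriefAuditRecord(
        candidate_id=candidate_id,
        criterion=criterion,
        model_version=model_version,
        input_source=input_source,
        approved_by=approver,
        approved_at=datetime.now(timezone.utc).isoformat(),
    )


if __name__ == "__main__":
    record = approve_note("cand-001", "ownership", "example-model-v1",
                          "video-call-recording", "Jane Doe")
    print(json.dumps(asdict(record), indent=2))
```

Making the record immutable (`frozen=True`) reflects the idea that an approved note should be superseded by a new record, not edited in place.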

Related on this site

- Glossary: Scorecard, AI-assisted interview feedback in the ATS, Human-in-the-loop (HITL), Structured output, Workflow automation, Candidate data enrichment

- Blog: AI sourcing tools for recruiters

- Live cohort: Workshops

- Course: Starting with AI: the foundations in recruiting

- Membership: Become a member