AI video interview software

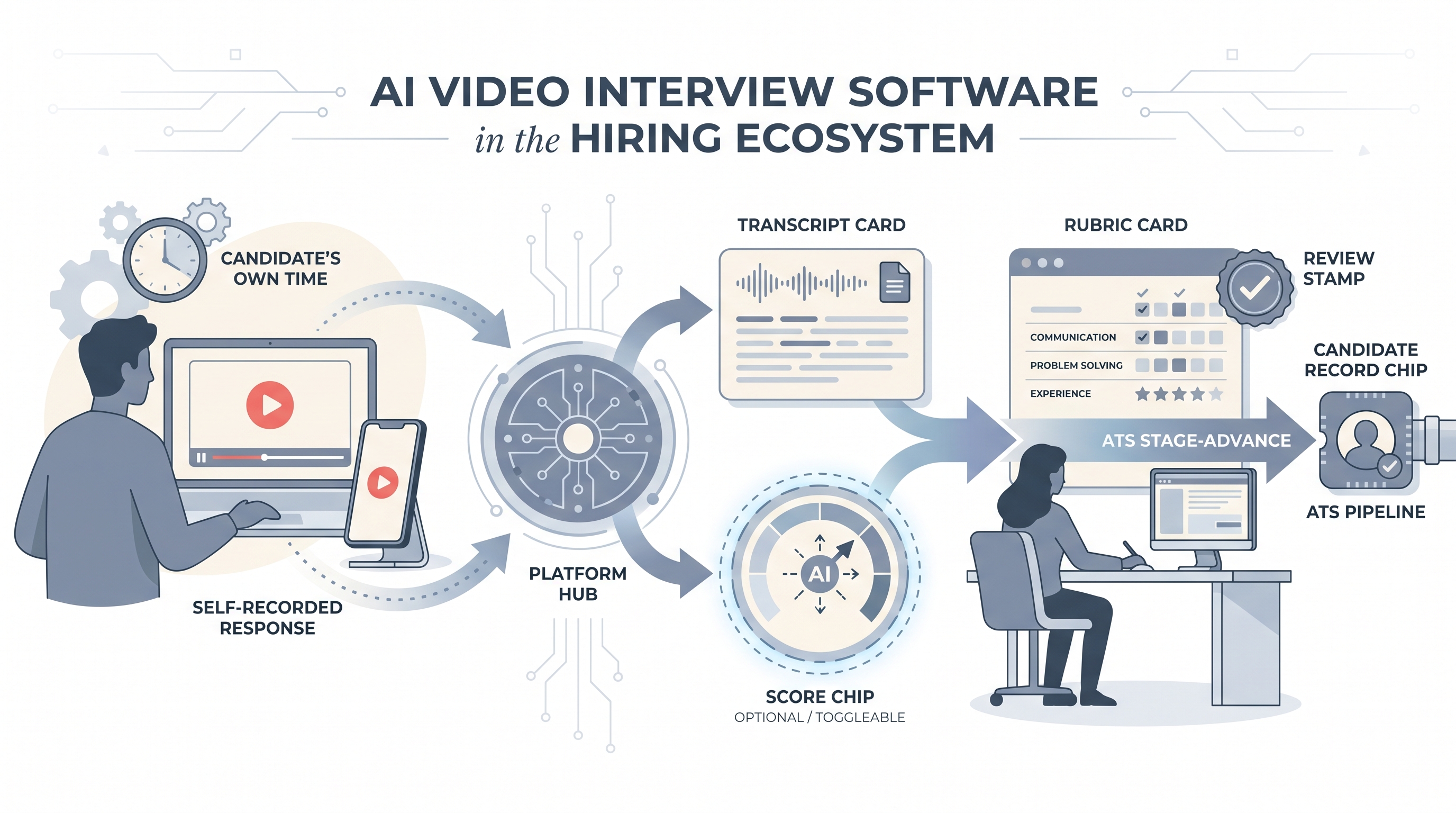

A platform category that combines video screening with automated analysis layers, processing candidate recordings to surface transcript signals, keyword matches, or scoring outputs before a human reviewer makes the hiring decision.

Michal Juhas · Last reviewed May 10, 2026

What is AI video interview software?

AI video interview software is a platform category that combines video recording with automated analysis. Candidates record answers to preset questions on their own schedule. An AI layer processes the resulting video or transcript, returning signals such as keyword matches, speaking pace, sentiment indicators, or a fit score. Reviewers then watch the clips alongside those signals before deciding who moves forward.

The term covers a spectrum. At one end are platforms that only capture and share recordings; at the other are tools that overlay facial expression scoring, paralinguistic analysis (pitch, pace, pauses), and composite hiring predictions. Most enterprise vendors sit somewhere in the middle: automated transcripts plus keyword scoring, with an optional confidence score your team can choose to surface or suppress.
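To make the "keyword match" signal concrete, here is a minimal Python sketch of its simplest form: a case-insensitive, whole-word count over a transcript. The keyword list and the `keyword_hits` and `flagged_skills` functions are hypothetical, and real platforms may add stemming, synonyms, or model-based matching, so treat this as an illustration of the idea rather than how any vendor computes it.

```python
import re

# Hypothetical keyword list for one role; not taken from any vendor.
SKILL_KEYWORDS = ["python", "sql", "stakeholder", "dashboard"]


def keyword_hits(transcript: str, keywords: list[str]) -> dict[str, int]:
    """Count case-insensitive, whole-word matches of each keyword in a transcript."""
    text = transcript.lower()
    hits: dict[str, int] = {}
    for kw in keywords:
        # \b word boundaries stop "sql" from matching inside "mysql".
        pattern = r"\b" + re.escape(kw.lower()) + r"\b"
        hits[kw] = len(re.findall(pattern, text))
    return hits


def flagged_skills(hits: dict[str, int]) -> list[str]:
    """Keywords mentioned at least once: the 'flag' a reviewer would see."""
    return [kw for kw, count in hits.items() if count > 0]


if __name__ == "__main__":
    answer = ("I built a dashboard in Python and wrote SQL for stakeholder "
              "reports. Python is my main tool.")
    hits = keyword_hits(answer, SKILL_KEYWORDS)
    print(hits)
    print(flagged_skills(hits))
```

A hit count says nothing about answer quality: a candidate who says "I have never used SQL" still registers a match for "sql", which is one reason the human review stays in the loop.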

In practice

- A recruiter at a mid-size tech company sends 60 first-round screening invites via HireVue. Candidates answer three questions, with 90 seconds for each answer. The team reviews the recordings on Thursday afternoon using a shared rubric and ignores the vendor confidence score until legal has reviewed the bias audit.

- A talent acquisition manager says "we use AI screening" and means the platform auto-generates a transcript and flags answers that mention specific skills, not that a model is ranking candidates for hire.

- In sourcing communities, candidates call it "the robot video interview" and post on Reddit asking whether eye contact, lighting, or word choice affects the score. That anxiety points to transparency gaps you should address in your invite email.

Quick read, then how hiring teams use it

This is for recruiters, sourcers, TA, and HR partners who need shared vocabulary in debriefs, vendor calls, and policy reviews. Skim the plain-language section for a fast picture. Use the second section when you are making platform decisions or connecting tools to your ATS.

Plain-language summary

- What it means for you: You send candidates a link. They record a few short answers on their own time. Software returns the clips with optional AI signals, like keyword hits or a score. You and the hiring manager review before deciding who advances.

- How you would use it: For early-funnel screening on roles with more than 20 similar applicants per week, where you ask the same few questions on every first call and scheduling is the bottleneck.

- How to get started: Write the three questions you ask on every first screen. Build a rubric for each. Pilot on one stable role. Review the first batch manually, with the AI score hidden, to calibrate before you expose it to reviewers.

- When it is a good time: When scheduling is the real bottleneck, you have a written rubric, and you can staff a human review within five business days of each submission.
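For the calibration step, one simple check is whether the hidden AI score tracks the scores your reviewers gave against the rubric. The sketch below assumes you have exported both for the same pilot candidates, and it uses Pearson correlation because the two scores are usually on different scales (for example 1 to 5 versus 0 to 100). The `ready_to_expose` gate and its 0.6 cutoff are hypothetical placeholders your team would set, not an industry standard.

```python
from math import sqrt


def pearson(xs: list[float], ys: list[float]) -> float:
    """Pearson correlation between two equal-length score lists."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two equal-length lists with at least 2 scores")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("a score list has no variance; correlation is undefined")
    return cov / sqrt(var_x * var_y)


def ready_to_expose(human_scores: list[float], ai_scores: list[float],
                    min_r: float = 0.6) -> bool:
    """Hypothetical gate: show the AI score only if it tracks human rubric scores."""
    return pearson(human_scores, ai_scores) >= min_r


if __name__ == "__main__":
    # Hypothetical pilot: rubric scores (1-5) and hidden vendor scores (0-100).
    human = [4, 2, 5, 3, 1, 4]
    ai = [70, 55, 88, 60, 40, 52]
    print(round(pearson(human, ai), 2), ready_to_expose(human, ai))
```

With a pilot of a few dozen recordings the correlation is noisy, so treat it as one input to the calibration discussion rather than a pass/fail test. A high correlation also does not show the score is unbiased; that is what the adverse impact check is for.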

When you are running live reqs and tools

- What it means for you: AI video screening is a scheduling trade and a data capture tool. You gain throughput and a searchable transcript. You lose the live follow-up question, and you add regulatory surface area if you enable automated scoring.

- When it is a good time: After your rubric is calibrated, legal has reviewed consent language for each hiring jurisdiction, and you have run at least a basic adverse impact check on pilot results.

- How to use it: Wire the vendor into your ATS so reviewed clips move stages automatically. Keep AI-generated scores off the official record until a bias audit validates them. Log which rubric version and model version were active during each batch so you can answer an audit question later.

- How to get started: Resolve consent language and data retention questions before the first invite goes out. Test captions, mobile recording, and low-bandwidth playback. Run a calibration session where the hiring manager scores five pilot recordings against the rubric before live reviews begin.

- What to watch for: Completion drop-off after the invite (often a sign of friction in the recording flow, or of invites sent from a generic no-reply address), ghosting after submission, facial expression analysis overlays legal has not reviewed, and score drift when the vendor retrains its model between your cohorts.
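For the basic adverse impact check on pilot results, a common starting point in US practice is the four-fifths rule from the Uniform Guidelines on Employee Selection Procedures: divide each group's selection rate by the highest group's rate, and treat a ratio below 0.8 as a flag worth investigating. The sketch below assumes you can count, per group, how many candidates completed the video screen and how many advanced; the `GroupOutcome` type, group labels, and numbers are hypothetical. It is a screening heuristic, not a legal determination, and small pilot samples can trip it (or miss a real problem) by chance.

```python
from dataclasses import dataclass


@dataclass
class GroupOutcome:
    group: str      # hypothetical group label from your self-ID data
    screened: int   # candidates who completed the video screen
    advanced: int   # candidates moved to the next stage


def impact_ratios(outcomes: list[GroupOutcome]) -> dict[str, float]:
    """Each group's selection rate divided by the highest group's rate."""
    rates = {o.group: o.advanced / o.screened for o in outcomes if o.screened > 0}
    if not rates:
        raise ValueError("no group has any screened candidates")
    top_rate = max(rates.values())
    if top_rate == 0:
        raise ValueError("no one advanced; impact ratios are undefined")
    return {group: rate / top_rate for group, rate in rates.items()}


def four_fifths_flags(ratios: dict[str, float], threshold: float = 0.8) -> list[str]:
    """Groups whose impact ratio falls below the four-fifths threshold."""
    return sorted(group for group, ratio in ratios.items() if ratio < threshold)


if __name__ == "__main__":
    pilot = [
        GroupOutcome("group_a", screened=60, advanced=30),  # rate 0.50
        GroupOutcome("group_b", screened=40, advanced=12),  # rate 0.30
    ]
    ratios = impact_ratios(pilot)
    print(ratios, four_fifths_flags(ratios))
```

Running the same check on each new cohort also gives you an early signal of the vendor score drift mentioned above.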

Where we talk about this

Live AI in recruiting sessions at AI with Michal use video screening as a working case study: where does the human review gate have to stay, what does a compliant consent clause look like, and how do you brief candidates so they trust the process. If your organization is evaluating, replacing, or auditing this step, bring the real vendor contract and policy constraints to Workshops and work through them with practitioners who have run both sides.

Around the web (opinions and rabbit holes)

Third-party creators move fast. Treat these as starting points, not endorsements, and double-check anything before you wire candidate data to a vendor.

YouTube

- HireVue AI Explained: How Does It Work? walks through the vendor-side view of what the scoring layer claims to measure.

- Job Seekers React to One Way Video Interviews shows real candidate reactions worth watching before you write your invite email.

- The future of hiring and AI covers vendor claims around bias and AI scoring, useful context for evaluating automated overlays.

Reddit

- Has anyone used AI video interviews for hiring? Thoughts on bias? in r/recruiting is a frank practitioner thread on bias trade-offs.

- Are one way video interviews actually here to stay? in r/Recruitment debates agency realities and candidate experience.

- Recruiters, is asynchronous video interviewing of candidates useful or a waste of time? in r/recruiting is a longstanding thread with split opinions.

Quora

- What are the pros and cons of AI video interviews? collects mixed candidate and employer answers (read critically and check dates).

AI video scoring versus manual video review

| Factor | AI scoring enabled | Human-only review |
|---|---|---|
| Speed | High (batch processing) | Limited by reviewer hours |
| Construct validity | Varies by vendor; often undisclosed | Depends on rubric quality |
| Legal surface area | Higher (bias audit, consent, NYC Local Law 144) | Lower (standard interview rules) |
| Bias risk | Automated across vocal and facial-expression cues | Anchoring, halo effect, appearance cues |
| Auditability | Score log exists; inputs may be opaque | Reviewer notes required; reviewer accountable |
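The auditability row depends on having a record to point to. In line with the advice above to log which rubric version and model version were active for each batch, here is a minimal sketch that writes one JSON line per review batch. Every field name is hypothetical, and `vendor_model_version` assumes your vendor exposes a version identifier at all, which not every vendor does.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


def _utc_now() -> str:
    return datetime.now(timezone.utc).isoformat()


@dataclass
class BatchAuditRecord:
    """Hypothetical per-batch audit entry; adapt field names to your ATS."""
    req_id: str
    batch_id: str
    rubric_version: str
    vendor_model_version: str   # only meaningful if the vendor reports one
    ai_score_visible: bool      # was the AI score shown to reviewers?
    reviewers: list[str]
    logged_at: str = field(default_factory=_utc_now)


def to_log_line(record: BatchAuditRecord) -> str:
    """Serialize one record as a single JSON line for an append-only log."""
    return json.dumps(asdict(record), sort_keys=True)


if __name__ == "__main__":
    record = BatchAuditRecord(
        req_id="REQ-1234",
        batch_id="2026-05-pilot-1",
        rubric_version="screen-rubric-v2",
        vendor_model_version="unknown",
        ai_score_visible=False,
        reviewers=["recruiter_a", "hiring_manager_b"],
    )
    print(to_log_line(record))
```

Recording `ai_score_visible` per batch lets you answer later whether a given decision could have been influenced by the score, which matters if you kept scores off the official record during the pilot.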

Related on this site

- Glossary: One-way video interview, Async screening, Scorecard, Human-in-the-loop (HITL), Adverse impact, AI bias audit, Explainable AI in hiring

- Blog: AI candidate screening

- Guides: Talent acquisition managers

- Course: Starting with AI: the foundations in recruiting

- Live cohort: Workshops

- Membership: Become a member