Boolean search

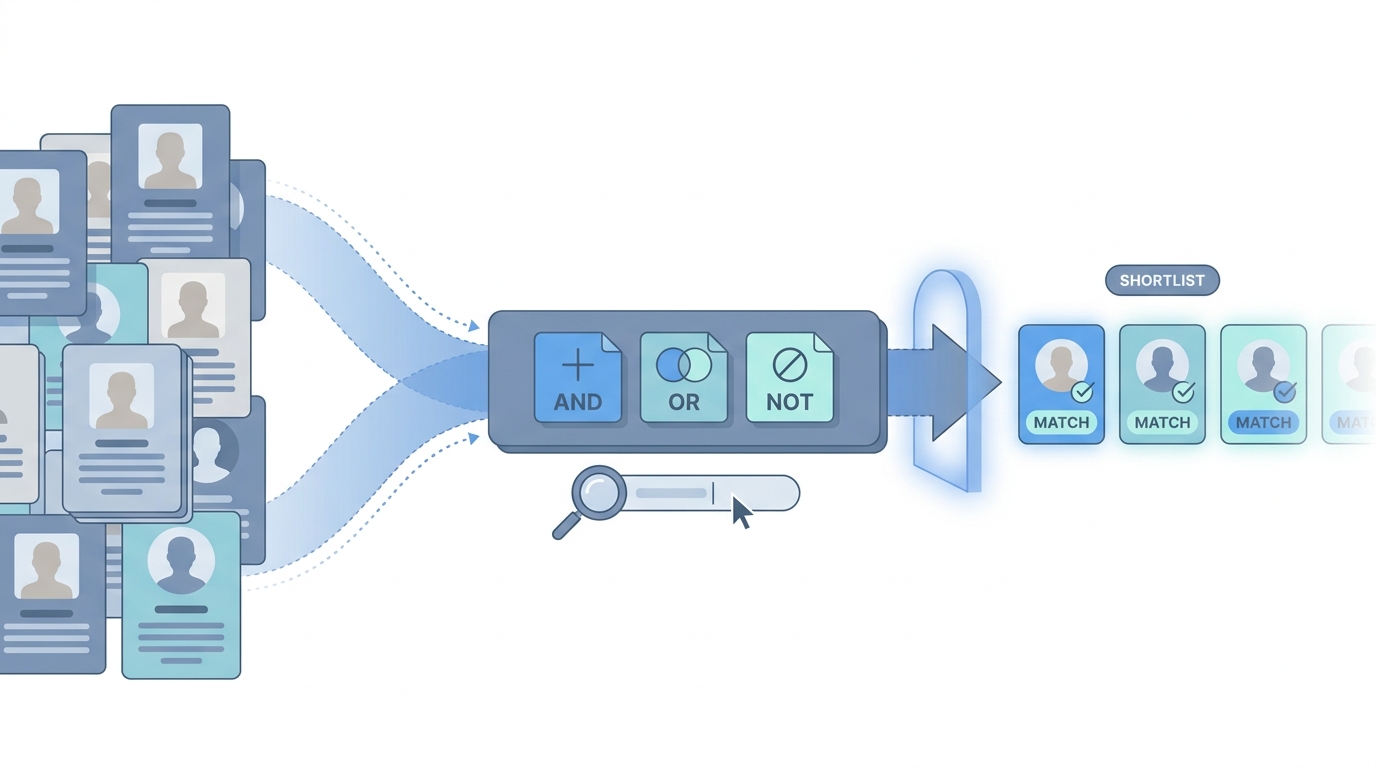

Literal keyword logic (AND, OR, NOT, parentheses, quotes) you use to narrow a talent pool in databases, job boards, or LinkedIn before you lean on semantic or AI ranking.

Michal Juhas · Last reviewed May 2, 2026

What is Boolean search?

Boolean search is a way to filter lists with AND, OR, NOT, quotes, and parentheses so only rows that match your exact words stay in the results. Recruiters use it in databases, spreadsheets, and LinkedIn when they need tight control over keywords.
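
To make the definition concrete, here is a minimal Python sketch of what a string like `"data engineer" AND (python OR scala) NOT intern` does to a list of rows. The `matches` helper and the sample profiles are made up for illustration, and the sketch uses plain substring matching, which is an assumption: real tools differ in operator syntax and in how they tokenize words.

```python
def matches(text, all_of=(), any_of=(), none_of=()):
    """Return True when text satisfies AND / OR / NOT over literal phrases."""
    haystack = text.lower()

    def has(phrase):
        # Plain substring check; a real search engine tokenizes words instead.
        return phrase.lower() in haystack

    return (
        all(has(p) for p in all_of)                       # AND: every phrase present
        and (not any_of or any(has(p) for p in any_of))   # OR: at least one present
        and not any(has(p) for p in none_of)              # NOT: none present
    )


# Hypothetical rows, standing in for profile headlines.
profiles = [
    "Senior Data Engineer, Python, Berlin",
    "Data Engineer Intern, Python, Berlin",
    "Senior Backend Engineer, Java, Berlin",
]

# Rough equivalent of: "data engineer" AND (python OR scala) NOT intern
hits = [
    p for p in profiles
    if matches(p, all_of=["data engineer"], any_of=["python", "scala"], none_of=["intern"])
]
print(hits)
```

Because the sketch matches substrings, excluding `intern` would also drop a row containing "International", which is the kind of side effect worth checking in whatever tool you actually run the string in.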

In practice

- In LinkedIn Recruiter or your ATS search box, sourcers build strings with AND and OR so the list only shows titles, skills, and locations that match hard rules. New teammates usually meet this on their first sourcing course, not from reading a product manual.

- After you export a big sheet from a job board or talent database, you use the same style filters to cut thousands of rows down before anyone reads profiles by hand. You will hear "send me your Boolean" when someone wants to reuse a search that worked last quarter.

- In team chat, people paste a query and ask "why am I getting zero results" or "why fifty thousand." Either way, the string mirrors how well the team understands the market.

Quick read, then how hiring teams use it

This is for recruiters, sourcers, TA, and HR partners who need the same vocabulary in debriefs, vendor calls, and policy reviews. Skim the first section when you need a fast shared picture. Use the second when you are deciding how it shows up in the ATS, sourcing tools, or candidate communications.

Plain-language summary

- What it means for you: Boolean means AND / OR / NOT in a search box, so you include the right titles and kick out the wrong ones, like filters on a shopping site.

- How you would use it: You start wide, you add exclusions until the list feels human-sized, then you read profiles.

- How to get started: Copy a working string from a teammate, change one clause, and compare result counts before you rewrite everything.

- When it is a good time: When "semantic" suggestions feel mushy and you need an explainable filter for compliance or a hiring manager who wants receipts.
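
The "start wide, add exclusions, compare counts" habit from the list above can be sketched in a few lines of Python. The rows, the `count_hits` helper, and the exclusion words are all hypothetical; the point is only to show logging the count after each added exclusion rather than rewriting the whole string at once.

```python
def count_hits(rows, include, exclude):
    """Count rows containing the include phrase and none of the excluded words."""
    total = 0
    for row in rows:
        text = row.lower()
        if include in text and not any(word in text for word in exclude):
            total += 1
    return total


# Hypothetical export, one title per row.
rows = [
    "Account Executive, SaaS",
    "Senior Account Executive, SaaS",
    "Account Executive Intern",
    "Account Executive Assistant",
    "Recruiter, Account Executive hiring",
]

exclusions = ["intern", "assistant", "recruiter"]

# Start wide (no exclusions), then add one exclusion per step and log the count.
log = []
for step in range(len(exclusions) + 1):
    active = exclusions[:step]
    log.append((active, count_hits(rows, "account executive", active)))

for active, count in log:
    print(f"exclusions: {', '.join(active) or '(none)'} -> {count} rows")
```

If one added exclusion makes the count fall off a cliff, that clause is the one to inspect before going further.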

When you are running live reqs and tools

- What it means for you: Boolean is an auditable slice: exact strings, hard negatives, repeatable exports. It pairs with semantic search when you rank inside a Boolean bucket.

- When it is a good time: When APIs return structured fields you can combine with literals, which is how sourcing automation workshops like to start.

- How to use it: Test in-tool, log the result count after each change, and name an owner for each clause. See also: Boolean search vs AI sourcing.

- How to get started: Rebuild one req from scratch with a sourcer watching; diff the strings in version control or a doc.

- What to watch for: Zero-result vanity strings, non-English title drift, and stealth profiles that defeat naive AND stacks.
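
For the "diff the strings" step, one possible approach is to split each string into clauses and compare them with Python's standard `difflib`. The two query strings and the `clauses` helper are invented for this sketch, and splitting on top-level ` AND ` is a simplification that would break on an `AND` nested inside parentheses.

```python
import difflib


def clauses(query):
    """Split a Boolean string into clauses on ' AND ' (naive, top-level only)."""
    return [clause.strip() for clause in query.split(" AND ")]


# Hypothetical strings: last quarter's search and this quarter's revision.
old = '("data engineer" OR "analytics engineer") AND python AND NOT intern'
new = '("data engineer" OR "analytics engineer") AND (python OR scala) AND NOT intern'

diff = list(
    difflib.unified_diff(
        clauses(old),
        clauses(new),
        fromfile="last_quarter",
        tofile="this_quarter",
        lineterm="",
    )
)

# Keep only the clause-level changes, skipping the '---' / '+++' file headers.
changed = [
    line for line in diff
    if line.startswith(("+", "-")) and not line.startswith(("+++", "---"))
]

print("\n".join(diff))
```

Keeping one clause per line is also what makes a plain version-control diff readable, so the same layout works if you store strings in a repository or a shared doc.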

Where we talk about this

Sourcing automation blocks still spend time on Boolean because providers expose structured fields worth filtering before you spend API money. AI in recruiting blocks connect the same discipline to hiring-manager trust. Practice live at Workshops.

Around the web (opinions and rabbit holes)

Third-party creators move fast. Treat these as starting points, not endorsements, and double-check anything before you wire candidate data.

YouTube

- How to Use Boolean Search on LinkedIn is one of many LinkedIn-focused walkthroughs; pick the one that matches your Recruiter vs free UI.

- How to Use LinkedIn Recruiter (LinkedIn) gives official-ish vocabulary for the filters sourcers argue about in kickoff meetings.

- Generative AI in 9 minutes (Fireship) helps teammates understand why AI does not erase the need for hard filters.

Reddit

- How do you make a great Boolean search? in r/recruiting is a practical tips thread.

- Is boolean search still big? in r/recruiting debates whether literal strings are a "dying art" or still the foundation.

- What's your go-to Boolean trick when LinkedIn search feels too crowded? in r/recruiting is full of exclusion patterns people actually run.

Quora

- What is Boolean searching? is a broad definition page (quality varies).

Boolean versus semantic shortlists

| Approach | Strength | Weak spot |
|---|---|---|
| Boolean | Hard exclusions, auditability | Synonyms and fuzzy titles |
| Semantic / vector | Meaning and similar phrasing | Harder to explain "why this row" to compliance |
| Hybrid | Boolean slice, semantic rank | Needs clear owner for each step |
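
The hybrid row can be sketched as two steps: a Boolean slice, then a ranking pass inside that slice. In the Python sketch below, `toy_score` is a plain word-overlap score standing in for a semantic ranker. It is not semantic search; a real setup would likely use embeddings or a vendor's ranking. The rows and helper names are hypothetical.

```python
def boolean_slice(rows, must, must_not):
    """Step 1: keep rows that contain every 'must' phrase and no 'must_not' phrase."""
    kept = []
    for row in rows:
        text = row.lower()
        if all(m in text for m in must) and not any(n in text for n in must_not):
            kept.append(row)
    return kept


def toy_score(query, row):
    """Step 2 stand-in: word overlap (Jaccard), NOT a real semantic model."""
    q = set(query.lower().split())
    r = set(row.lower().split())
    return len(q & r) / len(q | r)


# Hypothetical profile summaries.
rows = [
    "python data engineer building airflow pipelines",
    "python data engineer intern",
    "python developer for web apps",
    "data engineer python spark streaming pipelines",
]

# Hard filter first, so every ranked row already passes the auditable rules.
sliced = boolean_slice(rows, must=["python", "data engineer"], must_not=["intern"])

# Then rank only inside the slice.
ranked = sorted(
    sliced,
    key=lambda row: toy_score("spark streaming pipelines", row),
    reverse=True,
)
print(ranked)
```

Keeping the two steps as separate functions matches the "clear owner for each step" point in the table: one person can own the filter rules while another owns the ranking.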

Related on this site

- Blog: AI sourcing tools for recruiters

- Tools: ChatGPT for recruiters

- Guides: Sourcers

- Course: Starting with AI: the foundations in recruiting

- Community: Become a member