Diversity hiring tools

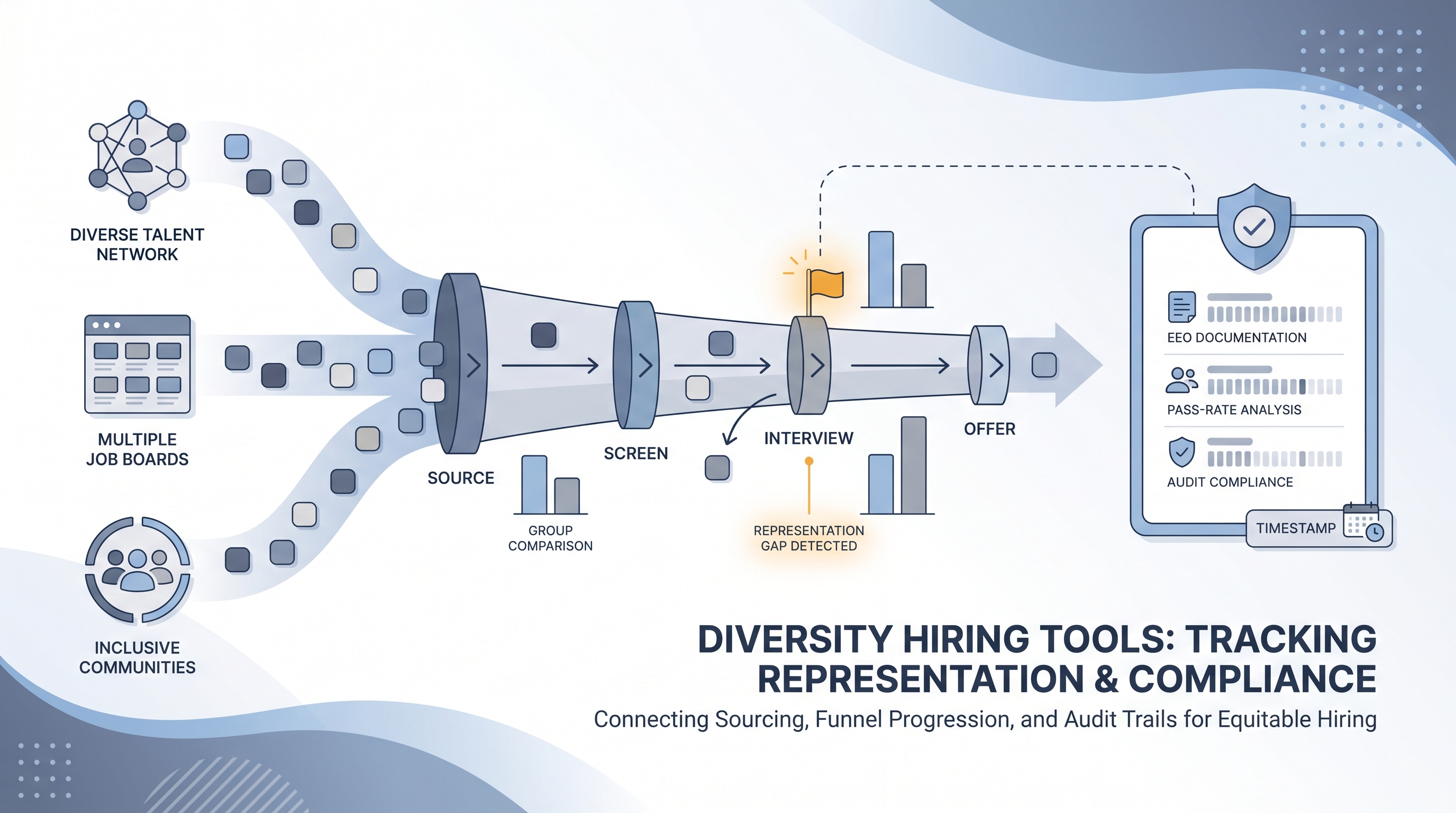

Software platforms and ATS features that help TA teams build representative pipelines by tracking candidate representation at each hiring stage, flagging where gaps emerge, and generating audit trails for GDPR, EEO, and compliance reviews.

Michal Juhas · Last reviewed May 10, 2026

What are diversity hiring tools?

Diversity hiring tools are software platforms and ATS features that track candidate representation at each stage of the hiring pipeline, from sourced to hired. Unlike a simple diversity count at the offer stage, they show where gaps open: whether underrepresented candidates drop off during sourcing, at the first screen, after a hiring manager panel, or at the offer stage.

The distinction matters because the fix depends on the stage. A gap at sourcing points to channel or search strategy. A gap at the hiring manager interview points to rubric calibration and structured debrief. Without stage-level data, DEI programs risk targeting the wrong intervention.

In practice

- A TA ops lead configures an ATS to pull EEO self-identification fields into a stage-conversion table, then presents it monthly in a pipeline review alongside time-to-fill and offer acceptance rate. When the hiring manager interview pass rate for one demographic group drops by 20 percentage points, the data surfaces a conversation the team had not had before.

- A sourcer running a university recruiting program adds historically Black colleges and universities and Hispanic-serving institutions to the sourcing list, then uses a diversity analytics tool to track whether early-funnel representation improves over the semester.

- An HRBP reviewing a failed DEI audit uses stage-level data to show that the gap is not at sourcing but at the hiring manager interview stage, and uses that finding to mandate structured scorecard criteria and a blind debrief before the next cohort opens.

Quick read, then how hiring teams use it

This is for recruiters, sourcers, TA, and HR partners who need the same vocabulary in debriefs, vendor calls, and policy reviews. Skim the first section when you need a fast shared picture. Use the second when you are deciding how these tools show up in your ATS, sourcing workflow, or compliance documentation.

Plain-language summary

- What it means for you: Software that shows where your pipeline loses diverse candidates at each stage, not just whether your final hires match a target.

- How you would use it: Connect your ATS EEO fields to a stage-level conversion report. Review it monthly alongside pipeline health metrics rather than only at year-end.

- How to get started: Export one quarter of stage-decision data with EEO indicators, build a simple pivot table by group, and identify the two stages with the biggest drop. Fix those before buying new software.

- When it is a good time: After you have a consistent EEO data collection process and a named owner for the review cadence. A tool without an owner generates reports nobody acts on.
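The "export and pivot" step above can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the stage names in `STAGES`, the `(group, furthest_stage)` row shape, and the function names are all assumptions made for the example, and the sample rows are invented. Map them to whatever your ATS export actually contains.

```python
from collections import defaultdict

# Hypothetical stage order; replace with the stage names in your ATS export.
STAGES = ["sourced", "screen", "hm_interview", "offer", "hired"]


def stage_counts(rows):
    """Count, per group, how many candidates reached each stage.

    Each row is (group, furthest_stage). A candidate whose furthest stage
    is 'hm_interview' is counted in 'sourced', 'screen', and 'hm_interview'.
    """
    counts = defaultdict(lambda: [0] * len(STAGES))
    for group, furthest in rows:
        reached = STAGES.index(furthest)
        for i in range(reached + 1):
            counts[group][i] += 1
    return dict(counts)


def conversion_rates(counts):
    """Stage-to-stage pass rate per group; None where the prior stage is empty."""
    rates = {}
    for group, c in counts.items():
        rates[group] = {
            f"{STAGES[i]}->{STAGES[i + 1]}": (c[i + 1] / c[i] if c[i] else None)
            for i in range(len(STAGES) - 1)
        }
    return rates


def biggest_gaps(rates, group, reference, top_n=2):
    """Transitions where `group` trails `reference` the most, largest gap first."""
    gaps = []
    for step, ref_rate in rates[reference].items():
        grp_rate = rates[group][step]
        if ref_rate is not None and grp_rate is not None:
            gaps.append((step, ref_rate - grp_rate))
    gaps.sort(key=lambda g: g[1], reverse=True)
    return gaps[:top_n]


# Invented sample rows, for illustration only.
rows = (
    [("A", s) for s in STAGES for _ in range(2)]
    + [("B", "sourced")] * 2 + [("B", "screen")] * 5
    + [("B", "hm_interview"), ("B", "offer"), ("B", "hired")]
)
rates = conversion_rates(stage_counts(rows))
top_two = biggest_gaps(rates, group="B", reference="A")
```

With real data, `top_two` gives the two transitions to look at first. Small groups produce noisy rates, so treat the ranking as a prompt for investigation rather than a conclusion.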

When you are running live reqs and tools

- What it means for you: Diversity hiring tools connect to your ATS stage data and EEO fields to produce group pass-rate tables, sourcing channel analytics, and audit-ready exports. They make bias risks visible at the point where calibration still helps.

- When it is a good time: After your sourcing channels are stable enough to measure, your EEO consent language is documented, and at least one person owns the metric review cadence.

- How to use it: Pull stage-conversion data by group. Flag stages where one group passes at less than four-fifths (80%) of the rate of the highest-passing group, then investigate whether the selection tool at that stage has documented validity. See the Adverse impact and AI bias audit glossary entries for the full audit methodology.

- How to get started: Map your ATS stage fields to EEO indicators, confirm consent language with legal, and build the first report before evaluating dedicated software. Most early-stage programs run on ATS exports and a spreadsheet.

- What to watch for: Self-identification gaps that leave the sample too small for statistical conclusions, AI recommendation features that embed historical bias, and GDPR documentation that lags behind the data collection. Log which tool version produced each report and store the audit trail with your DPIA.
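The four-fifths comparison described in the list above is simple arithmetic: divide each group's pass rate by the highest group's pass rate and flag ratios below 0.8. The sketch below shows one way to compute it for a single stage. The function name, the `{group: (passed, total)}` input shape, and the `min_n` cutoff are assumptions made for illustration. In particular, `min_n=30` is an arbitrary placeholder for "too small to conclude anything", not a statistical or legal standard.

```python
def four_fifths_check(stage_results, threshold=0.8, min_n=30):
    """Compare each group's pass rate to the highest-passing group.

    stage_results: {group: (passed, total)} for ONE hiring stage.
    Returns {group: {"pass_rate", "impact_ratio", "flag", "small_sample"}}.
    Groups with total == 0 are skipped because no rate can be computed.
    """
    rates = {g: p / t for g, (p, t) in stage_results.items() if t > 0}
    if not rates:
        return {}
    top = max(rates.values())
    report = {}
    for group, rate in rates.items():
        # If nobody passed in any group, the ratio is undefined.
        ratio = rate / top if top > 0 else None
        report[group] = {
            "pass_rate": rate,
            "impact_ratio": ratio,
            # Flag when the group passes at under four-fifths of the top rate.
            "flag": ratio is not None and ratio < threshold,
            # Placeholder cutoff (assumption): mark results too small to trust.
            "small_sample": stage_results[group][1] < min_n,
        }
    return report


# Invented counts for one stage, for illustration only.
report = four_fifths_check({"A": (30, 50), "B": (20, 50), "C": (5, 10)})
```

Here group B passes at 40% against a top rate of 60%, an impact ratio of about 0.67, so it is flagged. Group C is not flagged but is marked `small_sample`, which is the cue to avoid drawing conclusions from it. A flag is a reason to review the selection step, not a finding of adverse impact on its own.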

Where we talk about this

On AI with Michal live sessions we cover diversity hiring tools in both directions: the AI in recruiting track connects them to structured evaluation and bias audit practices, while the sourcing automation track shows how to build sourcing channels that improve early-funnel representation without automating bias at scale. If you want the full room conversation with peers working the same problem, start at Workshops and bring your ATS schema and one real quarter of data.

Around the web (opinions and rabbit holes)

Third-party creators move fast. Treat these as starting points, not endorsements, and double-check anything before you wire candidate data to a new platform.

YouTube

- Search "diversity hiring funnel analysis" on the AIHR YouTube channel for practitioner walkthroughs of stage-level representation tracking and how to present findings to leadership.

- Search "DEI metrics hiring" on LinkedIn Talent Solutions YouTube for how sourcing and screening stages affect representation outcomes across the funnel.

- Search "adverse impact hiring tools" on YouTube for step-by-step tutorials on computing pass rates by group and spotting threshold violations from ATS exports.

Reddit

- Diversity hiring tools discussion in r/humanresources shows which features practitioners actually use versus vendor marketing claims.

- DEI hiring data and ATS in r/TalentAcquisition covers how TA ops teams structure quarterly diversity reporting and which metrics leadership acts on.

- Adverse impact and AI screening in r/recruiting connects diversity funnel concerns to the legal risk side, with examples from recruiters running AI tools at scale.

Quora

- "What tools do companies use to improve diversity in hiring?" collects practitioner and DEI consultant perspectives on which stage-level metrics matter most (quality varies; read critically).

Structured tools versus manual DEI tracking

| Approach | Visibility | Key risk |
|---|---|---|
| Stage-level ATS funnel tracking | Group drop-off at each gate | Requires consistent EEO self-identification |
| Dedicated DEI analytics platform | Richer cross-req and channel cuts | Data residency and GDPR consent scope |
| AI-powered sourcing filters | Wider early-funnel reach | Can embed historical hiring bias |
| Blind resume review | Reduces name and photo influence at screen | Does not address panel bias later in process |

Related on this site

- Glossary: Diversity funnel metrics, Adverse impact, AI bias audit, Scorecard, Explainable AI in hiring, Human-in-the-loop (HITL), Talent acquisition metrics

- Blog: AI sourcing tools for recruiters

- Guides: Sourcers

- Live cohort: Workshops

- Course: Starting with AI: the foundations in recruiting

- Membership: Become a member