Hiring assessment test

A standardized evaluation used in hiring to measure whether a candidate meets role requirements before a decision is made, covering cognitive ability, job-relevant skills, personality traits, or situational judgment.

Michal Juhas · Last reviewed May 5, 2026

What is a hiring assessment test?

A hiring assessment test is a standardized evaluation that measures whether candidates meet the requirements of a specific role before a hiring decision is made. The test format varies by what the role demands: a cognitive screen for roles requiring fast pattern recognition, a work sample for roles requiring documented output, or a situational judgment test for roles where decision-making under pressure is the core skill.

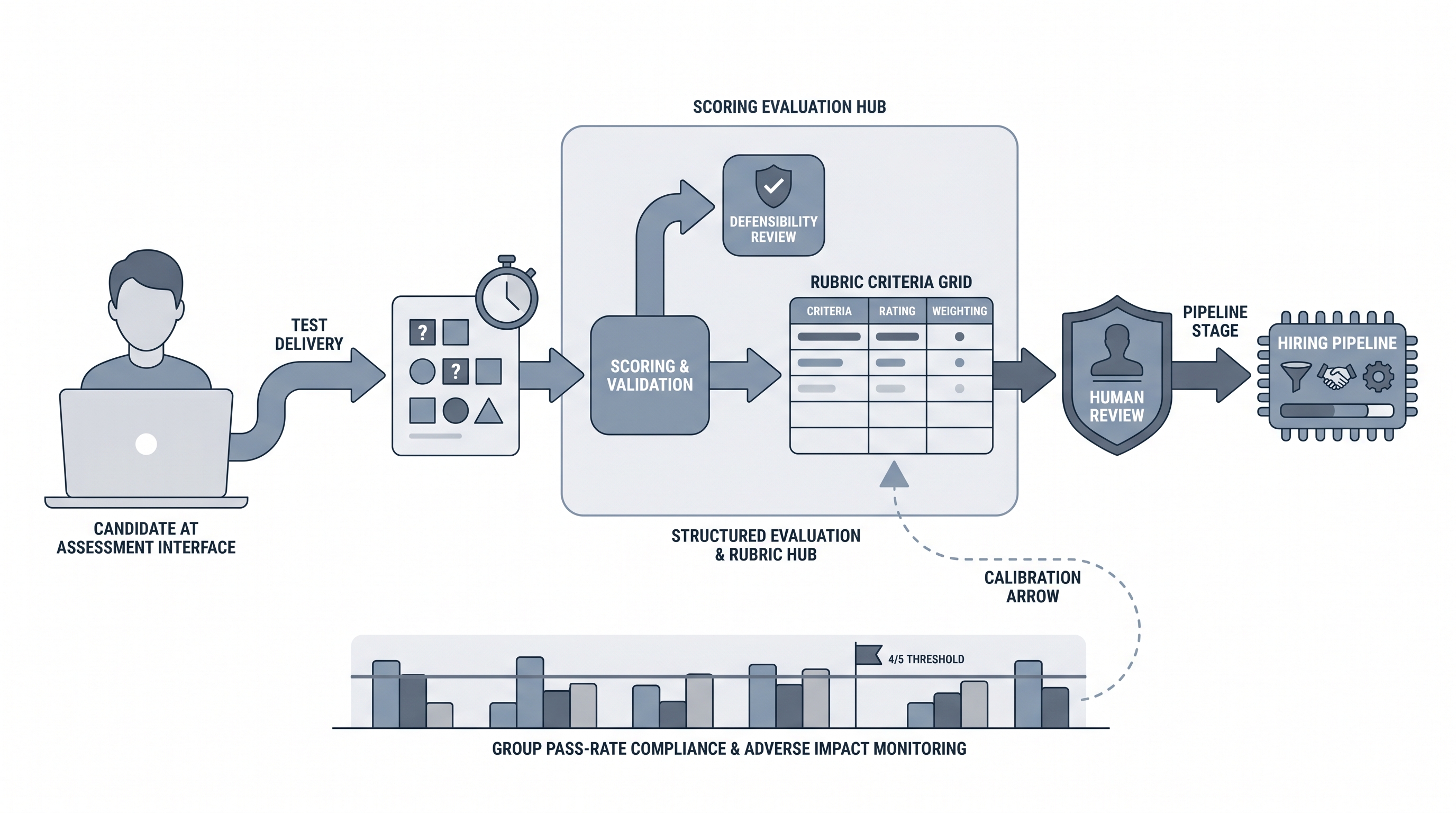

The distinguishing feature is structure: the same questions, the same scoring criteria, and the same scoring process applied consistently across every candidate. That consistency is what makes assessment data comparable across a pool and defensible in a legal review. Without it, you are comparing subjective impressions, not performance signals.
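To make "same criteria, same scoring process" concrete, here is a minimal Python sketch of a weighted rubric. The criterion names, weights, and 1-5 scale are hypothetical placeholders, not a recommended rubric. The point is that every candidate is scored against identical criteria and weights, and an off-rubric rating is rejected instead of silently scored.

```python
from dataclasses import dataclass

# Hypothetical rubric: criterion names and weights are illustrative only.
RUBRIC_WEIGHTS = {"structure": 0.4, "accuracy": 0.4, "clarity": 0.2}
RATING_MIN, RATING_MAX = 1, 5  # assumed 1-5 anchored rating scale


@dataclass
class Rating:
    candidate_id: str
    scores: dict[str, int]  # criterion name -> rating on the shared scale


def weighted_score(rating: Rating) -> float:
    """Apply the same criteria and weights to every candidate."""
    missing = RUBRIC_WEIGHTS.keys() - rating.scores.keys()
    extra = rating.scores.keys() - RUBRIC_WEIGHTS.keys()
    if missing or extra:
        # Comparability depends on identical criteria, so refuse partial or off-rubric ratings.
        raise ValueError(
            f"{rating.candidate_id}: missing={sorted(missing)} extra={sorted(extra)}"
        )
    for criterion, value in rating.scores.items():
        if not RATING_MIN <= value <= RATING_MAX:
            raise ValueError(f"{rating.candidate_id}: {criterion}={value} is off the scale")
    return sum(weight * rating.scores[c] for c, weight in RUBRIC_WEIGHTS.items())
```

Because the weights live in one shared table rather than in each reviewer's head, changing the rubric changes it for the whole pool at once.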

In practice

- A TA team at a 400-person company adds a 20-minute cognitive screen after the initial resume filter for all analyst-level roles. The screen runs before the recruiter call, so both sides arrive at the first conversation knowing whether the candidate has cleared the cognitive bar. Group pass rates for the first cohort reveal a demographic gap, which the team fixes before rolling the screen out to additional role types.

- A recruiter sourcing for a content marketing role sends a short writing brief after the first call. The brief asks candidates to outline an article on a topic the team actually covers. Two out of five candidates who looked strong on paper deliver work samples that change the shortlist entirely.

- A talent ops lead building a recruiting dashboard adds assessment scores by stage alongside hiring funnel conversion rates. When pass rates drop mid-quarter, the dashboard flags it before the hiring manager notices a change in interview quality.
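The demographic-gap check in the first example is usually an adverse impact screen. Below is a minimal sketch of the four-fifths rule, assuming you have one (group, passed) pair per candidate: each group's pass rate is divided by the highest group's pass rate, and any ratio below 0.8 is flagged. Group labels are hypothetical, and a flag is a prompt to investigate, not a legal finding. With small cohorts the ratio can swing a lot.

```python
from collections import Counter

FOUR_FIFTHS = 0.8  # the four-fifths (80%) rule of thumb


def pass_rates(results: list[tuple[str, bool]]) -> dict[str, float]:
    """Pass rate per group; results holds one (group, passed) pair per candidate."""
    totals = Counter(group for group, _ in results)
    passes = Counter(group for group, passed in results if passed)
    return {group: passes[group] / totals[group] for group in totals}


def impact_ratios(rates: dict[str, float]) -> dict[str, float]:
    """Each group's pass rate divided by the highest group pass rate."""
    top = max(rates.values())
    if top == 0:
        raise ValueError("no group has any passes; impact ratios are undefined")
    return {group: rate / top for group, rate in rates.items()}


def flagged_groups(results: list[tuple[str, bool]]) -> list[str]:
    """Groups whose impact ratio falls below the four-fifths threshold."""
    ratios = impact_ratios(pass_rates(results))
    return sorted(group for group, ratio in ratios.items() if ratio < FOUR_FIFTHS)


# Hypothetical cohort: group A passes 30 of 50, group B passes 16 of 40.
cohort = [("A", True)] * 30 + [("A", False)] * 20 + [("B", True)] * 16 + [("B", False)] * 24
print(flagged_groups(cohort))  # ['B']
```

In that cohort, A passes at 60% and B at 40%, so B's ratio is 0.40 / 0.60 ≈ 0.67, below 0.8, and B is flagged.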

Quick read, then how hiring teams use it

This is for recruiters, sourcers, TA, and HR partners who need the same vocabulary in intake calls, vendor reviews, and compliance audits. Skim the first section when you need a fast shared picture. Use the second when you are deciding where an assessment fits in a live req or building a selection system.

Plain-language summary

- What it means for you: A hiring assessment test is any structured evaluation that compares candidates on a common scale. The key word is structured: the same task, the same rubric, every time.

- How you would use it: Pick one attribute the role genuinely requires, find or build a test that measures it, validate that scores predict performance, and place it at the right funnel stage.

- How to get started: Before buying a vendor assessment, check whether your ATS already tracks score data you are not using. A simple scoring rubric applied to phone screen notes is a lightweight first version.

- When it is a good time: After the intake conversation is complete and the hiring manager has agreed on what the role actually requires. Assessments applied before that clarity produce noise.

When you are running live reqs and tools

- What it means for you: At scale, consistent assessment data makes pipeline reviews faster and debrief conversations shorter. Everyone has the same score to reference, not competing interpretations of the same conversation.

- How to use it: Log assessment vendor, version, and cohort date alongside each score so you can detect model drift across hiring cycles. Add a group pass-rate column to your pipeline report and review it every quarter.

- How to get started: Wire assessment invite triggers to ATS stage changes so candidates receive instructions automatically. Route scores back to the candidate record through the vendor API rather than manual entry. See ATS API integration for the technical setup.

- When it is a good time: When a role type is hired at volume and you have enough past hires to validate that scores correlate with performance. One hire per quarter is not enough data to validate an assessment.

- What to watch for: Candidate drop-off on long assessments, which harms outbound talent sourcing conversion for passive candidates. Aim for tasks under 45 minutes, communicate the time estimate clearly, and offer a deadline rather than a same-day response window.
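Logging vendor, version, and cohort date with each score is what makes drift visible. Here is a minimal sketch of that record and the quarterly group pass-rate rollup. The field names are hypothetical, so map them to whatever your ATS or vendor export actually provides. Grouping by version means a shift in pass rates after a vendor update shows up as its own row instead of blending into the quarter's average.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class ScoreRecord:
    # Hypothetical field names; map them to your ATS or vendor export.
    candidate_id: str
    vendor: str
    version: str
    cohort_date: date
    group: str
    score: float
    passed: bool


def quarter(d: date) -> str:
    """Calendar quarter label, e.g. 2026-Q2."""
    return f"{d.year}-Q{(d.month - 1) // 3 + 1}"


def quarterly_pass_rates(
    records: list[ScoreRecord],
) -> dict[tuple[str, str, str, str], float]:
    """Pass rate per (vendor, version, quarter, group) for the quarterly review."""
    totals: dict[tuple[str, str, str, str], int] = defaultdict(int)
    passes: dict[tuple[str, str, str, str], int] = defaultdict(int)
    for r in records:
        key = (r.vendor, r.version, quarter(r.cohort_date), r.group)
        totals[key] += 1
        passes[key] += r.passed  # True counts as 1, False as 0
    return {key: passes[key] / totals[key] for key in sorted(totals)}
```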

Where we talk about this

On AI with Michal live sessions, hiring assessment tests come up in both the AI in recruiting and sourcing automation tracks. The AI in recruiting sessions cover how to evaluate vendor assessments for bias and validity, how to brief a rubric the panel can calibrate to, and when to add AI scoring versus manual review. The sourcing automation sessions cover how to wire assessment invites and scores to ATS pipeline stages without manual copy-paste. If you want the full room conversation with real pipeline examples, start at Workshops and bring the role types you are currently hiring for.

Around the web (opinions and rabbit holes)

Third-party creators move fast. Treat these as starting points, not endorsements, and double-check anything before you wire candidate data.

YouTube

Search "pre-employment assessment test recruiting" for TA practitioners walking through vendor comparisons and validation stories. Look for videos showing actual score reports and group pass-rate data rather than vendor demo reels.

Reddit

r/recruiting and r/humanresources both have threads on assessment test drop-off rates and candidate experience complaints. The pattern shows up in threads about why strong candidates ghost after receiving an assessment invite.

Quora

"How do companies validate pre-employment tests?" surfaces answers from I-O psychology practitioners who explain content and criterion validity in plain language. The best responses include the minimum sample sizes needed before a validation study is credible.

Assessment type comparison

| Type | What it measures | Adverse impact risk | Best fit |
|---|---|---|---|
| Cognitive ability | Reasoning, pattern recognition | Higher for many groups | High-volume, fast-paced roles |
| Work sample | Job task output | Lower when task is role-specific | Specialist and mid-level roles |
| Situational judgment | Decision-making in scenarios | Moderate | Management, customer-facing roles |
| Personality inventory | Trait profile | Varies by instrument | Culture-sensitive, leadership roles |
| Job knowledge | Domain expertise | Low when validated | Technical, licensed, certified roles |

Related on this site

- Candidate assessment tools - the broader tool category with vendor landscape

- Employment skills assessment - work-sample specific assessment design

- Adverse impact - group pass-rate analysis and the four-fifths rule

- Explainable AI hiring - documenting and surfacing AI scoring decisions

- Human in the loop - governance framework for AI-assisted decisions

- Hiring funnel conversion rates - how assessment placement affects stage metrics

- Interview to offer ratio - calibration signal that assessment quality directly affects

- Scorecard - the rubric design that makes assessments comparable

- ATS API integration - wiring assessment scores into the pipeline automatically

- Workshops - live sessions on assessment design and pipeline analytics

- Become a member - office hours for vendor evaluation and compliance questions