Hiring assessment tools

The full category of software and structured exercises that hiring teams use to evaluate candidates before, during, or after interviews, covering cognitive tests, skills simulations, work samples, behavioral screens, and AI-scored assessments, selected to predict job performance and reduce reliance on subjective judgment.

Michal Juhas · Last reviewed May 7, 2026

What are hiring assessment tools?

Hiring assessment tools are the tests, simulations, and scored exercises that hiring teams add to the pipeline to get structured data before or alongside interviews. The category is wide: a five-minute cognitive ability screen, a take-home coding challenge, a structured situational judgment test, and an AI-scored async video interview all qualify. What makes them useful is not the technology but the validity evidence behind them: is this instrument predictive of performance in this specific role, for candidates with backgrounds comparable to your target pool?

The buying market is fragmented. Some vendors focus on one category, for example developer simulation platforms or validated personality suites. Others bundle multiple test types under one dashboard. Buying on interface quality rather than technical validity is the most common mistake: a polished invite flow does not make an unvalidated instrument less biased.

In practice

- A recruiter running a high-volume customer support search uses a short validated situational judgment test as one ranked data point, reviews group pass rates before the first batch of invites goes out, and does not use the score as the only gate to the next round.

- A TA leader evaluating a new vendor asks for the technical manual and learns the tool was normed on software engineers, not service roles, making the claimed predictive validity irrelevant for the open req.

- An HRBP reviewing a failed hiring round discovers that no one tracked demographic pass rates through the coding screen, leaving the team unable to respond to an internal equity audit.

Quick read, then how hiring teams use it

This is for recruiters, sourcers, TA, and HR partners who need shared vocabulary when evaluating vendors, configuring a screening stage, or running a compliance review. Skim the first section for a fast overview. Use the second when you are making decisions on a live req or integrating a new tool.

Plain-language summary

- What it means for you: A hiring assessment tool is a scored test, exercise, or AI screen that gives you a data point about a candidate beyond their CV. The score is meaningful only when the tool was built and validated for the job type you are filling.

- How you would use it: Choose one assessment that maps to your top two job requirements, send it to every candidate at the same stage, review group pass rates before setting a cut score, and never use a single score as the only gate to the next round.

- How to get started: Ask the vendor for a validity report that names the job family, sample size, and demographic group differences. If they cannot supply one for your role type, do not deploy until they can.

- When it is a good time: After you have a scorecard naming the competencies you are measuring, after legal has confirmed the lawful basis, and after you have a process for handling GDPR deletion requests.
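Reviewing group pass rates before setting a cut score can be sketched in a few lines of Python. This is a minimal illustration, not a compliance tool: the group labels, scores, and cut score are invented sample data, the function names are hypothetical, and the 0.8 threshold is the four-fifths rule of thumb, which is a screening heuristic and not a legal determination. Small groups produce noisy ratios, so treat a flag as a prompt for review with your compliance owner.

```python
from collections import defaultdict


def pass_rates(records, cut_score):
    """Return {group: share of candidates scoring at or above cut_score}.

    records is an iterable of (group_label, score) pairs.
    """
    totals = defaultdict(int)
    passed = defaultdict(int)
    for group, score in records:
        totals[group] += 1
        if score >= cut_score:
            passed[group] += 1
    return {group: passed[group] / totals[group] for group in totals}


def impact_ratios(rates):
    """Divide each group's pass rate by the highest group pass rate."""
    top = max(rates.values())
    if top == 0:
        # Nobody passed, so a ratio is undefined for every group.
        return {group: None for group in rates}
    return {group: rate / top for group, rate in rates.items()}


def flag_four_fifths(rates, threshold=0.8):
    """List groups whose impact ratio falls below the threshold."""
    ratios = impact_ratios(rates)
    return sorted(
        group
        for group, ratio in ratios.items()
        if ratio is not None and ratio < threshold
    )


# Invented sample data: (group label, assessment score).
sample = [
    ("A", 70), ("A", 80), ("A", 65), ("A", 90), ("A", 55),
    ("B", 50), ("B", 62), ("B", 58), ("B", 75), ("B", 40),
]

rates = pass_rates(sample, cut_score=60)
flagged = flag_four_fifths(rates)
print(rates)
print(flagged)
```

Rerunning the same check at several candidate cut scores shows how the ratio moves before you commit to one.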

When you are running live reqs and tools

- What it means for you: An AI-scored assessment captures candidate signal that manual review would miss at volume, but it also adds model risk: the scoring algorithm inherits any bias in the training data, can fail silently, and may produce different group pass rates at your specific cut score.

- When it is a good time: When the same competency must be evaluated consistently across fifty or more candidates in a single cycle, when your structured interview panel is stretched, and when you have a compliance owner who can run adverse impact reports before each new cohort launches.

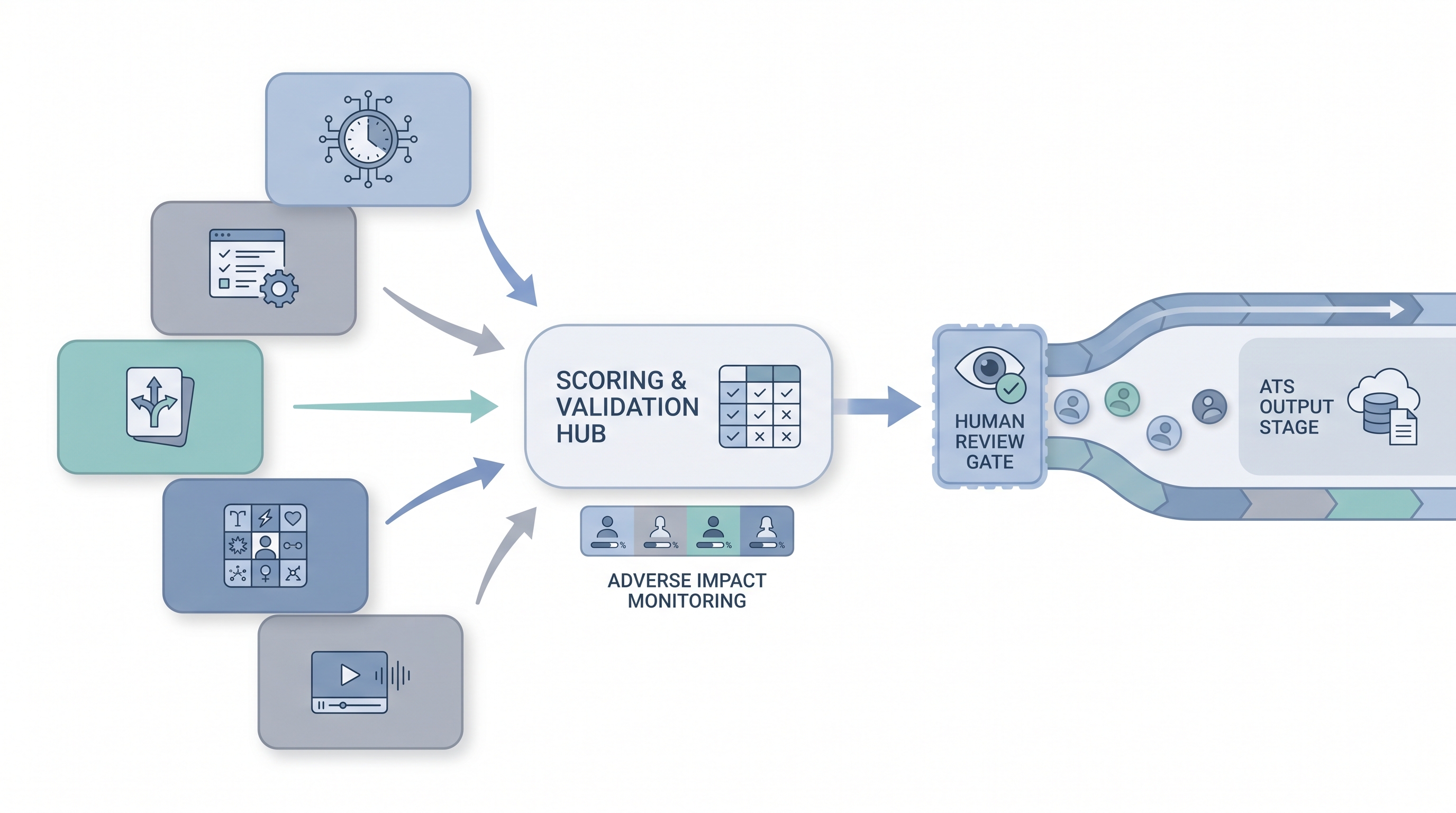

- How to use it: Integrate results into your ATS via a documented API connection, map each score to a specific scorecard criterion, and apply a human-in-the-loop review before any automated shortlisting decision reaches a candidate. Log which tool version scored each batch.

- How to get started: Run a parallel pilot: have your panel independently score ten candidates and compare their scores to the tool output. If the correlation is low, the instrument is not measuring what you think it is. Run an AI bias audit before expanding to full-cohort scoring.

- What to watch for: Silent adverse impact accumulating before anyone runs the numbers, AI scoring behaving differently at high versus low volume, vendors changing model versions mid-campaign without notice, and GDPR deletion requests the assessment platform cannot fulfill.
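The parallel pilot described above comes down to a correlation between two lists of scores. The sketch below computes a Pearson correlation by hand so it needs nothing beyond the standard library. The panel ratings and tool scores are invented, the function name is hypothetical, and no threshold for "low" is assumed here because the right bar depends on the role and the instrument. With only ten candidates the estimate is rough, so read it as a sanity check and not as validity evidence.

```python
import math


def pearson(xs, ys):
    """Pearson correlation between two equal-length lists of numbers."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two lists of the same length, at least 2 items")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        # A constant list has no spread, so correlation is undefined.
        raise ValueError("one of the lists has no variation")
    return cov / math.sqrt(var_x * var_y)


# Invented pilot data for ten candidates, in the same candidate order.
panel_scores = [3, 4, 2, 5, 4, 3, 1, 5, 2, 4]          # panel rating, 1 to 5
tool_scores = [62, 71, 55, 88, 70, 60, 40, 90, 58, 75]  # tool output, 0 to 100

r = pearson(panel_scores, tool_scores)
print(f"panel vs tool correlation: {r:.2f}")
```

Keeping the panel blind to the tool output while they score is what makes the comparison meaningful.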

Where we talk about this

On AI with Michal live sessions, hiring assessment tools appear in the AI in recruiting track: practical exercises for reading validity reports, running four-fifths adverse impact calculations on vendor data, and briefing hiring managers on what scored screens can and cannot predict. If you want the full room conversation with real vendor evaluation exercises and compliance scenarios, start at Workshops and bring the name of any tool you are currently evaluating.

Around the web (opinions and rabbit holes)

Third-party creators move fast. Treat these as starting points, not endorsements, and double-check anything before you wire candidate data.

YouTube

- Search "pre-employment assessment validity study recruiting" for IO psychology explainers from HR associations covering what criterion validity means and what vendor demos typically skip.

- Search "hiring assessment tools comparison" for practitioner-produced walkthroughs of work sample and situational judgment test design for non-specialist talent teams.

- Search "adverse impact pre-employment testing EEOC" for compliance-focused overviews of the four-fifths rule and when a cut score creates legal exposure.

Reddit

- r/recruiting has recurring threads on assessment vendor shortlists, candidate drop-off rates, and candid tool opinions outside paid review sites.

- r/humanresources covers pre-employment test compliance, adverse impact questions, and GDPR concerns from HR practitioners.

Quora

- Search Quora for "best hiring assessment tools" to find practitioner opinions across company sizes and industries, useful as a first-pass landscape scan before vendor demos (verify claims independently before buying).

Hiring assessment categories at a glance

| Category | What it measures | Main risk |
|---|---|---|
| Cognitive ability test | General mental ability and reasoning speed | Consistent demographic differences in norming studies |
| Work sample simulation | Task performance under realistic conditions | Time and cost to validate per role type |
| Situational judgment test | Decision-making in role-relevant scenarios | Norming sample must match your candidate pool |
| Personality inventory (Big Five) | Stable traits correlated with work behaviors | Cultural interpretation differences and faking |
| Technical skills test | Role-specific knowledge and execution | Scope creep: tests should reflect the actual job |
| AI-scored video screen | Behavioral signals from speech and expression | Model bias and Article 22 GDPR rights |

Related on this site

- Glossary: Candidate assessment tools, Employment assessment tools, Employment assessment test, Adverse impact, AI bias audit, Human-in-the-loop (HITL), Scorecard, Async screening, One-way video interview

- Blog: AI sourcing tools for recruiters

- Live cohort: Workshops

- Course: Starting with AI: the foundations in recruiting

- Membership: Become a member