Predictive analytics in recruitment

The use of historical hiring data and statistical models to forecast future outcomes, such as which candidates are likely to succeed in a role, which reqs are at risk of stalling, or how long a specific hire will take.

Michal Juhas · Last reviewed May 15, 2026

What is predictive analytics in recruitment?

Predictive analytics in recruitment uses historical hiring data and statistical models to estimate future outcomes. Instead of reporting what happened last quarter, it helps TA teams answer what is likely to happen next: which open reqs are at risk of missing their close date, which candidates in the pipeline are most likely to accept an offer, and which sourcing channels will produce hires who stay past 90 days.

The distinction matters for how you spend your time. Descriptive analytics helps you learn from the past. Predictive analytics helps you act before a problem becomes a missed hire.

In practice

- A TA lead who knows that senior engineering reqs historically take 60 days to close will flag any open req at day 45 as "at risk" in the Monday planning meeting. That is the simplest version of predictive analytics: a historical average used as a forward-looking alert.

- "Likelihood to accept" scores appear in sourcing platforms as a way to prioritize outreach. In practice, recruiters often find the score is driven by a few noisy signals like LinkedIn activity recency rather than what a candidate actually wants from a next role.

- "Predictive" is one of the most overloaded words in HR tech. Ask any vendor for the training dataset, sample size, and adverse impact test results before trusting a model's outputs in a hiring decision.

Quick read, then how hiring teams use it

This is for recruiters, TA leaders, and HR business partners who evaluate or implement data tools in hiring. Skim the first section for shared vocabulary in debrief conversations and vendor evaluations. Use the second when deciding whether to buy or build a predictive layer and how to operate it responsibly.

Plain-language summary

- What it means for you: Predictive analytics uses past hiring patterns to estimate what will happen in your current pipeline. Instead of asking why a req took 75 days after it closed, you ask whether a req open for 30 days is on track.

- How you would use it: Flag at-risk reqs before they miss a target, prioritize outreach to candidates most likely to engage, or forecast headcount plan achievability before budget cycles close.

- How to get started: Pull 18 months of closed reqs from your ATS. Calculate median time-to-fill by req type and level. Flag any open req whose age already exceeds that historical median. That is a working predictive heuristic that requires no vendor.

- When it is a good time: After your HR analytics in recruitment practice is stable: consistent disposition codes, clean stage timestamps, and a team that already reads and acts on a weekly funnel report.
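The starter heuristic above (a historical time-to-fill baseline per req type and level, then a flag on any open req older than that baseline) can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the row layout, the req IDs, and the function names `median_days_to_fill` and `flag_at_risk` are all hypothetical, and a real ATS export will need its own column mapping and cleanup.

```python
from collections import defaultdict
from datetime import date
from statistics import median

# Hypothetical closed reqs from an ATS export: (req_type, level, opened, closed).
closed_reqs = [
    ("engineering", "senior", date(2025, 1, 6), date(2025, 3, 7)),
    ("engineering", "senior", date(2025, 2, 3), date(2025, 4, 14)),
    ("engineering", "senior", date(2025, 3, 10), date(2025, 4, 29)),
    ("sales", "mid", date(2025, 1, 13), date(2025, 2, 12)),
    ("sales", "mid", date(2025, 3, 3), date(2025, 4, 12)),
]

# Hypothetical open reqs: (req_id, req_type, level, opened).
open_reqs = [
    ("REQ-101", "engineering", "senior", date(2025, 3, 24)),
    ("REQ-102", "sales", "mid", date(2025, 5, 12)),
    ("REQ-103", "design", "junior", date(2025, 1, 1)),  # no history for this group
]


def median_days_to_fill(closed):
    """Median days from open to close, per (req_type, level)."""
    durations = defaultdict(list)
    for req_type, level, opened, closed_on in closed:
        durations[(req_type, level)].append((closed_on - opened).days)
    return {key: median(days) for key, days in durations.items()}


def flag_at_risk(open_list, baselines, today):
    """IDs of open reqs whose age already exceeds the historical median.

    Reqs with no historical baseline are skipped rather than guessed at.
    """
    flagged = []
    for req_id, req_type, level, opened in open_list:
        baseline = baselines.get((req_type, level))
        if baseline is not None and (today - opened).days > baseline:
            flagged.append(req_id)
    return flagged


baselines = median_days_to_fill(closed_reqs)
at_risk = flag_at_risk(open_reqs, baselines, today=date(2025, 6, 2))
```

With this sample data only the senior engineering req is flagged. The design req is left alone because there is no history to compare it against, which is usually safer than borrowing a baseline from an unrelated req type.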

When you are running live reqs and tools

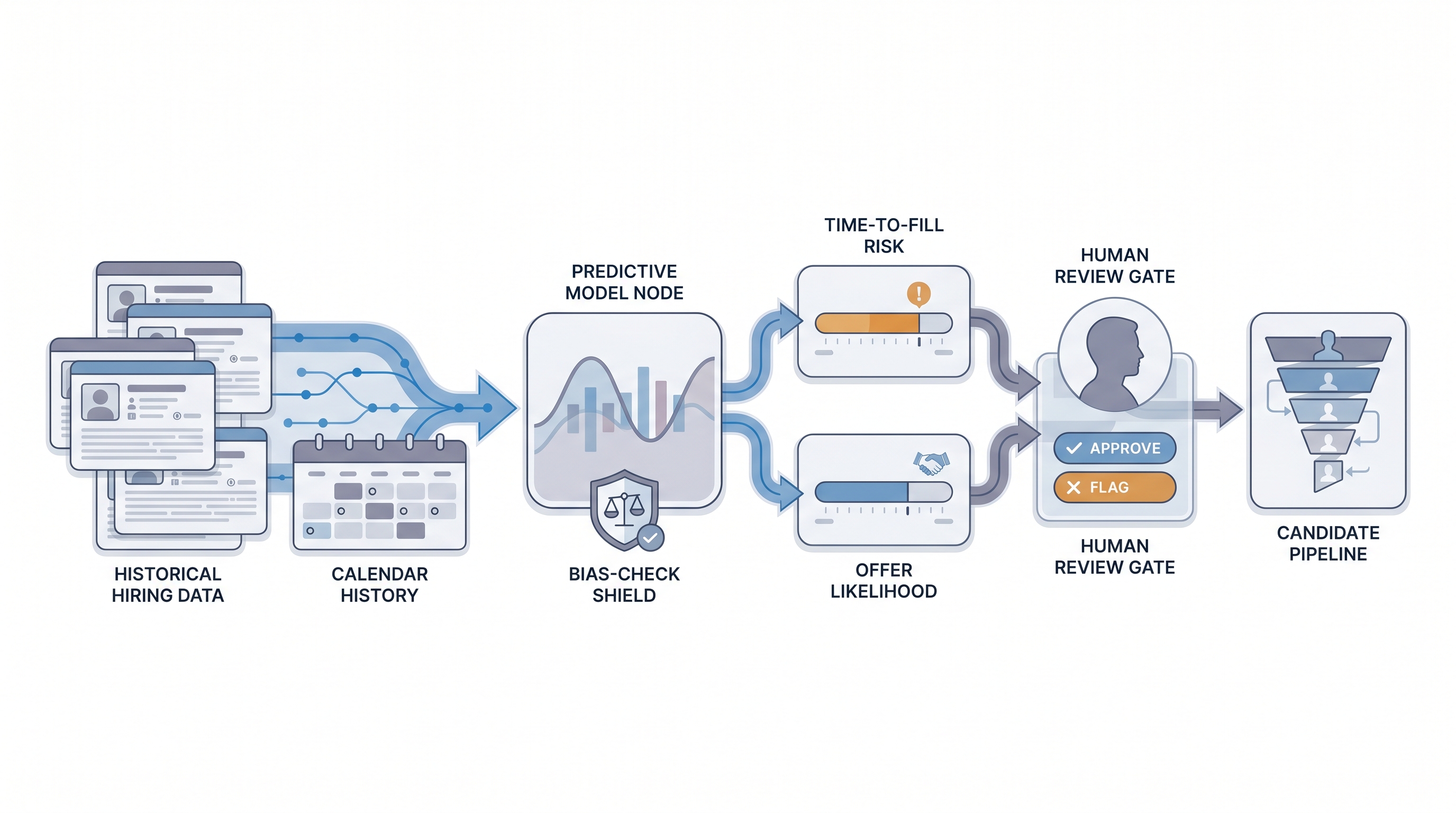

- What it means for you: Predictive models feed probability estimates into your ATS or sourcing tool: a time-to-fill risk flag, a candidate fit score, or an offer acceptance probability. Each output changes recruiter behavior only if the model is well-calibrated and the team trusts it enough to act on it.

- When it is a good time: When you have at least 12 to 18 months of clean ATS data with consistent outcome labels, a named owner for auditing model outputs for bias, and a human-in-the-loop review gate before any consequential hiring decision.

- How to use it: Run a group pass-rate check on any vendor model before deploying it. Compare selection (pass) rates across protected groups and flag any group whose rate falls below four-fifths (80%) of the highest group's rate, the threshold used in adverse impact analysis. Document the model in your records of processing under GDPR.

- How to get started: Start with time-to-fill forecasting using ATS data, then layer candidate scoring only after your descriptive analytics practice is reliable. Read explainable AI in hiring before committing to a vendor whose model outputs are opaque.

- What to watch for: Model drift as the labor market shifts from training conditions; bias amplification from historically skewed hiring decisions; vendor claims about prediction accuracy that are not backed by external audits or published test data.
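The group pass-rate check mentioned above can be sketched as follows. This assumes you can count, per group, how many candidates the model passed and how many it scored. The group labels, the counts, and the function names `selection_rates` and `four_fifths_flags` are hypothetical, and the 0.8 default is the conventional four-fifths threshold rather than anything specific to a vendor.

```python
def selection_rates(outcomes):
    """Map each group to its pass rate. outcomes: {group: (passed, total)}."""
    return {
        group: passed / total
        for group, (passed, total) in outcomes.items()
        if total > 0  # skip empty groups instead of dividing by zero
    }


def four_fifths_flags(outcomes, threshold=0.8):
    """Return {group: impact_ratio} for groups below the threshold.

    The impact ratio is a group's pass rate divided by the highest
    group's pass rate; a ratio under 0.8 is the usual warning sign.
    """
    rates = selection_rates(outcomes)
    if not rates:
        return {}
    top_rate = max(rates.values())
    if top_rate == 0:
        return {}  # nobody passed, so there is no ratio to compare
    return {
        group: rate / top_rate
        for group, rate in rates.items()
        if rate / top_rate < threshold
    }


# Hypothetical counts: (candidates passed by the model, candidates scored).
model_outcomes = {
    "group_a": (48, 120),  # 40% pass rate, the highest
    "group_b": (27, 90),   # 30% pass rate, ratio about 0.75
    "group_c": (38, 100),  # 38% pass rate, ratio about 0.95
}

flags = four_fifths_flags(model_outcomes)
```

Here only `group_b` is flagged, with an impact ratio of roughly 0.75. A flag is a prompt for the human review gate and the named audit owner to investigate, and small group sizes can make the ratio swing widely, so treat it as a screening signal.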

Where we talk about this

In AI with Michal live sessions, the AI in recruiting track covers building the data foundation for predictive models, including the ATS audit, metric definitions, and connecting hiring outcomes to HRIS retention data. The sourcing automation track goes deeper on using pipeline signals to act before reqs stall. Bring your current ATS and the three metrics your TA leader reports to leadership so feedback connects to what you already measure. Start at Workshops.

Around the web (opinions and rabbit holes)

Third-party creators move fast and tooling changes frequently. Treat these as starting points, not endorsements. Do not copy scripts that move candidate data to external platforms without reviewing your DPA first.

YouTube

- Search "predictive analytics in HR recruitment" filtered to the last 12 months. Vendor-sponsored tutorials dominate, so prioritize practitioner walkthroughs that show model validation steps alongside the demo.

- Search "people analytics attrition prediction" for adjacent content on workforce forecasting that shares the same data quality prerequisites as recruitment prediction models.

- Search "AI bias in hiring audit" to see how teams actually run adverse impact checks on scoring models before production deployment.

Reddit

- r/PeopleAnalytics has candid threads about what organizations can actually predict reliably versus what vendors claim. The skeptical replies are worth reading as carefully as the success stories.

- r/humanresources includes TA leaders discussing whether predictive scores have changed their day-to-day decisions or just added another number to ignore in a dashboard.

- r/recruiting has threads on specific vendor experiences where prediction accuracy turned out to be lower than marketed.

Quora

- "How do companies use predictive analytics in hiring?" collects varied practitioner answers across company sizes and maturity levels.

Predictive vs descriptive analytics in hiring

| Dimension | Descriptive | Predictive |
|---|---|---|
| Core question | What happened? | What will happen? |
| Typical output | Time-to-fill report, funnel conversion table | Risk flag, fit score, forecast date |
| Data requirement | Clean historical records | Clean records plus outcome labels |
| Risk | Misleading if data is dirty | Misleading plus bias amplification |
| Good starting point | Yes, start here | Only after descriptive is stable |

Related on this site

- Glossary: HR analytics in recruitment, Talent acquisition metrics, Explainable AI in hiring, Adverse impact, AI bias audit, Human-in-the-loop (HITL), Hiring funnel conversion rates, Time-to-fill, Sourcing funnel metrics

- Blog: AI sourcing tools for recruiters

- Live cohort: Workshops

- Membership: Become a member

- Course: Starting with AI: foundations in recruiting