Sourcing pass-through rate

The percentage of sourced candidates who advance from the initial sourcing stage to the next active hiring step, typically a recruiter phone screen or hiring manager submission, measuring how well sourcing criteria match actual hiring needs.

Michal Juhas · Last reviewed May 9, 2026

What is sourcing pass-through rate?

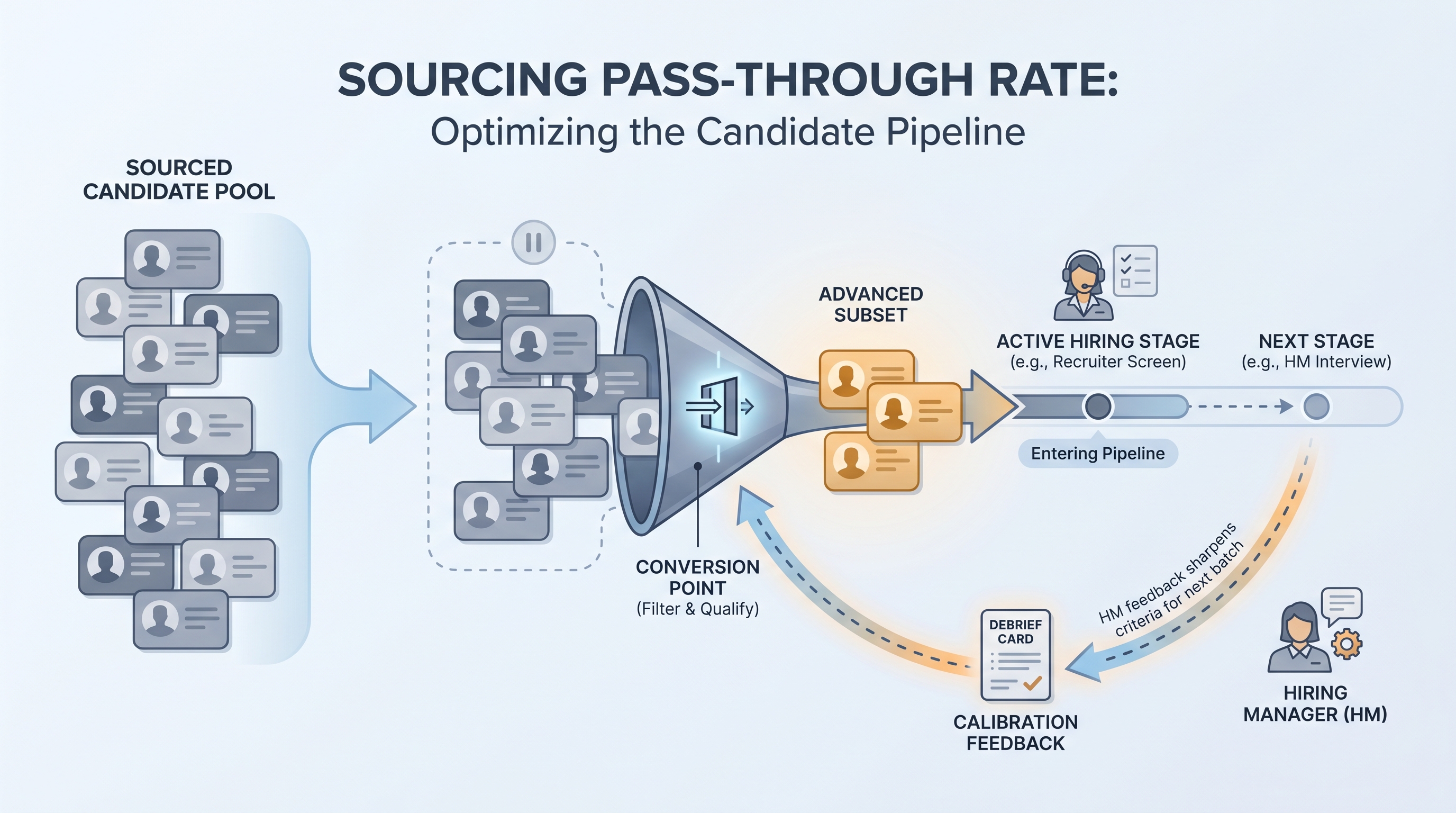

Sourcing pass-through rate is the percentage of candidate profiles a sourcer works that advance to the next active hiring step, typically a recruiter phone screen or hiring manager submission. It sits at the conversion point between sourcing activity and real pipeline contribution.

A team might source 80 profiles against a req in a week, advance 12 to recruiter screens, and log a 15 percent pass-through rate. That number reflects targeting accuracy, brief quality, and the calibration between sourcer and hiring manager, not just how many messages went out.
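The arithmetic is simple enough to sketch in a few lines of Python. This is a minimal sketch: the function name, the input checks, and the choice to return a percentage rather than a fraction are illustrative assumptions, not a standard API.

```python
def pass_through_rate(advanced: int, worked: int) -> float:
    """Percentage of worked profiles that advanced to the next active hiring step."""
    if worked <= 0:
        raise ValueError("worked must be positive")
    if not 0 <= advanced <= worked:
        raise ValueError("advanced must be between 0 and worked")
    return 100.0 * advanced / worked

# The weekly example: 12 of 80 sourced profiles reached a recruiter screen.
print(pass_through_rate(12, 80))            # 15.0
# A smaller batch: 7 of 60 profiles advanced to a phone screen.
print(round(pass_through_rate(7, 60), 1))   # 11.7
```

The denominator is every profile worked, not only the ones shortlisted, which is why a long shortlist that fails at the screen still pulls the rate down.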

The metric matters more as AI tools scale sourcing volume. When a sourcer can work ten times the profiles with AI assistance, raw profile count stops carrying signal. Pass-through rate is what separates productive scale from volume theater.

In practice

- A sourcer reviews 60 AI-suggested profiles against a software engineering req brief. She shortlists 10 for recruiter review and 7 advance to a phone screen, giving her an 11.7 percent pass-through rate for that batch. She tracks this weekly against her rolling 12 percent target and flags when it drops two weeks in a row.

- After a hiring manager rejects 8 of 10 submitted profiles in the first two weeks, the TA lead pulls pass-through data and finds the sourcing brief missed a critical requirement. The team adds the missing criteria to the intake template before the next batch is worked.

- A sourcing automation playbook includes a weekly alert: if pass-through drops below 8 percent, it triggers a 20-minute calibration debrief with the hiring manager before the sourcer continues the search.
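The weekly checks in these examples can be sketched as a small rule over a series of batches. This is a hedged sketch under stated assumptions: "drops two weeks in a row" is read as "below the 12 percent target for two consecutive weeks", the rolling target is treated as a fixed number, and the class name, flag names, and weekly counts are hypothetical.

```python
from dataclasses import dataclass

@dataclass
class WeeklyBatch:
    week: str       # hypothetical week label
    worked: int     # profiles reviewed against the req brief
    advanced: int   # profiles that reached a phone screen

def weekly_flags(batches, target=12.0, floor=8.0):
    """Return (week, rate, flags) for each batch, oldest week first.

    Assumed rules: "below_target_2w" fires once the rate has been under
    the target for two or more consecutive weeks; "calibration_debrief"
    fires whenever a single week falls under the floor.
    """
    results = []
    below_streak = 0
    for batch in batches:
        if batch.worked <= 0:
            raise ValueError(f"no profiles worked in {batch.week}")
        rate = 100.0 * batch.advanced / batch.worked
        below_streak = below_streak + 1 if rate < target else 0
        flags = []
        if below_streak >= 2:
            flags.append("below_target_2w")
        if rate < floor:
            flags.append("calibration_debrief")
        results.append((batch.week, rate, flags))
    return results

history = [
    WeeklyBatch("W1", 60, 7),  # 11.7%: first week under the 12% target
    WeeklyBatch("W2", 50, 5),  # 10.0%: second week under target
    WeeklyBatch("W3", 40, 3),  # 7.5%: also under the 8% floor
]
for week, rate, flags in weekly_flags(history):
    print(week, round(rate, 1), flags)
```

Keeping the streak counter separate from the floor check lets a single very bad week trigger the debrief immediately, while a mild dip has to persist before it is flagged.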

Quick read, then how hiring teams use it

This is for sourcers, TA leads, and TA ops practitioners who need a shared metric in pipeline reviews, ICP calibration calls, and sourcing automation debriefs. Skim the first section for a fast shared picture. Use the second when configuring dashboards or setting alert thresholds for AI-assisted sourcing.

Plain-language summary

- What it means for you: Sourcing pass-through rate answers "of the profiles I worked this week, how many actually became real conversations?" It tells you whether sourcing effort is producing pipeline or producing activity that fades at the first review.

- How you would use it: Pick one stage boundary (most teams use sourced-to-screened) and track it weekly by req family. When it drops for two consecutive weeks, investigate criteria alignment before adding more outreach volume.

- How to get started: Pull last month's sourcing activity and count how many profiles advanced to the next stage. Divide by the total number of profiles worked. If you do not have that data, that is the first fix: log every profile touched and its outcome in the ATS.

- When it is a good time: Before increasing AI-assisted sourcing volume, and after every major change to sourcing criteria, so you have a baseline to compare against.
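Tracking by req family from a month of logged profiles can be sketched as below. It assumes a hypothetical export with one row per profile touched (req family, week number, whether it advanced), and reads "drops for two consecutive weeks" as two week-over-week declines in a row; the helper names and counts are illustrative only.

```python
from collections import defaultdict

def make_rows(family, week, worked, advanced):
    """Build hypothetical per-profile log rows: (req_family, week, advanced)."""
    return [(family, week, i < advanced) for i in range(worked)]

def rates_by_family_week(rows):
    """Pass-through percentage for each (req_family, week) pair."""
    counts = defaultdict(lambda: [0, 0])  # key -> [advanced, worked]
    for family, week, advanced in rows:
        counts[(family, week)][1] += 1
        if advanced:
            counts[(family, week)][0] += 1
    return {key: 100.0 * adv / worked for key, (adv, worked) in counts.items()}

def dropped_two_weeks(rates, family):
    """True if the family's rate fell in each of its last two week-over-week steps."""
    weeks = sorted(week for fam, week in rates if fam == family)
    series = [rates[(family, week)] for week in weeks]
    return len(series) >= 3 and series[-3] > series[-2] > series[-1]

# One hypothetical month of logged profiles, sourced-to-screened boundary.
rows = (
    make_rows("backend", 1, 40, 6)    # 15.0%
    + make_rows("backend", 2, 40, 5)  # 12.5%
    + make_rows("backend", 3, 40, 4)  # 10.0%
    + make_rows("design", 3, 20, 3)   # 15.0%
)
rates = rates_by_family_week(rows)
print(dropped_two_weeks(rates, "backend"))  # True: investigate criteria first
```

Counting from per-profile rows, rather than from a pre-aggregated total, is what makes the "log every profile touched and its outcome" step pay off: the same log can be regrouped by any req family or week later.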

When you are running live reqs and tools

- What it means for you: At scale, pass-through rate is how you catch calibration failures before they waste a sourcer's week. A 5 percent pass-through on an AI-heavy sourcing run usually means the targeting criteria or the ICP input to the AI is off, not that the sourcer is underperforming.

- When it is a good time: After every ICP update, after adding a new sourcing channel, or after switching AI sourcing tools. Pass-through movement tells you which change mattered and in which direction.

- How to use it: Set a floor threshold in your weekly sourcing review (for example, under 10 percent sourced-to-screened triggers a calibration call). Log which ICP version and which tool variant each batch used so you can trace drops to a specific change.

- How to get started: Standardize stage labeling in your ATS so sourced profiles are distinguishable from inbound applicants. Without that separation, pass-through rate for outbound sourcing is contaminated by inbound conversion and the signal disappears.

- What to watch for: Pass-through rate and response rate moving in opposite directions. High response rate with low pass-through means candidates are replying but not qualifying: either the outreach is too broad or the brief is unclear. Both signals appear in the same sourcing funnel metrics view if you track them together.
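The weekly review described above (outbound-only filtering, per-batch ICP and tool tagging, a 10 percent floor, and the response-versus-pass-through check) can be sketched as one function. This is a hedged sketch: the record fields, the "sourced"/"inbound" labels, the 30 percent "high response" cutoff, and the batch counts are all hypothetical assumptions, not fields from any particular ATS or outreach tool.

```python
from collections import defaultdict
from typing import NamedTuple

class Touch(NamedTuple):
    origin: str        # "sourced" or "inbound" (hypothetical ATS stage label)
    icp_version: str   # ICP brief the batch was worked against
    tool: str          # AI sourcing tool variant that surfaced the profile
    contacted: bool
    replied: bool
    advanced: bool     # crossed the sourced-to-screened boundary

def review(touches, floor=10.0, high_response=30.0):
    """Per (icp_version, tool) batch: (response %, pass-through %, flags)."""
    groups = defaultdict(list)
    for t in touches:
        if t.origin == "sourced":  # keep inbound conversion out of the signal
            groups[(t.icp_version, t.tool)].append(t)
    report = {}
    for key, group in groups.items():
        contacted = sum(t.contacted for t in group)
        response = 100.0 * sum(t.replied for t in group) / contacted if contacted else 0.0
        pass_through = 100.0 * sum(t.advanced for t in group) / len(group)
        flags = []
        if pass_through < floor:
            flags.append("calibration_call")
            if response >= high_response:  # replying but not qualifying
                flags.append("replying_not_qualifying")
        report[key] = (round(response, 1), round(pass_through, 1), flags)
    return report

def make_touches(origin, icp, tool, n, contacted, replied, advanced):
    """Hypothetical batch where the first k profiles hit each outcome."""
    return [Touch(origin, icp, tool, i < contacted, i < replied, i < advanced)
            for i in range(n)]

touches = (
    make_touches("sourced", "icp-v1", "tool-a", 50, 40, 8, 7)
    + make_touches("sourced", "icp-v2", "tool-b", 50, 40, 16, 3)
    + make_touches("inbound", "icp-v2", "tool-b", 30, 0, 0, 12)
)
report = review(touches)
for key, row in report.items():
    print(key, row)
```

Grouping by the (ICP version, tool) pair is the design choice that lets a drop be traced to a specific change; the inbound rows never reach a group, so a strong inbound week cannot mask a weak outbound batch.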

Where we talk about this

On AI with Michal live sessions, sourcing pass-through rate comes up in sourcing automation blocks as the core feedback signal that keeps AI-assisted volume honest. Teams walk through how to calculate it from ATS exports and outreach tool data, and how to wire it into a calibration cadence with hiring managers so criteria improve in real time. The full room conversation happens at Workshops.

Around the web (opinions and rabbit holes)

Third-party creators move fast. Treat these as starting points, not endorsements, and double-check anything before you wire candidate data into it.

YouTube

- Recruiting Metrics That Matter (Recruiting Daily Advisor) covers conversion-focused reporting for teams building their first sourcing dashboard.

- Sourcing Metrics and KPIs for Recruiters (AIHR) walks through how sourcing stage conversion connects to hire quality and business outcomes.

- How to Measure Sourcing Effectiveness (SHRM) explains why internal funnel trends outperform industry benchmarks for diagnosing sourcing problems.

Reddit

- What sourcing metrics do you track? in r/recruiting has candid responses from working sourcers about which conversion numbers drive real decisions.

- How do you track pipeline metrics? in r/recruiting surfaces real-world approaches to funnel measurement and which data most managers act on.

- Sourcing automation and metrics in r/RecruitmentAgencies discusses how automation changes funnel baselines and what to watch when volume scales quickly.

Quora

- How do you measure the effectiveness of your recruiting sourcing? collects practitioner views on sourcing stage conversion and attribution (read critically for context-specificity).

Pass-through rate versus related sourcing metrics

| Metric | What it measures | When it warns you |
|---|---|---|
| Response rate | Share of outreach that gets a reply | Messaging or targeting problem |
| Pass-through rate | Profile-to-pipeline conversion | Calibration or ICP quality problem |
| Screened-to-submitted | Sourcer-to-HM conversion | Brief alignment or sourcer skill gap |
| Source-to-offer | End-to-end channel value | Strategic channel mix needs review |
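The four rows differ mainly in their numerator and denominator. A minimal sketch, assuming one funnel's counts are already available and that the recruiter phone screen is the pass-through boundary; the class and field names are hypothetical, and only the 80-profile, 12-screen figures come from the earlier example (the other counts are made up for illustration).

```python
from dataclasses import dataclass

@dataclass
class SourcingFunnel:
    profiles_worked: int
    outreach_sent: int
    replies: int
    screened: int    # the pass-through stage boundary used here
    submitted: int   # profiles sent on to the hiring manager
    offers: int

    def rates(self):
        """Each metric as a percentage, or None when its denominator is zero."""
        def pct(num, den):
            return round(100.0 * num / den, 1) if den else None
        return {
            "response_rate": pct(self.replies, self.outreach_sent),
            "pass_through_rate": pct(self.screened, self.profiles_worked),
            "screened_to_submitted": pct(self.submitted, self.screened),
            "source_to_offer": pct(self.offers, self.profiles_worked),
        }

funnel = SourcingFunnel(profiles_worked=80, outreach_sent=60, replies=18,
                        screened=12, submitted=6, offers=2)
print(funnel.rates())
```

Because response rate divides by outreach sent while pass-through divides by profiles worked, the two can move in opposite directions on the same batch, which is exactly the divergence the table's "When it warns you" column relies on.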

Related on this site

- Glossary: Sourcing funnel metrics, Talent acquisition metrics, Time to fill, AI sourcing tools, Scorecard, Candidate data enrichment

- Blog: AI sourcing tools for recruiters

- Guides: Sourcers

- Course: Starting with AI: the foundations in recruiting

- Live cohort: Workshops

- Membership: Become a member