Candidate assessment test

A standardized evaluation delivered to job applicants to measure job-relevant competencies, producing scored data that supports consistent and defensible hiring decisions before a final offer is made.

Michal Juhas · Last reviewed May 10, 2026

What is a candidate assessment test?

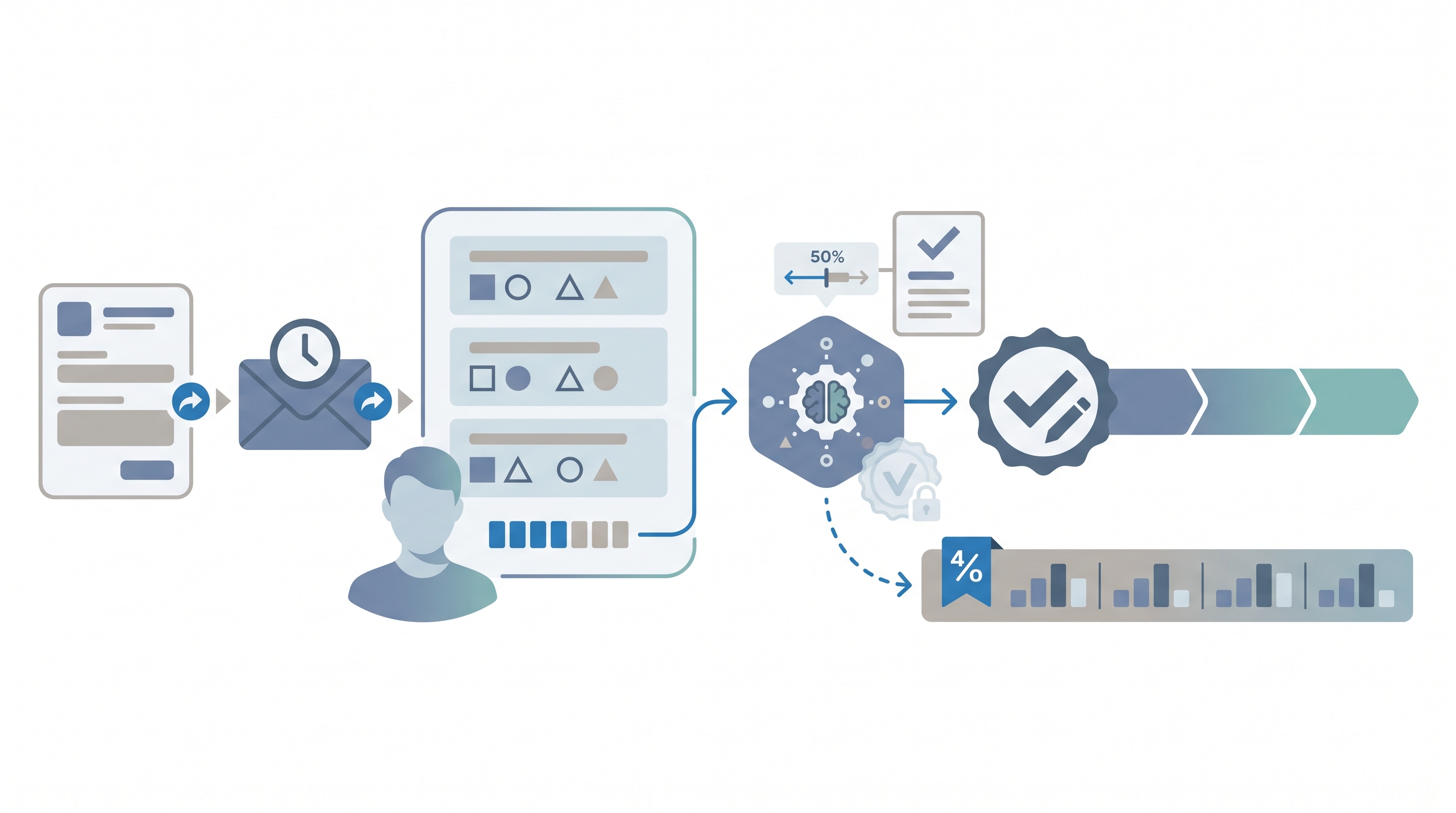

A candidate assessment test is a standardized evaluation delivered to job applicants to produce scored data beyond what a resume or screening call provides. Formats range from short timed cognitive exercises and realistic work samples to situational judgment tests and validated personality inventories.

The score adds value only when the instrument has been validated against job performance criteria for your specific role type and candidate population, not just against a vendor's research sample. Teams that skip this step often discover the gap only after a compliance review flags an unexplained pass-rate difference across candidate groups.

In practice

- A recruiter running volume hiring for a contact center sends a situational judgment test to every applicant after the resume screen, reviews group pass rates before setting the cut score, and treats the result as one input on the scorecard, not the only gate to the next round.

- A TA leader evaluating a new vendor requests the technical validity manual and discovers the tool was normed on software engineers, making the claimed predictive validity irrelevant for the open customer support req.

- An HRBP reviewing a failed hire cohort finds no one tracked demographic pass rates through the online skills screen, leaving the team unable to answer an internal equity audit.

Quick read, then how hiring teams use it

This is for recruiters, sourcers, TA, and HR partners who need the same vocabulary in vendor briefings, debrief rooms, and policy reviews. Skim the first section for a fast shared picture. Use the second when deciding how an assessment layer fits into a live screening workflow.

Plain-language summary

- What it means for you: A candidate assessment test is a scored task or inventory every applicant in the same req completes under the same conditions, adding a consistent data point before anyone meets in person.

- How you would use it: Pick one instrument that maps to your top two job requirements, send it at the same stage to every candidate, and review group pass rates before you set a cut score. Never use a single score as the only gate to the next round.

- How to get started: Ask the vendor for a validity report that names the job family, sample size, and demographic group differences. If they cannot supply one for your role type, do not deploy until they can.

- When it is a good time: After you have a scorecard that names the competencies you are measuring, after legal has reviewed the lawful basis, and after you have a process for accessibility requests and GDPR deletion.
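Reviewing group pass rates before setting a cut score comes down to simple arithmetic. The sketch below is a minimal illustration, not a compliance tool: it computes each group's pass rate at a candidate cut score, divides it by the highest group's rate, and flags any group whose ratio falls below 0.8, the four-fifths rule of thumb. The group labels, scores, and function names are all hypothetical, and small samples make these ratios noisy, so treat a flag as a prompt for review rather than a finding.

```python
from collections import defaultdict


def pass_rates(results, cut_score):
    """Return {group: share of candidates scoring at or above cut_score}."""
    totals = defaultdict(int)
    passed = defaultdict(int)
    for group, score in results:
        totals[group] += 1
        if score >= cut_score:
            passed[group] += 1
    return {g: passed[g] / totals[g] for g in totals}


def impact_ratios(rates):
    """Divide each group's pass rate by the highest group's pass rate."""
    top = max(rates.values())
    if top == 0:
        # Nobody passed at this cut score, so the ratio is undefined.
        return {g: None for g in rates}
    return {g: r / top for g, r in rates.items()}


def flag_groups(results, cut_score, threshold=0.8):
    """List groups whose impact ratio is below the threshold (0.8 = four-fifths)."""
    ratios = impact_ratios(pass_rates(results, cut_score))
    return sorted(g for g, r in ratios.items() if r is not None and r < threshold)


# Hypothetical pilot data: (group label, assessment score). Illustrative only.
results = (
    [("A", s) for s in (82, 75, 71, 68, 64, 90, 77, 59, 73, 66)]
    + [("B", s) for s in (70, 62, 61, 81, 55, 67, 49, 72, 60, 63)]
)

# Compare two candidate cut scores before committing to either.
for cut in (60, 70):
    print(cut, pass_rates(results, cut), flag_groups(results, cut))
```

With these made-up scores, a cut of 60 flags no group, while a cut of 70 flags group B. The same instrument can therefore look fine or problematic depending on where the line is drawn, which is why the check belongs before the cut score is set.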

When you are running live reqs and tools

- What it means for you: An AI-scored candidate assessment adds consistent signal at volume, but it also adds model risk: the algorithm can inherit bias from its training data, fail silently, and produce different group pass rates at your specific cut score.

- When it is a good time: When the same competency must be evaluated consistently across fifty or more candidates in a single cycle, when your interview panel is stretched, and when you have a compliance owner who can run adverse impact reports before each cohort launches.

- How to use it: Integrate results into your ATS through a documented API connection, map each score to a scorecard criterion, and apply a human-in-the-loop review before any automated shortlisting decision reaches a candidate. Log which tool version scored each batch.

- How to get started: Run a parallel pilot first: have your panel independently score ten candidates, without seeing the tool output, and compare the two sets of scores. If the correlation is low, the instrument may not be measuring what you think it is. Complete an AI bias audit before expanding to full-cohort scoring.

- What to watch for: Silent adverse impact accumulating before anyone runs the numbers, vendors changing model versions mid-campaign without notice, and GDPR deletion requests the assessment platform cannot fulfill because scores sit outside your retention policy.
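The parallel pilot comparison can be sketched in a few lines. This is a minimal example that assumes the panel scores on a 1 to 5 scale and the tool on 0 to 100; both lists are invented for illustration, and `pearson_r` is a hypothetical helper name. With only ten candidates the estimate is noisy, so read it as a sanity check rather than a validity study.

```python
from math import sqrt


def pearson_r(xs, ys):
    """Pearson correlation between two equal-length lists of scores."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two equal-length lists with at least two scores")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("scores must vary to compute a correlation")
    return cov / sqrt(var_x * var_y)


# Hypothetical pilot: the same ten candidates, scored independently.
panel_scores = [3.5, 4.0, 2.0, 4.5, 3.0, 2.5, 5.0, 3.0, 4.0, 1.5]  # panel, 1-5
tool_scores = [62, 71, 40, 80, 58, 49, 88, 51, 69, 35]  # tool, 0-100

r = pearson_r(panel_scores, tool_scores)
print(f"Pearson r = {r:.2f} across {len(panel_scores)} candidates")
```

Keep the candidate order identical in both lists, and record which tool version produced the scores so that a later model update does not silently invalidate the comparison.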

Where we talk about this

On AI with Michal live sessions, candidate assessment tests come up across two tracks. The AI in recruiting block covers how AI scoring layers change candidate experience, what valid assessment review looks like in practice, and how to connect assessment data into ATS pipelines without manual copy-paste. The sourcing automation block covers triggering assessment invites from ATS stage changes and routing results back through an API. Start at Workshops and bring the name of any tool you are currently evaluating.

Around the web (opinions and rabbit holes)

Third-party creators move fast. Treat these as starting points, not endorsements, and double-check anything before you wire candidate data.

YouTube

- Search "pre-employment assessment test validity study IO psychology" for practitioner and academic explainers on what criterion validity means and what vendor demos typically leave out.

- Search "work sample test candidate assessment recruiter" for practitioner walkthroughs of work-sample and situational judgment test design built for TA teams without an IO psychology background.

- Search "adverse impact four-fifths rule pre-employment testing EEOC" for compliance-focused overviews of when a cut score creates legal exposure.

Reddit

- r/recruiting has recurring threads on assessment vendor shortlists, candidate drop-off rates from testing, and candid opinions you will not find on paid review sites.

- r/humanresources covers pre-employment test compliance, adverse impact questions, and GDPR concerns from HR practitioners rather than recruiters.

Quora

- Search Quora for "candidate assessment test hiring" to find practitioner opinions across company sizes and role types, useful as a first-pass scan before vendor demos (verify claims independently before buying).

Candidate assessment test versus unstructured screening

| Dimension | Unstructured screening | Candidate assessment test |
|---|---|---|
| Predictive validity | Low | High when role-validated |
| Consistency | Variable by interviewer | Standardized across candidates |
| Adverse impact risk | Present (halo, affinity bias) | Present (must be measured) |
| Candidate time cost | 30 to 60 minutes | 20 to 90 minutes |
| GDPR Article 22 risk | Low | High if scoring is automated |

Related on this site

- Glossary: Employment assessment test, Hiring assessment test, Candidate assessment tools, Adverse impact, AI bias audit, Async screening, Human-in-the-loop (HITL), Scorecard, Personality test for employment

- Blog: AI sourcing tools for recruiters

- Live cohort: Workshops

- Course: Starting with AI: the foundations in recruiting

- Membership: Become a member