Interviewing platform

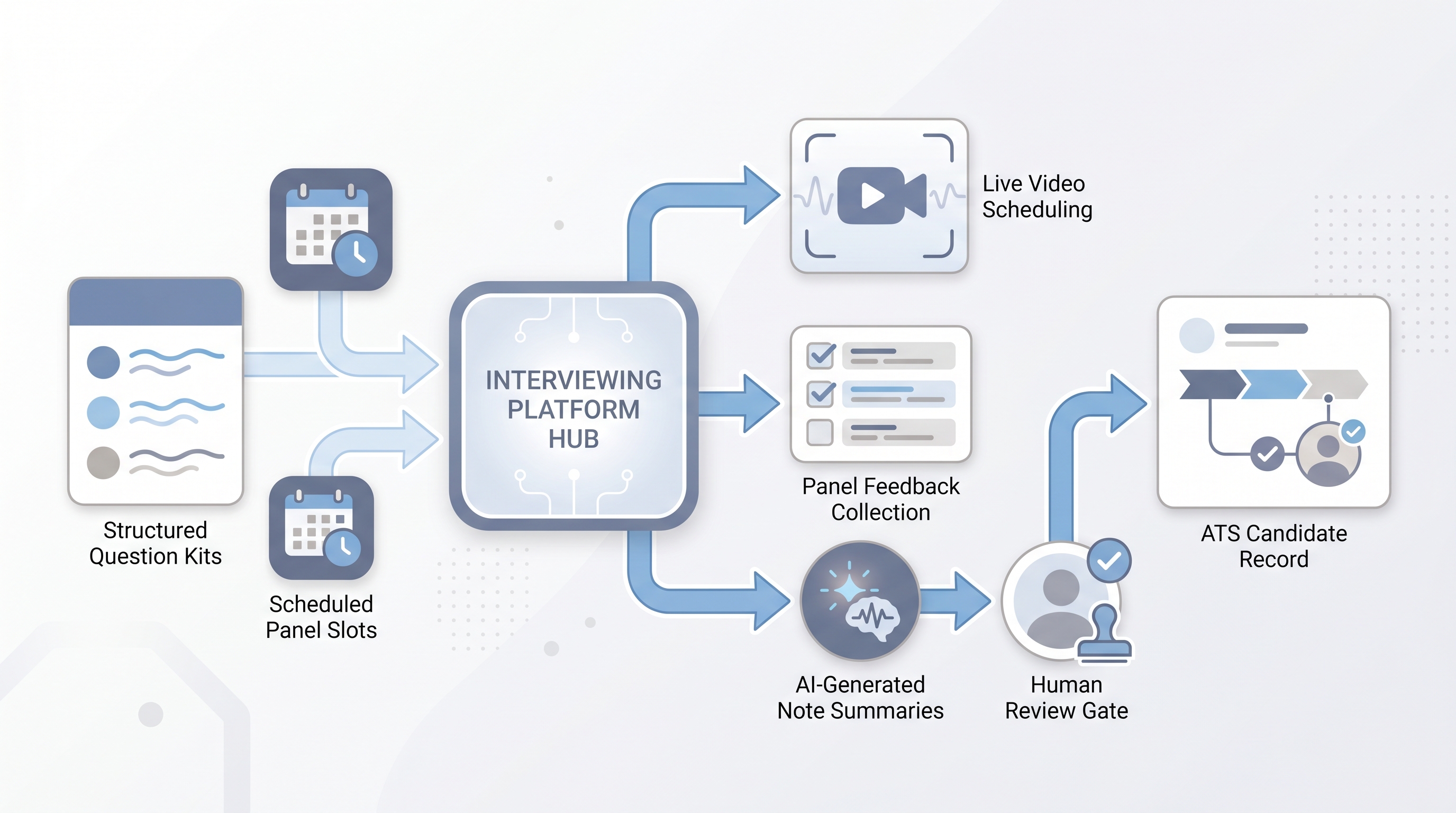

Software that centralizes interview scheduling, structured question kits, live or async video capture, and panel feedback into one tool, sitting between sourcing and the offer stage.

Michal Juhas · Last reviewed May 9, 2026

What is an interviewing platform?

An interviewing platform is software built for the interview stage of hiring: scheduling panels, delivering structured question kits to interviewers, capturing notes or video during calls, and collecting scored feedback in one place. It connects to the ATS so candidate records update without manual re-entry.

The term covers a range of products. Some specialize in video capture, like HireVue and Spark Hire. Others add AI notetaking to live calls, like BrightHire and Metaview. Many ATS platforms (Greenhouse, Lever, Ashby) include native interview kits and panel scheduling that handle most of what a standalone tool does. Whether you need a separate platform or can stay inside your ATS depends on interview volume, rubric complexity, and whether AI-generated note summaries are part of the requirement.

In practice

- A TA lead at a 300-person company replaces ad-hoc meeting links with an interviewing platform so every panel gets the same question bank, a scoring rubric per question, and a consolidated feedback form. The hiring manager sees every panelist's scores before the debrief, not just the notes of whoever typed first.

- Sourcers and recruiters call it "the interview tool" in standups. Candidates usually encounter it as a scheduling link or a pre-screen prompt, not by its platform name.

- Finance and legal refer to it as "the recording vendor" when reviewing data processing agreements, because their concern is where transcripts land and for how long, not how the rubric is structured.

Quick read, then how hiring teams use it

This is for recruiters, sourcers, TA, and HR partners who need shared vocabulary for vendor evaluations, debrief reviews, and compliance conversations. Skim the first section for a fast picture. Use the second when you are deciding how it fits into your ATS, interview process, and data governance setup.

Plain-language summary

- What it means for you: A single place where panelists get their questions, record scores, and leave feedback, instead of one person emailing a doc, another filling a form, and a third remembering to update the ATS.

- How you would use it: Pick a role where panel feedback is inconsistent. Configure question kits with a rubric. Send panelists a link. Compare feedback completion rates and debrief quality before and after.

- How to get started: Before opening a vendor portal, write the three to five questions you want every panelist to answer for the role. Map each to a competency. Then decide whether your ATS can hold that structure or a separate tool is genuinely needed.

- When it is a good time: When structured interviewing is inconsistent across panels, when scheduling runs through email chains, or when hiring managers ask to see all scores before the debrief.
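The before-and-after comparison in "How you would use it" comes down to simple arithmetic. Here is a minimal sketch in Python, assuming you can export one row per panelist per interview. The `PanelFeedback` shape, its field names, and the sample numbers are hypothetical and not tied to any vendor's export format. In this sketch a feedback slot counts as complete only when the form was submitted and every rubric question was scored.

```python
from dataclasses import dataclass


@dataclass
class PanelFeedback:
    """One panelist's feedback slot for one interview (hypothetical record shape)."""
    panelist: str
    submitted: bool        # did the panelist submit the form at all?
    questions_scored: int  # rubric questions the panelist actually scored
    questions_total: int   # rubric questions in the kit


def completion_rate(slots: list[PanelFeedback]) -> float:
    """Share of slots submitted with every rubric question scored."""
    if not slots:
        return 0.0
    complete = sum(
        1 for s in slots
        if s.submitted and s.questions_scored == s.questions_total
    )
    return complete / len(slots)


# Made-up numbers for illustration only.
before = [
    PanelFeedback("panelist_a", True, 5, 5),
    PanelFeedback("panelist_b", True, 2, 5),   # submitted, but skipped part of the rubric
    PanelFeedback("panelist_c", False, 0, 5),  # never submitted
    PanelFeedback("panelist_d", True, 5, 5),
]
after = [
    PanelFeedback("panelist_a", True, 5, 5),
    PanelFeedback("panelist_b", True, 5, 5),
    PanelFeedback("panelist_c", True, 4, 5),   # still one question short
    PanelFeedback("panelist_d", True, 5, 5),
]

print(f"before: {completion_rate(before):.0%}, after: {completion_rate(after):.0%}")
```

Treating partially scored forms as incomplete is a deliberate choice here: a form that skips rubric questions gives the debrief the same gap as a missing form. Loosen that rule if your team accepts partial scoring.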

When you are running live reqs and tools

- What it means for you: An interviewing platform enforces structure at scale: the same questions, the same rubric, the same feedback form, logged to the ATS without manual entry. That makes debrief data usable, because it no longer depends on notes reconstructed from memory.

- When it is a good time: After your scorecard is stable and your panelists trust the rubric. Deploying an interview platform on an unstable process automates the inconsistency rather than fixing it.

- How to use it: Wire it to your ATS so stage changes, feedback submissions, and calendar confirmations flow automatically. Log which question version was active for each cohort. Keep AI-generated notes behind a human-in-the-loop review gate before anything reaches the official ATS record.

- How to get started: Audit your current interview process on one req: how are questions delivered, how is feedback collected, where does it live? Map that to what the platform replaces versus what needs a policy decision first: recording consent, transcript storage, and AI scoring.

- What to watch for: Low feedback form completion (panelists skipping the rubric), recording consent gaps across jurisdictions, AI scoring features that have not been through a bias audit, and data retention policies that conflict with your DPA.
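The human-in-the-loop review gate and the question-version logging described under "How to use it" can be sketched as a small holding queue. This is an illustration under stated assumptions, not any vendor's or ATS's actual API: `AiNote`, `ReviewGate`, the field names, and the sample values are all hypothetical, and the `ats_record` list is only a stand-in for whatever write call your ATS integration exposes.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass
class AiNote:
    """AI-generated interview summary awaiting human review (hypothetical shape)."""
    candidate_id: str
    req_id: str
    question_kit_version: str  # which question version was active for this cohort
    summary: str
    reviewed_by: Optional[str] = None
    reviewed_at: Optional[datetime] = None


class ReviewGate:
    """Holds AI notes until a named human approves them."""

    def __init__(self) -> None:
        self.pending: list[AiNote] = []
        self.ats_record: list[AiNote] = []  # stand-in for the official ATS record

    def submit(self, note: AiNote) -> None:
        """AI output always lands in the pending queue, never in the ATS record."""
        self.pending.append(note)

    def approve(self, note: AiNote, reviewer: str,
                edited_summary: Optional[str] = None) -> AiNote:
        """Move a pending note to the ATS record once a reviewer signs off."""
        if not reviewer.strip():
            raise ValueError("a named reviewer is required")
        self.pending.remove(note)  # raises ValueError if the note was never submitted
        if edited_summary is not None:
            note.summary = edited_summary  # reviewer corrections replace the AI text
        note.reviewed_by = reviewer
        note.reviewed_at = datetime.now(timezone.utc)
        self.ats_record.append(note)
        return note


# Made-up example values.
gate = ReviewGate()
note = AiNote("cand-001", "req-42", "kit-v3", "Draft summary produced by the notetaker.")
gate.submit(note)
held_back = len(gate.ats_record) == 0  # nothing reaches the record before review
gate.approve(note, reviewer="recruiter@example.com")
print(held_back, gate.ats_record[0].question_kit_version, gate.ats_record[0].reviewed_by)
```

Storing the kit version and the reviewer on the note itself means each record that reaches the ATS carries the question version the cohort saw and the name of the person who signed off.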

Where we talk about this

Live AI in recruiting sessions at AI with Michal include interview platform evaluation as a working exercise: which ATS-native tools are sufficient, when a dedicated platform is worth the vendor overhead, and how AI note summaries fit into a human-in-the-loop review workflow. Bring your current interview setup and real compliance questions to Workshops so the answer is grounded in your stack, not a demo environment.

Around the web (opinions and rabbit holes)

Third-party creators move fast and tooling changes often. Treat these as starting points, not endorsements, and double-check anything before you wire candidate data.

YouTube

- Search "structured interview setup recruiting" and filter by the past year to find independent practitioner walkthroughs that show real question banks and rubric design, not vendor marketing demos.

- Search "AI interview notetaker comparison" for honest assessments of BrightHire, Metaview, and similar tools, including what happens when candidates object to recording.

Reddit

- r/recruiting carries regular threads on interviewing platform decisions, including which ATS-native kits are "good enough" and when teams actually buy a dedicated tool.

- r/humanresources covers the compliance and DPA side more deeply, useful when the legal review is the real bottleneck in your evaluation.

Quora

- "What is the best video interview platform for hiring?" collects a range of practitioner answers across company sizes (read critically; some are vendor-adjacent or dated).

Interview platform versus ATS-native interview tools

| Factor | Dedicated interview platform | ATS-native interview kit |
|---|---|---|
| Question bank depth | Deep, role-library level | Usually per-req or template |
| AI notetaking | Often native or integrated | Requires a separate add-on |
| Compliance tooling | Dedicated consent and DPA modules | Varies widely by ATS |
| Panelist experience | Separate login, purpose-built UI | Same login as ATS, less focused |
| Setup overhead | Higher (vendor onboarding, DPA review) | Lower (already inside your ATS) |

Related on this site

- Glossary: Async screening, One-way video interview, Scorecard, Human-in-the-loop (HITL), Applicant tracking software, AI hiring software, Explainable AI in hiring

- Blog: AI candidate screening

- Guides: Talent acquisition managers

- Course: Starting with AI: the foundations in recruiting

- Live cohort: Workshops

- Membership: Become a member