AI hiring software

Software that uses artificial intelligence to automate or augment specific recruiting tasks, from sourcing and resume screening to candidate communication and pipeline analytics.

Michal Juhas · Last reviewed May 3, 2026

What is AI hiring software?

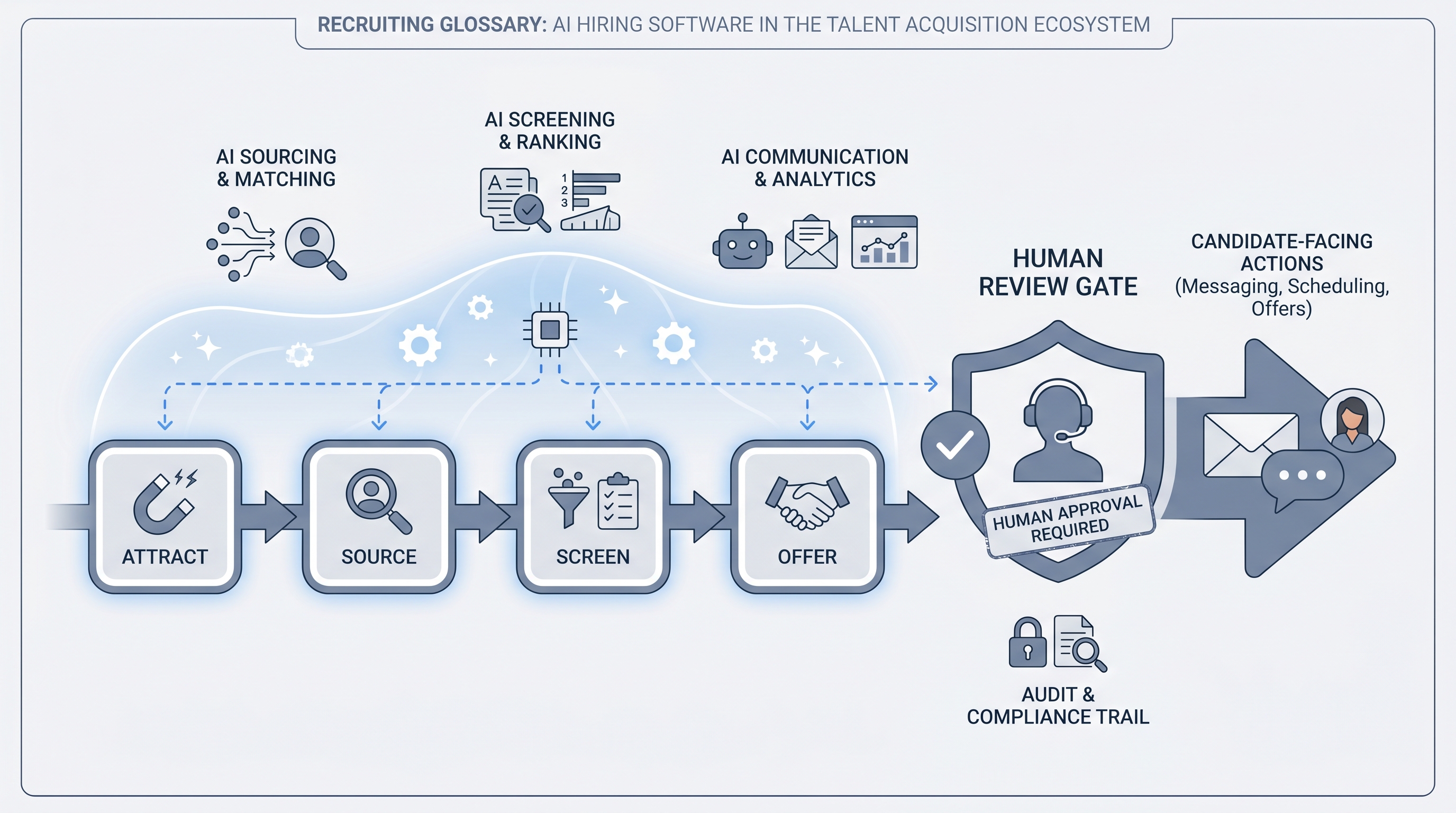

AI hiring software is the category of tools that use artificial intelligence to automate or augment specific steps in the recruiting process. The category spans sourcing tools that find and rank profiles, screening tools that parse CVs and flag likely-fit candidates, communication tools that draft outreach and follow-up messages, and analytics layers that report on pipeline health and talent acquisition metrics. Most AI hiring software runs as a layer on top of an existing applicant tracking system rather than replacing it. The defining feature is that the tool generates, suggests, or filters based on model inference, not just rule-based routing.

In practice

- A sourcer running a 30-person engineering search says "the AI hiring software is surfacing in 20 minutes the profiles I would have found after two days of Boolean searching," meaning the tool's semantic matching is doing early-funnel work that used to be manual.

- A TA lead reviewing a vendor renewal says "we are paying for AI hiring software but our team is still writing all the outreach from scratch," meaning the AI feature was enabled but never calibrated or adopted.

- A compliance officer asking "which AI hiring software scored this candidate, and when" is asking an accountability question most teams cannot answer without explicit logging of which tool and model version generated each suggestion.

Quick read, then how hiring teams use it

This is for recruiters, sourcers, TA leads, and HRBPs who need a shared vocabulary for tool evaluation, procurement conversations, and compliance reviews. Skim the first section for a fast shared picture. Use the second when you are buying, deploying, or auditing a live AI hiring tool.

Plain-language summary

- What it means for you: AI hiring software is any tool that uses a model to find, score, write, or analyze at some stage of your hiring pipeline, rather than just routing records according to rules you set.

- How you would use it: Pick the one stage that costs the most recruiter time per week and ask whether the tool you have there is doing AI work or just rules-based filtering. That gap is where an AI hiring tool adds the most value.

- How to get started: Map your current stack by stage. For each tool, note whether the AI feature is active, calibrated, and reviewed by a human before it affects a candidate. Most teams discover one or two active AI features no one is monitoring.

- When it is a good time: Before a new tool purchase, after a quarter where a hiring bottleneck traced back to a manual step that an AI layer could have handled, or when a compliance review surfaces a gap in candidate decision logging.

When you are running live reqs and tools

- What it means for you: Every AI hiring tool that generates a score, summary, or message is making a model-based inference. That inference can contain bias, errors, or outdated assumptions, regardless of how confident the output looks.

- When it is a good time: Before you let an AI hiring tool's output influence who advances past any funnel gate without human review. That is where bias risk, GDPR rules on automated decision-making (Article 22), and data residency risk converge.

- How to use it: Log the model version and prompt hash for every AI output that influences a candidate decision. Add a review gate before any AI-generated message goes out, and before any AI-generated score feeds a shortlist. Check those logs monthly, not just at procurement time.

- How to get started: Pull a one-line audit of each AI feature your team currently uses: which model runs it, who last reviewed the outputs, and whether the vendor has updated the model in the last six months without notifying you.

- What to watch for: Vendors that fold AI into an existing tool at renewal without reopening the DPA. Integration changes that silently alter how candidate scores are calculated. AI-generated summaries that get copied into rejection decisions without a human reading the source CV.
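The logging and review-gate steps in the list above can be sketched in a few lines of Python. This is a minimal illustration, not a vendor integration: every name in it (`AIOutputRecord`, `log_ai_output`, `approve`, `passes_review_gate`, `unreviewed`) is hypothetical, the log is an in-memory list standing in for whatever store your team uses, and SHA-256 is simply one reasonable choice for the prompt hash.

```python
import hashlib
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import List, Optional

# All names below are hypothetical and chosen for illustration only;
# they do not come from any vendor's API.


def prompt_hash(prompt: str) -> str:
    """SHA-256 of the prompt text, so the exact prompt can be matched later."""
    return hashlib.sha256(prompt.encode("utf-8")).hexdigest()


@dataclass
class AIOutputRecord:
    candidate_id: str
    tool: str
    model_version: str
    prompt_sha256: str
    output_kind: str                   # e.g. "score", "summary", "message"
    created_at: str                    # ISO 8601 timestamp in UTC
    reviewed_by: Optional[str] = None  # stays None until a human signs off


def log_ai_output(log: List[AIOutputRecord], candidate_id: str, tool: str,
                  model_version: str, prompt: str,
                  output_kind: str) -> AIOutputRecord:
    """Append one record per AI output that could influence a candidate decision."""
    record = AIOutputRecord(
        candidate_id=candidate_id,
        tool=tool,
        model_version=model_version,
        prompt_sha256=prompt_hash(prompt),
        output_kind=output_kind,
        created_at=datetime.now(timezone.utc).isoformat(),
    )
    log.append(record)
    return record


def approve(record: AIOutputRecord, reviewer: str) -> None:
    """Record which human reviewed the output."""
    record.reviewed_by = reviewer


def passes_review_gate(record: AIOutputRecord) -> bool:
    """Gate: an output may be sent or feed a shortlist only after human review."""
    return record.reviewed_by is not None


def unreviewed(log: List[AIOutputRecord]) -> List[AIOutputRecord]:
    """Records still waiting for review, for the monthly log check."""
    return [r for r in log if r.reviewed_by is None]
```

In this sketch, a message or score would be released only when `passes_review_gate(record)` returns true, and the monthly check amounts to reading through whatever `unreviewed(log)` returns. Storing the hash rather than the prompt text keeps candidate data out of the log while still letting you confirm which prompt produced a given output.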

Where we talk about this

In AI with Michal live sessions, the AI hiring software conversation runs through both tracks. AI in recruiting workshops cover tool evaluation, AI feature claims, what questions to ask vendors, and where human gates belong in the pipeline. Sourcing automation sessions go deeper on the integration layer: how AI tools hand off data, which fields break across APIs, and what fails when a vendor updates a model. Bring your current stack and the AI feature you are unsure about to Workshops for a room-tested reality check.

Around the web (opinions and rabbit holes)

Third-party creators cover AI hiring software at high volume. Treat these as starting points, not endorsements, and verify compliance postures and feature claims directly with vendors before committing to a contract.

YouTube

- AI hiring software review and comparison pulls recent practitioner walkthroughs of AI tools across sourcing, screening, and scheduling stages.

- How recruiters use AI tools in hiring shows real workflows from sourcers and TA leads who have deployed AI hiring software in production.

- AI recruiting software bias and compliance covers risk and audit perspectives, useful before any procurement decision.

Reddit

- AI hiring tools discussion in r/recruiting collects candid practitioner views on which AI tools deliver in production and which disappoint after the demo.

- ATS with AI features in r/recruiting surfaces threads where recruiters compare using an ATS's AI add-on with buying a standalone tool.

- AI screening tools and bias in r/humanresources includes HR and compliance perspectives on risk management for automated decision tools.

Quora

- What is the best AI software for hiring? collects practitioner recommendations across company sizes and industries (quality varies; read critically and verify with recent sources).

AI hiring software vs. traditional recruiting software

| Capability | AI hiring software | Traditional (rules-based) tools |
|---|---|---|
| Candidate matching | Intent and context via semantic models | Keyword or criteria filter |
| Message drafting | Generates context-aware drafts | Template fill-in or manual writing |
| Resume screening | Language model extraction and context scoring | Parser plus configurable rules |
| Bias risk | Model bias from training data; needs audit | Rule bias if criteria are discriminatory |
| Compliance work | Needs logged model versions and opt-out paths | Standard DPA and data residency |
| Setup overhead | Calibration, prompt governance, review gates | Configuration and user training |

Related on this site

- Glossary: Applicant tracking software, Recruiter AI, AI bias audit, Human-in-the-loop, Resume parsing, Semantic search, Hallucination, Hiring tools

- Blog: AI sourcing tools for recruiters

- Guides: Sourcers

- Workshops: AI in recruiting

- Membership: Become a member

- Courses: Starting with AI: the foundations in recruiting