Offer decline analysis in hiring

A structured practice of collecting and reviewing why candidates turn down job offers, identifying patterns across roles and departments, and using those findings to improve compensation, process speed, and candidate experience before the next offer cycle.

Michal Juhas · Last reviewed May 9, 2026

What is offer decline analysis?

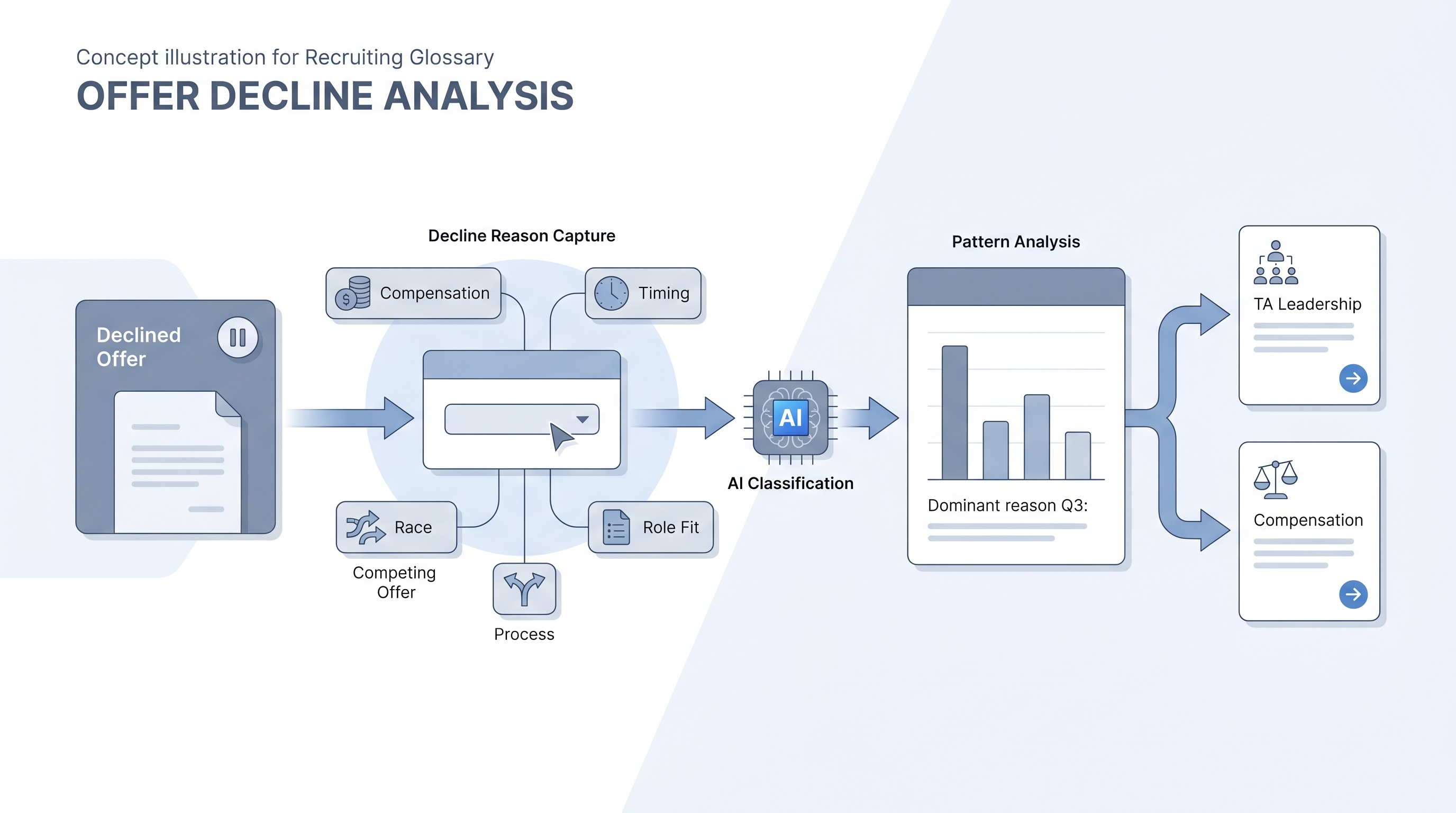

Offer decline analysis is the practice of collecting and reviewing why candidates turn down job offers after they have been extended. Most TA teams track their offer acceptance rate as a number. Fewer track the reasons behind each decline, and fewer still aggregate those reasons across quarters to find patterns that repeat.

The practice is straightforward: after a candidate declines, a recruiter logs the stated reason, typically after a short call or via a two-question survey. Over time, those records show whether declines cluster around compensation, process length, a specific hiring manager, or a role description that drifted from the actual job by the time the offer arrived.
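
The logging step described above can be sketched as a small record type plus a tally. This is a minimal sketch, assuming a hypothetical `DeclineRecord` with `req_id`, `department`, `hiring_manager` and `reason` fields. Your ATS or spreadsheet columns will differ, and the names and rows are illustrative only. Counting by (hiring manager, reason) or (department, reason) is one simple way to make the clusters the paragraph mentions visible.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class DeclineRecord:
    # Hypothetical fields; map them to whatever your ATS or spreadsheet stores.
    req_id: str
    department: str
    hiring_manager: str
    reason: str  # the stated reason, logged after the call or survey


def cluster_by(records, field):
    """Count declines per (field value, reason) pair so repeating patterns surface."""
    return Counter((getattr(r, field), r.reason) for r in records)


# Illustrative rows, not real data.
records = [
    DeclineRecord("R1", "Engineering", "Ana", "compensation"),
    DeclineRecord("R2", "Engineering", "Ana", "compensation"),
    DeclineRecord("R3", "Sales", "Ben", "timing"),
    DeclineRecord("R4", "Engineering", "Ana", "timing"),
]

# Print the most frequent (hiring manager, reason) pairs first.
for (manager, reason), count in cluster_by(records, "hiring_manager").most_common():
    print(f"{manager:<6} {reason:<13} {count}")
```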

In practice

- A recruiter hears the same decline reason three months in a row: the process took too long. After pulling the data, the team discovers that declines citing timing correlate with offers extended more than 22 days after the first interview. They shorten the process, and the decline rate drops by a third in the next quarter.

- During an AI in recruiting workshop, a TA lead prompts an LLM with 40 anonymized decline reasons from the past six months. The model clusters them into five categories and flags that 60 percent of compensation declines came from a single department where the pay band had not been reviewed in two years.

- A sourcer logs every declined offer in a shared spreadsheet with a standardized reason code. The compensation team reviews it quarterly and uses the market feedback to justify a pay band adjustment to finance, with the data as evidence rather than anecdote.
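
The timing check in the first example reduces to two steps: split offers at a day threshold, then compare the decline rates on each side. This is a minimal sketch. It assumes each offer is stored as a hypothetical `(days_from_first_interview, declined)` pair, takes the 22-day cutoff from that example, and uses made-up sample data.

```python
def decline_rate(offers):
    """Share of offers declined; returns 0.0 for an empty group to avoid division by zero."""
    if not offers:
        return 0.0
    return sum(1 for _, declined in offers if declined) / len(offers)


def split_by_days(offers, cutoff_days=22):
    """Split offers into those extended within the cutoff and those extended after it."""
    fast = [o for o in offers if o[0] <= cutoff_days]
    slow = [o for o in offers if o[0] > cutoff_days]
    return fast, slow


# Illustrative sample: (days from first interview to offer, offer declined?)
offers = [(14, False), (18, False), (20, True), (25, True), (30, True), (28, False)]

fast, slow = split_by_days(offers)
print(f"<= 22 days: {decline_rate(fast):.0%} declined ({len(fast)} offers)")
print(f"> 22 days:  {decline_rate(slow):.0%} declined ({len(slow)} offers)")
```

A gap between the two rates points to a pattern worth checking. On its own it does not show that process speed caused the declines, so read it alongside the logged reasons.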

Quick read, then how hiring teams use it

This is for recruiters, TA leaders, HR business partners, and compensation partners who need a shared vocabulary for offer debrief sessions, pay band reviews, and process audits. Skim the first section for shared context. Use the second when designing the tracking structure or building an AI-assisted pattern review.

Plain-language summary

- What it means for you: A habit of asking why offers are declined and writing down the answer in a shared place so the pattern becomes visible over time, not just during the next surprise.

- How you would use it: After each declined offer, log the reason in one of five or six standard categories (compensation, timing, competing offer, role fit, process experience, other). Review the totals quarterly with your TA lead and compensation partner.

- How to get started: Create a shared spreadsheet or ATS field with a reason code dropdown. Run it manually for one quarter before deciding whether to automate or expand.

- When it is a good time: Any time your offer acceptance rate is below 80 percent, or when you have had three or more declines in a single role or department in the past 90 days.
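
The reason codes and the two review triggers in the list above can be written as one small check. This is a minimal sketch under stated assumptions:

- Declines arrive as hypothetical `(role, decline_date, reason)` tuples.
- The 80 percent floor and the three-declines-in-90-days limit come from the bullet above.
- `DeclineReason` mirrors the six categories listed earlier.
- `should_review` is an illustrative name, not an existing tool.

```python
from collections import Counter
from datetime import date, timedelta
from enum import Enum


class DeclineReason(Enum):
    # The six standard reason codes from the summary above.
    COMPENSATION = "compensation"
    TIMING = "timing"
    COMPETING_OFFER = "competing offer"
    ROLE_FIT = "role fit"
    PROCESS_EXPERIENCE = "process experience"
    OTHER = "other"


def should_review(offers_extended, offers_accepted, declines, today,
                  rate_floor=0.80, per_role_limit=3, window_days=90):
    """True if acceptance is below the floor, or any role logged too many declines in the window."""
    if offers_extended and offers_accepted / offers_extended < rate_floor:
        return True
    cutoff = today - timedelta(days=window_days)
    # Count only declines inside the look-back window, per role.
    recent = Counter(role for role, declined_on, _reason in declines if declined_on >= cutoff)
    return any(count >= per_role_limit for count in recent.values())


# Illustrative data: (role, date declined, reason code picked from the dropdown).
today = date(2026, 5, 1)
declines = [
    ("Data Engineer", date(2026, 4, 2), DeclineReason.COMPENSATION),
    ("Data Engineer", date(2026, 3, 15), DeclineReason("timing")),
    ("Data Engineer", date(2026, 2, 20), DeclineReason.COMPETING_OFFER),
    ("Account Executive", date(2025, 12, 1), DeclineReason.OTHER),
]
print(should_review(offers_extended=20, offers_accepted=17, declines=declines, today=today))
```

Passing each dropdown value through the `Enum` means a misspelled code raises a `ValueError` instead of silently creating a seventh category.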

When you are running live reqs and tools

- What it means for you: Offer decline data is a feedback signal from the market about your compensation positioning, process design, and candidate experience. Ignored, it compounds. Reviewed quarterly, it gives TA and compensation a shared language for adjustments.

- When it is a good time: After any quarter with an offer acceptance rate below your benchmark, when a hiring manager reports candidates keep dropping at the offer stage, or when a new market opens where you lack historical data.

- How to use it: Log decline reasons in a standard format tied to the req and the hiring manager. Aggregate quarterly. If you have more than 20 declines, use an LLM prompt to classify free-text responses into categories and surface the top three themes. Cross-reference the results with the interview-to-offer ratio to understand conversion pressure across the full funnel.

- How to get started: Add a decline reason field to your ATS or a linked spreadsheet row. Align on five to seven standard codes with your team in a 30-minute calibration session. Assign a quarterly review slot with compensation and TA leadership in the same recurring invite.

- What to watch for: Candidates softening true reasons on calls, especially around compensation. Free-text survey responses can capture more honesty than a phone call. AI classification helps at scale but misreads hedged language, so verify the top themes with a human read of the source responses before presenting findings.
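
The classification step can be sketched without tying it to a particular model. This is not a vendor API. `build_prompt`, `parse_labels` and `top_themes` are hypothetical helpers, and the category list reuses the reason codes suggested earlier. The LLM call itself is left out because it depends on your provider. Each returned theme keeps its source responses, so a human can re-read them before findings are presented, as the caution above recommends.

```python
from collections import Counter, defaultdict

CATEGORIES = ["compensation", "timing", "competing offer", "role fit",
              "process experience", "other"]


def build_prompt(responses):
    """Build a prompt asking for one category label per numbered, anonymized response."""
    lines = [
        "Classify each anonymized offer-decline reason into exactly one category:",
        ", ".join(CATEGORIES) + ".",
        "Answer with one line per item in the form '<number>: <category>'.",
        "",
    ]
    lines += [f"{i}: {text}" for i, text in enumerate(responses, start=1)]
    return "\n".join(lines)


def parse_labels(model_output):
    """Parse '<number>: <category>' lines; unknown categories fall back to 'other'."""
    labels = {}
    for line in model_output.splitlines():
        number, sep, label = line.partition(":")
        if not sep or not number.strip().isdigit():
            continue  # skip lines that are not in the requested format
        label = label.strip().lower()
        labels[int(number)] = label if label in CATEGORIES else "other"
    return labels


def top_themes(responses, labels, n=3):
    """Return the n most frequent categories, each with its source responses for human review."""
    grouped = defaultdict(list)
    for i, text in enumerate(responses, start=1):
        grouped[labels.get(i, "other")].append(text)
    counts = Counter({cat: len(texts) for cat, texts in grouped.items()})
    return [(cat, count, grouped[cat]) for cat, count in counts.most_common(n)]


# Illustrative responses and a made-up model reply.
responses = [
    "The base was below what I have now.",
    "Another company moved faster and made an offer first.",
    "Salary did not match the market for this level.",
    "The process took almost six weeks.",
]
prompt = build_prompt(responses)  # send this to your LLM of choice
reply = "1: compensation\n2: competing offer\n3: compensation\n4: timing"
for category, count, examples in top_themes(responses, parse_labels(reply)):
    print(category, count, examples)
```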

Where we talk about this

On AI with Michal live sessions, the offer stage is part of the AI in recruiting track, specifically how TA teams use data and AI to improve conversion at the end of the funnel, not only at the top. Offer decline analysis comes up as a diagnostic tool when teams ask why sourcing volume does not convert to hires. The full-room conversation happens at Workshops.

Around the web (opinions and rabbit holes)

Third-party creators move fast. Treat these as starting points, not endorsements, and double-check anything before wiring candidate data.

YouTube

- Search "why candidates reject job offers" on the AIHR YouTube channel for practitioner breakdowns of decline reasons and practical steps to reduce rejection rates.

- Search "offer acceptance rate recruiting" on LinkedIn Talent Solutions YouTube for how compensation and process speed affect candidate decisions at the offer stage.

- Search "recruiting funnel metrics offer stage" on YouTube for explainers on how offer acceptance rate fits into a full funnel metrics view and what to do when the number drops.

Reddit

- "How do you handle candidates who decline your offers?" in r/recruiting has practitioner takes on decline calls and what information is actually useful to collect after the fact.

- "What are the main reasons candidates reject offers?" in r/humanresources covers both compensation and process factors with real examples from HR professionals in the chair.

- "Offer declined, compensation mismatch" in r/TalentAcquisition is a candid set of threads on how to bring market feedback back to compensation and hiring managers in a way that leads to action.

Quora

- "Why do candidates reject job offers even after accepting them verbally?" collects practitioner and candidate perspectives on post-verbal declines and the signals teams missed (read critically, quality varies).

Offer decline versus related signal types

| Signal | What it measures | Who acts on it |
|---|---|---|
| Offer acceptance rate | Percentage of extended offers accepted | TA leadership, quarterly |
| Offer decline analysis | Root causes behind each decline | TA and compensation, quarterly |
| Interview-to-offer ratio | Candidates needed to produce one accepted offer | Recruiter, per req |
| Funnel drop-off analysis | Where candidates exit before the offer stage | TA ops, ongoing |
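
The offer acceptance rate and the interview-to-offer ratio in the table are simple ratios. This is a minimal sketch, assuming you can count candidates interviewed, offers extended and offers accepted for a req or a quarter. The ratio follows the table's definition (candidates needed to produce one accepted offer), and the sample counts are illustrative.

```python
def offer_acceptance_rate(extended, accepted):
    """Percentage of extended offers that were accepted."""
    if extended == 0:
        raise ValueError("no offers extended")
    return 100 * accepted / extended


def interview_to_offer_ratio(interviewed, accepted):
    """Candidates interviewed per accepted offer, following the table's definition."""
    if accepted == 0:
        raise ValueError("no accepted offers")
    return interviewed / accepted


# Illustrative quarter: 48 candidates interviewed, 10 offers extended, 8 accepted.
print(f"Offer acceptance rate: {offer_acceptance_rate(10, 8):.0f}%")
print(f"Interview-to-offer ratio: {interview_to_offer_ratio(48, 8):.1f} to 1")
```

Some teams define the ratio against offers extended rather than offers accepted. In that case, pass the extended count as the second argument, and keep the definition consistent from quarter to quarter.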