Pre-employment assessment test

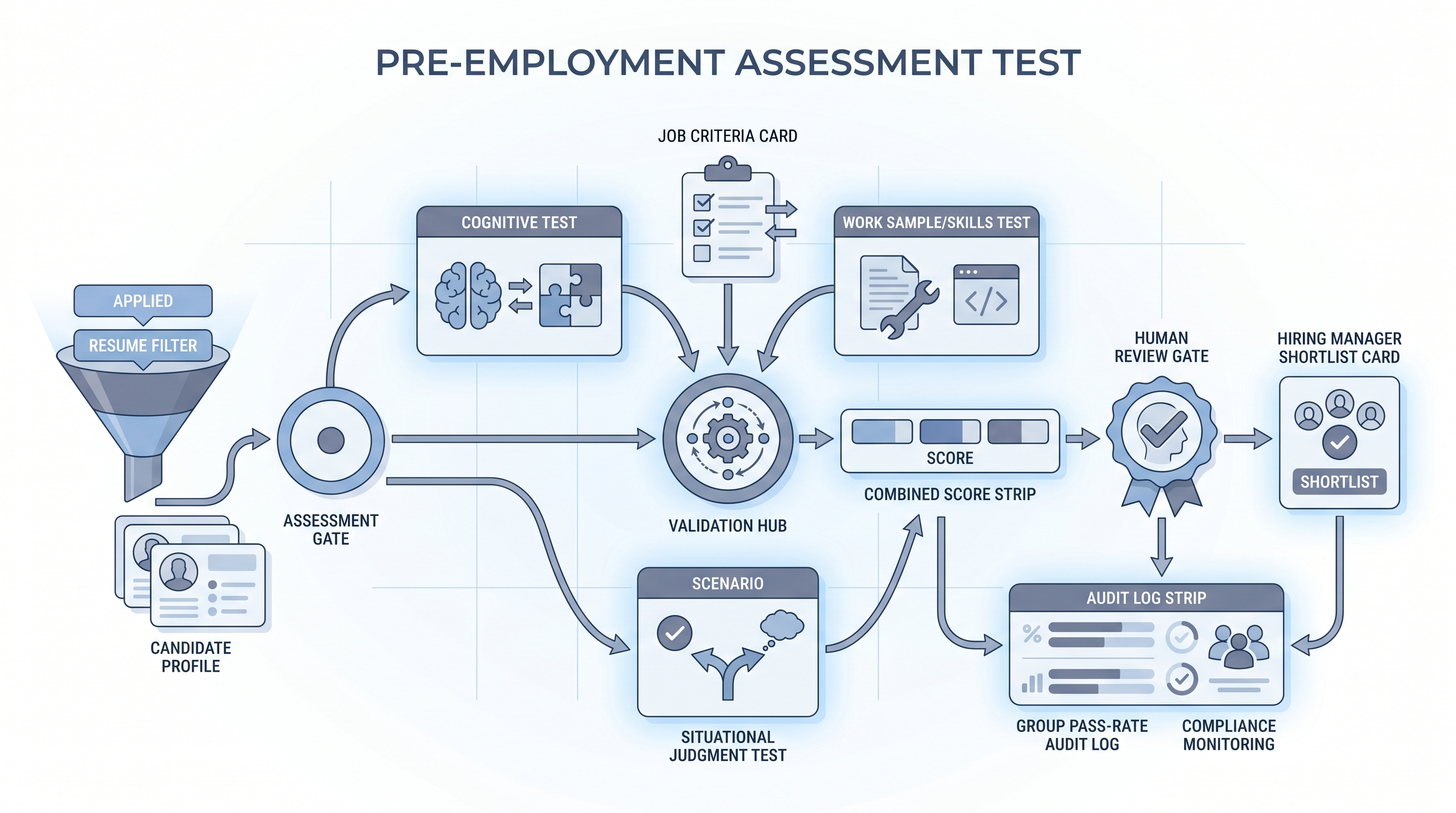

A standardized test administered to candidates before a hiring decision to measure job-relevant skills, cognitive ability, personality traits, or situational judgment, with the goal of predicting role performance and reducing subjectivity in early screening.

Michal Juhas · Last reviewed May 9, 2026

What is a pre-employment assessment test?

A pre-employment assessment test is a standardized evaluation given to candidates before a hiring decision. Unlike a job interview, which captures how someone presents in conversation, an assessment measures specific abilities directly: reasoning speed, writing clarity, code output, or how someone responds to a realistic work scenario.

The key phrase is "pre-employment": the test runs before the offer, not after. That timing is what makes the results usable in the hiring decision, and it is also what places any scoring tool under legal scrutiny. An assessment that consistently scores protected groups lower is a selection instrument with adverse impact exposure, whatever the vendor calls it.

In practice

- A recruiter at a fintech company sends a 25-minute numerical reasoning screen to all analyst applicants after the initial resume filter. Candidates who clear the bar advance to the phone screen; those below the threshold receive a standard decline without a call. The team tracks group pass rates each quarter and reviews the cut score when gaps emerge.

- A talent ops lead evaluating a new screening vendor for a customer success role discovers that the "culture fit" AI score correlates with graduation year, a common proxy for age. After a quick adverse impact analysis, the team replaces it with a short situational judgment test tied to real escalation scenarios the role actually handles.

- An HRBP at a 200-person company wants to add pre-employment testing for warehouse roles. Before purchasing, they ask the vendor for a criterion validity study tied to the role family and a technical manual listing group pass rates. The vendor cannot produce either document, so the HRBP sources a different tool.
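The group pass-rate tracking and adverse impact analysis in these examples are often run as a four-fifths check: compare each group's pass rate to the highest group's pass rate and flag any ratio below 0.8. The Python sketch below is a minimal illustration only. The group labels, scores, cut score of 60, and the helper names `pass_rates` and `four_fifths_check` are all hypothetical and do not come from any real dataset or vendor tool.

```python
from collections import defaultdict


def pass_rates(results, cut_score):
    """Return {group: pass rate} for (group, score) pairs at a given cut score."""
    totals = defaultdict(int)
    passed = defaultdict(int)
    for group, score in results:
        totals[group] += 1
        if score >= cut_score:
            passed[group] += 1
    return {group: passed[group] / totals[group] for group in totals}


def four_fifths_check(rates, threshold=0.8):
    """Compare each group's pass rate to the highest rate; flag ratios below threshold."""
    top = max(rates.values())
    if top == 0:
        # Nobody passed, so no ratio can be computed.
        return {group: {"ratio": None, "flag": False} for group in rates}
    return {
        group: {"ratio": rate / top, "flag": rate / top < threshold}
        for group, rate in rates.items()
    }


# Hypothetical illustration data: (group label, assessment score).
sample = [
    ("group_a", 72), ("group_a", 65), ("group_a", 58), ("group_a", 81),
    ("group_b", 61), ("group_b", 55), ("group_b", 49), ("group_b", 70),
]

rates = pass_rates(sample, cut_score=60)
report = four_fifths_check(rates)

for group, row in report.items():
    print(group, rates[group], row)
```

A flag here is a prompt to review the cut score and the job-relatedness of the test, not a legal conclusion. With cohorts as small as this sample the ratio is unstable, so treat it as a first screen rather than a final answer.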

Quick read, then how hiring teams use it

This is for recruiters, sourcers, TA, and HR partners who need the same vocabulary in intake calls, vendor briefings, and compliance reviews. Skim the first section for a fast shared picture. Use the second when you are deciding where a test fits in a live req or how to evaluate a scoring vendor.

Plain-language summary

- What it means for you: A pre-employment assessment test is any scored evaluation given to candidates before an offer. It gives the team a consistent data point that does not shift based on who reviewed the resume that morning.

- How you would use it: Choose a test that measures one attribute the role genuinely requires. Validate it on a sample of current employees in similar roles before using it to screen new candidates.

- How to get started: Ask what skill gap most often causes a new hire to fail in the first 90 days. Design or buy a test that measures that specific attribute, not a general proxy for intelligence or fit.

- When it is a good time: After you have documented what the role actually requires, after your team agrees on a scoring rubric, and after a compliance partner has confirmed the lawful basis and data routing.
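The validation step in this list can be sketched as a correlation between assessment scores and existing performance ratings for current employees. The example below is a rough Python sketch under stated assumptions: the scores and ratings are made-up illustration values, and `pearson_r` is a hypothetical helper written for this example, not part of any vendor product.

```python
import math


def pearson_r(xs, ys):
    """Pearson correlation coefficient between two equal-length numeric lists."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two lists of equal length with at least 2 values")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined when one list has no variation")
    return cov / math.sqrt(var_x * var_y)


# Made-up illustration data: one entry per current employee.
test_scores = [55, 62, 70, 48, 81, 66]
performance_ratings = [3.0, 3.5, 4.0, 2.5, 4.5, 3.5]

r = pearson_r(test_scores, performance_ratings)
print(f"correlation between score and rating: {r:.2f}")
```

Six data points are far too few to support a validity claim; the sample is sized for readability only. A weak or negative coefficient on a realistic sample suggests the test is not tracking the attribute the role requires.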

When you are running live reqs and tools

- What it means for you: A pre-employment test layer in your ATS sends assessments automatically when a candidate reaches a trigger stage, collects scores, and routes results back to the recruiter dashboard. When the vendor updates the scoring model, scores produced before and after the update are no longer directly comparable, so log the model version and score date with each result.

- When it is a good time: After your sourcing pass-through rate is stable enough to separate a screening bottleneck from a sourcing problem, and after IT has reviewed data routing between your ATS and the vendor.

- How to use it: Set one cut score threshold per role family, document the rationale in writing, and run a four-fifths adverse impact check on each cohort before acting on results. Keep the scoring output in a separate field from the recruiter stage decision so you can show the two inputs were independent in a future audit.

- How to get started: Pilot on a closed req with 40 or more past hires. Score them retroactively and check whether the assessment result correlates with your own performance ratings. If the correlation is weak, the test is not measuring what you think it is.

- What to watch for: Vendors who claim their tool measures "job fit," "culture match," or "potential" without a named psychometric construct. Any scoring product that cannot show group pass rate data for your role family is a liability, not a tool.
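Two record-keeping points from this list, logging the model version and score date with each result and keeping the scoring output in a separate field from the recruiter stage decision, could be sketched as simple record types. Everything in this Python sketch is hypothetical: the `AssessmentResult` and `StageDecision` classes, the field names, and the version strings are illustrative assumptions, not an ATS or vendor schema.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class AssessmentResult:
    """Vendor scoring output, stored apart from any human decision."""
    candidate_id: str
    role_family: str
    score: float
    model_version: str   # which scoring model produced the score
    scored_on: date      # when the score was produced


@dataclass(frozen=True)
class StageDecision:
    """Recruiter's stage decision, kept in its own record."""
    candidate_id: str
    decision: str        # e.g. "advance" or "decline"
    decided_by: str
    decided_on: date


def comparable(a: AssessmentResult, b: AssessmentResult) -> bool:
    """Treat two scores as comparable only within one role family and model version."""
    return a.role_family == b.role_family and a.model_version == b.model_version


# Hypothetical example records.
before = AssessmentResult("c-001", "analyst", 71.0, "v1.2", date(2026, 1, 15))
after = AssessmentResult("c-002", "analyst", 71.0, "v2.0", date(2026, 4, 2))
decision = StageDecision("c-001", "advance", "recruiter-17", date(2026, 1, 16))

print(comparable(before, after))  # False: same role family, different model versions
```

Keeping the two records separate means an audit can show what the tool produced and what the recruiter decided as distinct inputs, and the version field makes it visible when two identical-looking scores came from different models.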

Where we talk about this

On AI with Michal live sessions we cover pre-employment testing in the legal and compliance modules of the AI in recruiting track. Participants work through vendor evaluation exercises, practice reading validity reports, and discuss where an assessment layer adds signal versus where it creates friction with no predictive gain. The sourcing automation track adds the operational side: wiring ATS stage triggers to assessment invites and routing scores back automatically. Join a session at Workshops for the peer discussion with real vendor names and live pipeline examples.

Around the web (opinions and rabbit holes)

Third-party creators move fast. Treat these as starting points, not endorsements, and verify before you wire any assessment into a candidate-facing process.

YouTube

Search results shift frequently; use Filters → Upload date to find recent content from IO psychologists and employment law practitioners alongside vendor demos.

- Pre-employment testing validity IO psychology (criterion validity, job-relatedness, and what "validated for selection" actually means in practice)

- Cognitive ability test adverse impact hiring (four-fifths rule, group pass rates, and how cognitive tests interact with demographic differences)

- Situational judgment test employment validity (how SJTs are built, where they predict well, and where work samples outperform them)

- AI pre-employment screening bias legal risk (what regulators are saying about algorithm-based candidate scoring)

For vendor-published content on norming and test construction, cross-check any vendor claim against independent IO psychology sources before treating it as authoritative.

Reddit

- r/IOPsychology has active threads on which pre-employment tests show criterion validity versus which are oversold by vendors.

- r/recruiting surfaces real recruiter discussions on candidate experience, test completion rates, and which assessment types drive drop-off at the wrong stage.

- r/humanresources captures HRBP perspectives on EEOC compliance, GDPR documentation, and how to brief legal when a new assessment vendor enters procurement.

Quora

- Quora search: pre-employment testing effectiveness surfaces practitioner and researcher answers; quality varies, so verify any specific claim before acting on it.

Assessment types by hiring stage

| Assessment type | Best placement | Predictive validity | Adverse impact risk |
|---|---|---|---|
| Short cognitive screen | Before recruiter call | High for many roles | Higher for some groups |
| Work sample or skills test | After first call | High when job-relevant | Lower when task-matched |
| Situational judgment test | Before or after first call | Moderate to high | Lower than cognitive alone |
| Personality inventory | Before interview stage | Moderate (role-dependent) | Varies by instrument |
| AI-inferred trait score | Avoid until validated | Unknown to low | High: no audit trail |

Related on this site

- Glossary: Adverse impact, AI bias audit, Human-in-the-loop (HITL), Candidate assessment tools, Hiring assessment test, Employment assessment test, Scorecard

- Glossary: Hiring funnel conversion rates, Explainable AI hiring, Sourcing pass-through rate

- Blog: AI sourcing tools for recruiters

- Live cohort: Workshops

- Membership: Become a member

- Course: Starting with AI: the foundations in recruiting