Psychometric testing for recruitment

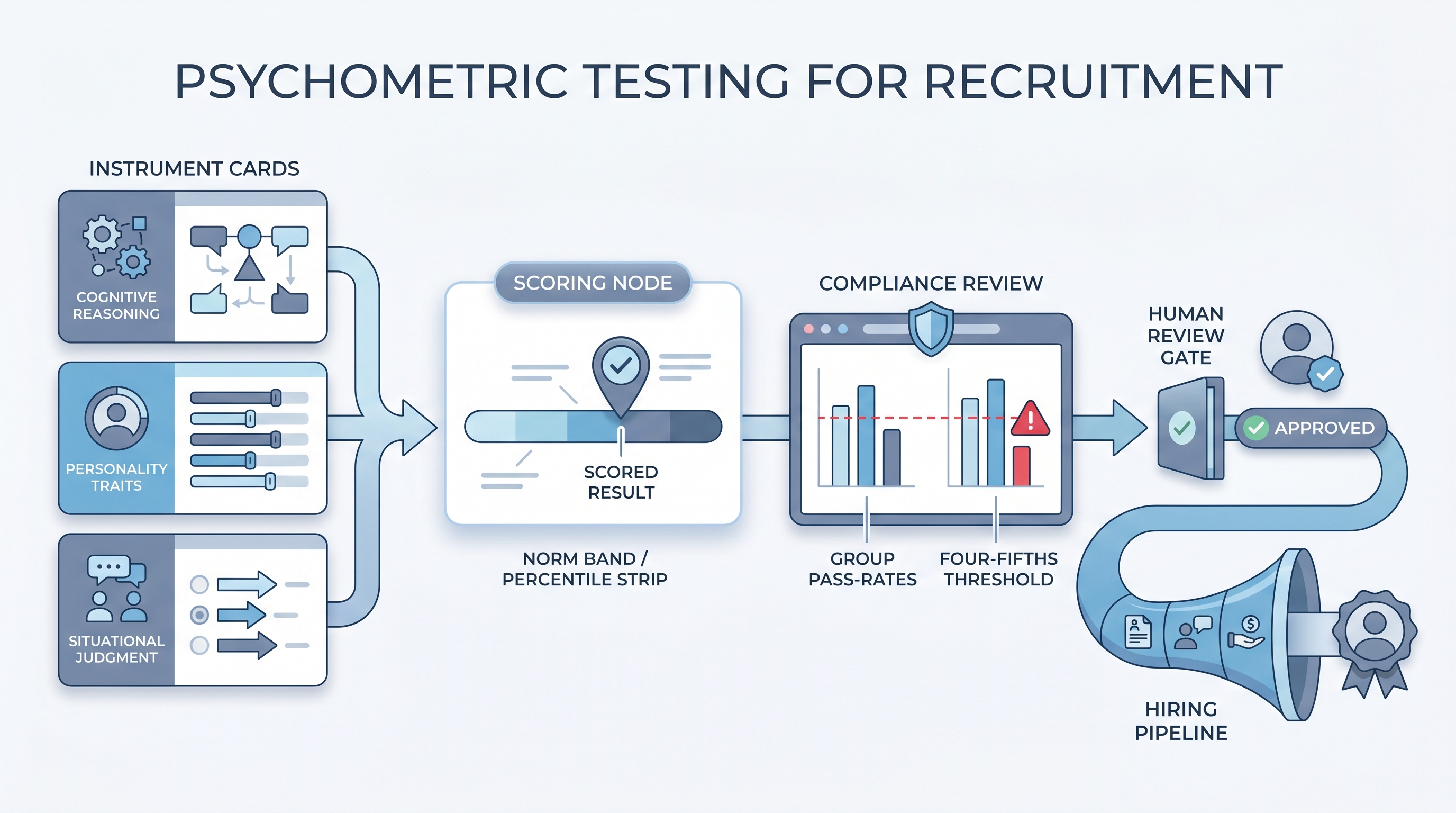

Standardized instruments that measure cognitive ability, personality traits, or behavioral tendencies as structured inputs to hiring decisions, scored against published norms and backed by criterion-related validity evidence before deployment in a selection process.

Michal Juhas · Last reviewed May 9, 2026

What is psychometric testing for recruitment?

Psychometric testing for recruitment refers to standardized instruments that measure cognitive ability, personality traits, situational judgment, or work sample performance and return scored results against published norms. The key word is standardized: every candidate sees the same content under the same conditions, and scores are interpreted relative to a reference population rather than through a recruiter's subjective reading of the responses.

The case for psychometric testing in hiring rests on predictive validity: the degree to which a test score correlates with actual job performance ratings measured later. Cognitive ability tests have the strongest meta-analytic validity evidence across roles, but they require careful cut-score management because of adverse impact risk. Personality inventories and situational judgment tests have moderate but context-dependent validity, meaning the instrument and the role family need to match for the score to carry weight.
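Predictive validity is usually reported as a correlation coefficient between test scores and later performance ratings. The following is a minimal sketch of that calculation, assuming you have paired scores and ratings for the same hires. The function name `validity_coefficient` and the sample numbers are illustrative and do not come from any vendor or study.

```python
from math import sqrt


def validity_coefficient(test_scores, performance_ratings):
    """Pearson correlation between test scores and later performance ratings."""
    if len(test_scores) != len(performance_ratings):
        raise ValueError("each score needs a matching rating")
    n = len(test_scores)
    if n < 2:
        raise ValueError("need at least two paired observations")
    mean_x = sum(test_scores) / n
    mean_y = sum(performance_ratings) / n
    # Covariance and variances are left unnormalized; the 1/n factors cancel.
    cov = sum(
        (x - mean_x) * (y - mean_y)
        for x, y in zip(test_scores, performance_ratings)
    )
    var_x = sum((x - mean_x) ** 2 for x in test_scores)
    var_y = sum((y - mean_y) ** 2 for y in performance_ratings)
    if var_x == 0 or var_y == 0:
        raise ValueError("scores and ratings must both vary")
    return cov / sqrt(var_x * var_y)


# Made-up pilot data: test scores and performance ratings for five hires.
scores = [52, 61, 70, 75, 88]
ratings = [2.8, 3.1, 3.0, 3.9, 4.2]
r = validity_coefficient(scores, ratings)
print(f"validity coefficient r = {r:.2f}")
```

Five pairs keep the example short, but a sample that small says little about a real instrument. A coefficient from a handful of hires can swing widely from one cohort to the next.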

In practice

- A TA team running high-volume customer support hiring adds a 20-minute numerical reasoning test to the screening stage. After the first cohort, they pull pass rates by demographic group and find one group passing at 74 percent of the highest group's pass rate, which is below the four-fifths (80 percent) threshold. They lower the cut score by five points, recheck the correlation with 90-day quality ratings, and document the decision before the next batch.

- A recruiter at a professional services firm uses a Big Five personality inventory for manager-level searches. During debriefs, a hiring manager asks why a candidate with a high conscientiousness score is being flagged as borderline. The recruiter explains that the instrument describes trait tendencies relative to a norm group of managers and does not predict success in this specific team context, then redirects the conversation to the structured interview data.

- An HRBP evaluating two assessment vendors asks both for a technical manual showing criterion validity for a financial analyst role. One vendor produces a study with a validity coefficient of 0.28 against analyst performance ratings. The other sends a whitepaper on general cognitive testing. The HRBP shortlists only the first vendor.
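The pass-rate check in the first example can be sketched in a few lines. This is a hedged illustration of the four-fifths comparison, assuming you can export passed and tested counts per group. The function name `impact_ratios`, the group labels, and the counts are hypothetical, chosen so that one group lands at the 74 percent ratio mentioned above.

```python
FOUR_FIFTHS = 0.8  # threshold for the four-fifths rule


def impact_ratios(pass_counts):
    """Return each group's pass rate divided by the highest group pass rate.

    pass_counts maps a group label to a (passed, tested) tuple.
    """
    rates = {}
    for group, (passed, tested) in pass_counts.items():
        if tested <= 0:
            raise ValueError(f"group {group!r} has no test takers")
        rates[group] = passed / tested
    top_rate = max(rates.values())
    if top_rate == 0:
        raise ValueError("no group has any passes")
    return {group: rate / top_rate for group, rate in rates.items()}


# Hypothetical cohort: labels and counts are made up for illustration.
cohort = {"group_a": (50, 100), "group_b": (37, 100)}
ratios = impact_ratios(cohort)
flagged = [group for group, ratio in ratios.items() if ratio < FOUR_FIFTHS]
print(ratios)
print("below four-fifths:", flagged)
```

A ratio below 0.8 is a prompt for review and documentation, not a verdict on its own. With small groups the ratio is noisy, so it is worth recording the underlying counts next to it.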

Quick read, then how hiring teams use it

This is for recruiters, sourcers, TA, and HR partners who need the same vocabulary in vendor evaluations, legal briefings, and hiring manager debriefs. Skim the first section for a fast shared picture. Use the second when you are selecting an instrument, setting cut scores, or reviewing results for a live req.

Plain-language summary

- What it means for you: Psychometric tests give you a standardized score for a specific trait, such as how quickly someone reasons through numbers or how they tend to approach new situations, measured consistently across all candidates rather than estimated from interview impressions.

- How you would use it: Pick one instrument that matches the competency most important for the role, confirm it has criterion validity evidence for that role type, and agree with your legal or HR partner on the cut score and the adverse impact review cadence before the first invite goes out.

- How to get started: Identify the single most predictive competency for the role. Ask three vendors for a technical manual and an independent validity study for that competency. Pilot with 40 or more past hires before using the instrument as a live gate.

- When it is a good time: After role requirements are documented, after a compliance partner has confirmed lawful basis for data processing, and after your ATS can receive and store scores in a named field with the model version logged.

When you are running live reqs and tools

- What it means for you: Psychometric scores are selection inputs, not selection decisions. Each score carries a standard error of measurement, meaning a candidate who scores at the 62nd percentile could genuinely be a 55th- or 69th-percentile performer. Set cut scores with that uncertainty in mind, and treat scores as one signal alongside structured interview ratings from a shared scorecard.

- When it is a good time: After the happy path for sourcing and screening is stable, when you have enough volume per role family to calculate group pass rates each cycle, and when you have a named owner for reviewing adverse impact reports before expanding deployment.

- How to use it: Log the instrument version and norm group with every cohort result. Review group pass rates against the four-fifths threshold each cycle. Brief hiring managers on what the instrument measures and does not measure before the first debrief. Keep the score field separate from the stage decision field in your ATS so you can show independence in a compliance audit.

- How to get started: Pilot on a closed req first. Score retrospectively against performance ratings for recent hires in the same role family. If the correlation is weak, the instrument is not measuring what matters for that role. Replace it before using it as a live gate, not after a candidate complaint.

- What to watch for: Vendors who report overall completion rates but not group-level pass rates; instruments whose validity studies reference a general workforce norm group instead of your role family; AI scoring layers without a logged model version for each result; and personality vendors claiming predictive validity without a peer-reviewed or independently audited study.
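The standard error of measurement mentioned above can be turned into a score band with the classical formula SEM = SD × sqrt(1 − reliability). The sketch below assumes a T-score scale (mean 50, SD 10), a reliability of 0.90, and normally distributed norm scores for the percentile conversion. These values and the function name `score_band` are assumptions for illustration, so take the real figures from the vendor's technical manual.

```python
from math import sqrt
from statistics import NormalDist


def score_band(observed, norm_sd, reliability, z=1.0):
    """Return (low, high) around an observed score using the standard error
    of measurement. z=1.0 gives roughly a 68 percent band."""
    if not 0 <= reliability <= 1:
        raise ValueError("reliability must be between 0 and 1")
    sem = norm_sd * sqrt(1 - reliability)
    return observed - z * sem, observed + z * sem


# Assumed scale and reliability; replace with values from the technical manual.
norm = NormalDist(mu=50, sigma=10)
low, high = score_band(observed=53, norm_sd=10, reliability=0.90)
print(f"score band: {low:.1f} to {high:.1f}")
# Percentile conversion assumes the norm group's scores are normally distributed.
print(f"percentile band: {norm.cdf(low):.0%} to {norm.cdf(high):.0%}")
```

If a cut score falls inside a candidate's band, the observed score alone cannot tell you which side of the cut their true score sits on. That is the reason to weigh it alongside structured interview ratings.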

Where we talk about this

On AI with Michal live sessions, psychometric testing appears in the compliance and vendor evaluation modules of the AI in recruiting track. Participants work through a structured criteria card for platform selection, practice reading a technical manual, and calculate four-fifths adverse impact ratios on vendor-supplied data. The sourcing automation track adds the operational layer: how to trigger assessment invites from ATS stage changes and route scores back without manual data entry. Join a session at Workshops with your real vendor shortlist and ATS name.

Around the web (opinions and rabbit holes)

Third-party creators move fast. Treat these as starting points, not endorsements, and verify before wiring any instrument to a candidate-facing selection process.

YouTube

Search for the topics below and use Filters > Upload date to surface recent IO psychology content alongside vendor marketing.

- Psychometric testing in recruitment: what recruiters need to know (criterion validity, norming, and what vendor claims actually mean)

- Adverse impact hiring assessment EEOC compliance four-fifths rule (how to calculate and document the threshold)

- Big Five personality assessment validity hiring (practitioner and researcher perspectives on when personality tests predict performance)

Reddit

- r/IOPsychology surfaces active debate on which instrument validity claims hold up versus which are vendor marketing, with named studies and practitioner critique.

- r/recruiting has frank threads on candidate drop-off during assessments, test completion rates, and which platforms actually survive production ATS traffic.

- r/humanresources captures HRBP and legal partner perspectives on GDPR obligations and how to document lawful basis for automated scoring.

Quora

- Quora search: psychometric testing recruitment has practitioner answers on instrument choice and debrief practice; quality varies, so verify any specific claim before acting.

Psychometric test types at a glance

| Instrument type | What it measures | Predictive validity | Key risk |
|---|---|---|---|
| Cognitive ability | Reasoning speed and accuracy | High (meta-analytic) | Adverse impact risk |
| Personality inventory | Trait tendencies vs. norm group | Moderate, role-dependent | Construct mismatch if role fit is poor |
| Situational judgment | Decision-making in role scenarios | Moderate | Item bank staleness over time |
| Work sample | Actual task performance | High, role-specific | Development and scoring cost |

Related on this site

- Glossary: Pre-employment assessment tools, Pre-employment assessment test, Candidate assessment tools

- Glossary: Adverse impact, AI bias audit, Human-in-the-loop (HITL), Explainable AI hiring

- Glossary: Scorecard, Hiring assessment tools, ATS API integration

- Blog: AI sourcing tools for recruiters

- Live cohort: Workshops

- Membership: Become a member

- Course: Starting with AI: the foundations in recruiting