Pre-employment assessment tools

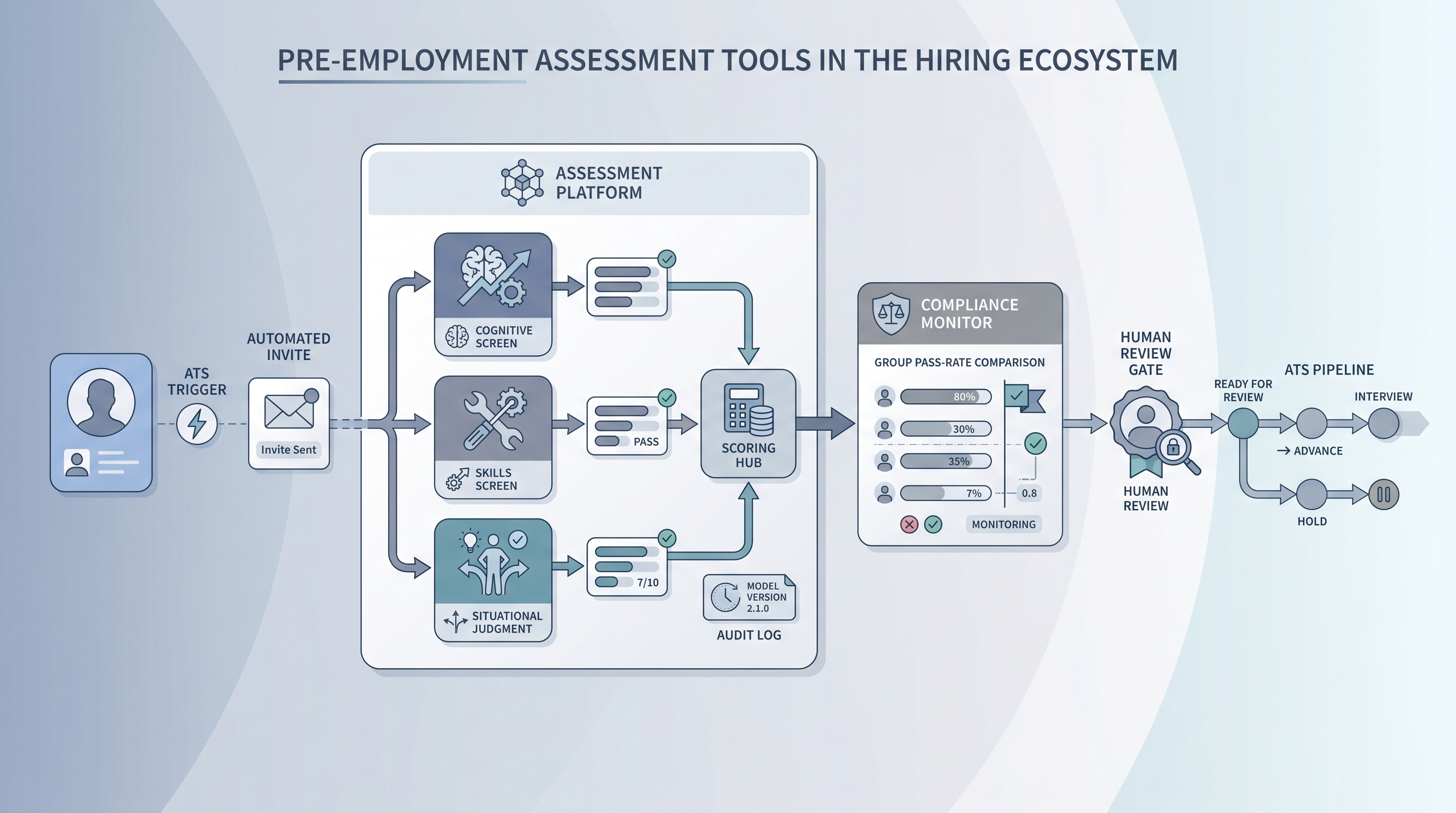

Software platforms that deliver, score, and report on candidate assessments before a hiring decision. They cover cognitive tests, skills simulations, situational judgment screens, and personality inventories, and typically add adverse impact dashboards, ATS integration, and compliance documentation.

Michal Juhas · Last reviewed May 9, 2026

What are pre-employment assessment tools?

Pre-employment assessment tools are software platforms that handle candidate evaluation before a hiring decision. They deliver tests, collect responses, score results against a rubric, and surface those scores in recruiter dashboards and ATS pipelines. What separates this category from general hiring assessment tools is timing and scope: pre-employment tools operate specifically before an offer, and the software itself needs compliance features because every scored result used to advance or reject a candidate is a selection step under employment law.

A useful mental model has three layers. The instrument layer is the actual test content: cognitive items, situational scenarios, work samples, or personality scales. The platform layer is the software that delivers, scores, and reports. The compliance layer is the audit trail, adverse impact dashboard, and GDPR documentation that the platform either ships natively or leaves entirely to you. When evaluating vendors, get a separate answer for each layer.

In practice

- A TA ops lead piloting a new cognitive screening vendor discovers during contract review that the platform logs scores but not the scoring model version. If the vendor updates the algorithm three months in, the team will have no way to compare cohorts across the update boundary, so they make model version a required custom field before go-live.

- A recruiter at a 300-person SaaS company sends the same situational judgment test to all customer success applicants. The platform shows group pass rates by quarter. At the Q3 review, one group is passing at 68 percent of the top-passing group's rate, below the four-fifths (80 percent) threshold. The team pauses deployment, reviews the cut score with a legal partner, and adjusts the scoring rubric before the next batch.

- An HRBP evaluating assessment platforms for a logistics company asks three vendors the same question: can you show a criterion validity study tied to warehouse supervisor performance, not general-workforce norms? One vendor produces a study; the other two send generic whitepapers. The HRBP shortlists only the vendor that produced the study.
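The four-fifths check in the second example is simple arithmetic: divide each group's pass rate by the highest group's pass rate and flag any ratio under 0.8. Below is a minimal Python sketch, assuming you can export per-group counts of completed assessments and passes from the platform. The `GroupResult`, `impact_ratios`, and `flagged_groups` names are hypothetical, and the counts are invented purely to reproduce the 68 percent ratio; they are not real cohort data.

```python
from dataclasses import dataclass

FOUR_FIFTHS = 0.8  # four-fifths (80 percent) rule of thumb for adverse impact


@dataclass
class GroupResult:
    group: str
    assessed: int  # candidates in this group who completed the assessment
    passed: int  # candidates in this group at or above the cut score


def impact_ratios(results: list[GroupResult]) -> dict[str, float]:
    """Each group's pass rate divided by the highest group's pass rate."""
    rates = {r.group: r.passed / r.assessed for r in results if r.assessed > 0}
    if not rates:
        return {}
    top_rate = max(rates.values())
    if top_rate == 0:
        # Nobody passed in any group: no reference rate, so report no disparity.
        return {group: 1.0 for group in rates}
    return {group: rate / top_rate for group, rate in rates.items()}


def flagged_groups(results: list[GroupResult], threshold: float = FOUR_FIFTHS) -> list[str]:
    """Groups whose impact ratio falls below the threshold, sorted by name."""
    return sorted(g for g, ratio in impact_ratios(results).items() if ratio < threshold)


# Hypothetical Q3 counts, invented to reproduce the 68 percent ratio in the example.
cohort = [
    GroupResult("group_a", assessed=100, passed=50),
    GroupResult("group_b", assessed=100, passed=34),
]
print({g: round(r, 2) for g, r in impact_ratios(cohort).items()})  # {'group_a': 1.0, 'group_b': 0.68}
print(flagged_groups(cohort))  # ['group_b']
```

The four-fifths rule comes from the US Uniform Guidelines on Employee Selection Procedures and is a rule of thumb rather than a significance test, so a small cohort can dip below 0.8 by chance. That is one reason to pause and review with legal, as the team in the example does, instead of acting on the ratio alone.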

Quick read, then how hiring teams use it

This is for recruiters, sourcers, TA teams, and HR partners who need a shared vocabulary when briefing vendors, evaluating RFP responses, and presenting to legal. Skim the first section for a fast shared picture. Use the second when you are selecting or integrating a platform during a live search.

Plain-language summary

- What it means for you: Pre-employment assessment tools are software products that run tests for candidates before an offer, score results automatically, and connect those scores to your ATS. They remove variation between human scorers but add vendor risk and compliance obligations that stay with you even when the platform fails.

- How you would use it: Pick one platform that covers the instrument type your role actually requires, confirm it has built-in adverse impact reporting, and agree in writing on which ATS field the score lands in before you buy.

- How to get started: List the competency you most need to measure, ask three vendors for a technical manual and an independent validity study for that competency, and pilot only the vendor that can produce both documents.

- When it is a good time: After your team has documented the role requirements, after a compliance partner has confirmed the data routing and lawful basis, and after IT has reviewed the ATS integration path for GDPR deletion compliance.

When you are running live reqs and tools

- What it means for you: The platform sends an assessment invite when a candidate reaches a trigger stage in your ATS, collects responses, scores them against a stored rubric, and writes the result back to a structured score field. If the vendor updates scoring logic between cohorts, historical scores stop being comparable unless the platform logged the model version for every run.

- When it is a good time: After your sourcing pass-through rate is stable enough to isolate an assessment bottleneck from a sourcing problem, and after your ATS integration has been tested in a sandbox with real GDPR deletion paths.

- How to use it: Set one cut score per role family, document the business rationale in writing with a named owner and date, run a four-fifths adverse impact check on each cohort before acting on results, and keep the score field separate from the stage decision field so an audit can show that the assessment result and the hiring decision were recorded independently.

- How to get started: Pilot on a closed req in a role family with 40 or more past hires. Score those hires retroactively and check whether the platform result correlates with your own 90-day performance ratings. A weak correlation suggests the instrument is not measuring what you think it is.

- What to watch for: Vendors who report overall completion rates but not group-level pass rates; platforms that store scores without storing the model version or rubric version used; integrations that leave orphaned assessment records when candidates are deleted from the ATS; and any AI video or speech feature whose scoring documentation references general AI performance rather than an independent IO psychology validation study.

Where we talk about this

On AI with Michal live sessions, pre-employment assessment tools appear in the compliance and vendor evaluation modules of the AI in recruiting track. Participants work through a structured vendor scorecard, practice reading technical manuals, and compare platform shortlists from real active searches. The sourcing automation track adds the operational layer: how to wire stage triggers to assessment invites and route scores back through webhook events without manual data entry. Join a session at Workshops and bring your real vendor shortlist and ATS name so the conversation is grounded.

Around the web (opinions and rabbit holes)

Third-party creators move fast. Treat these as starting points, not endorsements, and verify before wiring any platform to a candidate-facing process.

YouTube

Search with Filters → Upload date to surface recent IO psychology and employment-law content alongside vendor marketing.

- Pre-employment assessment tools IO psychology review (criterion validity, norming, and what platform claims actually mean)

- ATS assessment platform integration tutorial (practical integration walkthroughs across common ATS and assessment vendor pairings)

- Adverse impact hiring assessment EEOC compliance (four-fifths rule, cut score decisions, and documentation)

- AI hiring assessment bias audit (what regulators are asking platform vendors to produce in 2025 and 2026)

Reddit

- r/IOPsychology surfaces active debate on which platform validity claims hold up versus which are vendor marketing, with practitioner names and study citations.

- r/recruiting has frank threads on candidate drop-off, test completion rates, and which platform integrations actually survive production ATS traffic.

- r/humanresources captures HRBP and legal partner perspectives on GDPR documentation requirements and how to brief procurement on vendor DPA terms.

Quora

- Quora search: pre-employment assessment software has practitioner answers on platform comparisons; quality varies, so verify any specific claim before acting.

Platform capability comparison

| Capability | Lightweight tool | Mature platform |
|---|---|---|
| Adverse impact dashboard | Manual export | Built-in by group, per cohort |
| Scoring model audit trail | Score only | Score plus model version and date |
| ATS integration | Email link embed | Bidirectional API with webhook events |
| GDPR deletion path | Manual vendor request | Automated cascade on ATS delete |
| Validity documentation | Generic whitepaper | Role-specific independent study |
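The "Mature platform" column in the table above implies a particular data shape: every score carries the model version and date that produced it, and an ATS delete removes the matching assessment records. Below is a minimal in-memory Python sketch of that shape, assuming a hypothetical webhook that delivers a `candidate.deleted` event with a candidate ID. `ScoreRecord`, `AssessmentStore`, `handle_ats_delete`, and the event fields are illustrative names, not any ATS or vendor API, and the sample records are invented.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class ScoreRecord:
    candidate_id: str
    score: float
    model_version: str  # scoring model that produced this score
    scored_on: date


class AssessmentStore:
    """Hypothetical store; a real platform would persist records and log each delete."""

    def __init__(self) -> None:
        self._records: list[ScoreRecord] = []

    def add(self, record: ScoreRecord) -> None:
        self._records.append(record)

    def cohort(self, model_version: str) -> list[ScoreRecord]:
        # Only compare scores that were produced by the same model version.
        return [r for r in self._records if r.model_version == model_version]

    def versions(self) -> set[str]:
        return {r.model_version for r in self._records}

    def handle_ats_delete(self, event: dict) -> int:
        """Cascade an ATS candidate delete and return how many records were removed.

        Assumes a hypothetical payload like {"type": "candidate.deleted", "candidate_id": "..."}.
        """
        if event.get("type") != "candidate.deleted":
            return 0
        before = len(self._records)
        self._records = [r for r in self._records if r.candidate_id != event["candidate_id"]]
        return before - len(self._records)


# Hypothetical usage: one candidate scored under two model versions, then deleted in the ATS.
store = AssessmentStore()
store.add(ScoreRecord("c-101", 72.0, "v1", date(2026, 1, 15)))
store.add(ScoreRecord("c-102", 65.0, "v2", date(2026, 4, 20)))
store.add(ScoreRecord("c-101", 70.0, "v2", date(2026, 4, 22)))
removed = store.handle_ats_delete({"type": "candidate.deleted", "candidate_id": "c-101"})
print(removed, sorted(store.versions()))  # 2 ['v2']
```

Keeping `model_version` on every record is what lets a team split cohorts at an algorithm update, as in the first "In practice" example, and keying the delete on the ATS candidate ID is what prevents the orphaned assessment records the watch list warns about.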

Related on this site

- Glossary: Pre-employment assessment test, Hiring assessment tools, Candidate assessment tools, Employment assessment tools

- Glossary: Adverse impact, AI bias audit, Human-in-the-loop (HITL), ATS API integration

- Glossary: Explainable AI hiring, Sourcing pass-through rate, Scorecard

- Blog: AI sourcing tools for recruiters

- Live cohort: Workshops

- Membership: Become a member

- Course: Starting with AI: the foundations in recruiting