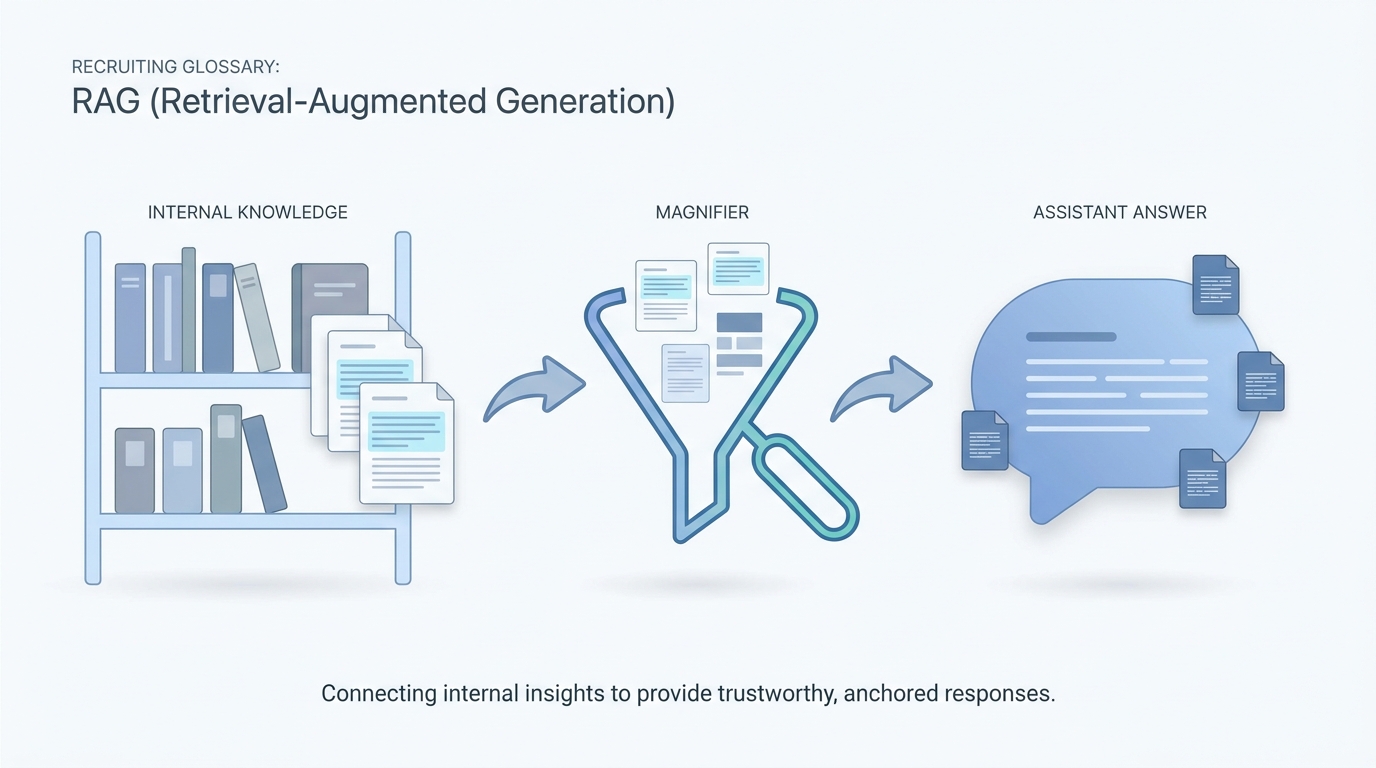

RAG (retrieval-augmented generation)

A pattern where the model answers after retrieving relevant chunks from your documents, CRM notes, or knowledge base, instead of relying only on weights baked in at training time.

Michal Juhas · Last reviewed May 2, 2026

What is RAG (retrieval-augmented generation)?

RAG means the AI pulls short passages from your own files before it writes an answer, instead of guessing from memory alone. You get answers that lean on your playbooks and policies, which is easier to check than a free-form essay.

In practice

- Internal chatbots often say their answers come from your help center or handbook. Behind the scenes that is RAG, the same idea recruiters meet when TA says "only quote our policy PDF." Engineers may shorten it to "it needs retrieval" when answers sound generic.

- When your team uploads approved interview questions into a shared folder and tells the assistant to read there first, that is the spirit of RAG in everyday language, even if nobody says the acronym at kickoff.

- Vendor demos talk about "grounding in your content" when they show a sidebar of source snippets next to the reply. That is the moment recruiters recognize the pattern without caring about embeddings.

Quick read, then how hiring teams use it

This is for recruiters, sourcers, TA, and HR partners who need the same vocabulary in debriefs, vendor calls, and policy reviews. Skim the first section when you need a fast shared picture. Use the second when you are deciding how it shows up in the ATS, sourcing tools, or candidate communications.

Plain-language summary

- What it means for you: RAG means "read our files first, then answer," so the assistant is not guessing from memory alone.

- How you would use it: You put the policy PDF where the bot is told to look, you ask the question, you check the cited snippet.

- How to get started: Upload three short Markdown pages, ask a question, edit one page, then ask the same question again and watch the answer change.

- When it is a good time: When answers sound generic or when legal asks where a sentence came from.
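
The "edit a page, ask again" check can be sketched in a few lines of Python. This is a toy under stated assumptions: the page names, the policy text, and the `retrieve` helper are all hypothetical, and plain word overlap stands in for whatever search your real tool uses. No model is called; the point is only that the cited snippet follows the file.

```python
import re


def words(text):
    """Lowercase word set; a crude stand-in for real search indexing."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question, pages):
    """Return (page_name, text) for the page sharing the most words with the question."""
    q = words(question)
    return max(pages.items(), key=lambda item: len(q & words(item[1])))


# Hypothetical pages; in practice these are your approved Markdown files.
pages = {
    "referrals.md": "Employee referral bonus is 1000 USD, paid after 90 days.",
    "interviews.md": "Every onsite interview loop has four structured interviews.",
    "offers.md": "Offers need approval from the hiring manager and finance.",
}

question = "How much is the referral bonus?"
name_before, snippet_before = retrieve(question, pages)

# An editor updates the policy page; nothing else changes.
pages["referrals.md"] = "Employee referral bonus is 2000 USD, paid after 60 days."
name_after, snippet_after = retrieve(question, pages)

print(name_before, "->", snippet_before)
print(name_after, "->", snippet_after)
```

The same question returns a different snippet after the edit because the answer is read from the file at question time. That is also why a stale page produces a stale answer.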

When you are running live reqs and tools

- What it means for you: Retrieval-augmented generation is retrieve-then-read: split sources into chunks, embed or index them, fetch the top-k matches for each question, pass those to the model as context, and cite them. It is an alternative to giant chat threads and to fine-tuning.

- When it is a good time: When Markdown for AI packs replace "paste the whole drive," and when ownership of stale docs is explicit.

- How to use it: Measure hit rate (how often the right source shows up in the retrieved results) on internal eval questions, monitor embedding drift when vendors change models, and keep human editors.

- How to get started: Read r/Rag "how do I learn" threads, prototype on one handbook, then decide if you need a vector DB.

- What to watch for: Confident citations to outdated comp bands, and "RAG" slides without a deletion policy for old PDFs.
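
For technical partners, the retrieve-then-read steps and the hit-rate check can be sketched as follows. Everything here is an illustrative assumption rather than any vendor's implementation: the document names and text are made up, word-count vectors with cosine similarity stand in for real embeddings, and `build_prompt` only assembles the text you would hand to a model; no model is called.

```python
import math
import re
from collections import Counter


def tokenize(text):
    return re.findall(r"[a-z0-9]+", text.lower())


def chunk(doc_id, text, size=12):
    """Split a document into fixed-size word windows, keeping the source id."""
    tokens = text.split()
    return [
        {"source": doc_id, "text": " ".join(tokens[i:i + size])}
        for i in range(0, len(tokens), size)
    ]


def cosine(a, b):
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def top_k(question, index, k=2):
    """Return the k chunks most similar to the question."""
    q = Counter(tokenize(question))
    return sorted(index, key=lambda c: cosine(q, c["vector"]), reverse=True)[:k]


def build_prompt(question, hits):
    """Condition the model on retrieved chunks, each tagged with its source."""
    context = "\n".join(f"[{h['source']}] {h['text']}" for h in hits)
    return (
        "Answer using only the sources below and cite them.\n"
        f"{context}\n\nQuestion: {question}"
    )


def hit_rate(eval_set, index, k=2):
    """Share of eval questions whose expected source appears in the top k."""
    found = sum(
        any(c["source"] == expected for c in top_k(q, index, k))
        for q, expected in eval_set
    )
    return found / len(eval_set)


# Hypothetical sources standing in for one handbook and one policy page.
docs = {
    "handbook.md": "The probation period is three months for all new hires. "
                   "Managers review progress at the end of each month.",
    "offers.md": "Every offer needs approval from the hiring manager and from "
                 "finance before it is sent to the candidate.",
}

# Chunk, then index: here the "embedding" is just a bag of word counts.
index = [c for doc_id, text in docs.items() for c in chunk(doc_id, text)]
for c in index:
    c["vector"] = Counter(tokenize(c["text"]))

# Hypothetical internal eval questions paired with the source that should be retrieved.
eval_set = [
    ("How long is the probation period?", "handbook.md"),
    ("Who approves an offer?", "offers.md"),
]

print(build_prompt(eval_set[0][0], top_k(eval_set[0][0], index)))
print("hit rate:", hit_rate(eval_set, index))
```

Running the same eval set before and after a vendor changes its embedding model is one simple way to notice drift: if the hit rate drops on unchanged documents, retrieval changed, not your content.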

Where we talk about this

Recruiting and sourcing sessions keep praising Markdown knowledge bases and project folders over one long chat thread. That is RAG culture even before you buy a vector database. We rehearse it in Workshops.

Around the web (opinions and rabbit holes)

Third-party creators move fast. Treat these as starting points, not endorsements, and double-check anything before you wire candidate data.

YouTube

- Introduction to Retrieval Augmented Generation (RAG) (Google Cloud Tech) is a straight definition with diagrams.

- Introduction to Large Language Models (Google Cloud Tech) sets context for why retrieval matters.

- Let's build GPT: from scratch, in code, spelled out (Andrej Karpathy) helps technical partners understand limits of "just prompt harder."

Reddit

- RAG on first read is very interesting. But how do I actually learn the practical details? in r/Rag is the canonical "where next" thread.

- From zero to RAG engineer: 1200 hours of lessons so you ... in r/Rag is a rabbit hole for brave souls.

- Multi source answering, linking to appendix and glossary in r/Rag shows why recruiting rubrics map cleanly to multi-doc retrieval.

Quora

- What is retrieval augmented generation (RAG)? collects mixed-depth answers (read critically).

Long chat versus RAG

| Pattern | Strength | Weakness |
|---|---|---|
| Long thread memory | Convenient | Drift, hard audit |
| RAG from files | Grounded, portable | Needs curation |
| Hybrid | Best of both | More moving parts |

Related on this site

- Blog: What is AI-native work?

- Tools: Claude

- Guides: Talent acquisition managers

- Membership: Become a member