AI-powered recruiting

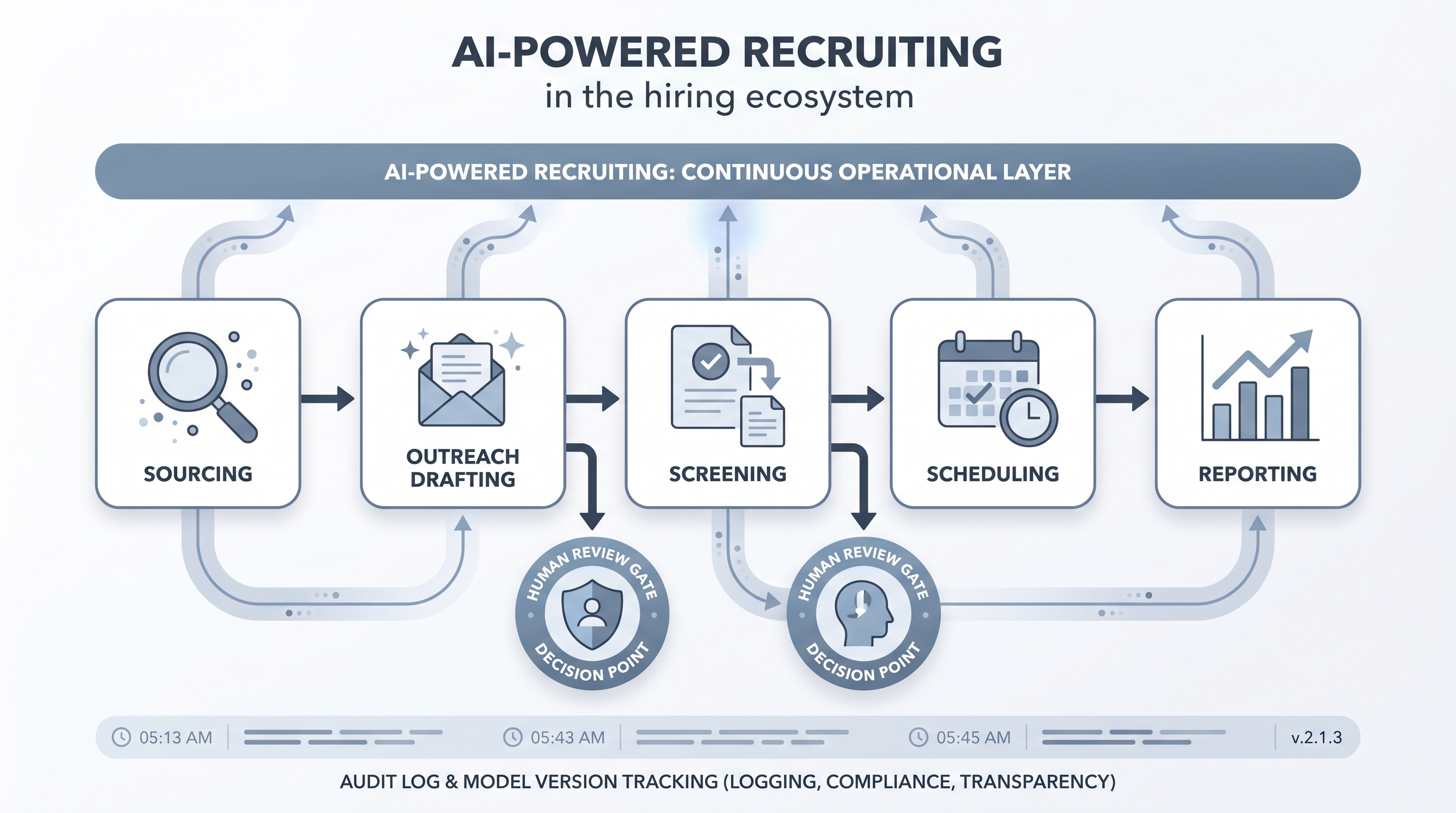

Recruiting operations where AI is built into the core workflow rather than used as an occasional helper: sourcing, screening, outreach, scheduling, and reporting all run through AI-assisted steps with human review gates at decision points.

Michal Juhas · Last reviewed May 4, 2026

What is AI-powered recruiting?

AI-powered recruiting means AI is embedded in the recruiting workflow as infrastructure rather than used occasionally as a helper. Sourcing, screening, outreach, scheduling, and reporting all run through AI-assisted steps, with human review gates at the points where decisions are made. The result is that each stage moves faster and produces structured output without requiring a recruiter to re-enter data between steps.

The term is often used loosely in vendor marketing, where any tool with a language model gets the label. The meaningful definition is systemic: the AI layer is connected, logged, and consistent across every req rather than dependent on an individual recruiter remembering to open a chat window.

In practice

- A sourcing team running an AI-powered function describes their morning routine as: check the overnight sourcing queue (AI ran the search overnight), review 20 flagged profiles (AI ranked by criteria match), approve the outreach batch (AI drafted personalised messages), and check the reply digest (AI summarised responses by sentiment). No manual data entry between steps.

- A TA leader telling the board "we are AI-powered now" after enabling a vendor feature is a red flag in team debriefs. The real question is: can you show the decision log, the bias audit date, and the reviewer name for the last 100 AI-assisted screening outcomes?

- When a recruiting function calls itself AI-powered but outreach drafts still live in individual chat windows and screening notes are still typed manually into the ATS, the actual maturity level is AI-assisted at best. That distinction is useful for realistic road-mapping.

Quick read, then how hiring teams use it

This is for recruiters, sourcers, TA, and HR partners who need the same vocabulary in debriefs, vendor calls, and policy reviews. Skim the first section when you need a fast shared picture. Use the second when you are deciding what infrastructure to build before the label is accurate.

Plain-language summary

- What it means for you: AI handles the data-moving, drafting, and summarising between each hiring stage so you spend your time on judgment calls rather than copy-paste and note-taking.

- How you would use it: Connect your sourcing, outreach, screening, and reporting steps through AI-assisted nodes with review queues at each decision point. Log every AI output to a shared record.

- How to get started: Pick one high-volume role type. Map every manual step between req open and first interview. Instrument the two most repetitive steps with AI assist and a review gate. Measure time savings and rework rate before expanding.

- When it is a good time: When criteria are stable, volume is high, and the team has named owners for prompt maintenance, decision logging, and bias review cadence.
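The "measure time savings and rework rate" step can be sketched in a few lines. This is a minimal illustration, not a prescribed method: the `StepRun` record, the field names, and the definition of rework (a reviewer had to redo or heavily edit the AI output) are assumptions made for the example.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class StepRun:
    """One execution of an instrumented step (hypothetical record shape)."""
    minutes_spent: float  # recruiter time on the step, including review
    reworked: bool        # True if the reviewer had to redo or heavily edit the AI output


def pilot_summary(baseline_minutes: list[float], runs: list[StepRun]) -> dict[str, float]:
    """Compare AI-assisted runs against manual baseline timings for the same step."""
    if not baseline_minutes or not runs:
        raise ValueError("need at least one baseline timing and one pilot run")
    baseline_avg = mean(baseline_minutes)
    if baseline_avg <= 0:
        raise ValueError("baseline timings must be positive")
    pilot_avg = mean(run.minutes_spent for run in runs)
    return {
        "baseline_avg_minutes": baseline_avg,
        "pilot_avg_minutes": pilot_avg,
        "time_saved_pct": 100 * (baseline_avg - pilot_avg) / baseline_avg,
        "rework_rate_pct": 100 * sum(run.reworked for run in runs) / len(runs),
    }


# Illustrative numbers only, not real pilot data.
summary = pilot_summary(
    baseline_minutes=[20, 20],
    runs=[StepRun(10, False), StepRun(10, True), StepRun(10, False), StepRun(10, False)],
)
```

Tracking rework next to time saved matters because a step that is fast but frequently redone has not actually removed work from the team.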

When you are running live reqs and tools

- What it means for you: AI-powered recruiting requires logging infrastructure before it is defensible. Every AI-assisted step needs model version, input, output, reviewer, and date recorded alongside the candidate record so audits have a trail.

- When it is a good time: After the review gate design is agreed across sourcing, screening, and scheduling. It is too early while criteria are still changing or while the ATS lacks fields for AI-output metadata.

- How to use it: Connect AI steps through workflow automation rather than manual copy-paste. Keep review queues sized so humans genuinely review rather than rubber-stamp. Run an AI bias audit after the first 100 AI-assisted screening decisions.

- How to get started: Map one role end to end, instrument two steps, measure for 30 days, and calibrate before expanding. Read AI sourcing tools for recruiters before evaluating additional vendors for connected workflow nodes.

- What to watch for: Vendor labels that call any AI feature "AI-powered," review fatigue turning approval into rubber-stamping, model drift degrading outputs without a visible alert, and connected workflows moving candidate PII between systems without documented data processing agreements (DPAs).
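The logging requirement above (model version, input, output, reviewer, and date stored alongside the candidate record) can be expressed as a small record type. This is a sketch under stated assumptions: the class name `AIStepLog`, the field names, and the JSON serialisation are hypothetical, and a real ATS would need matching custom fields or a side table.

```python
import json
from dataclasses import asdict, dataclass
from datetime import date


@dataclass(frozen=True)
class AIStepLog:
    """One AI-assisted step, stored next to the candidate record (hypothetical schema)."""
    candidate_id: str
    step: str            # e.g. "screening" or "outreach_draft"
    model_version: str
    input_text: str
    output_text: str
    reviewer: str        # the named human who approved or rejected the output
    reviewed_on: date

    def __post_init__(self) -> None:
        # Refuse to create a log entry with blanks, so gaps surface at write time
        # rather than during an audit.
        missing = [name for name, value in asdict(self).items() if value in ("", None)]
        if missing:
            raise ValueError(f"incomplete AI step log, missing: {', '.join(missing)}")

    def to_json(self) -> str:
        """Serialise to a JSON string with the date in ISO format."""
        row = asdict(self)
        row["reviewed_on"] = self.reviewed_on.isoformat()
        return json.dumps(row, sort_keys=True)


# Illustrative values only.
entry = AIStepLog(
    candidate_id="cand-001",
    step="screening",
    model_version="example-model-v1",
    input_text="CV text and role criteria",
    output_text="Meets 4 of 5 criteria",
    reviewer="J. Doe",
    reviewed_on=date(2026, 5, 4),
)
```

Rejecting incomplete entries at creation is a design choice for the sketch: it makes a missing reviewer name an immediate error instead of a hole discovered later in the audit trail.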

Where we talk about this

On AI with Michal live sessions, sourcing automation and AI in recruiting workshops both address what it takes to move from AI-assisted to AI-powered at a team level: connected steps, shared logging, and governance that scales. If you want a live room conversation on road-mapping rather than a static page, join Workshops and bring your current process map.

Around the web (opinions and rabbit holes)

Third-party creators move fast here. Treat these as starting points, not endorsements, and verify compliance postures directly before wiring candidate data to any connected workflow.

YouTube

- Building an AI Recruiting Workflow End to End shows the connected-step architecture that distinguishes AI-powered from AI-assisted recruiting.

- n8n for HR Automation walks through the workflow automation layer that connects AI steps without manual re-entry between sourcing, screening, and scheduling tools.

- Introduction to Generative AI (Google Cloud Tech) gives the language-model foundation useful before evaluating any AI recruiting vendor claim about what their product actually does.

Reddit

- What does your AI recruiting stack actually look like? in r/recruiting is a candid comparison of what teams are running versus what vendors claim is possible.

- AI tools for recruiting: honest review after six months in r/recruiting covers the failure modes that appear after the pilot phase ends and volume scales.

- How do you audit AI-assisted hiring decisions? in r/Recruitment covers the compliance and documentation layer that most AI-powered recruiting implementations skip until a complaint arrives.

Quora

- What does AI-powered recruiting actually mean? collects practitioner and vendor perspectives on the difference between marketing language and operational reality.

AI-assisted versus AI-powered

| Characteristic | AI-assisted | AI-powered |
|---|---|---|
| Trigger | Recruiter remembers to prompt | Workflow trigger runs automatically |
| Logging | Personal chat window | Shared record with model version and reviewer |
| Coverage | Varies by individual | Consistent across every req |
| Auditability | Low | High |
| Bias review | Ad hoc | Scheduled cadence |
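The "Bias review" row implies a repeatable check that can run on a schedule. This page does not prescribe a method, so the sketch below assumes one common first-pass heuristic, the four-fifths rule: compare each group's selection rate with the highest group's rate and flag ratios under 0.8. The function names and group labels are hypothetical, and a flag is a prompt for closer review, not a legal conclusion.

```python
from collections import Counter
from typing import Iterable


def selection_rates(outcomes: Iterable[tuple[str, bool]]) -> dict[str, float]:
    """outcomes: (group, advanced) pairs, one per AI-assisted screening decision."""
    totals: Counter[str] = Counter()
    advanced: Counter[str] = Counter()
    for group, passed in outcomes:
        totals[group] += 1
        if passed:
            advanced[group] += 1
    return {group: advanced[group] / totals[group] for group in totals}


def impact_ratios(rates: dict[str, float]) -> dict[str, float]:
    """Each group's selection rate divided by the highest group's rate."""
    top = max(rates.values())
    if top == 0:
        raise ValueError("no group has any advanced candidates")
    return {group: rate / top for group, rate in rates.items()}


def flagged_groups(outcomes: Iterable[tuple[str, bool]], threshold: float = 0.8) -> list[str]:
    """Groups whose impact ratio falls below the threshold (0.8 = four-fifths)."""
    ratios = impact_ratios(selection_rates(outcomes))
    return sorted(group for group, ratio in ratios.items() if ratio < threshold)


# Illustrative data only: group A advances 10 of 20, group B advances 3 of 10.
sample = [("A", True)] * 10 + [("A", False)] * 10 + [("B", True)] * 3 + [("B", False)] * 7
flags = flagged_groups(sample)
```

With small samples the ratio is noisy, so a scheduled cadence should rerun the check as decisions accumulate rather than rely on one early reading.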

Related on this site

- Glossary: AI in recruiting, Workflow automation, AI bias audit, Human-in-the-loop, Scorecard, AI adoption ladder, Semantic search

- Blog: AI sourcing tools for recruiters

- Guides: Sourcers

- Courses: Starting with AI: the foundations in recruiting

- Live cohort: Workshops

- Membership: Become a member