Candidate ghosting metrics

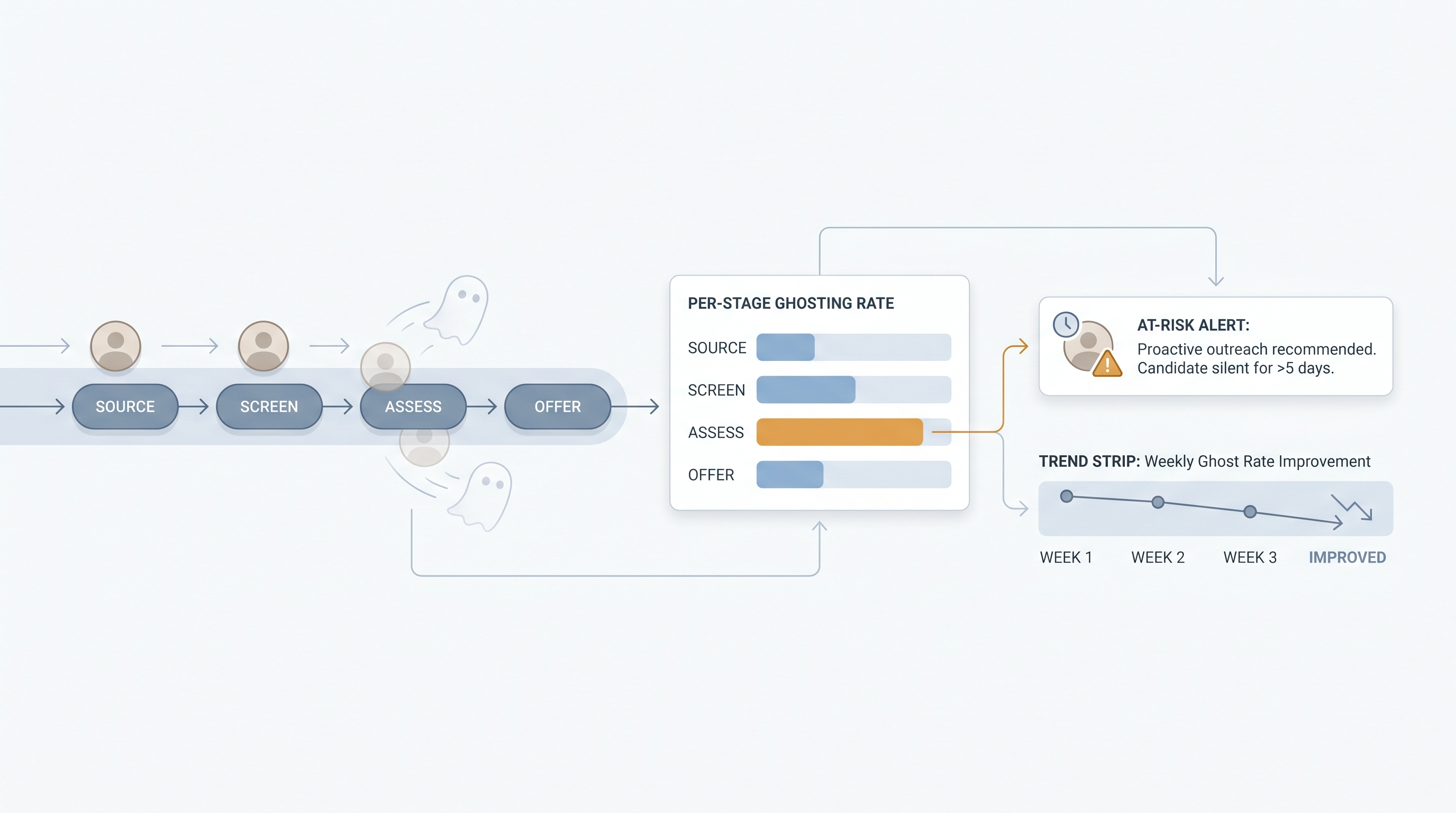

Candidate ghosting metrics track the rate, stage, and pattern of candidates who stop responding during the hiring process without formally withdrawing, helping TA teams identify where pipeline integrity breaks down and what conditions predict disengagement.

Michal Juhas · Last reviewed May 9, 2026

What are candidate ghosting metrics?

Candidate ghosting metrics measure the rate and pattern of candidates who stop responding during a hiring process without formally withdrawing. Instead of a vague sense that candidates are disappearing, a ghost rate by stage gives TA teams a number to investigate and a named owner to hold accountable.

The basic calculation is simple: what percentage of candidates in a given stage never reply within a defined window? The harder work is agreeing on the window, cleaning the disposition codes in the ATS, and separating true ghosting from legitimate slow movers who are still considering. Once that hygiene is in place, a ghost rate becomes a diagnostic tool: high early-stage ghosting points to slow first contact; high assessment-stage ghosting points to task friction; high offer-stage ghosting points to speed or experience problems in the preceding steps.
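
To make the calculation concrete, here is a minimal Python sketch of a ghost rate by stage. It is an illustration under stated assumptions, not a reference implementation: the `StageEntry` fields are a hypothetical export shape rather than any particular ATS schema, the window is counted in Monday-to-Friday days with no holiday calendar, a reply that arrives after the window still counts as ghosted, and candidates whose window is still open are left out of the rate so slow movers are not counted early.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Dict, Iterable, Optional


@dataclass
class StageEntry:
    """One candidate's record in one stage (hypothetical export shape)."""
    candidate_id: str
    stage: str
    last_outreach: date          # date of the recruiter's last message
    replied_on: Optional[date]   # None if no reply has been logged
    withdrew: bool = False       # True if the candidate formally withdrew


def add_business_days(start: date, days: int) -> date:
    """Return the date that falls `days` Monday-to-Friday days after `start`."""
    current = start
    remaining = days
    while remaining > 0:
        current += timedelta(days=1)
        if current.weekday() < 5:  # 0-4 are Monday to Friday
            remaining -= 1
    return current


def ghost_rate_by_stage(
    entries: Iterable[StageEntry], today: date, window_days: int = 5
) -> Dict[str, float]:
    """Share of candidates per stage who did not reply within the window."""
    ghosted: Dict[str, int] = {}
    counted: Dict[str, int] = {}
    for e in entries:
        deadline = add_business_days(e.last_outreach, window_days)
        replied_in_window = e.replied_on is not None and e.replied_on <= deadline
        silent = not e.withdrew and not replied_in_window
        if silent and today <= deadline:
            continue  # window still open: a slow mover, not yet a ghost
        counted[e.stage] = counted.get(e.stage, 0) + 1
        if silent:
            ghosted[e.stage] = ghosted.get(e.stage, 0) + 1
    return {stage: ghosted.get(stage, 0) / n for stage, n in counted.items()}


# Illustrative data only; these are made-up candidates, not real results.
sample = [
    StageEntry("c1", "screen", date(2026, 4, 20), None),
    StageEntry("c2", "screen", date(2026, 4, 20), date(2026, 4, 22)),
    StageEntry("c3", "screen", date(2026, 5, 6), None),  # window still open
    StageEntry("c4", "assessment", date(2026, 4, 20), None),
]
print(ghost_rate_by_stage(sample, today=date(2026, 5, 8)))
```

Formal withdrawals stay in the denominator but never count as ghosts, which keeps the rate focused on silence rather than on every exit.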

Teams that track ghosting by stage often find that a single stage accounts for most of the problem. Fixing that one stage can move the overall ghost rate more than any sourcing change.

In practice

- A TA lead pulls a three-month ATS export, filters for candidates who were advanced to phone screen but never booked a slot, and finds a 42 percent ghosting rate at that stage. On investigation, the median time from application to first-contact email is six days. The fix is a 24-hour first-contact SLA, not a new sourcing tool.

- Vendors label the same pattern differently: pipeline dropout, candidate disengagement, no-show rate, silent withdrawal. Whatever the label, the underlying question is the same: at what stage, and how often?

- A recruiter managing a high-volume customer service req sets a five-business-day ghost threshold and codes every expired candidate consistently. After eight weeks she has enough data to show that Monday morning screen invites ghost at twice the rate of Thursday invites.
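
The weekday comparison in the last example can be sketched in a few lines of Python. This assumes each invite has already been coded as ghosted or not under a consistent threshold; the `(invite_date, ghosted)` pair format is a hypothetical shape chosen for illustration.

```python
from collections import defaultdict
from datetime import date
from typing import Dict, Iterable, Tuple

WEEKDAY_NAMES = [
    "Monday", "Tuesday", "Wednesday", "Thursday",
    "Friday", "Saturday", "Sunday",
]


def ghost_rate_by_invite_weekday(
    invites: Iterable[Tuple[date, bool]]
) -> Dict[str, float]:
    """Group invites by the weekday they were sent and return the ghost rate.

    Each item is (invite_date, ghosted), where `ghosted` is True when the
    candidate never replied within the team's agreed threshold.
    """
    sent = defaultdict(int)
    ghosts = defaultdict(int)
    for invite_date, ghosted in invites:
        day = WEEKDAY_NAMES[invite_date.weekday()]  # weekday(): Monday is 0
        sent[day] += 1
        if ghosted:
            ghosts[day] += 1
    return {day: ghosts[day] / sent[day] for day in sent}
```

With only a handful of invites per weekday, a gap between two days can be noise, so it is worth checking the counts behind each rate before changing the invite schedule.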

Quick read, then how hiring teams use it

This is for recruiters, sourcers, TA leads, and HR business partners who need shared vocabulary for pipeline reviews, hiring manager syncs, and vendor conversations. Skim the first section for a fast shared picture. Use the second when you are setting up tracking, pulling reports, or deciding what to fix first.

Plain-language summary

- What it means for you: Ghosting metrics turn "candidates just disappear" from a feeling into a number attached to a specific stage, so the conversation with a hiring manager can start from data instead of a shrug.

- How you would use it: Pick the stage where you feel the most silent drop-off. Count how many candidates you chased twice with no reply over the last 90 days. Divide by how many entered that stage. That's your starting ghost rate.

- How to get started: Clean your ATS disposition codes first. If "ghosted" is not a disposition option, add it. Without consistent codes, your ghost rate mixes in pipeline lag and becomes misleading.

- When it is a good time: When time-to-fill is climbing and the team cannot agree on where the pipeline is breaking. Ghosting by stage replaces anecdote with data.

When you are running live reqs and tools

- What it means for you: A ghost rate is only as reliable as your ATS hygiene. Candidates left in active states because recruiters batch-update on Fridays or delay logging rejections inflate the rate artificially. Audit stage movement frequency before trusting the numbers in a leadership report.

- When it is a good time: After at least 60 days of clean disposition coding, and once every stage has at least one named owner. Setting targets before owners are agreed creates a metric nobody acts on.

- How to use it: Connect ATS stage timestamps and disposition exports to a weekly summary. Cross-reference with sourcing funnel metrics to separate ghosting from explicit drop-off, and with time in stage reporting to see whether slow stages correlate with higher ghost rates.

- How to get started: Start with two stages: recruiter outreach response and post-screen follow-up. Set a five-business-day ghost threshold. Track for four weeks before expanding. Compare ghost rates by source channel to identify which channels deliver candidates who engage versus those who disappear.

- What to watch for: International candidates who move more slowly for legitimate reasons, passive candidates who need more time to consider, and roles where the screening task is long enough to warrant a 10-day window. A single ghost threshold applied uniformly across all role types produces misleading comparisons.
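
Two of the points above, comparing ghost rates by source channel and avoiding one uniform threshold, can be combined in a short Python sketch. Everything named here is an assumption made for illustration: the row keys (`channel`, `role_type`, `days_silent`), the role-type label `long_assessment`, and the choice to leave candidates who are still inside their window out of the rate.

```python
from collections import defaultdict
from typing import Dict, Iterable, Mapping, Optional

DEFAULT_WINDOW_DAYS = 5
# Hypothetical role-type labels; a longer window for long screening tasks.
WINDOW_DAYS_BY_ROLE_TYPE = {"long_assessment": 10}


def window_for(role_type: str) -> int:
    """Return the ghost threshold for a role type, in the team's chosen day unit."""
    return WINDOW_DAYS_BY_ROLE_TYPE.get(role_type, DEFAULT_WINDOW_DAYS)


def ghost_rate_by_channel(rows: Iterable[Mapping[str, object]]) -> Dict[str, float]:
    """Ghost rate per source channel using role-specific windows.

    Each row needs 'channel', 'role_type', and 'days_silent', where
    'days_silent' is None if the candidate replied, else the number of
    days since the last outreach with no reply.
    """
    counted = defaultdict(int)
    ghosts = defaultdict(int)
    for row in rows:
        days_silent: Optional[int] = row["days_silent"]  # type: ignore[assignment]
        channel = str(row["channel"])
        if days_silent is None:
            counted[channel] += 1          # replied: counted, not a ghost
        elif days_silent > window_for(str(row["role_type"])):
            counted[channel] += 1          # silent past the window: a ghost
            ghosts[channel] += 1
        # else: silent but still inside the window, so not counted yet
    return {channel: ghosts[channel] / n for channel, n in counted.items()}
```

Keeping the thresholds in one small mapping makes it easier to review them with stage owners and to see at a glance which role types use a non-default window.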

Where we talk about this

On AI with Michal live sessions, candidate ghosting comes up in both the AI in recruiting and sourcing automation tracks. Sourcing automation sessions cover how to wire ATS disposition exports into a weekly ghost rate summary; AI in recruiting sessions connect ghosting patterns to hiring manager communication cadence and candidate experience decisions. If you want the full room discussion on what ghost rates actually tell you versus what teams assume they mean, start at Workshops and bring your current ATS reporting setup.

Around the web (opinions and rabbit holes)

Third-party resources move quickly. Treat these as starting points, not endorsements, and double-check anything before wiring candidate data into a new tool.

YouTube

- Why Candidates Ghost Recruiters (and What To Do About It) covers practitioner perspectives on what drives ghosting across hiring stages.

- How to Build a Recruiting Pipeline Dashboard walks through lightweight approaches to turning ATS exports into actionable stage reports.

- Candidate Experience and Ghosting: What the Data Shows surfaces research-grounded views on the link between process speed and ghosting rates.

Reddit

- Candidates ghosting after phone screens in r/recruiting collects honest recruiter accounts of where ghosting hits hardest and what has actually helped.

- How do you track candidate ghosting in your ATS? surfaces real configurations teams use with Greenhouse, Lever, and Workday.

- Is ghosting getting worse? Data and discussion in r/humanresources captures the HR ops perspective on whether ghosting is increasing and what the root causes look like.

Quora

- Why do job candidates ghost recruiters? collects practitioner and candidate-side answers on motivation and what changes the outcome.

Ghosting metrics versus related pipeline measures

| Metric | What it tracks | Limitation |
|---|---|---|
| Ghosting rate by stage | Candidates who go silent without withdrawing | Requires consistent ATS disposition codes to be accurate |
| Sourcing funnel metrics | Stage-to-stage conversion including all exits | Does not distinguish ghosting from explicit declines or rejections |
| Offer decline analysis | Candidates who explicitly say no at offer | Misses the silent exits before the offer stage |

Related on this site

- Glossary: Talent acquisition metrics, Time in stage reporting, Sourcing funnel metrics

- Glossary: Offer decline analysis, Hiring funnel conversion rates, Pipeline coverage reporting

- Glossary: Recruiting stage SLA metrics, Funnel velocity recruiting, Workflow automation

- Blog: AI sourcing tools for recruiters

- Workshops: AI in recruiting

- Course: Starting with AI: the foundations in recruiting

- Membership: Become a member