Claude in recruiting

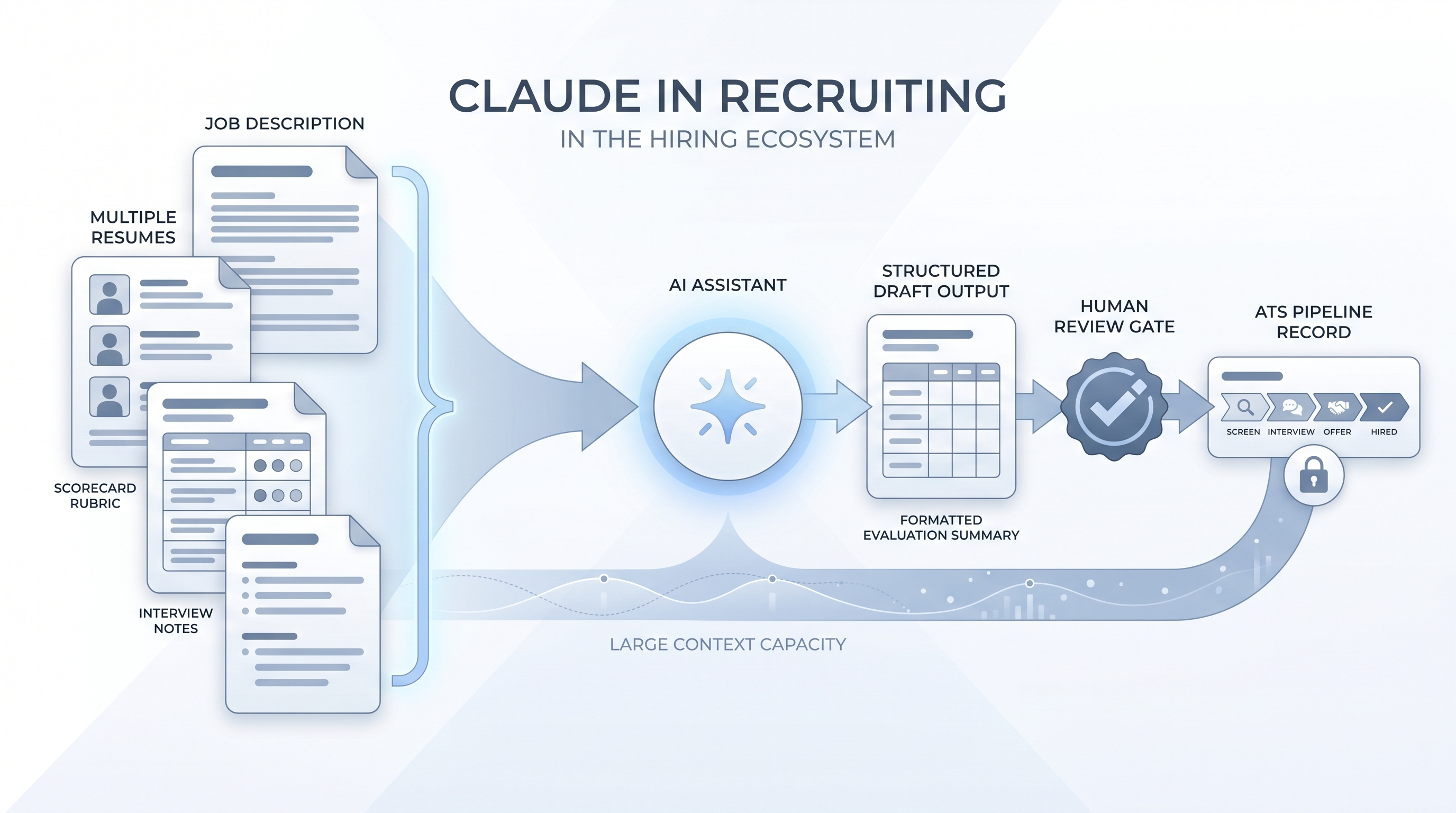

Using Anthropic's Claude to handle text-heavy recruiting work: drafting job descriptions from intake notes, writing personalised outreach, summarising interviews, and processing long document stacks (full interview packets, policy documents, multi-candidate batches) that exceed the context limits of most chat tools.

Michal Juhas · Last reviewed May 5, 2026

What is Claude in recruiting?

Claude is Anthropic's AI assistant, available through Claude.ai and Anthropic's API. Here, "Claude in recruiting" means using Claude directly, through the chat interface or via connected tools, for the text-heavy production tasks that surround every req: drafting job descriptions and outreach, summarising interviews, and analysing large batches of candidate documents that would exceed the context limits of most other tools.

The term sits within the broader category of AI for recruiters but is specific to Claude's interface and to where it differs from alternatives. The long context window (up to 200,000 tokens in recent versions) is the practical reason teams cite most often: it lets a single prompt hold an entire interview packet at once.
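To make the 200,000-token figure concrete, here is a minimal sketch that estimates whether a packet fits in one prompt. It assumes a rule of thumb of roughly four characters per English token and reserves some room for the reply. Both numbers and all function names are illustrative assumptions; real counts depend on the model's tokenizer.

```python
CONTEXT_LIMIT_TOKENS = 200_000  # figure quoted in the text above
CHARS_PER_TOKEN = 4             # assumption: rough heuristic for English prose
REPLY_RESERVE_TOKENS = 4_000    # assumption: room left for the model's answer


def estimate_tokens(text: str) -> int:
    """Rough estimate: character count divided by CHARS_PER_TOKEN, rounded up."""
    return -(-len(text) // CHARS_PER_TOKEN)


def packet_fits(documents: dict[str, str]) -> tuple[bool, int]:
    """Return (fits, estimated_total_tokens) for a set of named documents."""
    total = sum(estimate_tokens(body) for body in documents.values())
    return total + REPLY_RESERVE_TOKENS <= CONTEXT_LIMIT_TOKENS, total


if __name__ == "__main__":
    # Placeholder text standing in for real documents.
    packet = {
        "job_description": "word " * 600,
        "resume": "word " * 900,
        "hiring_manager_brief": "word " * 400,
        "scorecard": "word " * 200,
    }
    fits, total = packet_fits(packet)
    print(f"Estimated tokens: {total}, fits in one prompt: {fits}")
```

A single interview packet is usually far below the limit; the estimate matters more for multi-candidate batches and long policy documents.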

In practice

- A TA coordinator pastes a full interview packet (job description, two-page resume, hiring manager brief, and a four-criteria scorecard) into one Claude prompt and asks for a draft evaluation summary by competency. The output takes 30 seconds; the panelist edits it before logging to the ATS.

- A sourcer describes a niche engineering role in plain language and asks Claude to generate five LinkedIn Boolean strings and five Google X-Ray strings in one pass. Claude returns all ten with explanations, and the sourcer removes false-positive synonyms before running them.

- A recruiter who says "we use Claude for Work so our candidate data doesn't train the model" is explaining the enterprise-tier data processing agreement (DPA) distinction to a hiring manager asking about privacy.
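For the sourcer example above, it helps to know what a well-formed string looks like before reviewing what Claude returns. The sketch below builds one LinkedIn-style Boolean string and one Google X-Ray string from lists of titles, skills, and exclusions. The function names and sample terms are hypothetical, and operator support varies by platform, so treat the output format as an assumption to verify in the tool you use.

```python
def or_group(terms: list[str]) -> str:
    """Quote each term and join the terms with OR inside parentheses."""
    return "(" + " OR ".join(f'"{term}"' for term in terms) + ")"


def linkedin_boolean(titles: list[str], skills: list[str], exclude: list[str]) -> str:
    """Boolean string using AND / OR / NOT operators."""
    parts = [or_group(titles), "AND", or_group(skills)]
    for term in exclude:
        parts += ["NOT", f'"{term}"']
    return " ".join(parts)


def google_xray(titles: list[str], skills: list[str], exclude: list[str]) -> str:
    """X-Ray string: a site: filter, implicit AND between groups, minus for exclusions."""
    parts = ["site:linkedin.com/in", or_group(titles), or_group(skills)]
    parts += [f'-"{term}"' for term in exclude]
    return " ".join(parts)


if __name__ == "__main__":
    titles = ["embedded engineer", "firmware engineer"]
    skills = ["RTOS", "Zephyr"]
    exclude = ["recruiter"]
    print(linkedin_boolean(titles, skills, exclude))
    print(google_xray(titles, skills, exclude))
```

Removing a false-positive synonym then means deleting one entry from `titles` or `skills` and rebuilding the string, which is easier to review than editing nested parentheses by hand.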

Quick read, then how hiring teams use it

This is for recruiters, sourcers, TA, and HR partners who need the same vocabulary in debriefs, vendor calls, and policy reviews. Skim the first section when you need a fast shared picture. Use the second when you are deciding how Claude fits your daily workflow, your ATS, or your sourcing stack.

Plain-language summary

- What it means for you: Claude is a chat interface where you describe a task in plain language and it produces a useful first draft, whether that is a job description, a cold outreach message, or a call summary. You edit the draft; you do not send it as-is.

- How you would use it: Open a chat, paste your intake notes or a candidate profile, write a short prompt describing what you want, and read the output critically. Edit, shorten, and check for invented details before the text touches any system or any person.

- How to get started: Pick one task where you spend at least 30 minutes a week on manual writing. Write a prompt for it, run it alongside your normal process for two weeks, and note where the output saves time and where it needs correction. Start there before trying to automate anything.

- When it is a good time: When you have a stable task, a repeatable prompt, and enough time to review the output before it goes anywhere. Not when the process changes weekly or when the output would reach a candidate without a review step.
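The "check for invented details" step above can be partly supported by a simple script. The sketch below flags numbers and capitalised words in a draft that never appear in the source notes. It is a crude heuristic under stated assumptions (plain word matching, first word of each sentence ignored), it will over-flag and under-flag, and it does not replace reading the draft. The function name and sample texts are hypothetical.

```python
import re


def unsupported_details(draft: str, source: str) -> list[str]:
    """Return numbers and capitalised words found in the draft but not in the source."""
    source_tokens = {t.lower() for t in re.findall(r"[A-Za-z0-9]+", source)}
    flagged: list[str] = []
    # Split into sentences so the capitalised first word of each can be skipped.
    for sentence in re.split(r"(?<=[.!?])\s+", draft.strip()):
        tokens = re.findall(r"[A-Za-z0-9]+", sentence)
        for position, token in enumerate(tokens):
            is_number = token.isdigit()
            is_name = position > 0 and token[0].isupper()
            if (is_number or is_name) and token.lower() not in source_tokens:
                if token not in flagged:
                    flagged.append(token)
    return flagged


if __name__ == "__main__":
    notes = "Intake notes: Senior data engineer at Acme, Berlin office, team of 6, reports to Dana."
    draft = (
        "We are hiring a Senior Data Engineer in Berlin. "
        "You will join a team of 8 at Acme, founded in 2011, reporting to Dana."
    )
    print(unsupported_details(draft, notes))  # prints ['8', '2011']
```

Anything the script flags is a prompt to look back at the intake notes, not proof of an error.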

When you are running live reqs and tools

- What it means for you: Claude is a drafting layer you bring to every req. Every output lands in your clipboard first, which means every output gets a human-in-the-loop review before it moves anywhere.

- When it is a good time: After you have written two or three stable prompts for a given task and can identify a poor draft in under a minute. Before that point, the editing overhead can exceed the time saved.

- How to use it: Open each session with a message that works like system instructions: your company name, the role, tone expectations, and any must-avoid phrases. Paste in the minimum data needed (role brief, candidate summary, intake notes) and ask for a specific output format. Log which model version produced each output so you can revisit prompts after an Anthropic update changes behaviour.

- How to get started: Move one prompt to Claude for Work or Enterprise if your team processes any candidate personal data. Create a shared folder of approved prompt templates so output quality is consistent across the team, not dependent on who drafted the prompt. Review the AI outreach drafting entry for the outreach pattern specifically.

- What to watch for: Hallucinations on company names, dates, and titles when you ask Claude to research rather than draft. GDPR risk if personal candidate data enters a consumer-tier account. Model drift when Anthropic updates the underlying model and previously reliable prompts start producing different-quality output.
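The session-opening message and the model-version log described above can be kept consistent with a small helper. This is a minimal sketch, not a prescribed format: the wording of the opener, the field names, and the placeholder model label are all assumptions, and the log deliberately stores no candidate personal data.

```python
import json
from datetime import datetime, timezone
from typing import Optional


def build_session_opener(company: str, role: str, tone: str, avoid_phrases: list[str]) -> str:
    """Compose the opening message that sets context for a drafting session."""
    avoid = "; ".join(avoid_phrases) if avoid_phrases else "none"
    return (
        f"You are helping a recruiter at {company} with the {role} role.\n"
        f"Tone: {tone}.\n"
        f"Never use these phrases: {avoid}.\n"
        "Return a draft only; a human will review it before use."
    )


def log_entry(task: str, model_version: str, prompt_template: str,
              when: Optional[datetime] = None) -> str:
    """Return one JSON line recording which model and template produced an output."""
    when = when or datetime.now(timezone.utc)
    return json.dumps({
        "timestamp": when.isoformat(),
        "task": task,
        "model_version": model_version,      # copy the label shown in your account
        "prompt_template": prompt_template,  # name in the shared template folder
    })


if __name__ == "__main__":
    print(build_session_opener("Acme", "Data Engineer", "warm and direct", ["rockstar", "ninja"]))
    # "example-model" is a placeholder, not a real model name.
    print(log_entry("outreach draft", "example-model", "outreach-v3"))
```

Appending each JSON line to a shared file gives the team a basic record to compare against when a previously reliable prompt starts producing different output.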

Where we talk about this

On AI with Michal live sessions, Claude comes up as part of the model comparison conversation: which tool for which task, and why the data handling tier matters before any candidate document leaves your clipboard. The AI in recruiting track covers prompting patterns and review habits, while the sourcing automation track moves toward embedding stable prompts in light automations. If you want the full room conversation with a practitioner cohort, start at Workshops and bring a prompt you are already using so feedback is grounded in real output, not theory.

Around the web (opinions and rabbit holes)

Third-party creators move fast on this topic. Treat these as starting points, not endorsements, and double-check anything before you wire candidate data through a workflow you found in a tutorial.

YouTube

- "Claude AI recruiting prompts" for practitioner walkthroughs of prompt-to-draft flows and before-and-after comparisons of output quality across AI tools

- "Claude AI long document analysis HR" for extended context use cases, including multi-resume batches and interview packet analysis in hiring teams

- "Anthropic Claude GDPR data privacy recruiting" for compliance-focused discussions on data handling tiers and what Claude for Work actually changes for HR teams

Reddit

- "r/recruiting: Claude AI" surfaces candid practitioner feedback on what works, what produces generic output, and where human editing still matters most

- "r/humanresources: Claude Anthropic" covers the compliance and data handling side, including threads on enterprise tiers and GDPR obligations for HR use cases

- "r/RecruitmentAgencies: AI drafting tools" for agency-side views on volume, personalisation limits, and client expectations when AI drafting is part of the delivery model

Quora

- "How can Claude AI be used in recruiting?" collects practitioner answers from sourcers and TA leaders (read critically; quality varies and not all contributors have deep recruiting backgrounds)

Claude versus ChatGPT for recruiting

| Dimension | Claude | ChatGPT |
|---|---|---|
| Context window | Up to 200K tokens (full interview packets in one prompt) | Varies by tier; typically shorter per session |
| Safety tuning | Constitutional AI; tends to decline harmful instructions | RLHF; similar guardrails, different edge cases |
| Enterprise tier | Claude for Work (Teams / Enterprise) with DPA | ChatGPT Teams / Enterprise with DPA |
| ATS integration | Manual copy-paste; no native ATS connector | Manual copy-paste; no native ATS connector |
| Audit trail | None by default; your team must create one | None by default; your team must create one |
| Best fit | Large multi-document tasks; policy review; long-form analysis | Fast iteration on shorter prompts; broad awareness in teams |

Related on this site

- Glossary: AI for recruiters, ChatGPT for recruiters, Large language model, Hallucination, Human-in-the-loop, System instructions, AI outreach drafting, Scorecard, AI in recruiting

- Blog: AI sourcing tools for recruiters

- Live cohort: Workshops

- Course: Starting with AI: the foundations in recruiting

- Membership: Become a member