Pre-employment skills assessment

A structured evaluation of job-relevant skills administered before a hiring decision, using work samples, technical exercises, or practical simulations to measure what a candidate can actually do rather than inferring ability from a CV or personality survey.

Michal Juhas · Last reviewed May 15, 2026

What is a pre-employment skills assessment?

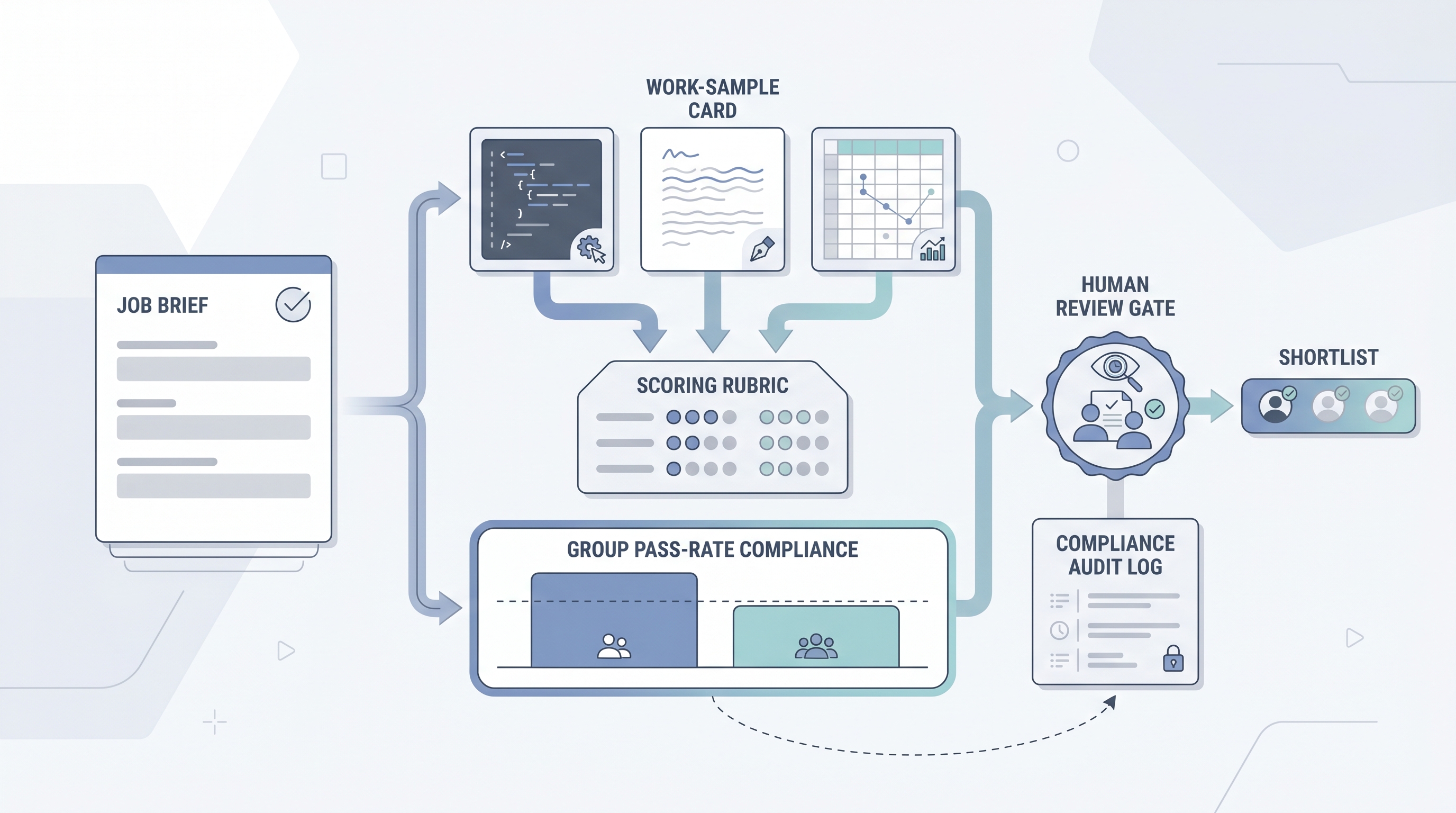

A pre-employment skills assessment asks candidates to complete a job-relevant task before a hiring decision, rather than relying on CVs, interviews, or proxy measures alone. Unlike cognitive ability tests or personality questionnaires, a skills assessment measures output directly: can this person write a clear email, fix a broken query, structure a client debrief, or prioritize a workload with competing deadlines? The evaluation instrument is the task itself, and the validity evidence is the match between that task and real job duties.

The practical distinction matters at three points. First, the job analysis must precede the task design. Teams that reach for vendor libraries without first mapping what the role actually demands end up with instruments that measure something, just not what they intended. Second, uniform administration is a legal requirement: every candidate must receive identical time limits, instructions, and platform conditions, because any difference in administration introduces confounding variance into the scores. Third, adverse impact monitoring applies regardless of face validity. Work samples that look job-relevant can still produce group pass-rate disparities that fail the four-fifths rule (one group's pass rate below 80 percent of the highest group's rate), and a platform that cannot generate cohort-level group pass-rate reports is not operationally ready for compliance review.
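The four-fifths check itself is simple arithmetic: divide each group's pass rate by the highest group's pass rate and flag any ratio below 0.8. A minimal Python sketch, assuming pass and assessed counts per group have already been exported from the platform; the function names and group labels are hypothetical, and a flag is a prompt for review with a compliance partner, not a legal finding.

```python
from collections.abc import Mapping

FOUR_FIFTHS = 0.8  # threshold from the four-fifths rule


def impact_ratios(passed: Mapping[str, int], assessed: Mapping[str, int]) -> dict[str, float]:
    """Each group's pass rate divided by the highest group pass rate."""
    rates: dict[str, float] = {}
    for group, total in assessed.items():
        if total <= 0:
            raise ValueError(f"group {group!r} has no assessed candidates")
        rates[group] = passed.get(group, 0) / total
    highest = max(rates.values())
    if highest == 0:
        raise ValueError("no group has any passes, so impact ratios are undefined")
    return {group: rate / highest for group, rate in rates.items()}


def four_fifths_flags(
    passed: Mapping[str, int],
    assessed: Mapping[str, int],
    threshold: float = FOUR_FIFTHS,
) -> list[str]:
    """Groups whose impact ratio falls below the threshold, sorted by name."""
    ratios = impact_ratios(passed, assessed)
    return sorted(group for group, ratio in ratios.items() if ratio < threshold)


# Hypothetical cohort: group_a passes 30 of 50 (60%), group_b passes 18 of 40 (45%).
# 0.45 / 0.60 = 0.75, which is below 0.8, so group_b is flagged.
print(four_fifths_flags({"group_a": 30, "group_b": 18}, {"group_a": 50, "group_b": 40}))
# → ['group_b']
```

With small cohorts a single candidate can swing the ratio, which is one reason the check is paired with a minimum cohort size later on this page.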

In practice

- A TA ops team piloting a writing assessment for a customer success role discovers that 40 percent of candidates abandon before completing. The time limit is 45 minutes, but typical completions take 65. They cut the prompt scope, re-pilot on a closed req, and bring the completion rate above 80 percent before using the assessment on live candidates.

- An engineering hiring manager reviews the company's technical screen and realizes the coding problem requires a library that candidates outside large-company engineering teams rarely encounter. The task measures tool familiarity, not problem-solving skill. It is replaced with a logic problem drawn from an actual product bug the team debugged last quarter.

- An HRBP running a quarterly compliance review asks the assessment vendor for group pass-rate data by gender for the previous three cohorts. The vendor produces the report within one business day. The four-fifths check passes, and the HRBP has a documented record before the department head meeting.
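The first scenario turns on two numbers a pilot can produce: the completion rate, and how many pilot completion times fit inside the time limit. A minimal Python sketch, assuming untimed pilot completion times in minutes are available; the sample times and function names are hypothetical.

```python
import math


def completion_rate(started: int, completed: int) -> float:
    """Share of candidates who started the assessment and finished it."""
    if started <= 0:
        raise ValueError("started must be positive")
    return completed / started


def share_within_limit(minutes: list[float], limit: float) -> float:
    """Share of pilot completion times at or under the time limit."""
    if not minutes:
        raise ValueError("need at least one pilot completion time")
    return sum(1 for m in minutes if m <= limit) / len(minutes)


def limit_covering(minutes: list[float], coverage: float = 0.8) -> float:
    """Smallest observed time by which at least `coverage` of pilot takers finished."""
    if not minutes or not 0 < coverage <= 1:
        raise ValueError("need pilot times and a coverage in (0, 1]")
    ordered = sorted(minutes)
    index = math.ceil(coverage * len(ordered)) - 1
    return ordered[index]


pilot_minutes = [50, 55, 58, 60, 62, 65, 66, 68, 70, 75]  # hypothetical pilot data
print(completion_rate(started=100, completed=60))  # → 0.6, i.e. 40 percent abandoned
print(share_within_limit(pilot_minutes, limit=45))  # → 0.0
print(limit_covering(pilot_minutes, coverage=0.8))  # → 68
```

The sketch only measures the gap between task and limit; closing it is a design choice, either raising the limit or, as in the scenario, cutting the prompt scope and re-piloting.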

Quick read, then how hiring teams use it

This is for recruiters, TA leaders, and HR partners who need shared vocabulary when briefing vendors, reviewing contracts, and presenting to legal or procurement. Skim the first section for a fast shared picture. Use the second when you are selecting, integrating, or auditing a platform on a live deployment.

Plain-language summary

- What it means for you: A pre-employment skills assessment asks a candidate to do a task that looks like real work, so you can see how they perform before you make a hiring decision.

- How you would use it: Pick one task a new hire would need to do well in the first 30 days, write clear instructions, give every candidate identical time and context, and decide what a passing standard looks like before you score anyone.

- How to get started: Write down the three hardest things a poor hire in this role gets wrong in the first 90 days. If one of those is a skill you can observe in a task, that is your assessment candidate.

- When it is a good time: After the job analysis is written, after a compliance partner has confirmed the adverse impact monitoring plan, and after the platform has been tested to confirm that completion data is stored with candidate consent.

When you are running live reqs and tools

- What it means for you: The platform sends an invite when a candidate reaches the assessment stage in your ATS, collects responses under timed conditions, scores them against a stored rubric, and returns a structured score field. If the vendor changes the rubric or scoring logic between cohorts, scores from different cohorts can no longer be compared unless the platform logged the rubric version for each run.

- When it is a good time: After your ATS stage trigger has been tested end-to-end, after the GDPR deletion path has been verified in a sandbox, and after a pilot cohort of at least 40 completions has run with retroactive scoring against 90-day performance data.

- How to use it: Set one documented pass standard per role family before candidates see the task. Run the four-fifths adverse impact check after every cohort of 40 or more results. Keep the score field separate from the stage-advance field in your ATS so the two decisions are independently auditable.

- How to get started: Pilot on a role where you have at least 20 current employees whose performance ratings you can access. Score the assessment retroactively against those ratings. A weak correlation means the task does not predict performance in this role and should not be used for decisions.

- What to watch for: Completion rates below 80 percent (the task is too long, the time limit does not fit it, or the instructions are unclear); mobile completion below 70 percent (platform is not mobile-ready); vendors who report overall pass rates but not group-level pass rates; AI scoring modules with no documented rubric version log; and tasks that measure tool familiarity rather than the underlying skill.
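Two of the points above are data-model decisions: log the rubric version on every run, and store the score separately from the stage-advance decision. A minimal Python sketch of that record layout, assuming the platform exposes a rubric version per run; every class, field, and function name here is hypothetical, not any vendor's or ATS's API.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class AssessmentRun:
    """One candidate attempt, logged at the run level (hypothetical schema)."""
    candidate_id: str
    role_family: str
    rubric_version: str  # rubric and scoring logic in force for THIS run
    score: float


@dataclass(frozen=True)
class StageDecision:
    """Stage-advance decision, stored apart from the score so each is auditable."""
    candidate_id: str
    advanced: bool
    pass_standard: float  # documented standard in force when the decision was made


def scores_by_rubric(runs: list[AssessmentRun]) -> dict[tuple[str, str], list[float]]:
    """Group scores by (role family, rubric version) so cohorts are compared like for like."""
    grouped: dict[tuple[str, str], list[float]] = defaultdict(list)
    for run in runs:
        grouped[(run.role_family, run.rubric_version)].append(run.score)
    return dict(grouped)


def cohort_mean(runs: list[AssessmentRun]) -> float:
    """Mean score, refusing to mix rubric versions."""
    if not runs:
        raise ValueError("no runs to average")
    versions = {run.rubric_version for run in runs}
    if len(versions) != 1:
        raise ValueError(f"runs span rubric versions {sorted(versions)}; compare within one version")
    return sum(run.score for run in runs) / len(runs)


def decisions_off_standard(runs: list[AssessmentRun], decisions: list[StageDecision]) -> list[str]:
    """Candidates whose stage decision differs from what the pass standard implied."""
    score_by_id = {run.candidate_id: run.score for run in runs}
    return sorted(
        d.candidate_id
        for d in decisions
        if d.candidate_id in score_by_id
        and d.advanced != (score_by_id[d.candidate_id] >= d.pass_standard)
    )


runs = [
    AssessmentRun("c1", "customer_success", "v1", 70.0),
    AssessmentRun("c2", "customer_success", "v1", 80.0),
    AssessmentRun("c3", "customer_success", "v2", 90.0),
]
print(cohort_mean(runs[:2]))  # → 75.0
decisions = [StageDecision("c1", True, 75.0), StageDecision("c2", True, 75.0)]
print(decisions_off_standard(runs, decisions))  # → ['c1']
```

Keeping `advanced` independent of `score` lets an auditor see where someone advanced a candidate below the documented standard (c1 here), instead of that override disappearing into a single combined field.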

Where we talk about this

On AI with Michal live sessions, pre-employment skills assessment appears in the compliance and vendor evaluation modules of the AI in recruiting track alongside broader assessment platform selection. Participants work through job-task analysis briefs, compare instrument types against role requirements, and review group pass-rate data from real vendor reports. The sourcing automation track adds the operational layer: how to wire stage triggers to assessment invites, route scores through webhook events, and build the GDPR deletion path before go-live. Join a session at Workshops with your real role brief and vendor shortlist.

Around the web (opinions and rabbit holes)

Third-party creators move fast. Treat these as starting points, not endorsements, and verify before wiring any platform to a candidate-facing process.

YouTube

Search with Filters → Upload date to surface recent IO psychology and employment-law content alongside vendor marketing.

- Pre-employment skills assessment validity evidence (criterion validity, work sample design, and what platform claims actually mean)

- Work sample test adverse impact EEOC (four-fifths rule applied to practical skills tasks and documentation requirements)

- AI scoring rubric pre-employment assessment (automated rubric scoring walkthroughs and IO psychology review considerations)

- Job task analysis skills assessment design (how to map role duties to assessment content before buying a platform)

Reddit

- r/IOPsychology has practitioner debate on which skills assessment types hold up under scrutiny versus which are vendor marketing, with research citations.

- r/recruiting has frank threads on candidate drop-off from long assessments, mobile completion problems, and which platforms survive production ATS traffic.

- r/humanresources captures HRBP and legal partner perspectives on GDPR documentation requirements and group pass-rate reporting obligations.

Quora

- Quora search: pre-employment skills assessment has practitioner answers on task design and platform comparisons; quality varies, so verify specific claims before acting.

Skills assessment versus cognitive or personality test

| Factor | Skills assessment | Cognitive test | Personality questionnaire |
|---|---|---|---|
| Measures | Specific job-relevant output | General reasoning capacity | Stable trait patterns |
| Validity basis | Content validity (task-job match) | Criterion validity (historical data) | Criterion validity (research base) |
| Group differences | Varies by task type | Often higher disparities | Generally lower disparities |
| Custom content required | Yes, per role family | No (standardized items) | No |
| AI grading maturity | Early stage (rubric-scored tasks) | Not applicable | Not applicable |

Related on this site

- Glossary: Pre-employment assessment software, Pre-employment assessment tools, Pre-employment assessment test, Employment skills assessment

- Glossary: Adverse impact, AI bias audit, Explainable AI hiring, Scorecard

- Glossary: Hiring assessment tools, Candidate assessment tools, Human-in-the-loop (HITL)

- Blog: AI sourcing tools for recruiters

- Live cohort: Workshops

- Membership: Become a member

- Course: Starting with AI: the foundations in recruiting