Pre-employment assessment software

A category of HR technology products that automate the delivery, scoring, and compliance reporting of candidate assessments before a hiring decision, integrating with applicant tracking systems to route invitations, return structured score fields, and generate adverse impact statistics for legal review.

Michal Juhas · Last reviewed May 15, 2026

What is pre-employment assessment software?

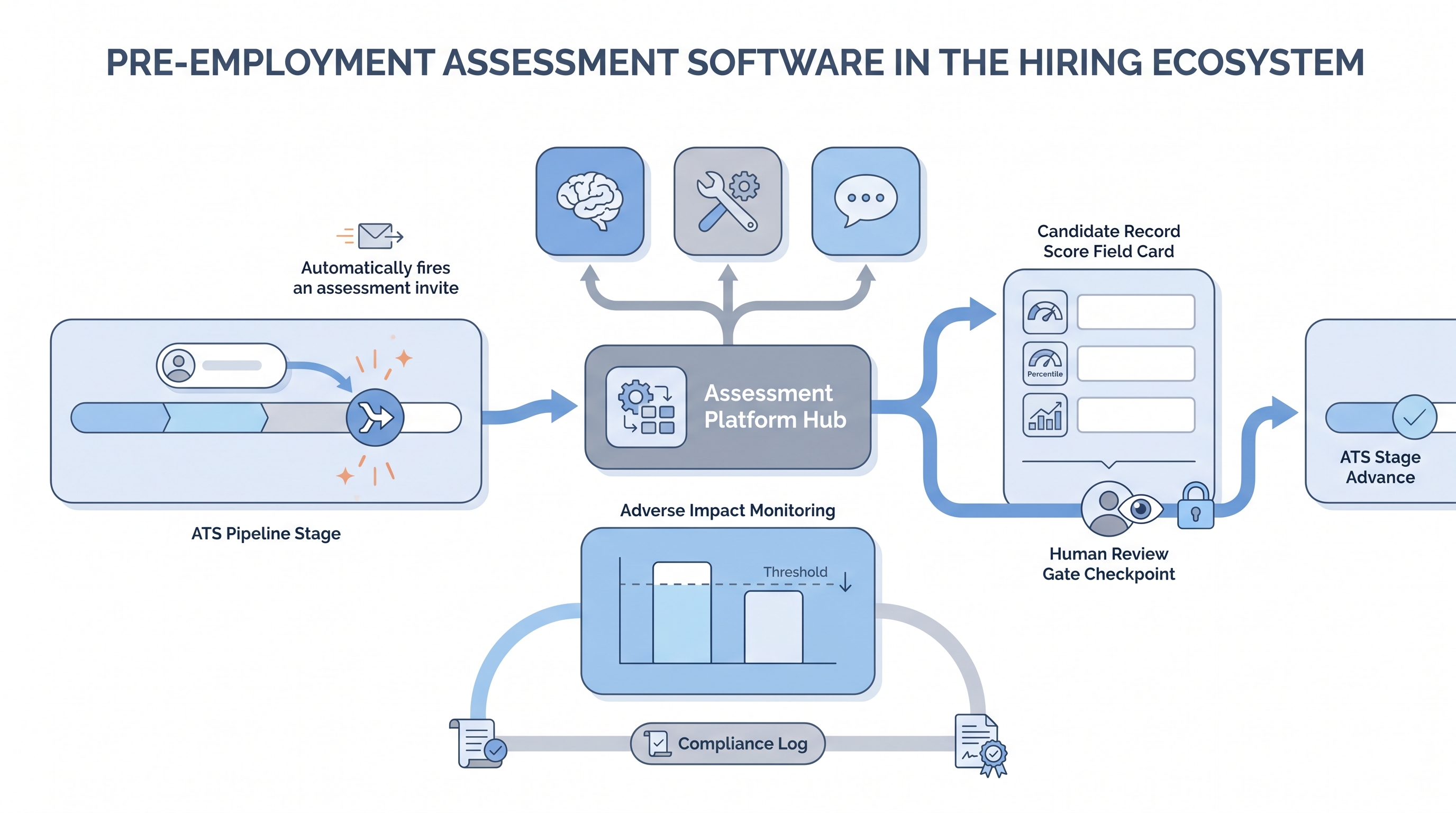

Pre-employment assessment software is the platform layer that sits between your applicant tracking system and the evaluation instruments themselves. It automates invite delivery, tracks candidate responses, scores results against stored rubrics, surfaces scores in recruiter dashboards, and feeds structured score fields back to the ATS candidate record. The key word is software: you are buying operational infrastructure that determines what audit trail you have, how GDPR deletion propagates, and whether a legal team can answer a compliance question in one screenshot rather than six spreadsheets.

A useful mental model is three layers. The instrument layer is the actual test content: cognitive items, situational scenarios, work samples, or behavioral questions. The platform layer is the software that delivers, scores, and reports. The compliance layer is the adverse impact dashboard, model version log, and GDPR documentation the platform either ships natively or leaves entirely to you. When evaluating vendors, all three layers need separate answers, because a weak compliance layer is not solved by a strong instrument catalogue.
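To make the compliance layer's model version log concrete, here is a minimal sketch of what a run-level score record might carry. The `ScoreRun` class, its field names, and the `comparable` helper are hypothetical illustrations, not any vendor's schema.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class ScoreRun:
    """One scored assessment attempt (hypothetical schema for illustration)."""
    candidate_id: str
    instrument: str        # instrument layer: which test was delivered
    score: float           # platform layer: the structured score field
    model_version: str     # compliance layer: scoring logic used for this run
    rubric_version: str    # compliance layer: rubric used for this run
    scored_at: datetime


def comparable(a: ScoreRun, b: ScoreRun) -> bool:
    """Two runs are comparable only if instrument, model, and rubric all match."""
    return (a.instrument, a.model_version, a.rubric_version) == (
        b.instrument, b.model_version, b.rubric_version)


# Made-up runs: the scoring model changed between the first and the third.
run_a = ScoreRun("c1", "cognitive-screen", 71.0, "m-1.0", "r-3",
                 datetime(2026, 1, 15, tzinfo=timezone.utc))
run_b = ScoreRun("c2", "cognitive-screen", 64.0, "m-1.0", "r-3",
                 datetime(2026, 2, 2, tzinfo=timezone.utc))
run_c = ScoreRun("c3", "cognitive-screen", 80.0, "m-1.1", "r-3",
                 datetime(2026, 4, 20, tzinfo=timezone.utc))

print(comparable(run_a, run_b))  # same versions
print(comparable(run_a, run_c))  # model version differs
```

Storing the versions on every run, rather than once per account, is what lets you show which candidates were scored under the same logic.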

In practice

- A TA ops lead reviewing a new assessment vendor notices the API documentation describes only an email link embed rather than a bidirectional webhook. When the team maps the GDPR deletion path, there is no automated cascade: assessment data persists after a candidate is removed from the ATS. The vendor is removed from the shortlist before the pilot begins.

- A recruiter working on a high-volume customer support role finds that Q2 scores from the new cognitive screen cannot be compared to Q1 results. The vendor updated the scoring algorithm without notice between the two cohorts, and the platform did not log model versions at the run level. The team has no evidence of consistent scoring across candidates.

- An HRBP preparing for an audit of the company's screening process asks the assessment software vendor for group pass-rate data by gender and age for the most recent cohort. The vendor produces the report in two business days, the adverse impact calculation passes the four-fifths check, and the HRBP has a defensible record before the review meeting.
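The four-fifths check in the last scenario compares each group's pass rate with the highest group's pass rate and flags any ratio below 0.8. A minimal sketch, assuming the platform can export one `(group, passed)` pair per candidate; the function names and the cohort data are made up for illustration.

```python
from collections import Counter


def selection_rates(outcomes):
    """Map each group to its pass rate, given (group, passed) pairs."""
    totals, passes = Counter(), Counter()
    for group, passed in outcomes:
        totals[group] += 1
        if passed:
            passes[group] += 1
    return {g: passes[g] / totals[g] for g in totals}


def four_fifths_check(outcomes, threshold=0.8):
    """Return {group: (impact_ratio, ok)} relative to the highest-rate group."""
    rates = selection_rates(outcomes)
    top = max(rates.values())
    if top == 0:
        raise ValueError("no group has any passes; impact ratio is undefined")
    return {g: (r / top, r / top >= threshold) for g, r in rates.items()}


# Made-up cohort: group A passes 60 of 100, group B passes 36 of 80.
cohort = (
    [("A", True)] * 60 + [("A", False)] * 40
    + [("B", True)] * 36 + [("B", False)] * 44
)
result = four_fifths_check(cohort)
for group, (ratio, ok) in sorted(result.items()):
    print(group, round(ratio, 2), "ok" if ok else "below four-fifths")
```

In this made-up cohort group B's rate (0.45) is three quarters of group A's (0.60), so the check flags it for review. A flag is a prompt to investigate, not a legal conclusion.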

Quick read, then how hiring teams use it

This is for recruiters, TA leaders, and HR partners who need shared vocabulary when briefing vendors, reviewing contracts, and presenting to legal or procurement. Skim the first section for a fast shared picture. Use the second when you are selecting, integrating, or auditing a platform on a live deployment.

Plain-language summary

- What it means for you: Pre-employment assessment software automates the step between a candidate applying and a recruiter seeing a score. The software fires the invite, collects responses, scores results, and returns a number to your pipeline without manual data entry.

- How you would use it: Pick one platform that covers the instrument type your role requires, confirm it has adverse impact reporting and a real ATS API, agree in writing on what score field lands in the candidate record, and pilot on a closed req before rolling out.

- How to get started: List the competency you most need to measure, ask three vendors for a technical manual and an independent validity study for that competency, and pilot only with a vendor that sends both documents before your next call.

- When it is a good time: After your team has documented the role requirements, after a compliance partner has confirmed data routing and lawful basis, and after IT has verified the ATS integration handles GDPR deletion properly.
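One way IT might verify the GDPR deletion path in a sandbox is to delete a test candidate from the ATS and then look for assessment records that still reference them. A minimal sketch, assuming both systems can export candidate IDs; the function name and the record shape are hypothetical.

```python
def orphaned_assessment_records(ats_candidate_ids, assessment_records):
    """Return assessment records whose candidate no longer exists in the ATS."""
    live = set(ats_candidate_ids)
    return [r for r in assessment_records if r["candidate_id"] not in live]


# Made-up sandbox state after deleting candidate "c2" from the ATS.
ats_ids = ["c1", "c3"]
records = [
    {"candidate_id": "c1", "score": 71},
    {"candidate_id": "c2", "score": 64},  # should have been deleted with c2
    {"candidate_id": "c3", "score": 80},
]

orphans = orphaned_assessment_records(ats_ids, records)
print([r["candidate_id"] for r in orphans])
```

An empty result after a deletion suggests the cascade worked for that test case; any remaining record is an orphan to raise with the vendor.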

When you are running live reqs and tools

- What it means for you: The platform fires an invite when a candidate hits a trigger stage in your ATS, collects responses, scores against a stored rubric, and returns a structured score field. When the vendor updates scoring logic between cohorts, historical data breaks unless the platform logged model versions at the run level.

- When it is a good time: After your sourcing pass-through rate is stable enough to isolate an assessment bottleneck from a sourcing problem, and after your ATS integration has been tested with real GDPR deletion paths in a sandbox.

- How to use it: Set one cut score per role family, document the business rationale in writing with a named owner and date, run a four-fifths adverse impact check on each cohort before acting on results, and keep the score field separate from the stage decision field so you can show independence in an audit.

- How to get started: Pilot on a closed req with 40 or more past hires in the same role family. Score retroactively and check whether the platform result correlates with your own 90-day performance ratings. A weak correlation suggests the instrument is not measuring what the vendor claims for this role, although a sample of around 40 gives only a rough estimate.

- What to watch for: Vendors reporting overall completion rates but not group-level pass rates; platforms that store scores without storing the model version or rubric version used; integrations that leave orphaned assessment records when candidates are deleted from the ATS; AI video or speech features whose documentation references general AI performance rather than an independent IO psychology validation study; and mobile completion rates below 70 percent, which signal candidate drop-off before you have usable data.
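The pilot step above (scoring past hires and comparing the result with 90-day performance ratings) can be sketched as a plain Pearson correlation. This is an illustration under assumptions: the function name and the data are made up, Pearson is one reasonable choice of statistic rather than one the source prescribes, and a real pilot would use the 40 or more hires suggested above.

```python
from math import sqrt


def pearson_r(xs, ys):
    """Pearson correlation between two equal-length numeric sequences."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two sequences of equal length, at least 2 items")
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined when one variable is constant")
    return cov / sqrt(var_x * var_y)


# Made-up pilot data: assessment score and 90-day rating per past hire.
assessment_scores = [55, 62, 70, 48, 81, 66]
ratings_90_day = [3, 3, 4, 2, 5, 4]

r = pearson_r(assessment_scores, ratings_90_day)
print(f"pilot correlation: {r:.2f}")
```

Record the model version and rubric version alongside the result, so the pilot figure can be tied to the scoring logic that produced it.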

Where we talk about this

On AI with Michal live sessions, pre-employment assessment software appears in the compliance and vendor evaluation modules of the AI in recruiting track. Participants work through a structured vendor scorecard, practice reading technical manuals, and compare platform shortlists from real active searches. The sourcing automation track adds the operational layer: how to wire stage triggers to assessment invites and route scores back through webhook events without manual data entry. Join a session at Workshops and bring your real vendor shortlist and ATS name so the conversation is grounded.

Around the web (opinions and rabbit holes)

Third-party creators move fast. Treat these as starting points, not endorsements, and verify before wiring any platform to a candidate-facing process.

YouTube

Search the phrases below, then use Filters → Upload date to surface recent IO psychology and employment-law content alongside vendor marketing.

- Pre-employment assessment software IO psychology (criterion validity, norming, and what platform claims actually mean)

- ATS assessment integration webhook tutorial (practical bidirectional integration walkthroughs across common ATS and assessment vendor pairings)

- Adverse impact pre-employment testing EEOC (four-fifths rule, cut score decisions, and documentation requirements)

- AI assessment software bias audit hiring (what regulators are asking platform vendors to produce in 2025 and 2026)

Reddit

- r/IOPsychology surfaces active practitioner debate on which platform validity claims hold up versus which are vendor marketing, with study citations.

- r/recruiting has frank threads on candidate drop-off rates, test completion on mobile, and which platform integrations survive production ATS traffic.

- r/humanresources captures HRBP and legal partner perspectives on GDPR documentation requirements and vendor DPA terms.

Quora

- A Quora search for "pre-employment assessment software" returns practitioner answers on platform comparisons; quality varies, so verify any specific claim before acting.

Point solution versus suite platform

| Factor | Point solution | Suite platform |
|---|---|---|
| Instrument depth | Deep in one category | Broad across categories |
| ATS integration | May require custom build | Usually pre-built connectors |
| Adverse impact dashboard | Often manual export | Usually built-in per cohort |
| Compliance documentation | Variable | Usually more complete |
| Vendor consolidation risk | Low | Higher if suite is acquired |

Related on this site

- Glossary: Pre-employment assessment tools, Pre-employment assessment test, Pre-employment testing software, Hiring assessment tools

- Glossary: Adverse impact, AI bias audit, Human-in-the-loop (HITL), ATS API integration

- Glossary: Explainable AI hiring, Scorecard, Sourcing pass-through rate

- Blog: AI sourcing tools for recruiters

- Live cohort: Workshops

- Membership: Become a member

- Course: Starting with AI: the foundations in recruiting