Predictive hiring

The use of structured data, validated assessments, and statistical models to estimate which candidates are most likely to succeed in a specific role before making a hiring decision.

Michal Juhas · Last reviewed May 15, 2026

What is predictive hiring?

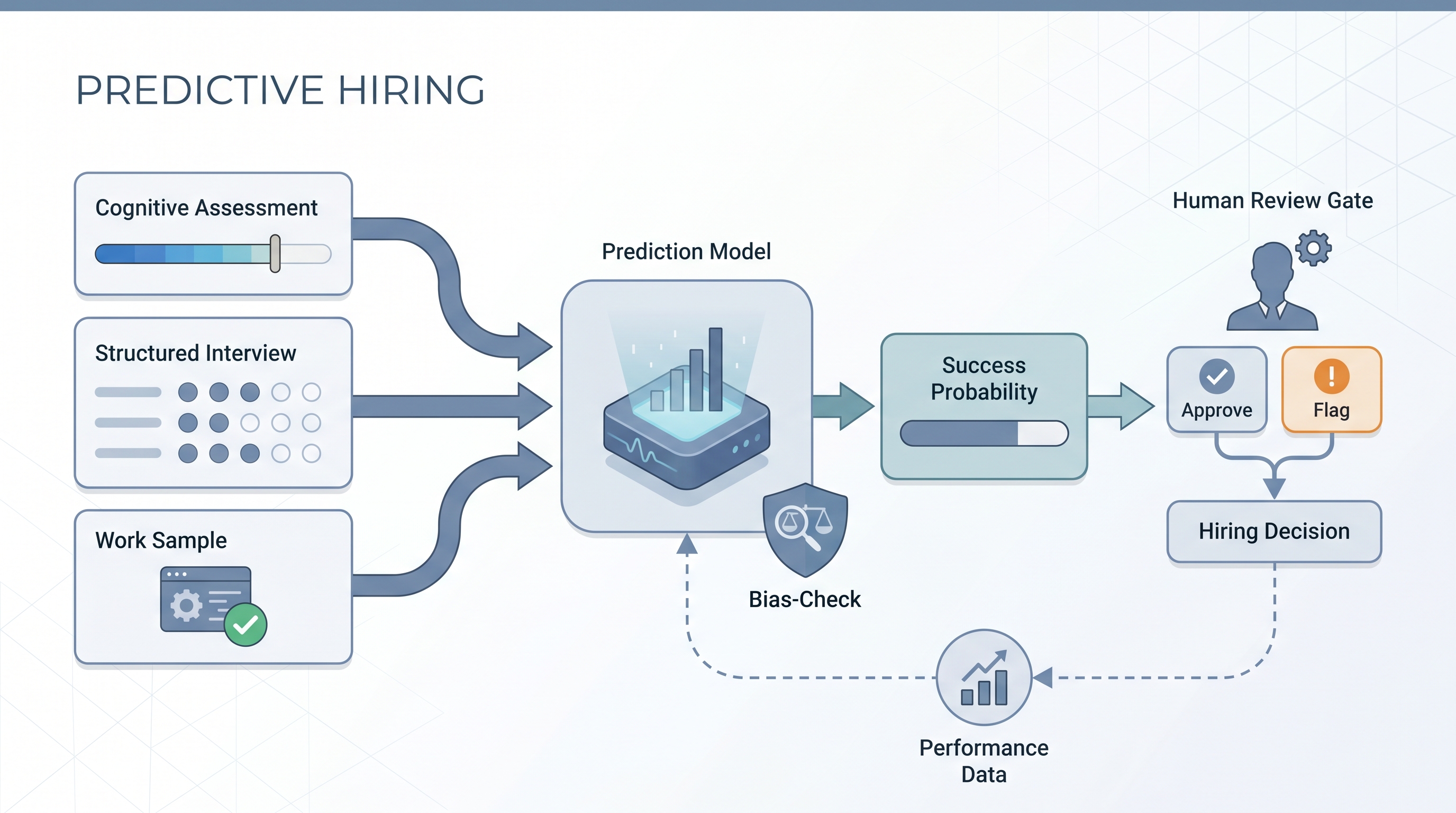

Predictive hiring uses structured data and validated instruments to estimate which candidates are most likely to succeed in a role before the hiring decision is made. The core idea is that performance on validated pre-hire assessments (cognitive tests, structured interviews, job-relevant work samples) correlates with later on-the-job performance, and that correlation can be measured, calibrated, and used to improve decisions.

The term is often used interchangeably with "data-driven hiring" and "AI hiring," but there is a meaningful distinction: predictive hiring requires linking pre-hire signals to post-hire outcomes. Without that feedback loop, you have assessment data but no validated model. With it, you can identify which instruments actually predict the outcomes you care about -- performance ratings, retention, promotion rate -- and which instruments are just adding process complexity.
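The feedback loop described above usually starts with one number: the correlation between a pre-hire score and a post-hire outcome, often called a validity coefficient. The sketch below computes a plain Pearson correlation in standard-library Python, assuming you already have each hire's assessment score and outcome (for example, a 12-month manager rating) paired in the same order. The function name and the sample data are hypothetical, for illustration only.

```python
from math import sqrt


def validity_coefficient(scores: list[float], outcomes: list[float]) -> float:
    """Pearson correlation between pre-hire scores and a post-hire outcome.

    Each index must refer to the same hire in both lists.
    """
    if len(scores) != len(outcomes):
        raise ValueError("scores and outcomes must be paired one-to-one")
    if len(scores) < 3:
        raise ValueError("need at least 3 paired observations")
    n = len(scores)
    mean_s = sum(scores) / n
    mean_o = sum(outcomes) / n
    # Covariance and variances, left unnormalized because the n terms cancel.
    cov = sum((s - mean_s) * (o - mean_o) for s, o in zip(scores, outcomes))
    var_s = sum((s - mean_s) ** 2 for s in scores)
    var_o = sum((o - mean_o) ** 2 for o in outcomes)
    if var_s == 0 or var_o == 0:
        raise ValueError("scores and outcomes must both vary")
    return cov / sqrt(var_s * var_o)


if __name__ == "__main__":
    # Hypothetical illustrative data: assessment scores and 12-month manager ratings.
    scores = [62, 71, 55, 80, 68, 90, 59, 74]
    ratings = [3.1, 3.4, 2.9, 3.8, 3.0, 4.1, 3.2, 3.5]
    print(f"validity coefficient r = {validity_coefficient(scores, ratings):.2f}")
```

Outcomes exist only for people you actually hired, so a correlation from a small hired cohort is noisy; treat it as a first signal to revisit as the sample grows, not a final verdict on the instrument.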

In practice

- A TA team that standardized on a 20-minute cognitive assessment and a structured competency screen saw 90-day attrition in one role type drop significantly over 12 months, not because of a vendor model but because consistent structured data replaced ad-hoc gut checks.

- "Predictive hiring" is how most enterprise HR technology vendors describe their matching algorithms. Ask any vendor: what is the validation sample size, what outcome variable was used (offer acceptance, 90-day retention, or manager ratings), and has the model been tested for adverse impact on protected groups?

- The biggest failure mode is not inaccurate predictions but unvalidated inputs. Teams often implement an assessment, never connect it to outcomes, and spend years collecting data that cannot prove or disprove whether the tool is working.

Quick read, then how hiring teams use it

This is for recruiters, TA leaders, and HR business partners evaluating whether to invest in predictive hiring tools or build structured measurement from scratch. Skim the first section for shared vocabulary in vendor evaluations. Use the second when deciding whether to buy or build and how to operate assessments responsibly across live reqs.

Plain-language summary

- What it means for you: Predictive hiring means using validated pre-hire data to improve the probability of a good hire, not eliminate the judgment call. The human decision stays; the data makes it better-informed.

- How you would use it: Deploy a validated assessment for a specific req type, track 90-day retention and early performance for all hires from that cohort, and after 12 months compare outcomes against pre-hire scores to see whether the instrument is actually predictive in your context.

- How to get started: Standardize your interview process with a shared scorecard first. You cannot validate a predictive model without consistent structured ratings to correlate against outcomes. Add assessments only after the scoring process is stable.

- When it is a good time: When you have enough hire volume to build a meaningful validation sample (typically 50 or more hires per role type within 18 months) and a clean link between ATS candidate records and HRIS performance or retention data.
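The 12-month comparison in the list above depends on the clean ATS-to-HRIS link it mentions. Below is a minimal sketch of that join, assuming both systems can export rows sharing a candidate ID; the record shapes, field names, and score cutoff are hypothetical, and the 50-hire floor is the rule of thumb from the text rather than a statistical standard.

```python
from dataclasses import dataclass

MIN_LINKED_HIRES = 50  # the text's rule of thumb: 50+ hires per role type


@dataclass
class AtsRecord:
    """Hypothetical ATS export row: one per assessed candidate."""
    candidate_id: str
    role_type: str
    assessment_score: float


@dataclass
class HrisRecord:
    """Hypothetical HRIS export row: one per hire, keyed by the same ID."""
    candidate_id: str
    retained_90_days: bool


def _rate(flags: list[bool]) -> float:
    # Share of True values; NaN when a band is empty.
    return sum(flags) / len(flags) if flags else float("nan")


def retention_by_score_band(ats: list[AtsRecord], hris: list[HrisRecord],
                            role_type: str, cutoff: float) -> dict[str, float]:
    """Compare 90-day retention for hires at or above vs below a score cutoff."""
    outcome_by_id = {h.candidate_id: h.retained_90_days for h in hris}
    # Inner join: only assessed candidates who were hired have an HRIS outcome.
    linked = [(a.assessment_score, outcome_by_id[a.candidate_id])
              for a in ats
              if a.role_type == role_type and a.candidate_id in outcome_by_id]
    if len(linked) < MIN_LINKED_HIRES:
        raise ValueError(f"only {len(linked)} linked hires; need {MIN_LINKED_HIRES}")
    high = [kept for score, kept in linked if score >= cutoff]
    low = [kept for score, kept in linked if score < cutoff]
    return {
        "linked_hires": len(linked),
        "high_band_retention": _rate(high),
        "low_band_retention": _rate(low),
    }
```

Splitting at a single cutoff is cruder than a correlation, but it is easy to explain to hiring managers: if high scorers and low scorers are retained at about the same rate, the score is not telling you much for that role type.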

When you are running live reqs and tools

- What it means for you: Predictive scores appear in your ATS or assessment platform as a ranked probability or a pass/flag/review tier. These outputs should inform recruiter decisions, not replace them. GDPR Article 22 gives candidates the right not to be subject to decisions based solely on automated processing that significantly affect them, so put a human-in-the-loop review gate in place before any score can lead to a rejection.

- When it is a good time: When the model has been validated on a sample with role types and candidate demographics similar to your current pipeline, and when you have a named owner responsible for monitoring adverse impact in each hiring cycle.

- How to use it: Run the four-fifths adverse impact check on any predictive score before and after each hiring cycle. If a protected group's pass rate falls below 80% of the pass rate of the highest-passing group, pause and investigate before using the score in consequential decisions.

- How to get started: Start with pre-hire assessment tools that include published validity evidence and adverse impact data. Avoid vendor models that cannot tell you which features drive a score -- see explainable AI in hiring for why this matters in practice.

- What to watch for: Model drift when your role mix or candidate market shifts significantly from the training period; bias amplification from historically skewed hiring decisions; and performance management data that is biased by the same managers who made the original hiring decision.
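The four-fifths check in the list above is simple arithmetic: divide each group's pass rate by the highest group's pass rate and flag any ratio below 0.8. Here is a minimal Python sketch, assuming you can export applicant and pass counts per group for one score in one cycle; the function names, group labels, and counts are hypothetical. The 80% threshold is the rule of thumb from the US Uniform Guidelines on Employee Selection Procedures, and with small groups it can flag or miss differences by chance, so a flag is the trigger to pause and investigate rather than a legal conclusion.

```python
def impact_ratios(passed: dict[str, int], applicants: dict[str, int]) -> dict[str, float]:
    """Each group's pass rate divided by the highest group's pass rate."""
    rates = {g: passed.get(g, 0) / n for g, n in applicants.items() if n > 0}
    if not rates:
        raise ValueError("no groups with applicants")
    top = max(rates.values())
    if top == 0:
        raise ValueError("no group has any passes; ratios are undefined")
    return {g: rate / top for g, rate in rates.items()}


def four_fifths_flags(passed: dict[str, int], applicants: dict[str, int],
                      threshold: float = 0.8) -> list[str]:
    """Groups whose impact ratio falls below the four-fifths (80%) threshold."""
    return sorted(g for g, ratio in impact_ratios(passed, applicants).items()
                  if ratio < threshold)


if __name__ == "__main__":
    # Hypothetical cycle: applicants and passes by group for one predictive score.
    applicants = {"group_a": 80, "group_b": 60}
    passed = {"group_a": 48, "group_b": 24}
    print(impact_ratios(passed, applicants))
    print(four_fifths_flags(passed, applicants))  # prints ['group_b']
```

In this example group_a passes at 60% and group_b at 40%, an impact ratio of about 0.67, so group_b is flagged for review.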

Where we talk about this

In AI with Michal live sessions, the AI in recruiting track covers building the data foundation for predictive hiring: ATS audit, structured interview standardization, and connecting pre-hire assessment data to post-hire HRIS outcomes. The sourcing automation track covers how clean candidate data earlier in the funnel makes downstream prediction more reliable. Bring your current assessment vendor shortlist and the two or three outcomes you care most about predicting. Start at Workshops.

Around the web (opinions and rabbit holes)

Third-party creators move fast and tooling changes frequently. Treat these as starting points, not endorsements. Do not copy scripts that move candidate data to external platforms without reviewing your DPA first.

YouTube

- Search "predictive hiring validity evidence" filtered to the last 12 months. Prioritize IO psychologists and HR academics over vendor demos -- the academic content covers what actually predicts job performance and what does not, which is the most useful starting point.

- Search "structured interview vs unstructured interview predictive validity" for the core evidence base that underpins most predictive hiring claims.

- Search "adverse impact employment testing" to understand the legal and ethical constraints before committing to any assessment tool.

Reddit

- r/IOPsychology has practitioner and academic perspectives on which assessments have well-established validity evidence versus those with weak or proprietary-only validation.

- r/humanresources includes TA professionals discussing what actually changed after implementing predictive hiring tools -- the skeptical threads are as useful as the success stories.

- r/recruiting has threads on specific vendor experiences with predictive scoring tools, including accuracy claims that did not hold up after implementation.

Quora

- The thread "Does predictive analytics work in hiring?" collects practitioner and researcher perspectives across company sizes and maturity levels.

Predictive hiring vs unstructured hiring

| Dimension | Unstructured process | Predictive approach |
|---|---|---|
| Interview format | Ad hoc, varies by interviewer | Structured with shared scorecard |
| Assessment | None or unvalidated | Validated, job-relevant instruments |
| Decision input | Impression and fit | Score plus structured rating |
| Bias risk | High and unchecked | Present, but measurable and auditable |
| Data output | None for future learning | Outcome data for calibration |

Related on this site

- Glossary: Predictive analytics in recruitment, Pre-hire assessment tools, Pre-employment assessment software, Explainable AI in hiring, Adverse impact, AI bias audit, Human-in-the-loop (HITL), HR analytics in recruitment, Scorecard

- Blog: AI sourcing tools for recruiters

- Live cohort: Workshops

- Membership: Become a member

- Course: Starting with AI: foundations in recruiting