Pre-hire assessment tools

Software platforms that deliver, score, and report pre-hire assessments at scale: invite automation, candidate-facing test delivery, ATS score sync, and adverse impact dashboards that let TA teams move from one-off email attachments to a repeatable, auditable screening layer.

Michal Juhas · Last reviewed May 15, 2026

What are pre-hire assessment tools?

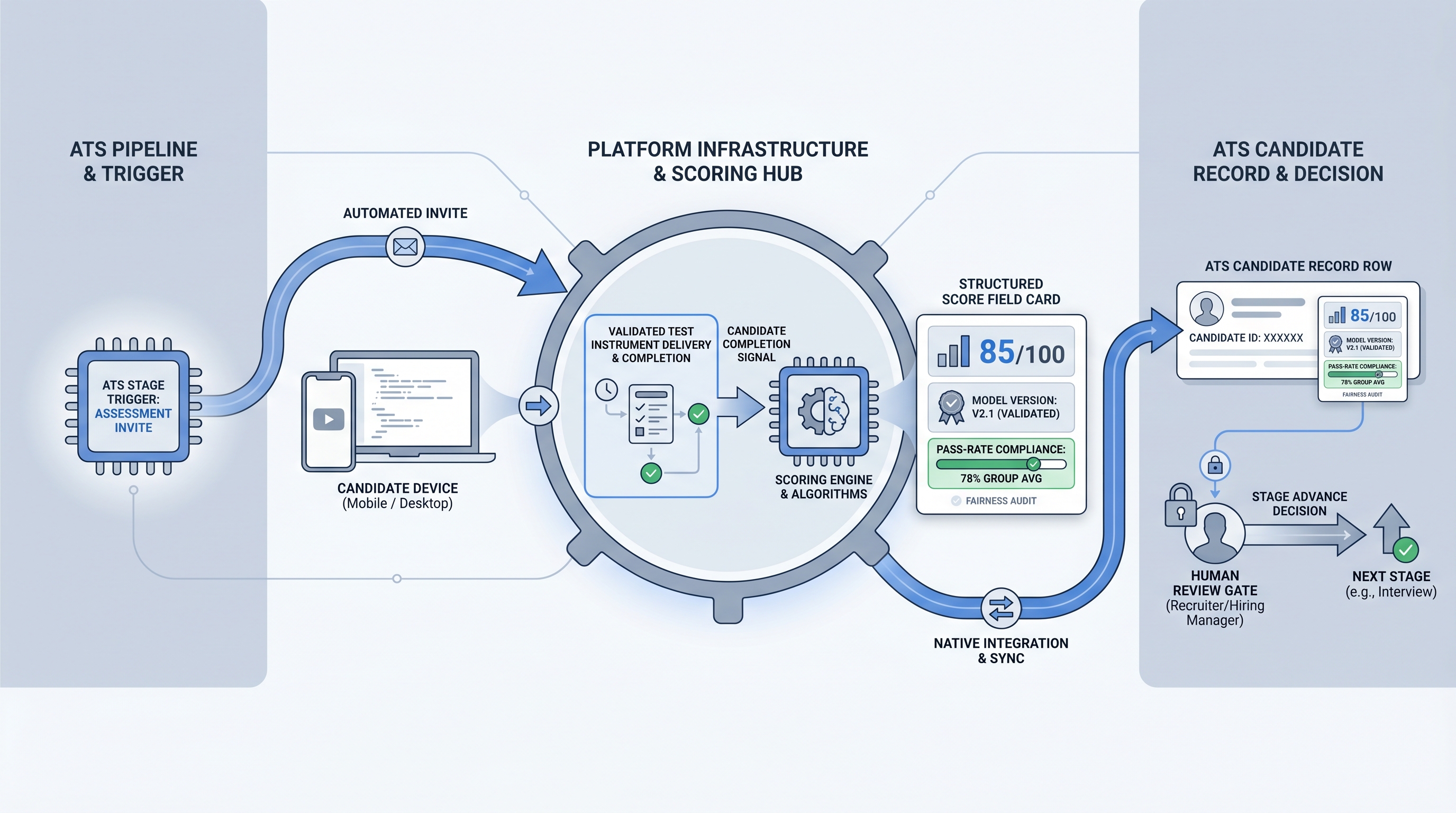

Pre-hire assessment tools are the software platforms that handle everything around a candidate evaluation except the question content itself. The assessment instrument is the test: a cognitive screen, a work sample, a situational judgment task, a coding exercise. The tool is the platform that delivers the invite, hosts the candidate experience, scores responses, stores the result, and returns a structured data point to the ATS candidate record.

At low volume, teams manage without a dedicated platform. A recruiter emails a link, the candidate completes a test on a vendor website, and the recruiter logs a pass or fail manually in the ATS. That works at 10 hires a year. At 100, it creates a bottleneck. At 500, the manual data entry alone becomes a compliance risk because score dates, scoring model versions, and audit trails are absent.

What separates a functional pre-hire assessment tool from a liability is not the feature count. It is whether the platform can show, for every historical score: which version of the scoring model ran at that moment, what group pass-rate data looks like for the role family, and where the data lives and how it is deleted on candidate request.
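One way to picture those three requirements is as fields stored alongside every score. The sketch below is a hypothetical record shape, not any vendor's schema: `ScoreRecord`, `audit_gaps`, and every field name are assumptions made for illustration.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import List, Optional


@dataclass(frozen=True)
class ScoreRecord:
    """Hypothetical shape of one stored assessment result (illustrative only)."""
    candidate_id: str
    role_family: str                       # grouping key for pass-rate reporting
    score: float
    scored_at: Optional[datetime]          # when the scoring ran
    scoring_model_version: Optional[str]   # which model version produced the score
    storage_region: Optional[str]          # where the data lives
    deletion_supported: bool               # whether a candidate deletion path exists


def audit_gaps(record: ScoreRecord) -> List[str]:
    """Return the audit-relevant items that are missing on a record."""
    gaps = []
    if record.scored_at is None:
        gaps.append("scored_at")
    if not record.scoring_model_version:
        gaps.append("scoring_model_version")
    if not record.storage_region:
        gaps.append("storage_region")
    if not record.deletion_supported:
        gaps.append("deletion_path")
    return gaps
```

A score copied by hand from an email attachment would typically fail every check here, which is the gap the manual workflow leaves behind.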

In practice

- A TA ops lead evaluating three pre-hire assessment platforms for a 100-hire-a-year software engineering track builds a demo script focused on ATS field-level score sync rather than UI aesthetics. Two platforms offer only PDF score emails; neither can populate a structured field in the ATS without manual entry. The third offers a native integration with conditional stage-advance rules. The decision takes one call instead of three weeks.

- A recruiter whose team deployed a pre-hire assessment tool with no ATS integration spends 20 minutes per candidate copying scores from email attachments into a spreadsheet. Six months in, a compliance review finds no versioned record of which scoring model ran on which candidate. The team migrates to a platform with a native integration and the time cost drops to zero for standard completions.

- An HRBP running a post-deployment review discovers the assessment vendor updated its AI scoring model three months after go-live, with no communication. Historical pilot scores and current hiring cycle scores are no longer on the same scale. The vendor cannot produce a version log showing which model scored which candidate. The team halts automated stage-advance rules until the vendor backfills the scoring metadata.
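The third scenario suggests a simple guard: compare scores, or let automation act on them, only when every result in the cohort carries the same known scoring model version. A minimal sketch, assuming results arrive as `(candidate_id, scoring_model_version)` pairs; the function names and input shape are hypothetical, not a vendor API.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Optional, Tuple

Result = Tuple[str, Optional[str]]  # (candidate_id, scoring_model_version)


def group_by_model_version(results: Iterable[Result]) -> Dict[str, List[str]]:
    """Group candidate IDs by the model version that scored them."""
    groups: Dict[str, List[str]] = defaultdict(list)
    for candidate_id, version in results:
        # A missing version is tracked explicitly rather than silently dropped.
        groups[version or "unknown"].append(candidate_id)
    return dict(groups)


def scores_share_one_scale(results: Iterable[Result]) -> bool:
    """True only if all results carry one and the same known model version.

    Returns False for an empty cohort, since there is nothing to confirm.
    """
    groups = group_by_model_version(results)
    return len(groups) == 1 and "unknown" not in groups
```

A team could run a check like this before re-enabling stage-advance rules, and treat any `"unknown"` group as the list of candidates whose metadata still needs to be recovered.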

Quick read, then how hiring teams use it

This is for recruiters, TA ops leads, and HR partners who need the same vocabulary in vendor evaluations, intake calls, and compliance reviews. Skim the first section for a fast shared picture. Use the second when you are selecting a platform for a live hiring program.

Plain-language summary

- What it means for you: Pre-hire assessment tools are the software infrastructure between a validated test and the ATS record. The tool handles invite delivery, candidate experience, scoring, storage, and result sync. You are buying an operational layer, not validation evidence.

- How you would use it: Pick the platform after you have chosen the assessment instrument. Confirm ATS integration returns a structured score field, not a PDF. Check that adverse impact reporting runs per cohort without exporting to a spreadsheet.

- How to get started: Build a demo script with three questions: which exact ATS fields receive score data, how the vendor handles scoring model updates, and what the deletion path looks like when a candidate requests data removal. Answers to these three reveal more than a feature comparison matrix.

- When it is a good time: After the role brief is settled, after the assessment instrument is validated for the role family, and after your data protection lead has reviewed the vendor data processing agreement.

When you are running live reqs and tools

- What it means for you: A pre-hire assessment tool in a live hiring program fires invites from ATS stage triggers, collects completions, scores responses, and syncs results back to specific candidate record fields. Every step between the trigger and the score field is a place where integration drift, scoring model updates, or deletion failures can create audit exposure.

- When it is a good time: After your sourcing pass-through rate is stable enough to separate a screening bottleneck from a sourcing problem, and after IT has reviewed the data routing between your ATS and the vendor.

- How to use it: Set one cut score threshold per role family, document the rationale and the scoring model version in writing on the same date, and run a four-fifths adverse impact check on each cohort before acting on results. Keep the assessment score in a separate field from the recruiter stage decision so the two inputs remain independently auditable.

- How to get started: Pilot on a closed req with 30 or more past hires. Score them retroactively using the platform. Check whether the assessment result correlates with 90-day manager performance ratings. If the correlation is weak, you are buying operational convenience, not selection signal.

- What to watch for: Vendors who refresh their AI scoring engine without versioning historical results. Platforms where the only adverse impact report is a vendor-calculated aggregate, not per-role-family data you can run yourself. Human-in-the-loop gates that exist in demos but disappear in production configurations.
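The pilot step in the list above comes down to a correlation between two columns: the retroactive assessment score and the 90-day manager rating for the same hire. A minimal sketch using a hand-rolled Pearson coefficient; the function name and the sample numbers are illustrative assumptions, and what counts as "weak" is a judgment call the team has to make for itself.

```python
from math import sqrt
from typing import Sequence


def pearson_r(xs: Sequence[float], ys: Sequence[float]) -> float:
    """Pearson correlation between two equally long sequences of numbers."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two sequences of equal length with at least 2 values")
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined when one column has no variation")
    return cov / sqrt(var_x * var_y)


# Illustrative numbers only, not real pilot data. A real pilot would use
# 30 or more past hires, as described above.
assessment_scores = [62, 71, 55, 80, 90, 68]
manager_ratings_90d = [3, 4, 2, 4, 5, 3]
print(f"Pearson r = {pearson_r(assessment_scores, manager_ratings_90d):.2f}")
```

The inputs must be paired per hire and in the same order, which is one more reason to keep scores in a structured field instead of an email attachment.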

Where we talk about this

On AI with Michal live sessions we cover pre-hire assessment tools in the legal and compliance modules of the AI in recruiting track. Participants work through vendor evaluation exercises, practice reading validity reports and data processing agreements, and discuss where a platform layer adds operational value versus where it adds cost without improving selection signal. The sourcing automation track adds the integration side: wiring ATS stage triggers to assessment invites and routing scored results back without manual data entry. Join a session at Workshops for peer discussion with real vendor names and live ATS configurations, then continue through membership office hours for questions that surface after go-live.

Around the web (opinions and rabbit holes)

Third-party creators move fast. Treat these as starting points, not endorsements, and verify before you wire any assessment tool into a candidate-facing process.

YouTube

Search with Filters → Upload date to surface recent IO psychology and employment law content alongside vendor product demos.

- Pre-hire assessment platform comparison ATS integration (practitioner breakdowns of how different platforms sync scores to ATS fields and what deep integration actually means)

- Pre-employment testing software adverse impact reporting (what to look for in a platform compliance dashboard and how to run your own four-fifths rule check)

- AI scoring pre-hire assessment bias GDPR (legal and IO psychology perspectives on AI-scored assessments under GDPR and US algorithmic hiring disclosure laws)

- Hiring assessment tool demo Greenhouse integration (vendor demos that reveal integration architecture rather than UI polish)

Reddit

- r/IOPsychology covers practitioner debate on which assessment platform claims hold up under validity scrutiny, with citations to research on specific vendor approaches.

- r/recruiting surfaces real recruiter discussions on candidate drop-off from long tests, mobile completion rates, and which platform deployments cost teams strong prospects.

- r/humanresources captures HRBP and legal partner perspectives on GDPR documentation requirements and adverse impact reporting obligations when using third-party assessment software.

Quora

- Quora search for "pre-hire assessment tools": practitioner answers on platform selection, vendor evaluation criteria, and integration experiences. Verify specific claims before acting on them.

Pre-hire assessment tools versus two common alternatives

| Approach | ATS integration | Adverse impact reporting | Compliance audit trail |
|---|---|---|---|
| Purpose-built pre-hire assessment tool | Structured field sync via API or webhook | Per-cohort, per-role family | Scoring model version logged per result |
| Manual link plus spreadsheet | None | Manual calculation required | None by default |
| ATS-native assessment module | Native to the ATS | Varies by ATS vendor | Depends on ATS version |
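The adverse impact column refers to checks such as the four-fifths rule mentioned earlier: a group whose pass rate is below 80% of the highest group's pass rate is flagged for review. A minimal sketch of the calculation a team could run itself on per-cohort counts; the function names, input shape, and group labels are assumptions for illustration, and a flag is a prompt for review, not a legal conclusion.

```python
from typing import Dict, Tuple

Counts = Dict[str, Tuple[int, int]]  # group -> (passed, assessed)


def selection_rates(counts: Counts) -> Dict[str, float]:
    """Pass rate per group, skipping groups with nobody assessed."""
    return {
        group: passed / assessed
        for group, (passed, assessed) in counts.items()
        if assessed > 0
    }


def four_fifths_flags(counts: Counts, threshold: float = 0.8) -> Dict[str, float]:
    """Return groups whose impact ratio falls below the threshold.

    The impact ratio is a group's pass rate divided by the highest
    group's pass rate in the same cohort.
    """
    rates = selection_rates(counts)
    if not rates:
        return {}
    top_rate = max(rates.values())
    if top_rate == 0:
        return {}  # nobody passed, so no ratio can be computed
    return {
        group: rate / top_rate
        for group, rate in rates.items()
        if rate / top_rate < threshold
    }


# Illustrative cohort only: group_a passes 48 of 80, group_b passes 30 of 75.
cohort = {"group_a": (48, 80), "group_b": (30, 75)}
flags = four_fifths_flags(cohort)
```

Here `group_b` has a pass rate of 0.40 against 0.60 for `group_a`, an impact ratio of about 0.67, so it is flagged. Running this per role family and per cohort is what the first table row describes as built in and the second row leaves to a spreadsheet.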

Related on this site

- Glossary: Pre-hire assessment test, Pre-employment assessment tools, Pre-employment assessment software

- Glossary: Hiring assessment tools, Candidate assessment tools, Online assessment tools for recruitment

- Glossary: Adverse impact, AI bias audit, Human-in-the-loop (HITL), Explainable AI hiring

- Glossary: Workflow automation, Recruiting webhooks, Sourcing pass-through rate

- Glossary: Pre-employment assessment test, Pre-employment skills assessment

- Blog: AI sourcing tools for recruiters

- Live cohort: Workshops

- Membership: Become a member

- Course: Starting with AI: the foundations in recruiting