AI-native

For TA and recruiting teams: an operating style where models, skills, and automation are assumed in the design of work, with clear handoffs and QA, not one-off chats when you remember to open ChatGPT.

Michal Juhas · Last reviewed May 2, 2026

What is AI-native?

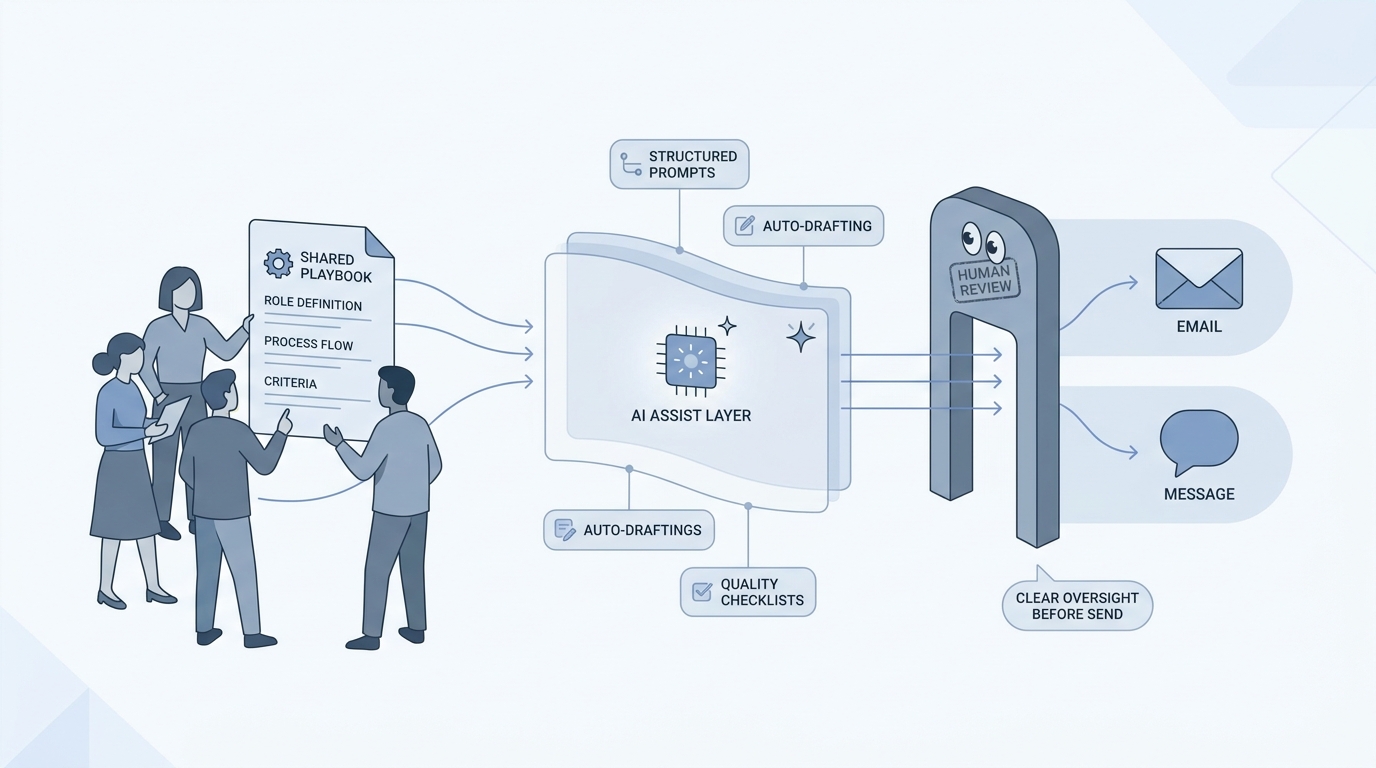

AI-native means you plan hiring work so AI tools, saved prompts, and human checks are a normal part of the process, not a panic move at the last minute. Teams share the same short playbooks so tone and quality stay steady from one req to the next.

In practice

- When TA keeps one short page with tone, must-not phrases, and how you describe the company, and everyone links it before they use ChatGPT or Claude, that is AI-native hiring in plain clothes. You hear it on webinars or from a lead who is tired of five different voices in outreach.

- When sourcers use the same saved project or custom GPT for every similar role instead of retyping the whole brief each time, the work stops living in one person's private chat history. Agency partners sometimes call this "governed everyday use of AI" in slide decks.

- When every candidate email still gets a quick human read before send, even if AI wrote the first draft, that habit is what makes the setup trustworthy instead of only "we bought licenses."

Quick read, then how hiring teams use it

This is for recruiters, sourcers, TA, and HR partners who need the same vocabulary in debriefs, vendor calls, and policy reviews. Skim the first section when you need a fast shared picture. Use the second when you are deciding how it shows up in the ATS, sourcing tools, or candidate communications.

Plain-language summary

- What it means for you: AI-native means you plan hiring work the way you plan email: shared rules, the same tone, and a person who checks important sends. The computer helps on purpose, not only when someone panics at 5 p.m.

- How you would use it: You keep one short page everyone uses before they draft outreach or intake notes, and you still read candidate-facing text before it goes out.

- How to get started: Pick one repeat artifact (for example outbound for one role family). Write who types what, where the draft lives, and who approves send.

- When it is a good time: When more than two people touch the same reqs each week and answers already sound like five different companies.

When you are running live reqs and tools

- What it means for you: AI-native is an operating style: system instructions, skills or Gems, Markdown for AI knowledge bases, structured output, and explicit QA before external sends.

- When it is a good time: When hero prompts in private chats are becoming your "real" process documentation, or when compliance asks where the approved tone lives.

- How to use it: Version instructions, debrief what broke when a req closes, and separate "demo speed" from production safety. Pair with the blog post "What is AI-native work?"

- How to get started: Read the "What is AI-native work?" blog post, mirror one workshop-style chain (inputs, model pass, reviewer, destination), then expand.

- What to watch for: AI theater (slides without owners), skipped verification on employers and dates, and automation before prompts are stable.
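For teams with someone comfortable in Python, the workshop-style chain named above (inputs, model pass, reviewer, destination) can be sketched roughly as follows. This is a minimal illustration, not a reference implementation: `PLAYBOOK`, `Draft`, `model_pass`, `precheck`, `review`, and `send` are hypothetical names, the playbook contents are placeholders, and `generate` stands in for whichever model call your stack actually uses.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional

# Hypothetical shared playbook. In practice this is the one short,
# versioned page everyone links before drafting.
PLAYBOOK = {
    "version": "v1",
    "tone": "warm, direct, no hype",
    "must_not": ["rockstar", "ninja"],
}


@dataclass
class Draft:
    req_id: str
    text: str
    playbook_version: str  # records which instructions produced the draft
    flags: List[str] = field(default_factory=list)
    approved_by: Optional[str] = None


def model_pass(req_id: str, brief: str, generate: Callable[[str], str]) -> Draft:
    """Inputs -> model pass. `generate` is a stand-in for a real model call."""
    prompt = f"Tone: {PLAYBOOK['tone']}\nBrief: {brief}"
    return Draft(req_id=req_id, text=generate(prompt),
                 playbook_version=PLAYBOOK["version"])


def precheck(draft: Draft) -> Draft:
    """Flag must-not phrases so the reviewer sees them. Does not replace the human read."""
    lowered = draft.text.lower()
    draft.flags = [p for p in PLAYBOOK["must_not"] if p in lowered]
    return draft


def review(draft: Draft, reviewer: str, approve: bool) -> Draft:
    """Reviewer step: approval is recorded only for drafts with no open flags."""
    if approve and not draft.flags:
        draft.approved_by = reviewer
    return draft


def send(draft: Draft, outbox: List[Draft]) -> None:
    """Destination: refuse anything a person has not approved."""
    if draft.approved_by is None:
        raise PermissionError("Draft needs human approval before send")
    outbox.append(draft)


# Demo with a fake model so the sketch runs without any API key.
demo = precheck(model_pass("REQ-1", "Backend engineer",
                           lambda prompt: "Hi Sam, we need a backend rockstar."))
print(demo.flags)  # ['rockstar']
```

The design choice worth copying is the last step: `send` fails unless a named person approved the draft, which keeps "demo speed" out of the production path. Storing `playbook_version` on each draft gives a small audit trail for the debrief when a req closes.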

Where we talk about this

Live AI in recruiting sessions keep returning to the gap between teams that connect skills, knowledge bases, and APIs and teams that chase new models without fixing inputs. Sourcing automation days name the same tension on the systems side. Bring your stack to Workshops if you want vocabulary plus pressure-testing.

Around the web (opinions and rabbit holes)

Third-party creators move fast. Treat these as starting points, not endorsements, and double-check anything before you wire candidate data.

YouTube

- What is generative AI? (Google Cloud Tech) is a calm enterprise intro you can share with non-technical partners.

- The future of work with AI (Microsoft) is polished vendor narrative, useful for the words leaders repeat in QBRs.

- How to Learn AI as a Beginner (NetworkChuck) is informal but maps "where do I even start" energy many TA folks feel.

Reddit

- How is AI changing recruiting? in r/recruiting is a long practitioner thread on real workflows.

- Recruiters who use AI daily, what does your workflow look like? in r/recruiting swaps concrete habits.

- How are you using AI as a recruiter? in r/Recruitment overlaps agency and in-house voices.

Quora

- What does it mean for a company to be AI-native? mixes startup and enterprise answers (quality varies).

Boolean versus systemized work

| Mode | What you do | Risk |
|---|---|---|
| Ad hoc chat | Re-type context each time | Inconsistent output, no audit trail |
| Systemized (Gems, GPTs, skills) | Pre-load tone, format, must-haves | Needs owners to update when brand or policy changes |
| Automated flows | Tools like Make or n8n move rows and drafts | Needs monitoring, GDPR, and API hygiene |

For the sourcing angle on when to stay literal versus semantic, read Boolean search vs AI sourcing.

Related on this site

- Glossary: AI adoption ladder, System instructions, Markdown for AI

- Blog: How to write better AI prompts

- Tools: Claude for recruiters

- Guides: Talent acquisition managers

- Live learning: Workshops

- Membership for ongoing Q&A sessions and materials: Become a member