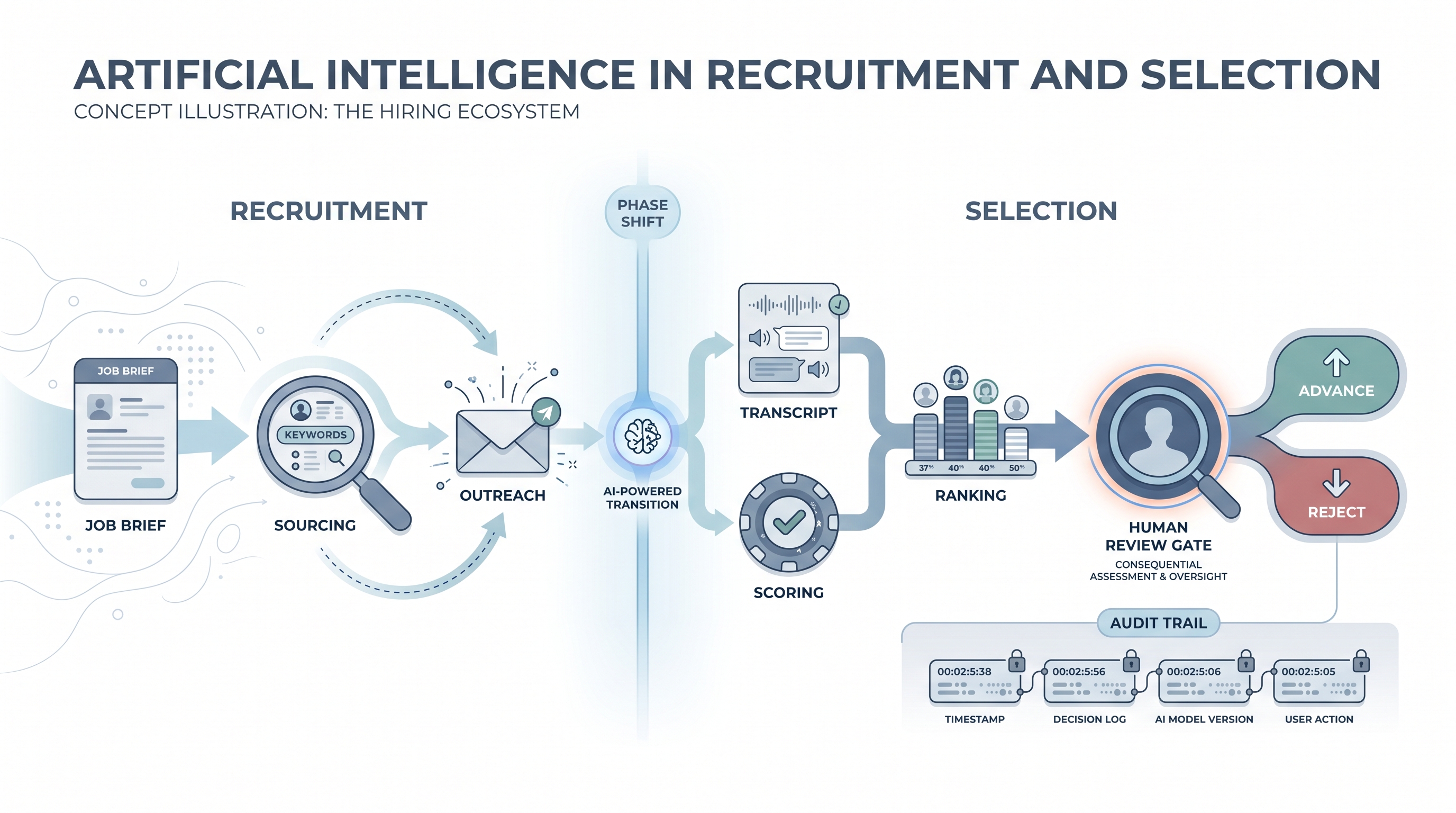

Artificial intelligence in recruitment and selection

The application of AI tools across both hiring phases: using language models and automation to source candidates and write job briefs (recruitment), and using scoring engines, transcript analysis, and decision support to compare and advance or reject applicants (selection).

Michal Juhas · Last reviewed May 10, 2026

What is artificial intelligence in recruitment and selection?

Artificial intelligence in recruitment and selection applies AI tools across two connected hiring phases. The recruitment phase uses language models and automation to source candidates, write job briefs, and fill a pipeline. The selection phase uses scoring engines, transcript analysis, and decision-support tools to compare applicants, score interviews, and inform advance or reject decisions.

The distinction matters because the two phases carry different risk profiles. Recruitment AI speeds up high-volume tasks at the top of the funnel where mistakes are recoverable. Selection AI assists consequential decisions where a rejected candidate can ask why and, in many jurisdictions, has the legal right to a human-reviewed explanation. Human-in-the-loop gates, audit logs, and AI bias audit checks are not optional extras when AI touches selection.

In practice

- A TA team at a 500-person scale-up uses an AI tool to summarise interview transcripts into scorecard notes after each call. The recruiter reads the summary alongside the raw transcript, corrects any gaps, and approves the entry before it becomes the official ATS record. That is AI in selection used responsibly.

- A vendor demo claims the platform "automates selection." In the debrief it turns out the tool ranks CVs and flags the top 20 for recruiter review. That is AI-assisted screening with a human advance gate, not fully automated selection, and the distinction matters for GDPR compliance conversations with legal.

- An HRBP is asked by a rejected candidate why they were not shortlisted. "The model scored you lower" is not an adequate answer under GDPR Article 22, which covers decisions based solely on automated processing. A compliant answer draws on the human reviewer's documented rationale and the criteria applied.

Quick read, then how hiring teams use it

This is for recruiters, sourcers, TA leads, and HRBPs who need a shared definition before buying a tool, writing a policy, or running a pilot. Skim the first section for a fast shared picture. Use the second when you are deciding which phase to start with and what review gates to wire in.

Plain-language summary

- What it means for you: Recruitment AI drafts and finds; selection AI evaluates and ranks. The second phase is where candidates get rejected, so it carries more legal and ethical weight than the first.

- How you would use it: Start with a recruitment task (sourcing notes, job description first draft) before adding any selection-phase AI (scoring, ranking). The recruitment phase is a safer place to build confidence in AI outputs before you rely on them for consequential decisions.

- How to get started: When you do move into selection, pick one high-volume task with clear success criteria: interview transcript summaries added to scorecards, or CV ranking compared to a written job criteria card. Run it alongside your manual process for two weeks before removing the manual step.

- When it is a good time: After your scorecard is written, agreed, and in use; after a vendor DPA is signed; and after at least one recruiter and one hiring manager have calibrated the AI output together on a real role.
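
To make the two-week parallel run concrete, here is a minimal Python sketch that compares the AI shortlist with the manual shortlist for the same applicant pool. The names `compare_parallel_run` and `ParallelRunResult` and the candidate IDs are hypothetical, and a plain agreement rate is only one assumed way to judge a pilot, not an established standard.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ParallelRunResult:
    agreed: int          # candidates where AI and recruiter made the same call
    total: int           # candidates seen by both the AI and the recruiter
    disagreements: list  # candidate ids to bring to a calibration session

    @property
    def agreement_rate(self) -> float:
        # Share of the pool where both processes reached the same decision.
        return self.agreed / self.total if self.total else 0.0


def compare_parallel_run(ai_shortlist: set, manual_shortlist: set,
                         all_candidates: set) -> ParallelRunResult:
    """Compare shortlist decisions made by the AI tool and by the recruiter."""
    disagreements = sorted(
        c for c in all_candidates
        if (c in ai_shortlist) != (c in manual_shortlist)
    )
    total = len(all_candidates)
    return ParallelRunResult(
        agreed=total - len(disagreements),
        total=total,
        disagreements=disagreements,
    )


if __name__ == "__main__":
    # Hypothetical pool of five applicants for one role.
    pool = {"c1", "c2", "c3", "c4", "c5"}
    result = compare_parallel_run({"c1", "c2", "c3"}, {"c1", "c2", "c4"}, pool)
    print(result.agreement_rate, result.disagreements)
```

The disagreement list is usually the more useful output: it gives the recruiter and hiring manager a concrete set of profiles to calibrate on before anyone removes the manual step.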

When you are running live reqs and tools

- What it means for you: Selection-phase AI produces output that directly affects whether a candidate advances. That means model version, input data, and reviewer name need to be logged per decision, not just per tool deployment.

- When it is a good time: After recruitment-phase AI is stable, after an AI bias audit has been run on selection tools you plan to use at scale, and after legal has reviewed the vendor's conformity documentation under the EU AI Act if you operate in the EU.

- How to use it: Keep AI outputs as a starting point, not a final answer. A transcript summary is a draft; a fit score is a hypothesis. Route every AI selection output through a named human reviewer before stage advance or reject actions are logged in the ATS. Log the review decision and date alongside the AI output.

- How to get started: Map your selection process in five steps: CV review, phone screen, interview, debrief, offer. Identify which step has the clearest criteria and the most volume. Add AI there first. Use structured output when writing scores and summaries back to ATS fields so the data is parseable later.

- What to watch for: Automated ranking models that assign a numeric score without disclosing the features used. Adverse impact patterns that stay invisible until someone samples declined profiles by demographic group. Transcript analysis that misses candidates who pause to think, speak a second language, or use different sentence structures than the training data assumed.
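
The adverse impact point above can be checked with very little code once decisions are exported with a group label. The sketch below computes each group's advance rate and its ratio to the highest-rate group. The function names and the sample data are hypothetical, and the 0.8 threshold is an assumption borrowed from the commonly cited four-fifths rule of thumb; treat a flag as a prompt for a proper audit, not as a legal finding.

```python
from collections import Counter


def selection_rates(decisions):
    """decisions: iterable of (group_label, advanced) pairs, advanced is a bool."""
    applied = Counter()
    advanced = Counter()
    for group, was_advanced in decisions:
        applied[group] += 1
        if was_advanced:
            advanced[group] += 1
    # Advance rate per group: advanced / applied.
    return {g: advanced[g] / applied[g] for g in applied}


def impact_ratios(rates):
    """Each group's rate divided by the highest group's rate."""
    top = max(rates.values(), default=0.0)
    if top == 0:
        # Nobody advanced in any group, so the ratio is undefined.
        return {g: None for g in rates}
    return {g: r / top for g, r in rates.items()}


def flag_groups(ratios, threshold=0.8):
    """Groups whose ratio falls below the assumed threshold."""
    return sorted(g for g, r in ratios.items() if r is not None and r < threshold)


if __name__ == "__main__":
    # Hypothetical export: 10 applicants per group.
    sample = (
        [("A", True)] * 5 + [("A", False)] * 5
        + [("B", True)] * 3 + [("B", False)] * 7
    )
    ratios = impact_ratios(selection_rates(sample))
    print(ratios, flag_groups(ratios))
```

Small samples make these ratios noisy, so this kind of check is more informative on aggregated decisions across several roles than on a single requisition.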

Where we talk about this

On AI with Michal live sessions, the AI in recruiting track covers both phases: recruitment tools for sourcing and outreach, and the selection-phase decisions that arrive when you move from drafting to deciding. The sourcing automation track goes deeper on workflow automation and ATS API patterns. Start at Workshops and bring your current tool stack, a sample role, and your biggest compliance question so the conversation is grounded in your real situation.

Around the web (opinions and rabbit holes)

Third-party creators move fast. Treat these as starting points, not endorsements, and double-check anything before you wire candidate data to a new tool.

YouTube

- Search "AI in recruitment and selection" on YouTube filtered to the past year for practitioner comparisons and vendor demos. Prefer videos that show what happens when the AI output is wrong, not only the happy path.

- SHRM (Society for Human Resource Management) publishes HR tech sessions and compliance-focused content covering AI in hiring, including selection-phase obligations under US and EU law.

- Search "AI bias in hiring" for academic and advocacy perspectives on what selection-phase models get wrong and how audits surface group-level gaps that individual reviews miss.

Reddit

- r/recruiting has practitioner threads on what AI in selection looks like day to day: scoring tools that worked, ones that broke, and how hiring managers react when they see AI-ranked shortlists.

- r/humanresources surfaces HR leader and HRBP perspectives on selection compliance and what governance obligations arrive the moment a scoring tool enters the process.

Quora

- Search "AI in recruitment and selection" or "automated candidate screening compliance" on Quora for practitioner and legal perspectives. Vendor-authored answers often skip the GDPR and adverse impact sections, so read critically.

Recruitment phase versus selection phase: where AI changes the picture

| Aspect | Recruitment phase | Selection phase |
|---|---|---|
| Main AI tasks | Sourcing, outreach drafting, JD writing | Interview scoring, ranking, assessment analysis |
| Consequence of error | Candidate not found or contacted | Candidate wrongly advanced or rejected |
| Legal framework risk | GDPR data processing, outreach consent | GDPR Article 22, EU AI Act high risk, bias law |
| Audit depth needed | Tool version, prompt, human send review | Model version, input data, output, reviewer name, date |
| Bias check timing | Before outreach at scale | Before deployment and quarterly after |
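
The selection-phase audit fields in the table (model version, input data, output, reviewer name, date) map naturally onto a per-decision record. Below is a minimal sketch of such a record with a built-in human gate: it refuses to produce a loggable entry without a named reviewer and a written rationale. Every name here (`SelectionAuditRecord`, `record_decision`, `to_ats_payload`, the action values) is hypothetical, and the JSON payload is an assumed shape, not any particular ATS's API.

```python
import json
from dataclasses import dataclass, asdict
from datetime import date
from typing import Optional

# Assumed set of stage actions; adjust to your own process.
ALLOWED_ACTIONS = {"advance", "reject", "hold"}


@dataclass(frozen=True)
class SelectionAuditRecord:
    candidate_id: str
    model_version: str
    input_ref: str      # pointer to the transcript or CV the model saw
    ai_output: str      # draft summary or score, kept verbatim
    reviewer_name: str
    review_date: str    # ISO 8601 date
    action: str
    rationale: str      # the reviewer's own reasoning, not the model's


def record_decision(*, candidate_id: str, model_version: str, input_ref: str,
                    ai_output: str, reviewer_name: str, action: str,
                    rationale: str,
                    review_date: Optional[date] = None) -> SelectionAuditRecord:
    """Build an audit record only if a human has reviewed the AI output."""
    if not reviewer_name.strip():
        raise ValueError("a named human reviewer is required")
    if not rationale.strip():
        raise ValueError("a documented rationale is required")
    if action not in ALLOWED_ACTIONS:
        raise ValueError(f"unknown action: {action!r}")
    return SelectionAuditRecord(
        candidate_id=candidate_id,
        model_version=model_version,
        input_ref=input_ref,
        ai_output=ai_output,
        reviewer_name=reviewer_name,
        review_date=(review_date or date.today()).isoformat(),
        action=action,
        rationale=rationale,
    )


def to_ats_payload(record: SelectionAuditRecord) -> str:
    """Serialise the record as structured output for an ATS custom field."""
    return json.dumps(asdict(record), sort_keys=True)
```

Keeping the reviewer's rationale as its own field, separate from the AI output, means a later question from a candidate can be answered from the human's documented reasoning.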

Related on this site

- Glossary: Artificial intelligence in recruitment, AI bias audit, Adverse impact, Human-in-the-loop, Scorecard, Explainable AI in hiring, AI in recruiting, Structured output, Workflow automation

- Blog: AI sourcing tools for recruiters

- Guides: Sourcers

- Live cohort: Workshops

- Course: Starting with AI: the foundations in recruiting

- Membership: Become a member