Artificial intelligence in recruitment

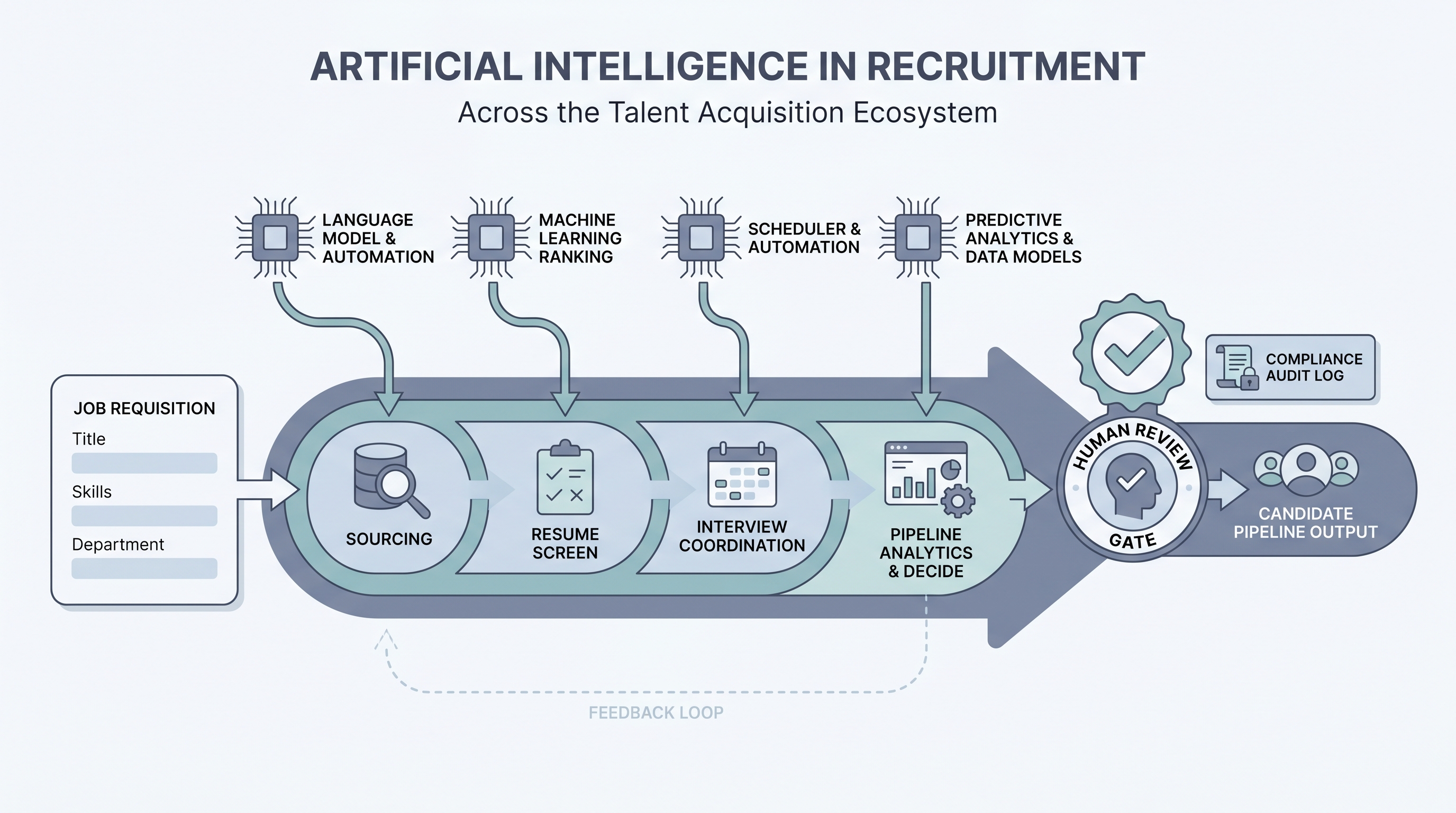

The use of machine learning, large language models, and automation across the recruitment lifecycle, from writing job descriptions and sourcing candidates through screening, interview coordination, and pipeline reporting, with human review at each step where decisions affect people.

Michal Juhas · Last reviewed May 4, 2026

What is artificial intelligence in recruitment?

Artificial intelligence in recruitment is the use of machine learning, large language models, and process automation across the hiring lifecycle: writing job descriptions, sourcing candidates, screening CVs, drafting outreach, transcribing interviews, coordinating schedules, filling scorecards, and generating pipeline reports. It covers every stage from an approved requisition through to an accepted offer.

The term is broader than a single tool category. It spans AI chat assistants a recruiter opens in a browser tab, AI features embedded in an ATS or sourcing platform, and end-to-end workflow automation where ATS events trigger drafts that pass a human review gate before reaching any candidate. What connects all of these is the decision to apply AI to hiring work rather than keeping everything in spreadsheets and manual copy-paste.

In practice

- A TA ops lead describes their pipeline as "AI-assisted" because a prompt summarises stage counts from a spreadsheet export before the Monday team call; recruiters still own the interpretation and every advance or reject decision.

- A sourcer opens a saved AI project with role context pre-loaded and generates four InMail variants for a senior engineering role in 15 minutes instead of an hour; every message still gets a human read before send, but most of the drafting time is gone.

- A TA director asks their ATS vendor which model version is live in the resume-ranking feature and when it was last changed, then logs the answer and re-runs an adverse impact check; this is what auditable artificial intelligence in recruitment looks like at scale.
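
The adverse impact check in the last example is not specified on this page. One common first screen is the four-fifths rule: compare each group's selection rate with the highest group's rate and flag any ratio below 0.8. The sketch below assumes that rule; the function names, group labels, and record shape are hypothetical, and a flag is a prompt for closer review rather than a conclusion.

```python
from collections import Counter


def selection_rates(outcomes):
    """Return the share of candidates advanced per group.

    `outcomes` is an iterable of (group_label, was_advanced) pairs.
    The record shape is an assumption for illustration only.
    """
    totals = Counter()
    advanced = Counter()
    for group, was_advanced in outcomes:
        totals[group] += 1
        if was_advanced:
            advanced[group] += 1
    return {group: advanced[group] / totals[group] for group in totals}


def impact_ratios(rates):
    """Divide each group's rate by the highest rate (four-fifths rule input)."""
    best = max(rates.values())
    if best == 0:
        # Nobody advanced in any group, so no ratio can be computed.
        return {group: None for group in rates}
    return {group: rate / best for group, rate in rates.items()}


def flagged_groups(ratios, threshold=0.8):
    """List groups whose ratio falls below the threshold, sorted by label."""
    return sorted(
        group
        for group, ratio in ratios.items()
        if ratio is not None and ratio < threshold
    )


if __name__ == "__main__":
    # Made-up numbers: 40 of 100 advanced in group A, 24 of 100 in group B.
    sample = (
        [("A", True)] * 40 + [("A", False)] * 60
        + [("B", True)] * 24 + [("B", False)] * 76
    )
    ratios = impact_ratios(selection_rates(sample))
    print(flagged_groups(ratios))
```

Re-running a check like this each time the vendor reports a model change, and storing the result next to the logged model version, keeps the audit trail in one place.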

Quick read, then how hiring teams use it

This is for recruiters, sourcers, TA leads, and HRBPs who need a shared definition before buying a tool, writing a policy, or running a pilot. Skim the first section for a fast shared picture. Use the second when you are deciding which task to start with and what review gates to put in place.

Plain-language summary

- What it means for you: AI in recruitment shifts repeatable cognitive work (drafting, summarising, scheduling, ranking) to a model while leaving judgment calls (culture read, offer negotiation, debrief facilitation) with the recruiter.

- How you would use it: Pick one high-volume step you do the same way every week: sourcing outreach, screen notes, or pipeline status emails. Run a prompt against five real roles. Measure rework time.

- How to get started: Start with an internal-facing task, not a candidate-facing one. Document the prompt, the format, and who reviews before the output goes anywhere.

- When it is a good time: After your hiring process is stable enough to describe in one page. AI amplifies what is already working; it multiplies chaos if the process is still shifting every Monday.

When you are running live reqs and tools

- What it means for you: AI tools that handle candidate data interact with your ATS and can influence who gets human attention, so vendor DPAs, bias checks, and decision logs are not optional extras.

- When it is a good time: Before a high-volume campaign or after a bottleneck appears in screening speed or outreach quality that the team cannot fix by adding headcount.

- How to use it: Connect AI outputs to your ATS only after the prompt is stable and reviewed. Log model version, prompt, and output next to each candidate interaction. Set a human gate before any candidate-facing send and before any advance-or-reject decision.

- How to get started: Run a side-by-side on closed roles: compare the AI-suggested shortlist to who you actually hired. Gaps show you what the model misses before live candidates are affected.

- What to watch for: Opaque scoring tools, vendors that retrain shared models on your candidate data, and AI outputs formatted for a different ATS than the one you run. Ask the vendor which model version is live and when it last changed.
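
The logging and human-gate steps above can be sketched in a few lines. This is a minimal illustration, not any ATS vendor's API: the `AIInteraction` record, its field names, and the `release_to_candidate` function are all hypothetical, and a real setup would write the log line to wherever your candidate records already live.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass
class AIInteraction:
    """One AI output tied to one candidate (hypothetical record shape)."""

    candidate_id: str
    model_version: str
    prompt: str
    output: str
    created_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )
    reviewed_by: Optional[str] = None
    approved: bool = False

    def approve(self, reviewer: str) -> None:
        """Record which named person reviewed and approved the output."""
        if not reviewer.strip():
            raise ValueError("a named reviewer is required")
        self.reviewed_by = reviewer
        self.approved = True


def release_to_candidate(entry: AIInteraction) -> str:
    """Human gate: refuse to release output that nobody has approved."""
    if not entry.approved:
        raise PermissionError("human review required before send")
    return entry.output


def to_log_line(entry: AIInteraction) -> str:
    """Serialise the record as one JSON line for an append-only log."""
    return json.dumps(asdict(entry), sort_keys=True)


if __name__ == "__main__":
    entry = AIInteraction(
        candidate_id="cand-001",            # placeholder identifier
        model_version="vendor-model-x",     # placeholder, ask your vendor
        prompt="Draft a first outreach message for the role brief.",
        output="Hi, I came across your profile and ...",
    )
    entry.approve("recruiter@example.com")
    print(release_to_candidate(entry))
    print(to_log_line(entry))
```

Keeping the approval on the same record as the model version and prompt means a later audit can answer who approved which output from which model without joining separate systems.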

Where we talk about this

AI with Michal live workshops cover artificial intelligence in recruitment across two tracks. The AI in recruiting blocks work through sourcing, screening, outreach, and reporting with real compliance questions and tool comparisons. The Sourcing automation sessions dig into the integration layer: how AI outputs connect to ATS events, where webhooks break, and which GDPR questions to answer before you scale. Start at Workshops and bring a real role brief and your current stack questions.

Around the web (opinions and rabbit holes)

Third-party creators move fast here. Treat these as starting points, not endorsements, and verify compliance postures and vendor details directly before wiring candidate data.

YouTube

- AI in Recruiting: What Talent Teams Need to Know covers the practical landscape for TA teams adopting AI tools across the recruitment funnel.

- Introduction to Generative AI (Google Cloud Tech) gives the language-model foundation useful before evaluating any AI recruiting vendor.

- AI Bias and Fairness Explained (IBM Technology) covers the algorithmic fairness concepts that underpin AI bias audits in recruitment contexts.

Reddit

- How are you actually using AI in your recruiting workflow right now? in r/recruiting is a candid survey of tools and use cases from practitioners in the chair.

- AI tools for recruiting: 6 months in, what worked and what did not in r/recruiting is honest about failure modes you do not see in vendor demos.

- Has AI made recruiting easier or just different? in r/Recruitment covers both the efficiency gains and the skepticism that AI adoption surfaces in teams.

Quora

- How is artificial intelligence changing the recruitment process? collects varied practitioner perspectives across sourcing, screening, and scheduling use cases.

AI in recruitment across the funnel

| Stage | Typical AI use | Human gate |
|---|---|---|
| Job description | Draft and optimize copy | Recruiter reviews before posting |
| Sourcing | Draft outreach, generate Boolean strings | Approve before send |
| Screening | Fill scorecard from CV or call notes | Recruiter reviews before advance or reject |
| Scheduling | Propose times, draft calendar invites | Confirm edge cases manually |
| Reporting | Summarise stage counts, flag bottlenecks | TA lead validates before exec presentation |

Related on this site

- Glossary: AI in recruiting, AI-native, Workflow automation, Human-in-the-loop, AI bias audit, Scorecard, Recruiter AI, AI adoption ladder

- Blog: AI sourcing tools for recruiters

- Guides: Sourcers

- Workshops: AI in recruiting

- Courses: Starting with AI: the foundations in recruiting

- Membership: Become a member