Behavioral interview

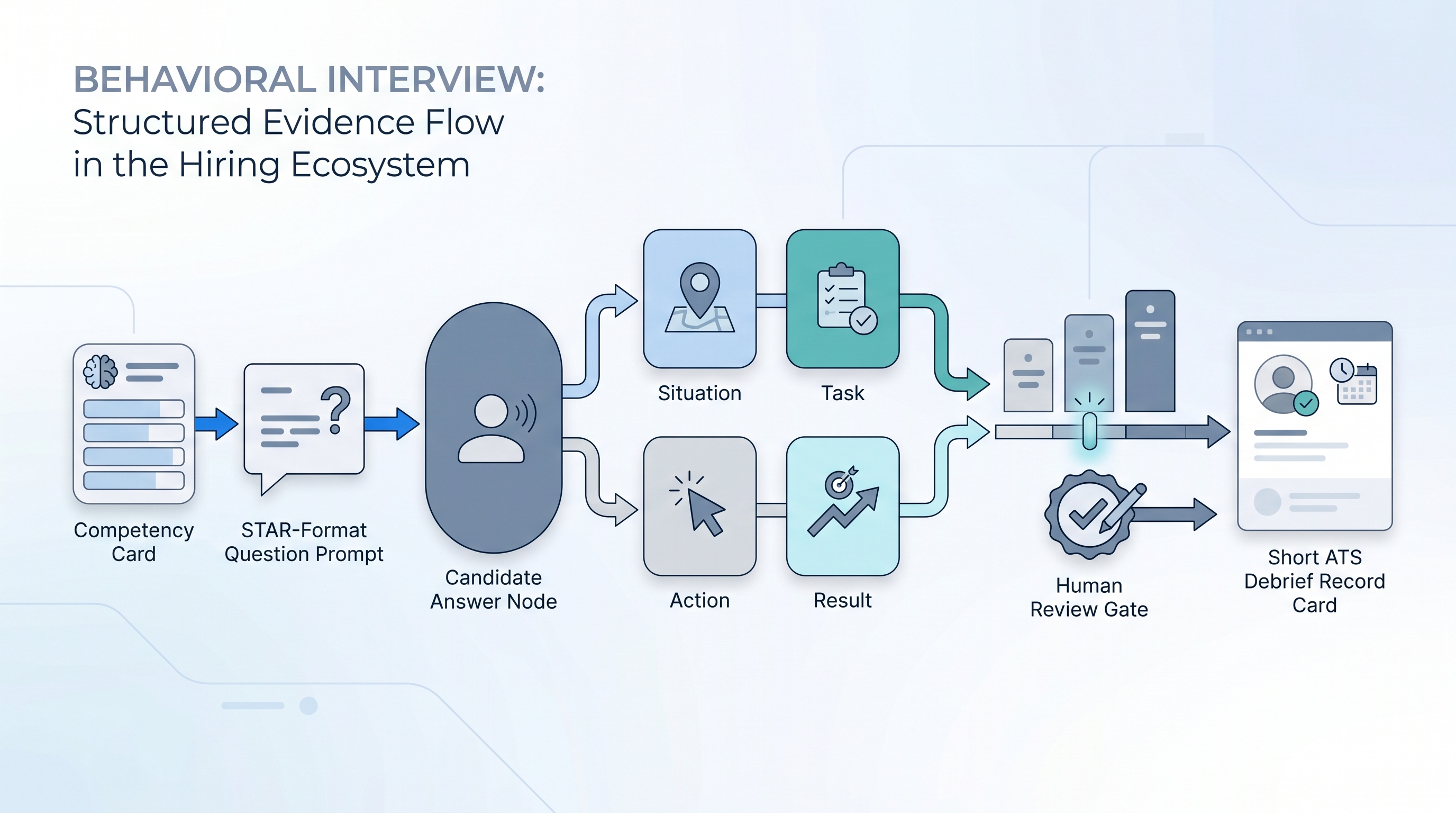

A structured interview technique that asks candidates to describe specific past situations using the STAR format (Situation, Task, Action, Result) to predict future performance. Each question targets a named competency from a shared scorecard rather than inviting hypothetical opinions.

Michal Juhas · Last reviewed May 15, 2026

What is a behavioral interview?

A behavioral interview asks candidates to describe specific past situations rather than explain what they would do hypothetically. The underlying logic is that past behavior is the strongest available predictor of future performance, particularly when the question targets a defined competency and the response is scored against anchors the panel agreed on before the first interview.

The standard structure is STAR: Situation (the context), Task (what needed to happen), Action (what the candidate personally did), and Result (the measurable or observable outcome). A well-formed behavioral question forces a specific answer that the interviewer can then probe with follow-ups such as "What was your exact role?", "What happened when you tried that?", and "How did you measure success?"

Behavioral interviewing is not just a questioning style. It works when questions are linked to a competency framework, all candidates at a stage answer the same questions, and scores are recorded before the debrief conversation begins.
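Two of these conditions (same questions for every candidate, scores recorded before the debrief) can be checked mechanically once interview records exist. The following is a minimal sketch, assuming a hypothetical `InterviewRecord` shape and a known debrief start time. The field names are illustrative and do not come from any real ATS.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class InterviewRecord:
    # All field names are hypothetical, chosen for illustration only.
    candidate: str
    interviewer: str
    questions_asked: frozenset
    score_recorded_at: datetime


def process_issues(records, guide_questions, debrief_start):
    """Return readable problems found in one candidate's interview stage."""
    issues = []
    for r in records:
        # Every interviewer at a stage should ask the agreed question set.
        if r.questions_asked != guide_questions:
            issues.append(
                f"{r.interviewer} deviated from the shared question set for {r.candidate}"
            )
        # Scores must exist before the debrief conversation begins.
        if r.score_recorded_at >= debrief_start:
            issues.append(
                f"{r.interviewer} scored {r.candidate} after the debrief began"
            )
    return issues
```

An empty list means the stage met both conditions. Anything else is a prompt for the panel lead to follow up, not an automatic verdict.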

In practice

- When a recruiter asks "Tell me about a time you had to influence a hiring manager who disagreed with your recommendation" and the candidate starts with "So last quarter, my HM wanted to close a role we had open for three months...", that is a behavioral question producing usable STAR evidence.

- A sourcing team that says "the interviews are all over the place" is usually describing an unstructured process: different interviewers ask different questions, no shared rubric exists, and the debrief becomes a contest of gut feelings rather than an evidence review.

- Interview coordinators who flag "we got a 1 and a 5 on the same candidate for the same competency" have spotted a calibration gap that a scoring rubric and one calibration session before the loop would have surfaced.
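The "1 and a 5 on the same competency" pattern in the last example is easy to flag automatically. The sketch below assumes scores on a 1 to 5 scale arrive as `(candidate, competency, interviewer, score)` tuples. The function name and the `max_spread` threshold of 2 are illustrative assumptions, not a standard.

```python
from collections import defaultdict


def calibration_gaps(scores, max_spread=2):
    """Flag (candidate, competency) pairs where panel scores diverge widely.

    `scores` is an iterable of (candidate, competency, interviewer, score)
    tuples. The max_spread default is an assumed threshold for illustration.
    """
    by_key = defaultdict(list)
    for candidate, competency, _interviewer, score in scores:
        by_key[(candidate, competency)].append(score)
    # Keep only the pairs whose highest and lowest scores are too far apart.
    return {
        key: (min(values), max(values))
        for key, values in by_key.items()
        if max(values) - min(values) > max_spread
    }


panel_scores = [
    ("Candidate A", "Influence", "Dana", 1),
    ("Candidate A", "Influence", "Lee", 5),
    ("Candidate A", "Communication", "Dana", 4),
    ("Candidate A", "Communication", "Lee", 3),
]
gaps = calibration_gaps(panel_scores)
# gaps == {("Candidate A", "Influence"): (1, 5)}
```

A flagged pair does not say which interviewer is right. It only says the panel should compare the evidence behind each score before the debrief moves on.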

Quick read, then how hiring teams use it

This is for recruiters, TA leads, and HR partners who need the same vocabulary in debrief calls, vendor evaluations, and interview training. Skim the first section for a fast shared picture. Use the second when you are designing a question set, reviewing scorecards, or rolling out a new panel.

Plain-language summary

- What it means for you: Instead of asking "Are you a good communicator?" you ask "Tell me about a time you had to communicate difficult news to a team. Walk me through what happened." The answer either has specific evidence or it does not, and you can tell which.

- How you would use it: Write one or two behavioral questions per competency on your scorecard before the loop starts. Share the question set with every interviewer, not just a role description. Score after the interview, before the debrief.

- How to get started: Pick the three competencies that most predict success in the role. Write one STAR-format question per competency. Run one calibration session using a sample transcript (past or synthetic) so every panelist knows what a 3 looks like before the first live interview.

- When it is a good time: As soon as you have a scorecard and a panel who will commit to scoring independently. If neither exists yet, create both before you open the loop.

When you are running live reqs and tools

- What it means for you: Behavioral interview questions are the input layer for structured scoring. Without them, the panel tends to fill in the scorecard after the debrief conversation rather than before it, which turns scores into post-hoc rationalization instead of independent evidence.

- When it is a good time: Every time you open a new role with a panel of two or more interviewers. Single-interviewer screens benefit from behavioral structure too, but the calibration requirement is less acute.

- How to use it: Map each competency to one or two behavioral questions in a shared interview guide. Build the guide before any interviews happen, not during the loop. Run it past HR legal if the role involves legally sensitive competencies like physical ability or health-related requirements.

- How to get started: Pull your last three scorecards and look at the notes section. If interviewers wrote general impressions instead of specific evidence with STAR components, you have a behavioral question gap. Build the question set for the next req using those competency gaps as a starting point.

- What to watch for: Interviewers who accept thin answers without a follow-up probe such as "That sounds great, and what did you personally do?", panels where only senior members do the probing, and debrief conversations that open with "I just had a good feeling about them" before anyone reviews scores. The human-in-the-loop principle applies: AI can draft questions and flag thin transcript answers, but a named reviewer owns the score before it goes into the ATS.
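The mapping of competencies to questions described above can be kept as plain data and checked before the loop opens. The sketch below is one way to do that, assuming a hypothetical guide stored as a dictionary of competency names to question lists. The phrase list used to spot hypothetical wording is a crude illustrative heuristic, not a validated classifier.

```python
# Crude, illustrative markers of hypothetical (non-behavioral) wording.
HYPOTHETICAL_MARKERS = ("would you", "what if", "imagine")


def guide_problems(guide, scorecard_competencies):
    """List problems in an interview guide before any interview happens."""
    problems = []
    for competency in scorecard_competencies:
        questions = guide.get(competency, [])
        # The guidance above calls for one or two questions per competency.
        if not 1 <= len(questions) <= 2:
            problems.append(
                f"{competency}: expected 1-2 questions, found {len(questions)}"
            )
        for question in questions:
            if any(marker in question.lower() for marker in HYPOTHETICAL_MARKERS):
                problems.append(
                    f"{competency}: question reads as hypothetical: {question!r}"
                )
    # Questions for competencies nobody scores add noise to the loop.
    for competency in guide:
        if competency not in scorecard_competencies:
            problems.append(f"{competency}: not on the scorecard")
    return problems


guide = {
    "Communication": [
        "Tell me about a time you had to communicate difficult news to a team."
    ],
    "Influence": ["How would you handle a hiring manager who disagrees?"],
    "Culture": ["Tell me about a time you changed a team habit."],
}
problems = guide_problems(guide, ["Communication", "Influence", "Prioritization"])
```

In this example the check reports three problems: the hypothetical Influence question, the missing Prioritization question, and the Culture entry that has no matching scorecard competency. A human reviewer still decides how to fix each one.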

Where we talk about this

On AI with Michal live sessions, behavioral interviewing comes up in both the AI in recruiting and sourcing automation tracks when we connect structured scoring to ATS data quality. The question design, calibration, and debrief facilitation steps are recurring themes in panel design discussions. If you want the full room conversation with other TA practitioners, start at Workshops and bring a real scorecard or question set you are working on.

Around the web (opinions and rabbit holes)

Third-party creators move fast. Treat these as starting points, not endorsements, and double-check anything before you wire candidate data to a new tool.

YouTube

- Behavioral Interviewing: The STAR Method Explained for Hiring Managers (search) surfaces interviewer-perspective walkthroughs from HR practitioners and consultants on the STAR technique.

- Structured Interviewing: Reducing Bias in Your Hiring Process (search) covers the research behind structured versus unstructured panels and calibration approaches.

- How to Build a Competency-Based Interview Guide (search) shows question-writing and panel briefing workflows that mirror what cohorts build in live sessions.

Reddit

- How do you handle behavioral interviews when candidates clearly prepped STAR scripts? in r/humanresources is a practical thread on probing techniques when a rehearsed answer does not reveal real signal.

- What questions do you ask to find out if someone is actually a team player vs. just saying they are? in r/recruiting collects practitioner-tested behavioral questions across competency areas.

- Interview calibration: does your company actually do it? in r/humanresources is a frank discussion of how calibration works (and does not) in real hiring teams.

Quora

- How do you know if a behavioral interview question actually predicts job performance? collects perspectives from I-O psychologists, HR leaders, and recruiting practitioners on validity and common pitfalls.

Behavioral versus unstructured interview

| Dimension | Behavioral (structured) | Unstructured conversation |
|---|---|---|
| Question source | Competency-linked, agreed before the loop | Interviewer improvises per candidate |
| Evidence type | Specific past situations (STAR) | General opinions, hypotheticals, gut reactions |
| Scoring | Rubric-anchored, before debrief | Post-hoc, influenced by debrief discussion |
| Bias exposure | Reduced but not eliminated | Higher: halo effect, recency, affinity |
| Calibration requirement | Required across panel | Usually skipped |
| Predictive validity | Moderate to high (research-supported) | Low to moderate |

Related on this site

- Glossary: Scorecard, Adverse impact, Human-in-the-loop (HITL), AI interview intelligence, Async assessment platform, Async screening, One-way video interview

- Blog: AI sourcing tools for recruiters

- Guides: Sourcers

- Live cohort: Workshops

- Self-paced: Starting with AI: the foundations in recruiting

- Membership: Become a member