Talent acquisition (TA)

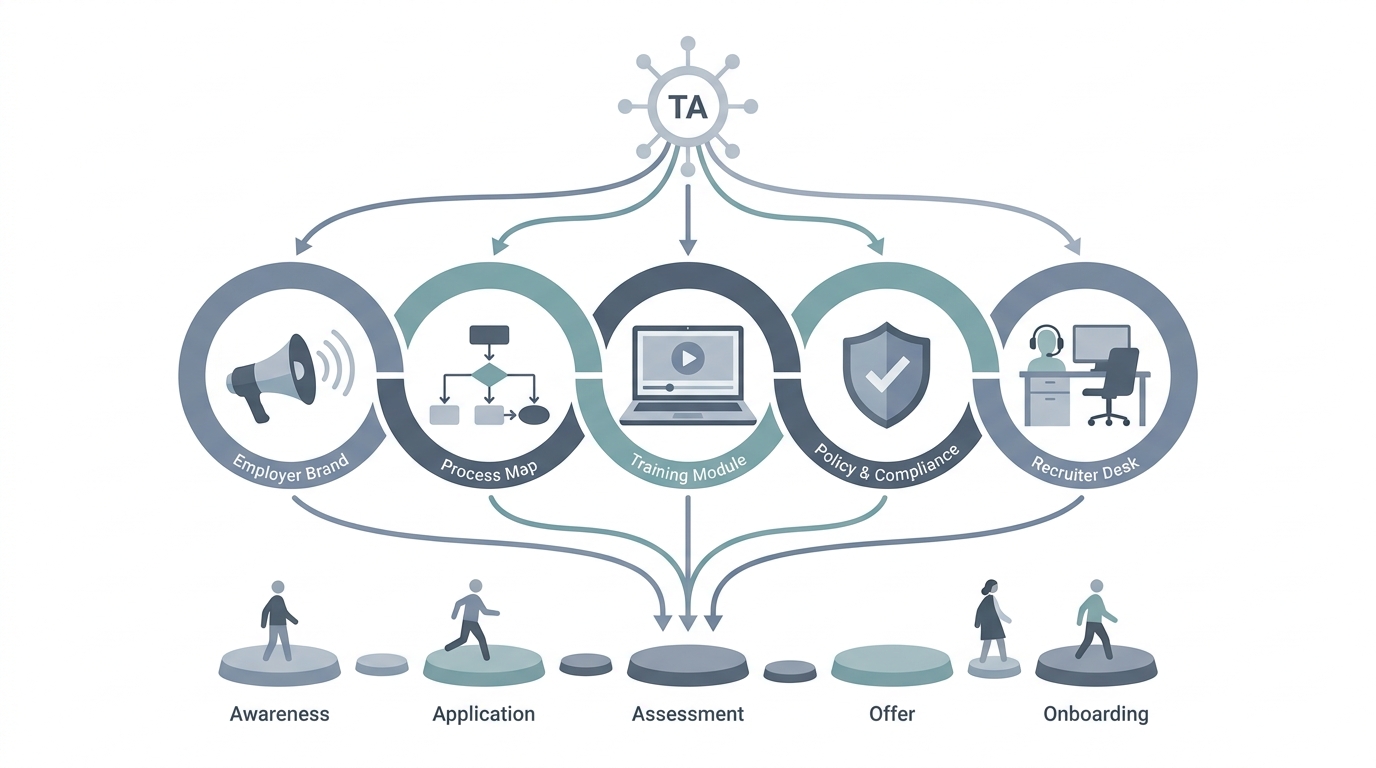

The full function that designs how a company attracts, selects, and prepares talent for onboarding. It spans employer brand, process, tooling, compliance, and recruiter enablement, not only filling reqs.

Michal Juhas · Last reviewed May 2, 2026

What is talent acquisition (TA)?

Talent acquisition is the full hiring function, from how you attract people to how you run interviews and onboarding handoffs, not only filling one open role. It covers process, tools, training, and how the team stays compliant.

In practice

- LinkedIn titles show "Head of Talent Acquisition" or "VP TA" while line recruiters still say "I run reqs." Business press uses "talent acquisition" when it talks about company-wide hiring strategy, not one open role.

- An all-hands meeting may feature the TA leader explaining new assessment tools or employer brand, which signals that TA owns more than filling seats this week.

- Candidates rarely say the phrase, but they feel TA in how smooth scheduling is, how comms sound, and whether feedback loops work across months.

Quick read, then how hiring teams use it

This is for recruiters, sourcers, TA, and HR partners who need the same vocabulary in debriefs, vendor calls, and policy reviews. Skim the first section when you need a fast shared picture. Use the second when you are deciding how it shows up in the ATS, sourcing tools, or candidate communications.

Plain-language summary

- What it means for you: Recruiting often sounds like filling jobs this month. Talent acquisition sounds like building the pipelines, tools, and relationships that make next quarter easier too.

- How you would use it: You still interview people, but you also care about metrics, employer brand, and what happens before the req opens.

- How to get started: Draw two circles: "req today" versus "bench and relationships." List three tasks in each circle you actually do weekly.

- When it is a good time: When leadership says "TA" in strategy decks and you need a shared definition with finance.

When you are running live reqs and tools

- What it means for you: TA spans the interface with workforce planning, CRM and talent pool hygiene, internal mobility, and recruiter operations, not only reqs.

- When it is a good time: When AI pilots need an owner for data, policy, and vendor selection across HR tech.

- How to use it: Pair AI-native governance with TA ops metrics: time in stage, source quality, and consent posture, not only time-to-fill.

- How to get started: Read "Guides for talent acquisition managers" on this site and map each AI assistant to the system of record it reads from or writes to.

- What to watch for: Title inflation where "TA" means everything, and AI demos that skip compliance because "recruiting owns it."
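
For teams that want to see what "time in stage" looks like next to time-to-fill, here is a minimal sketch in Python. It assumes you can export stage-change events from your ATS as (candidate, stage, date) rows. The event list, stage names, and function names are hypothetical and made up for illustration; they do not describe any specific ATS export.

```python
from collections import defaultdict
from datetime import date

# Hypothetical stage-change events: (candidate_id, stage_entered, date_entered).
# All IDs, stage names, and dates are invented for this example.
events = [
    ("c1", "applied", date(2026, 1, 5)),
    ("c1", "screen", date(2026, 1, 8)),
    ("c1", "onsite", date(2026, 1, 15)),
    ("c1", "hired", date(2026, 1, 29)),
    ("c2", "applied", date(2026, 1, 6)),
    ("c2", "screen", date(2026, 1, 13)),
    ("c2", "rejected", date(2026, 1, 14)),
]


def days_in_stage(events):
    """Return {stage: [days spent]} from gaps between consecutive events per candidate."""
    by_candidate = defaultdict(list)
    for candidate, stage, entered in events:
        by_candidate[candidate].append((entered, stage))

    durations = defaultdict(list)
    for history in by_candidate.values():
        history.sort()  # order each candidate's events by date
        # Time in a stage is the gap until the next stage change.
        for (start, stage), (end, _next_stage) in zip(history, history[1:]):
            durations[stage].append((end - start).days)
    return dict(durations)


def average_days(durations):
    """Average the per-candidate durations for each stage."""
    return {stage: sum(days) / len(days) for stage, days in durations.items()}


stage_days = days_in_stage(events)
avg = average_days(stage_days)
print(avg)
```

A terminal stage such as "hired" or "rejected" has no following event, so it gets no duration. Reporting a per-stage average like this shows where candidates wait, which a single time-to-fill number hides.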

Where we talk about this

AI in recruiting workshops speak to TA leads who must align sourcers, recruiters, and hiring managers on the same vocabulary. Sourcing automation workshops speak to the same leaders about API keys and data contracts. Both audiences show up at Workshops.

Around the web (opinions and rabbit holes)

Third-party creators move fast. Treat these as starting points, not endorsements, and double-check anything before you wire candidate data.

YouTube

- What is Talent Acquisition? (Microsoft Cloud) is not a perfect definition video, but it mirrors enterprise vocabulary decks.

- The AI Adoption Curve Explained (IBM Technology) helps when TA leads own "digital transformation" narratives.

- Introduction to Large Language Models (Google Cloud Tech) is a safe share for cross-functional committees.

Reddit

- How is AI changing recruiting? in r/recruiting includes TA-wide policy threads.

- Talent acquisition & Talent pool from 0 in r/recruiting mixes scope and execution questions.

- ATS recommendations in r/Recruitment often reflects TA ops buying power.

Quora

- What is the difference between recruiting and talent acquisition? is messy but matches how candidates Google the terms.

Recruiting versus TA scope

| Lens | Recruiting emphasis | TA emphasis |
|---|---|---|
| Time horizon | This quarter's reqs | Multi-quarter capability |
| Metrics | Fill speed, quality of hire | System health, risk, enablement |
| AI focus | Personal productivity | Standards, training, vendors |

Related on this site

- Blog: How to use AI in recruiting

- Tools directory: /tools

- Guides hub: /guides

- Foundation course: Starting with AI: the foundations in recruiting