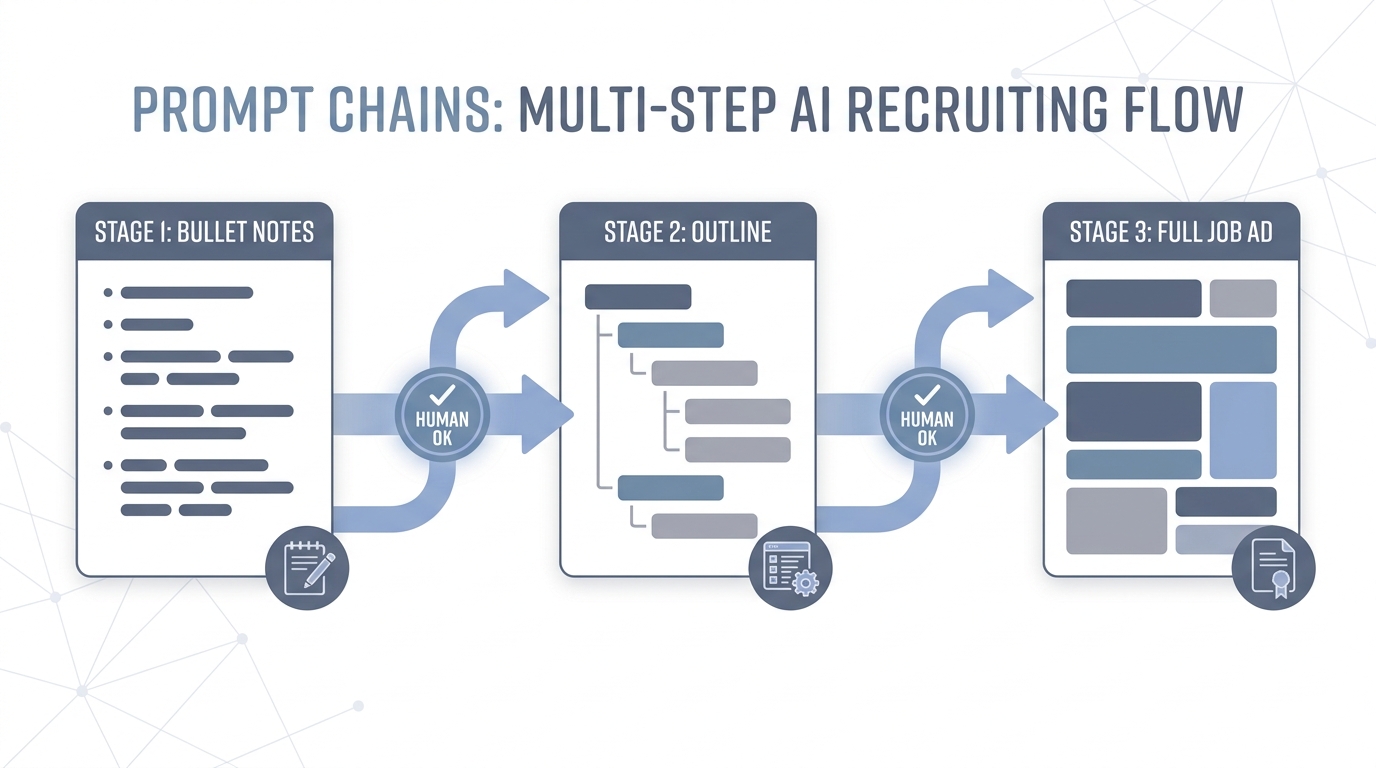

Prompt chain

A sequence of model calls (or human checkpoints) in which each step consumes the previous step's output: for example, intake notes to outline, outline to job description (JD), JD to outreach, with review gates in between.

Michal Juhas · Last reviewed May 2, 2026

What is a prompt chain?

A prompt chain is several AI steps in a row, where each step uses the output from the step before. A common flow is intake bullets, then a JD outline, then full job ad copy, with a human review before anything goes live.

In practice

- You grab bullet notes from a hiring manager, turn them into a JD outline, then expand into full ad copy in separate chat steps. That everyday sequence is a prompt chain even if nobody labels it; ops blogs sometimes call it chained prompting.

- Internal playbooks read "step one intake summary, step two brand review" with an AI assist at each gate. You hear "do not skip step one" after someone once generated outreach before the JD was final and the team got burned.

- A teammate keeps the outline in a doc between steps so the next person can see what the model saw. That pause is the human-shaped gap between chain links.

Quick read, then how hiring teams use it

This is for recruiters, sourcers, TA, and HR partners who need the same vocabulary in debriefs, vendor calls, and policy reviews. Skim the first section when you need a fast shared picture. Use the second when you are deciding how it shows up in the ATS, sourcing tools, or candidate communications.

Plain-language summary

- What it means for you: Instead of one giant ask, you break hiring work into small steps: summarize, then score, then rewrite, with a human between the risky steps.

- How you would use it: You run step one, you read the output, you paste only what step two needs.

- How to get started: Split "write outreach" into (a) extract facts, (b) draft, (c) shorten. Save each mini-prompt.

- When it is a good time: When single-shot answers wander or when compliance wants an audit trail between stages.
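
The three-step split above can be sketched in Python. This is a minimal illustration under stated assumptions, not a recommended implementation: `call_model` is a hypothetical stand-in for whatever chat tool or API you use, and the prompt wording in `MINI_PROMPTS` is invented for the example.

```python
from typing import Callable

# Hypothetical mini-prompts, one per step. The wording is illustrative only.
MINI_PROMPTS = {
    "extract": "List the key facts from these intake notes as bullets:\n{text}",
    "draft": "Write a short outreach message using only these facts:\n{text}",
    "shorten": "Shorten this message to under 80 words:\n{text}",
}

STEP_ORDER = ["extract", "draft", "shorten"]


def run_chain(notes: str, call_model: Callable[[str], str]) -> dict[str, str]:
    """Run each step on the previous step's output and keep every intermediate result."""
    outputs: dict[str, str] = {}
    current = notes
    for step in STEP_ORDER:
        prompt = MINI_PROMPTS[step].format(text=current)
        current = call_model(prompt)
        outputs[step] = current  # saved so a human can read it between steps
    return outputs


def fake_model(prompt: str) -> str:
    """Stand-in for a real model call: echoes the instruction line so the chain runs offline."""
    return "[model output for: " + prompt.splitlines()[0] + "]"


if __name__ == "__main__":
    results = run_chain("Senior data engineer, remote, Python and SQL", fake_model)
    for step, text in results.items():
        print(step, "->", text)
```

Keeping every intermediate output in `outputs` is the point of the sketch: a reviewer can stop after any step, edit the text, and pass on only what the next step needs.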

When you are running live reqs and tools

- What it means for you: Chains are explicit control flow over LLM calls: map, reduce, branch, tool calls. They relate to LangGraph-style builders and to human-in-the-loop gates before workflow automation fires.

- When it is a good time: When one-shot personalization raises hallucination risk and you need to check facts before they reach a message.

- How to use it: Log intermediate JSON, cap retries, and keep candidate-facing sends behind review.

- How to get started: Model one req in a notebook or script, then promote to shared tooling.

- What to watch for: Chains that hide errors until the last step, and chains nobody can update when the policy changes.
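
The "log intermediate JSON, cap retries, keep sends behind review" advice above might look roughly like the following sketch. Everything named here is an assumption for illustration: `call_model` is a hypothetical function that returns the model's raw text, `MAX_RETRIES` is an arbitrary cap, and `queue_for_review` only marks a draft as pending instead of talking to any real ATS or email tool. What you may log about candidates depends on your own privacy review.

```python
import json
import logging
from typing import Callable

logging.basicConfig(level=logging.INFO)
log = logging.getLogger("prompt_chain")

MAX_RETRIES = 2  # assumed cap; tune to your own cost and latency limits


def run_step(name: str, prompt: str, call_model: Callable[[str], str]) -> dict:
    """Call the model, require a JSON object back, and retry a capped number of times."""
    for attempt in range(1, MAX_RETRIES + 2):  # first try plus MAX_RETRIES retries
        raw = call_model(prompt)
        try:
            parsed = json.loads(raw)
        except json.JSONDecodeError:
            log.warning("step=%s attempt=%d returned invalid JSON", name, attempt)
            continue
        if isinstance(parsed, dict):
            # Log the intermediate result so errors surface at the step that caused them.
            log.info("step=%s output=%s", name, json.dumps(parsed))
            return parsed
        log.warning("step=%s attempt=%d returned non-object JSON", name, attempt)
    raise RuntimeError(f"step {name!r} failed after {MAX_RETRIES + 1} attempts")


def queue_for_review(draft: dict) -> dict:
    """Mark a candidate-facing draft as pending; nothing is sent from this function."""
    return {**draft, "status": "pending_human_review"}
```

Failing loudly at the step that broke, instead of passing bad output down the chain, is one way to avoid errors that stay hidden until the last step.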

Where we talk about this

Sourcing automation workshops compare chains versus one-shot when APIs return structured fields worth validating between calls. AI in recruiting workshops use the same pattern for intake and outreach. See both at Workshops.
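
As a rough sketch of validating structured fields between calls, the example below checks a record before it is allowed into the next prompt. The field names in `REQUIRED_FIELDS` and the prompt wording are hypothetical; a real sourcing API will have its own schema.

```python
REQUIRED_FIELDS = {"name": str, "current_title": str, "skills": list}  # hypothetical schema


def validate_record(record: dict) -> list[str]:
    """Return a list of problems; an empty list means the record can go to the next step."""
    problems = []
    for field, expected_type in REQUIRED_FIELDS.items():
        value = record.get(field)
        if value is None:
            problems.append(f"missing field: {field}")
        elif not isinstance(value, expected_type):
            problems.append(f"wrong type for {field}: expected {expected_type.__name__}")
        elif not value:
            problems.append(f"empty field: {field}")
    return problems


def build_outreach_prompt(record: dict) -> str:
    """Build the next prompt only from validated fields; refuse otherwise."""
    problems = validate_record(record)
    if problems:
        raise ValueError("; ".join(problems))
    skills = ", ".join(str(s) for s in record["skills"])
    return (
        f"Draft a short outreach note to {record['name']}, "
        f"currently {record['current_title']}, mentioning: {skills}."
    )
```

A one-shot prompt has no equivalent checkpoint: a missing title or empty skills list would simply be papered over by the model.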

Around the web (opinions and rabbit holes)

Third-party creators move fast. Treat these as starting points, not endorsements, and double-check anything before you wire candidate data.

YouTube

- LangChain Explained in 13 Minutes (TechWorld with Nana) is builder-oriented vocabulary for chained calls.

- Let's build GPT: from scratch, in code, spelled out (Andrej Karpathy) explains why multi-step reasoning is not one monolith.

- Introduction to Large Language Models (Google Cloud Tech) stays executive-friendly when IT joins the meeting.

Reddit

- How do you chain multiple LLM calls? in r/LangChain is a pragmatic thread.

- Few shot examples vs long prompt? in r/ChatGPT overlaps with when to split steps instead of stuffing one prompt.

- JSON mode vs function calling in r/OpenAI is API-shaped and relevant to structured steps.

Quora

- What is prompt engineering? is broad but links chains to everyday "please rewrite" habits.

Chain versus single-shot

| Approach | Best for | Risk |
|---|---|---|
| Single-shot | Tiny tasks | Hidden assumptions |
| Chain | Multi-artifact hiring | More handoffs to own |
| Chain + automation | High volume | API and GDPR review |

Related on this site

- Blog: AI candidate screening

- Tools: n8n

- Guides: HR business partners

- Course: Starting with AI: the foundations in recruiting