Pre-employment testing software

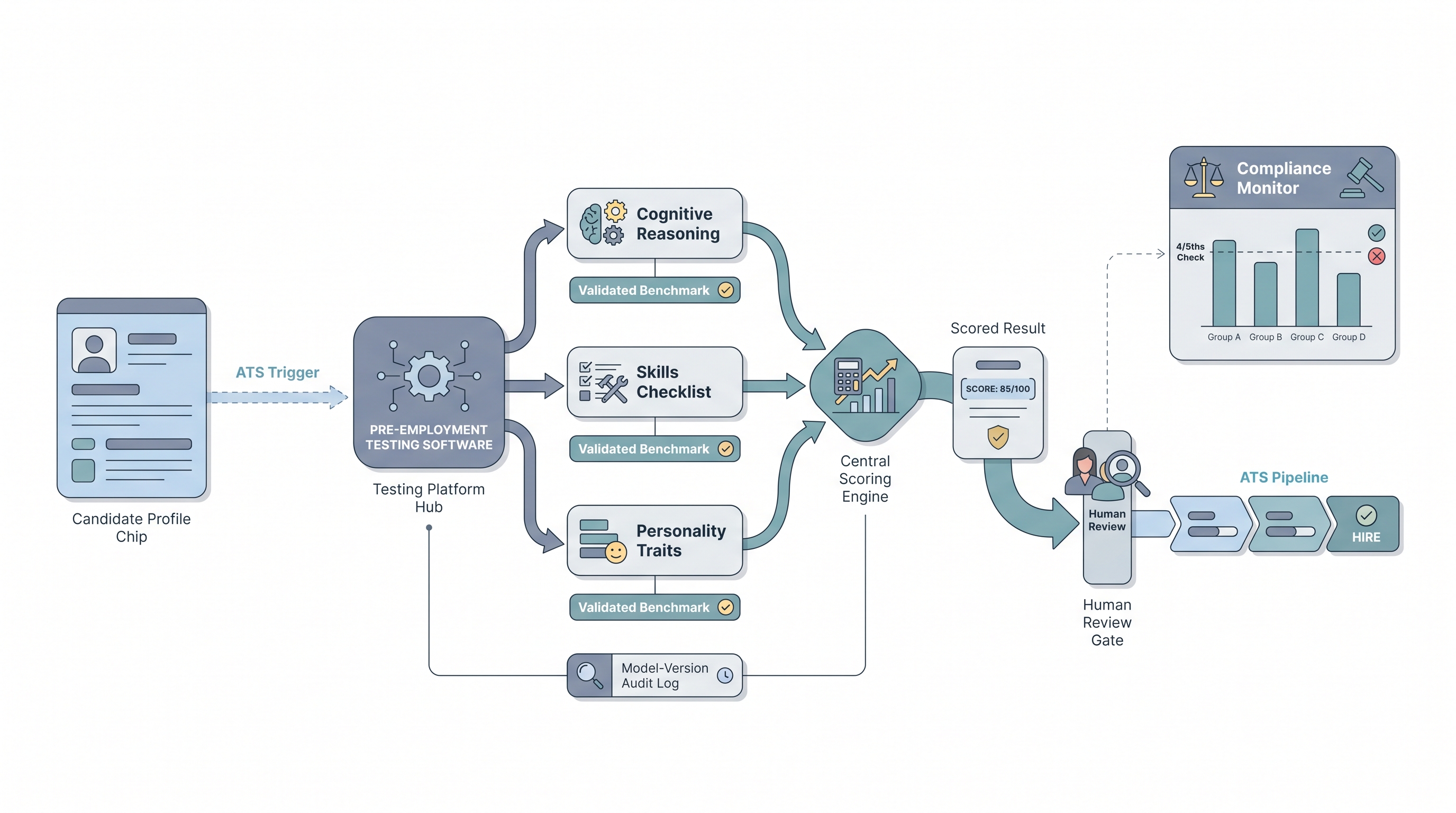

A category of SaaS platform that administers, scores, and audits standardized assessments before a hiring decision. It covers cognitive ability, skills, personality, and situational judgment tests, and adds compliance reporting for adverse impact monitoring plus ATS integration, so results feed the pipeline with a documented audit trail.

Michal Juhas · Last reviewed May 9, 2026

What is pre-employment testing software?

Pre-employment testing software is the category of platform that organizations use to administer, score, and audit standardized tests before making hiring decisions. The core job is consistent delivery: every candidate for the same role receives the same validated assessment under the same conditions, and the scored result feeds the hiring pipeline with a documented audit trail.

The category covers a wide range of test types: cognitive ability tests measuring verbal reasoning, numerical thinking, and abstract reasoning; skills-based work samples; personality questionnaires; situational judgment tests; and technical screens for coding or role-specific tasks. Most enterprise platforms include a compliance layer: pass-rate reporting by demographic group, so TA teams can spot adverse impact before it compounds undetected across thousands of applicants.

The software typically sits between your sourcing and interview stages, integrated into the ATS so test completion updates the candidate stage automatically. What separates a defensible deployment from a legal exposure is whether the instruments inside the platform were validated for your role family, not just for the vendor's reference population.

In practice

- A TA ops lead setting up a new pre-employment testing platform asks the vendor for a technical manual for each assessment module before configuring cut scores, not after the first cohort has been screened.

- A recruiter sees two candidates with similar cognitive scores and uses the result to calibrate interview focus areas, not as a pass-fail gate before the hiring manager has any input.

- An HRBP reviewing last quarter's hiring cohort runs the platform's group pass-rate report before closing the req pool to confirm that no protected group passed at a rate below 80 percent of the highest-passing group's rate (the four-fifths rule of thumb).
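
The pass-rate check in the last example can be sketched in a few lines. This is a minimal illustration, assuming you can export per-group counts of candidates tested and passed from the platform. The function names `impact_ratios` and `flagged_groups`, the group labels, and the counts are all hypothetical, and the 0.8 threshold is the 80 percent comparison described above, not a legal determination.

```python
from typing import Dict, List, Tuple

FOUR_FIFTHS = 0.8  # the 80 percent comparison described above


def impact_ratios(counts: Dict[str, Tuple[int, int]]) -> Dict[str, float]:
    """Return each group's pass rate divided by the highest group pass rate.

    counts maps a group label to (passed, tested). This input shape is an
    assumption; adapt it to whatever your platform's report exports.
    """
    rates = {}
    for group, (passed, tested) in counts.items():
        if tested <= 0:
            raise ValueError(f"group {group!r} has no tested candidates")
        rates[group] = passed / tested
    top = max(rates.values())
    if top == 0:
        raise ValueError("no group has any passing candidates")
    return {group: rate / top for group, rate in rates.items()}


def flagged_groups(
    counts: Dict[str, Tuple[int, int]], threshold: float = FOUR_FIFTHS
) -> List[str]:
    """List groups whose impact ratio falls below the threshold."""
    ratios = impact_ratios(counts)
    return sorted(group for group, ratio in ratios.items() if ratio < threshold)


# Illustrative numbers only, not real data.
example = {"group_a": (60, 100), "group_b": (42, 100), "group_c": (27, 50)}
print(impact_ratios(example))
print(flagged_groups(example))
```

A flagged group is a prompt for review, not a conclusion: small groups produce noisy ratios, so read the output alongside the group sizes behind it.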

Quick read, then how hiring teams use it

This is for recruiters, sourcers, TA, and HR partners who need the same vocabulary in vendor briefings, debrief rooms, and policy conversations. Skim the first section for a fast shared picture. Use the second when you are deciding whether a testing layer belongs in a live req or how to evaluate a scoring platform.

Plain-language summary

- What it means for you: Pre-employment testing software is the platform your team uses to send standardized tests to candidates, collect scored results, and push that data into your ATS so every hiring decision includes the same measured inputs alongside interview notes.

- How you would use it: Choose the test types that match your role requirements (cognitive, skills, personality), set a consistent invite trigger in your ATS, and brief candidates on the purpose and timing before they receive the link.

- How to get started: Ask your vendor for validity evidence tied to your specific role families, confirm data residency before collecting psychometric scores from candidates in the EU, and pilot on a closed role with past hires before going live.

- When it is a good time: After you have named the predictors of performance on the role scorecard, and after legal has confirmed your lawful basis for processing psychometric data in each jurisdiction where you hire.

When you are running live reqs and tools

- What it means for you: Pre-employment testing software generates scored candidate data at scale. Without pass-rate monitoring, a screen that appears neutral can compound adverse impact across thousands of applicants before anyone notices.

- When it is a good time: After ATS integration is confirmed, after the human-in-the-loop gate is documented (who reviews a flagged result before a decline is recorded), and after version logging is active so you know which instrument version ran against each cohort.

- How to use it: Configure the platform to log test version and cut score per role, connect pass-rate reporting to your quarterly talent acquisition metrics review, and set a minimum sample size (40 per group is a practical floor) before interpreting group differences.

- How to get started: Run a pilot on a closed role with past hires first. Compare test scores against your own performance ratings for that cohort before deploying on live reqs. A vendor that resists pilot data requests is a vendor worth scrutinizing closely.

- What to watch for: Vendors who market culture fit or values alignment scores without publishing the validated instrument underneath. That framing should trigger a full vendor evaluation of their candidate assessment tools before you sign anything.
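
The pilot described above comes down to one comparison: do test scores track the performance ratings you already hold for past hires? Below is a minimal sketch, assuming paired scores and ratings for the same people. The names `pearson_r` and `pilot_summary` and the sample numbers are hypothetical, and `min_n=40` simply borrows the per-group floor from the list above as an assumed default. A correlation on a small pilot of already-hired people is a rough signal only, not a validation study.

```python
import math
from typing import Dict, Sequence, Union


def pearson_r(xs: Sequence[float], ys: Sequence[float]) -> float:
    """Pearson correlation between two equal-length numeric sequences."""
    if len(xs) != len(ys):
        raise ValueError("scores and ratings must be paired one-to-one")
    n = len(xs)
    if n < 2:
        raise ValueError("need at least two paired observations")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined when one variable is constant")
    return cov / math.sqrt(var_x * var_y)


def pilot_summary(
    scores: Sequence[float], ratings: Sequence[float], min_n: int = 40
) -> Dict[str, Union[int, float, bool]]:
    """Return the correlation plus a flag for whether the sample meets min_n.

    min_n is an assumed default, not a statistical guarantee.
    """
    return {
        "n": len(scores),
        "r": pearson_r(scores, ratings),
        "meets_min_n": len(scores) >= min_n,
    }


# Illustrative numbers only, not real candidate data.
test_scores = [52, 61, 47, 70, 66, 58]
perf_ratings = [3.1, 3.8, 2.9, 4.4, 3.9, 3.5]
print(pilot_summary(test_scores, perf_ratings))
```

Keeping the sample-size flag next to the coefficient makes it harder to over-read a strong-looking correlation from a handful of hires.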

Where we talk about this

In AI with Michal live sessions, the assessment layer comes up in both the AI in recruiting track and the compliance and ethics modules. There, participants walk through real vendor technical manuals, identify which validity coefficients matter for their role types, and discuss where testing belongs in a structured hiring funnel. Join a session at Workshops to work through a live vendor evaluation exercise with peers, and continue in membership office hours when a specific platform RFP or existing deployment question surfaces.

Around the web (opinions and rabbit holes)

Third-party creators move fast. Treat these as starting points, not endorsements, and verify before wiring any assessment platform into a candidate-facing step.

YouTube

Use Filters > Upload date to find recent talks from IO psychologists and employment law practitioners alongside vendor demos.

- Pre-employment testing software comparison cognitive ability (platform comparisons grounded in validity evidence, not just feature lists)

- Pre-employment assessment adverse impact EEOC compliance (how pass-rate differences interact with group demographics and legal exposure)

- AI pre-employment testing validity bias research (what the independent research actually shows when AI-scored assessments are compared to validated instruments)

- Situational judgment tests hiring validity norming (why SJTs need role-specific norming and how to read a technical manual)

Reddit

- r/IOPsychology has active threads on which pre-employment testing platforms have real criterion validity and which are oversold by marketing claims.

- r/recruiting captures recruiter discussions on legal risk, vendor pitches, and what happens when hiring managers push back on test scores.

- r/humanresources surfaces HRBP perspectives on where testing platforms belong in a pre-employment process and how to handle candidate transparency requests.

Quora

- Quora search: pre-employment testing software surfaces practitioner and researcher answers on platform selection and compliance; quality varies, so verify citations before acting on specific platform recommendations.

Pre-employment test types: what the platform should prove

| Test type | What it measures | Validity evidence quality |
|---|---|---|
| Cognitive ability (g) | Verbal, numerical, abstract reasoning | Strong across complex roles; widely published |
| Work sample or skills test | Role-specific task performance | High for the specific task, limited transfer |
| Personality questionnaire (Big Five) | Stable trait patterns | Moderate; strongest for conscientiousness |
| Situational judgment test (SJT) | Judgment in role-realistic scenarios | Moderate; requires role-specific norming |
| AI-inferred traits from video or text | Claimed trait inference | Low to unknown; no independent validation standard |

Related on this site

- Glossary: Pre-employment personality test, Pre-employment assessment test, Pre-employment assessment tools

- Glossary: Candidate assessment tools, Hiring assessment tools, Employment assessment tools

- Glossary: Adverse impact, AI bias audit, Human-in-the-loop (HITL), Scorecard

- Glossary: Applicant tracking software, Talent acquisition metrics

- Blog: AI sourcing tools for recruiters

- Live cohort: Workshops

- Membership: Become a member

- Course: Starting with AI: the foundations in recruiting