Requisition funnel reporting

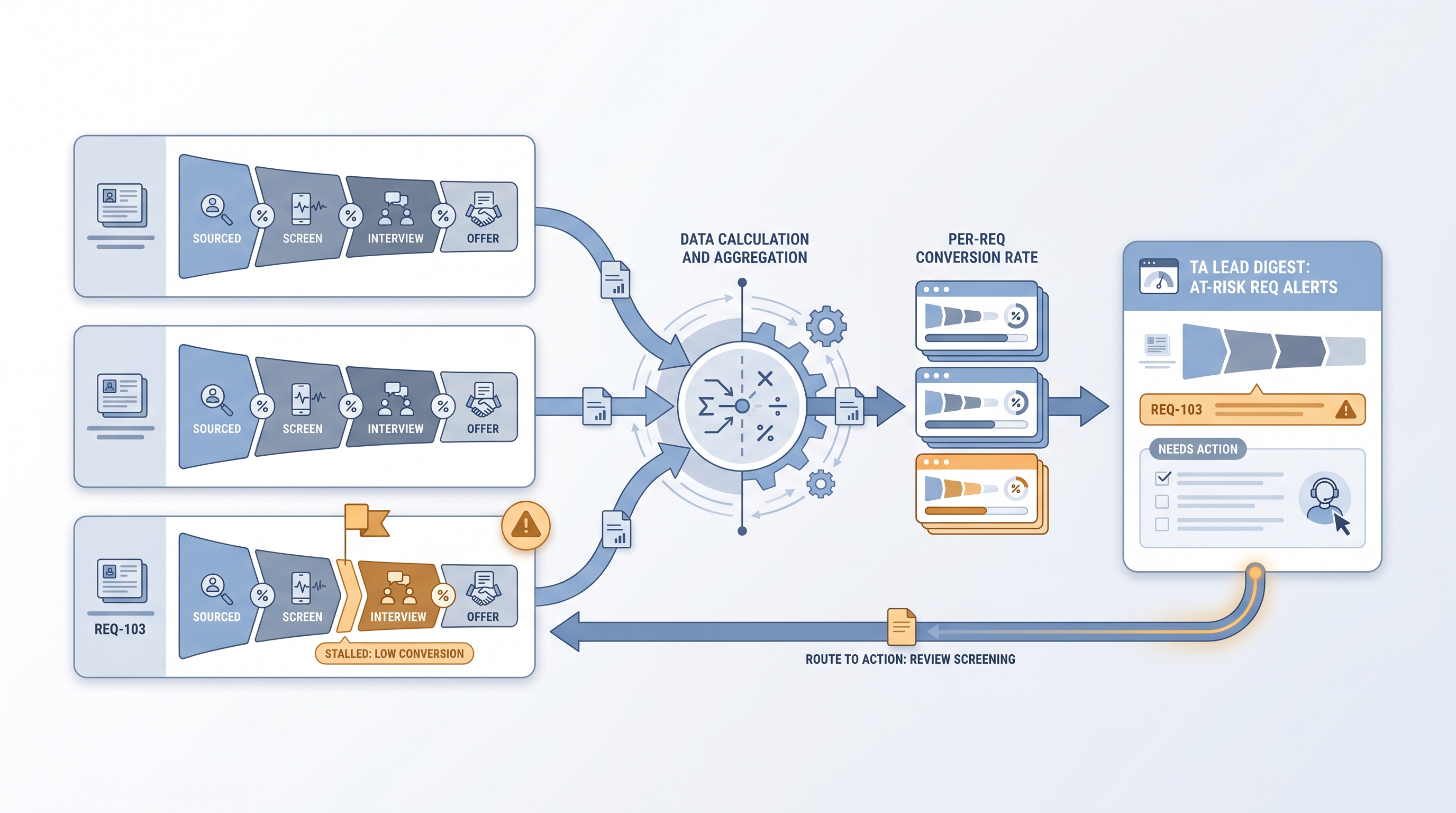

Per-requisition breakdown of how candidates move from first source or application through each hiring stage to offer, showing stage-by-stage conversion rates so TA leads can pinpoint where individual reqs are stalling.

Michal Juhas · Last reviewed May 8, 2026

What is requisition funnel reporting?

Requisition funnel reporting is the practice of tracking stage-by-stage candidate conversion rates and volumes for each individual open role. It shifts attention from blended pipeline health metrics to the specific conversion story inside one req: how many candidates entered at each stage, how many advanced, and where the flow stalled.

Unlike pipeline coverage reporting, which asks whether a req has enough active candidates to close by the target date, req funnel reporting explains why candidates stopped progressing in the first place. A req with consistent drop-off at the hiring manager review stage tells a different story than one where candidates stall between offer and acceptance.

In practice

- A TA lead reviewing the weekly req report sees that one engineering role has a 22% screened-to-HM-review conversion versus a 58% baseline for the same role family, which tells her the hiring manager is declining most submissions and an intake recalibration is overdue.

- A recruiter pulls a one-page req funnel summary before a hiring manager sync to show exactly where the last 14 candidates dropped out, replacing a vague "the pipeline is slow" conversation with a specific ask.

- An automated Friday digest uses ATS stage-count exports processed through a structured output prompt to generate per-req conversion tables, flagging any req where a stage conversion is more than 20 points below the role-family baseline.
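The flagging rule in the last example can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the function name, the stage keys, and the second stage's numbers are hypothetical, and only the 22% versus 58% figures and the 20-point gap come from the examples above.

```python
# Gap, in percentage points, beyond which a stage is flagged (from the digest example).
FLAG_GAP_POINTS = 20


def flag_stalled_stages(req_rates, baseline_rates, gap_points=FLAG_GAP_POINTS):
    """Return (stage, req_rate, baseline_rate, gap) for every stage whose
    conversion is more than gap_points below the role-family baseline."""
    flagged = []
    for stage, baseline in baseline_rates.items():
        rate = req_rates.get(stage)
        if rate is None:
            continue  # this req has no data for the stage yet
        gap = baseline - rate
        if gap > gap_points:
            flagged.append((stage, rate, baseline, gap))
    return flagged


# Conversion rates in percent. The first stage mirrors the engineering req above;
# the second stage is made-up data for illustration.
req = {"screened_to_hm_review": 22.0, "hm_review_to_interview": 50.0}
baseline = {"screened_to_hm_review": 58.0, "hm_review_to_interview": 55.0}

flags = flag_stalled_stages(req, baseline)
for stage, rate, base, gap in flags:
    print(f"{stage}: {rate:.0f}% vs {base:.0f}% baseline ({gap:.0f} points below)")
```

Only the screened-to-HM-review stage is flagged here, because the second stage sits 5 points below baseline, well inside the threshold.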

Quick read, then how hiring teams use it

This is for recruiters, TA ops leads, and HR partners who need the same vocabulary in standup reviews, vendor evaluations, and hiring manager calibrations. Skim the first section when you need shared language fast. Use the second when configuring ATS reports or automating weekly digests.

Plain-language summary

- What it means for you: Req funnel reporting tells you the conversion story inside each individual open role, not just whether the overall pipeline looks busy. It shows where candidates are stopping and helps you pinpoint the fix.

- How you would use it: Pull stage counts from your ATS weekly, calculate the conversion rate at each stage for each active req, and compare to your historical baseline for that role type.

- How to get started: Start with your two hardest-to-fill open reqs. Export stage counts, map them to a simple six-row table, and share it at your next hiring manager sync. Refine the stage definitions over the next three hires.

- When it is a good time: Any time a req is aging beyond your typical time-to-fill baseline and you need a data-backed explanation for why.
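The "calculate the conversion rate at each stage" step is simple arithmetic: candidates who reached the next stage divided by candidates who reached the current one. A minimal sketch follows, assuming your ATS export gives cumulative counts (everyone who ever reached a stage, not a snapshot of who sits there today). The stage names and counts are invented for illustration.

```python
def stage_conversions(stage_counts):
    """stage_counts: ordered list of (stage_name, candidates_who_reached_stage).
    Returns one row per stage-to-stage transition with its conversion percentage."""
    rows = []
    for (from_stage, entered), (to_stage, advanced) in zip(stage_counts, stage_counts[1:]):
        # Avoid dividing by zero when nobody has reached the earlier stage.
        rate = round(100 * advanced / entered, 1) if entered else None
        rows.append({
            "from": from_stage,
            "to": to_stage,
            "entered": entered,
            "advanced": advanced,
            "conversion_pct": rate,
        })
    return rows


# Hypothetical six-stage export for one req.
counts = [
    ("Applied or sourced", 120),
    ("Screened", 50),
    ("HM review", 11),
    ("Interview", 6),
    ("Offer", 2),
    ("Accepted", 1),
]

rows = stage_conversions(counts)
for row in rows:
    print(f"{row['from']} -> {row['to']}: {row['conversion_pct']}%")
```

Six stages produce five transitions. Each transition's percentage is what you would compare against the historical baseline for that role type.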

When you are running live reqs and tools

- What it means for you: At scale, req funnel reports replace gut-feel standup updates with a structured per-req conversion view. When integrated with ATS API exports and an automation layer, stall patterns surface before they become urgent.

- When it is a good time: Weekly for all active reqs. Daily for roles with hard deadlines or conversion rates more than 15 points below your role-family baseline. Pair with time-in-stage reporting to see both where volume is dropping and how long candidates sit at each stage before a decision.

- How to use it: Pull ATS stage counts, apply your conversion baselines by role family, and route amber and red reqs to a Slack alert or TA ops digest. Use structured output from an LLM to parse messy stage-count exports into clean Markdown tables if your ATS does not expose clean API data.

- How to get started: Build the simplest version first: one spreadsheet with stage counts per req, one column with conversion rate, one column flagging anything below threshold. Add automation once the stage definitions are stable and hiring managers understand what the numbers mean.

- What to watch for: Inconsistent ATS stage labels across recruiters, which make the funnel meaningless; AI summaries that invent pattern narratives from sparse data; and candidate-level funnel data shared with stakeholders before you have checked your GDPR disclosure scope.
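The "simplest version first" report with amber and red routing could look like the sketch below. The thresholds are assumptions: amber reuses the 15-point figure from the cadence guidance, red reuses the 20-point figure from the digest example, and the minimum sample size of 10 is an arbitrary placeholder meant to guard against reading patterns into sparse data. The req ID, stage names, and counts are hypothetical.

```python
AMBER_GAP = 15   # assumption: points below baseline that mark a stage amber
RED_GAP = 20     # assumption: points below baseline that mark a stage red
MIN_SAMPLE = 10  # assumption: below this many candidates, do not judge the rate


def status(entered, rate_pct, baseline_pct):
    """Classify one stage transition as red, amber, green, or low sample."""
    if entered < MIN_SAMPLE:
        return "low sample"
    gap = baseline_pct - rate_pct
    if gap > RED_GAP:
        return "red"
    if gap > AMBER_GAP:
        return "amber"
    return "green"


def to_markdown(req_id, rows):
    """rows: list of (stage, entered, advanced, baseline_pct). Returns a Markdown table."""
    lines = [
        f"Req {req_id}",
        "",
        "| Stage | Entered | Advanced | Conversion | Baseline | Status |",
        "|---|---|---|---|---|---|",
    ]
    for stage, entered, advanced, baseline in rows:
        rate = 100 * advanced / entered if entered else 0.0
        lines.append(
            f"| {stage} | {entered} | {advanced} | {rate:.0f}% "
            f"| {baseline:.0f}% | {status(entered, rate, baseline)} |"
        )
    return "\n".join(lines)


table = to_markdown("ENG-101", [
    ("Screened to HM review", 50, 11, 58),
    ("HM review to interview", 11, 6, 55),
    ("Interview to offer", 6, 2, 40),
])
print(table)
```

The "low sample" status is a deliberate design choice: with only six candidates at a stage, a single decision moves the rate by roughly 17 points, so the report shows the counts without drawing a conclusion. Tune all three constants to your own baselines before routing anything to Slack or a digest.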

Where we talk about this

On AI with Michal live sessions, requisition funnel reporting comes up in both the AI in recruiting and sourcing automation tracks, because stall patterns at different funnel stages require different interventions: sourcing at the top, process or calibration in the middle, comp at the offer stage. If you want to build a live req funnel report from a real ATS export and hear which thresholds teams actually act on, start at Workshops and bring your most challenging open req with its stage history.

Around the web (opinions and rabbit holes)

Third-party creators move fast. Treat these as starting points, not endorsements, and double-check anything before you wire candidate data.

YouTube

- Recruiting Metrics That Actually Matter (Recruiting Daily Advisor) covers conversion-focused reporting for teams building their first per-req funnel dashboard.

- How to Create a Recruitment Dashboard in Excel walks through stage-count and conversion-rate tables that map directly to per-req funnel logic.

- Talent Acquisition Metrics and Analytics covers the TA metrics landscape including req-level funnel health for leaders building executive reporting.

Reddit

- How do you track pipeline health across all your reqs? in r/recruiting has frank answers from in-house TA teams on how they actually build per-req conversion views.

- Best way to report pipeline status to HMs? in r/recruiting covers the challenge of turning funnel conversion data into hiring manager action.

Quora

- How do talent acquisition teams measure pipeline health? collects practitioner answers on metrics, tools, and reporting cadences (quality varies; read critically).

Req funnel report vs sourcing funnel metrics

| | Requisition funnel reporting | Sourcing funnel metrics |
|---|---|---|
| Unit of analysis | Per open requisition | Per sourcing motion or channel |
| Key question | Where are candidates stalling inside this req? | Is outreach landing and converting? |
| Who acts on it | Recruiter, TA lead, hiring manager | Sourcer, TA ops |
| Primary data source | ATS stage counts per req | Outreach tool, ATS source field |
| Direction | Retrospective (why did conversion drop?) | Forward-looking (is the input working?) |

Related on this site

- Glossary: Pipeline coverage reporting, Sourcing funnel metrics, Time-to-fill, Time in stage reporting, Weekly hiring funnel report, Talent acquisition metrics, Scorecard

- Live cohort: Workshops

- Course: Starting with AI: the foundations in recruiting

- Membership: Become a member