AI and hiring

The intersection of artificial intelligence with the hiring process, covering how machine learning and language model tools are applied across sourcing, screening, scheduling, and reporting stages to help TA teams move faster without lowering decision quality.

Michal Juhas · Last reviewed May 9, 2026

What is AI and hiring?

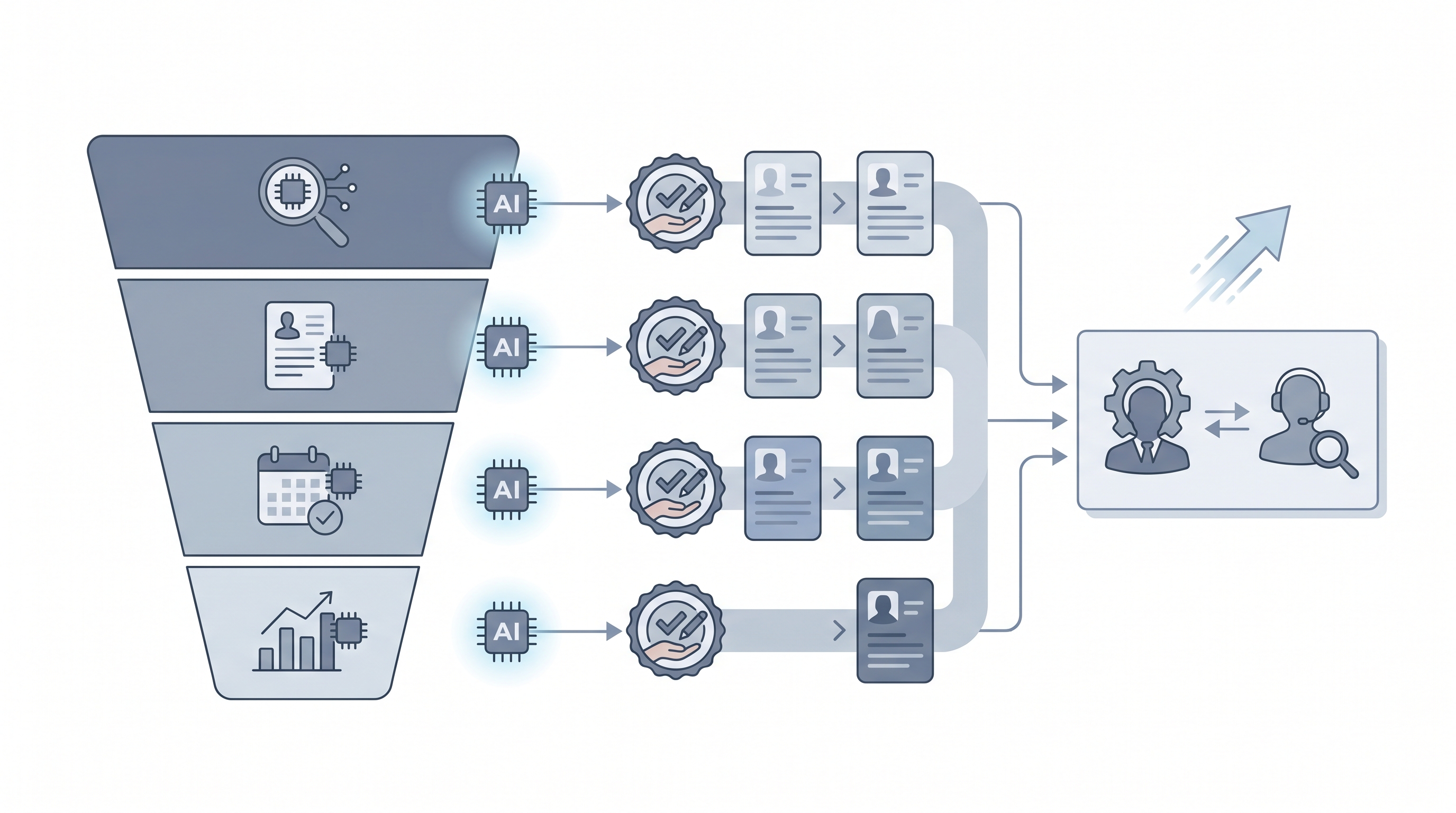

AI and hiring refers to applying machine learning and language model tools at specific stages of the recruiting lifecycle, not as a single gate but as a layer of assistance across sourcing, screening, scheduling, and reporting. At the simplest level, a recruiter uses an LLM to draft a job description from intake notes. At a more integrated level, a platform surfaces AI-ranked shortlists and auto-routes candidates through ATS stages.

What the phrase covers: prompting workflows a recruiter runs manually, vendor tools baked into existing ATS platforms, and custom automations a TA ops team builds with APIs and no-code routers. The common thread is AI handling a step that previously required manual attention so the recruiter can focus on judgment: calibrating with a hiring manager, reading a debrief room, or building trust with a passive candidate over weeks.

In practice

- When a sourcer pastes intake notes into an LLM and gets a first-draft job description back in two minutes, that is AI and hiring at the job definition stage.

- When an ATS vendor markets "AI-matched shortlists," they usually mean a model scored resumes by predicted probability of advancing. That step needs a human review gate before a recruiter acts on it.

- A TA ops lead saying "the AI is ghosting candidates" usually means an automated outreach sequence sent messages too quickly and got flagged as spam, not that a model made a relationship decision.

Quick read, then how hiring teams use it

This section is for recruiters, sourcers, TA partners, and HR leaders who need the same vocabulary for vendor calls, debrief conversations, and tool decisions. Skim the first part for a shared definition. Read the second when you are deciding what to try, buy, or put in front of a hiring manager.

Plain-language summary

- What it means for you: AI and hiring is a label for any tool or technique that uses machine learning to help your team move candidates faster: writing, searching, summarising, scheduling, or predicting outcomes at a specific stage.

- How you would use it: You connect AI to one step where you lose more than 30 minutes per week, write or choose a prompt for that step, and review the output before it touches a candidate record or goes out as a message.

- How to get started: Start with one output you already produce manually (a screening summary, a job post, an outreach draft) and ask an LLM to do a first draft. Compare it to your own work for two weeks before adding automation.

- When it is a good time: After you know exactly what a good output looks like and can spot a bad one in 30 seconds. Not while the process is still changing every week.

When you are running live reqs and tools

- What it means for you: AI and hiring shifts recruiter time from production tasks (first drafts, note formatting, search query construction) to judgment tasks (calibration, candidate relationships, offer negotiation). That trade-off only holds if outputs are reviewed before they hit your ATS or a candidate inbox.

- When it is a good time: After you have stable prompts, a review gate, and someone named as the owner for errors. Workflow automation that fires before those conditions are met creates more problems than it solves.

- How to use it: Pair an LLM drafting layer with your ATS and comms stack. Keep candidate-facing sends behind a human gate. Log what each prompt is doing so compliance questions have a paper trail.

- How to get started: Pick one integration: call summaries pushed to candidate notes, or JD drafts from intake form answers. Ship that with a review step before you add a second automation. Read AI in recruiting for the funnel-wide view of where AI connects.

- What to watch for: Confident wrong output, stale data passed through as true, and prompts baked into automations that nobody updates when policy or job requirements change.
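The human gate and paper trail described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a reference implementation: `Draft`, `ReviewGate`, the prompt name, and the version label are all hypothetical, and the `send` method returns the text as a stand-in for whatever ATS or email call your stack actually uses.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass
class Draft:
    """An LLM-produced draft waiting for human review (hypothetical structure)."""
    candidate_id: str
    prompt_name: str       # which stored prompt produced this draft
    prompt_version: str    # bump when policy or job requirements change
    text: str
    approved_by: Optional[str] = None


@dataclass
class ReviewGate:
    """Holds drafts until a named reviewer approves them and logs each step."""
    audit_log: list = field(default_factory=list)

    def _log(self, event: str, draft: Draft, actor: str) -> None:
        # One log entry per event, tagged with the prompt and version used.
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "event": event,
            "actor": actor,
            "candidate_id": draft.candidate_id,
            "prompt": f"{draft.prompt_name}@{draft.prompt_version}",
        })

    def submit(self, draft: Draft) -> None:
        self._log("drafted", draft, actor="llm")

    def approve(self, draft: Draft, reviewer: str) -> None:
        draft.approved_by = reviewer
        self._log("approved", draft, actor=reviewer)

    def send(self, draft: Draft) -> str:
        # Refuse any candidate-facing send that no human has approved.
        if draft.approved_by is None:
            raise PermissionError("Draft has not been approved by a human reviewer")
        self._log("sent", draft, actor=draft.approved_by)
        return draft.text  # placeholder for the real ATS or email integration


if __name__ == "__main__":
    gate = ReviewGate()
    draft = Draft("cand-001", "outreach_first_touch", "v3", "Hi Sam, ...")
    gate.submit(draft)
    gate.approve(draft, reviewer="recruiter@example.com")
    gate.send(draft)
    for entry in gate.audit_log:
        print(entry["event"], entry["actor"], entry["prompt"])
```

Recording the prompt name and version on every log entry is what makes a stale prompt traceable later: if policy changes, you can see which sends still used the old version.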

Where we talk about this

On AI with Michal live sessions, "AI and hiring" is the opening frame before we narrow into sourcing automation or interview workflows. The AI in recruiting track covers the full lifecycle with live tool demos on real req briefs. The sourcing automation track goes deeper on outreach sequences and ATS integrations. If you want the room conversation with peer pressure-testing rather than a static page, start at Workshops and bring a real role to work on.

Around the web (opinions and rabbit holes)

Third-party creators move fast here. Treat these as starting points, not endorsements, and verify compliance postures and vendor details directly before wiring candidate data to any script you find.

YouTube

- AI in Recruiting: What Talent Teams Need to Know covers the practical landscape for TA teams adopting AI tools across the funnel.

- Introduction to Generative AI (Google Cloud Tech) explains the foundation models powering most AI hiring tools, useful for pressure-testing vendor claims.

- AI Bias and Fairness Explained (IBM Technology) covers the algorithmic fairness concepts that apply whenever an AI system scores or ranks candidates.

Reddit

- How are you actually using AI in your recruiting workflow right now? in r/recruiting is a candid survey of tools and use cases from practitioners in the chair.

- AI tools for recruiting: 6 months in, what worked and what did not in r/recruiting is honest about failure modes you do not see in vendor demos.

- Has AI made recruiting easier or just different? in r/Recruitment covers both the efficiency gains and the anxiety that AI adoption surfaces in teams.

Quora

- How can AI be used in the hiring process? collects varied practitioner perspectives across sourcing, screening, and scheduling use cases.

AI and hiring across the funnel

| Stage | What AI handles | What still needs a human |
|---|---|---|
| Sourcing | Outreach drafts, semantic search over ATS | Approves before send, evaluates culture fit |
| Screening | Summarises resumes, fills scorecard fields | Makes the advance or reject call |
| Scheduling | Suggests times, sends calendar invites | Handles edge cases and rescheduling |
| Reporting | Flags pipeline bottlenecks, tracks conversion | Validates with context, presents to leadership |

Related on this site

- Glossary: AI in recruiting, AI for hiring, AI hiring software, AI recruiting tools, Human-in-the-loop, Workflow automation, Semantic search, AI adoption ladder, AI bias audit, Adverse impact, Hallucination

- Blog: AI sourcing tools for recruiters

- Guides: Sourcers

- Workshops: AI in recruiting

- Courses: Starting with AI: the foundations in recruiting

- Membership: Become a member