AI-powered hiring

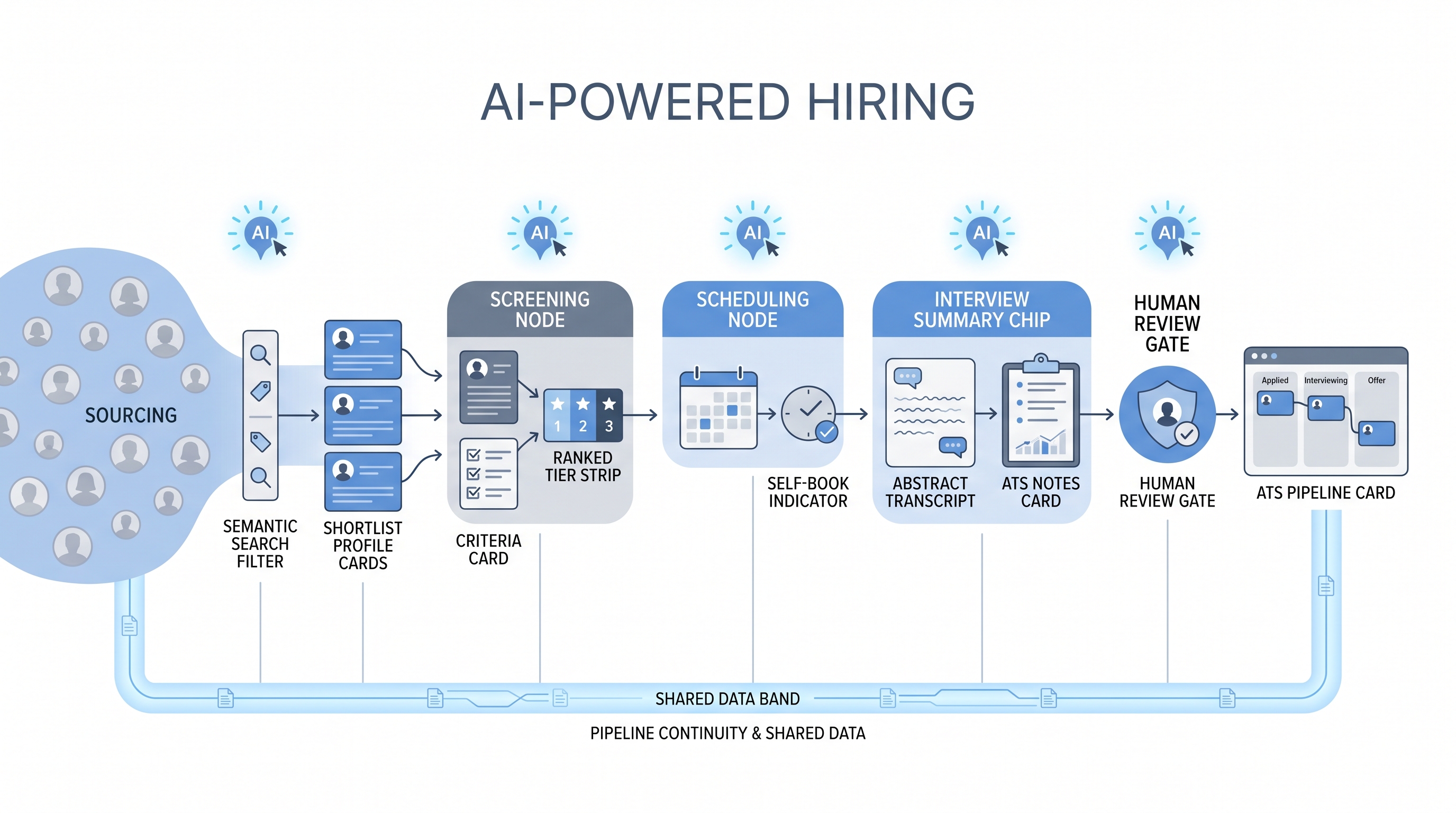

Using AI tools across the full hiring lifecycle, from sourcing and screening through scheduling and interview summaries, so recruiters make faster, better-documented decisions without replacing human judgment at consequential gates.

Michal Juhas · Last reviewed May 10, 2026

What is AI-powered hiring?

AI-powered hiring means running the full recruiting process with AI tools connected across stages: semantic search and enrichment for sourcing, scoring models for screening, smart scheduling, and AI-drafted summaries after interviews. The goal is fewer manual hand-offs between stages, not fewer humans making decisions.

The distinction matters. A recruiter who drafts outreach messages with an AI tool is using AI in hiring. A team where sourcing output feeds a screening prompt, screening results route to a scheduling tool, and interview notes become structured ATS entries through a transcript model is running AI-powered hiring. Integration is what makes it powerful, and what makes the compliance obligations more demanding.

In practice

- When a TA director says "we are going AI-powered," they usually mean sourcing, screening, and scheduling now have an AI layer, not that recruiters have been replaced. The decision gates, offer conversations, and rejection calls still belong to people.

- A sourcer at a scale-up might use semantic search to find candidates, AI scoring to prioritize applications, and an AI drafting tool for outreach, all in one req, without a single automation webhook. That is already AI-powered hiring at the task level.

- Compliance teams ask "which AI vendors see candidate data" before any pilot because the answer determines the DPA vendor list and the entry in the GDPR record of processing activities, not just the tool budget line.

Quick read, then how hiring teams use it

This is for recruiters, sourcers, TA, and HR partners who need the same vocabulary in debriefs, vendor calls, and policy reviews. Skim the first section when you need a fast shared picture. Use the second when you are deciding how it shows up in the ATS, sourcing tools, or candidate communications.

Plain-language summary

- What it means for you: AI tools handle the repetitive parts of sourcing, sorting, and scheduling so you spend more time on conversations that require judgment: calibrating with a hiring manager, reading a room in an interview, or building a relationship with a passive candidate.

- How you would use it: Pick the stage that costs the most recruiter time with the least judgment required. Start there, stabilize it, then connect it to the next stage.

- How to get started: Map your current process in five stages (source, screen, schedule, interview, decide). Identify which step has the clearest success criteria and the most repetition. Add one AI layer there before you connect to anything else.

- When it is a good time: When you have a named owner for each stage, a completed GDPR review, and at least one real req to test on before scaling to high volume.

When you are running live reqs and tools

- What it means for you: AI-powered hiring is a pipeline, not a product. Each stage produces output that feeds the next stage through an API, ATS field, or shared prompt. That pipeline needs error alerts, a retry strategy, and a human inbox for exceptions.

- When it is a good time: After each stage works independently and has a stable error rate, after vendor DPAs are signed, and after a hiring manager has seen and calibrated the output before it runs unsupervised.

- How to use it: Build and validate each stage separately. Connect sourcing to screening only after screening criteria are stable. Add scheduling integration only after screening pass rates are predictable. Log the model version and criteria card version with every run so there is an audit trail.

- How to get started: Run a parallel test on a live req: keep your current process running and run the AI-assisted version alongside it for two weeks. Compare the outputs. Adjust criteria and prompts before removing the manual step. Use structured output patterns when writing scores and summaries back to ATS fields.

- What to watch for: Silent integration failures, where one stage produces bad output and downstream stages amplify the error. Adverse impact patterns at the screening stage, which stay invisible until someone samples the declined profiles. Model version drift, where a vendor updates its API without warning and criteria that worked last month stop working this month.
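
To make the points above concrete, here is a minimal Python sketch of one screening stage that validates structured output, retries on failure, routes exceptions to a human inbox, and logs versions for the audit trail. Everything named here is an assumption for illustration: `MODEL_VERSION`, `CRITERIA_CARD_VERSION`, the 0-100 score range, the field names, and the in-memory lists standing in for a real audit store and exception queue. The two model functions are stand-ins, not a real vendor API.

```python
import json
import time
from datetime import datetime, timezone
from typing import Callable, Optional

# Hypothetical identifiers; use whatever your vendor and criteria cards expose.
MODEL_VERSION = "screening-model-2026-05"
CRITERIA_CARD_VERSION = "backend-engineer-v3"

# Assumed shape of the structured output written back to ATS fields.
REQUIRED_FIELDS = {"candidate_id": str, "score": int, "summary": str}


def validate_score(raw: str) -> dict:
    """Parse model output and reject anything that does not match the expected shape."""
    data = json.loads(raw)  # raises a ValueError subclass on malformed JSON
    if not isinstance(data, dict):
        raise ValueError("output is not a JSON object")
    for name, expected_type in REQUIRED_FIELDS.items():
        if type(data.get(name)) is not expected_type:
            raise ValueError(f"field {name!r} missing or not {expected_type.__name__}")
    if not 0 <= data["score"] <= 100:
        raise ValueError("score outside the assumed 0-100 range")
    return data


def run_stage(
    call_model: Callable[[str], str],
    candidate_id: str,
    audit_log: list,
    exception_inbox: list,
    retries: int = 3,
    backoff_seconds: float = 0.0,
) -> Optional[dict]:
    """Run one stage with retries; log versions on success, hand failures to a human."""
    last_error = ""
    for attempt in range(1, retries + 1):
        try:
            data = validate_score(call_model(candidate_id))
        except (ValueError, ConnectionError) as exc:
            last_error = str(exc)
            time.sleep(backoff_seconds * attempt)  # simple linear backoff
            continue
        audit_log.append({
            "candidate_id": candidate_id,
            "score": data["score"],
            "model_version": MODEL_VERSION,
            "criteria_card_version": CRITERIA_CARD_VERSION,
            "attempt": attempt,
            "logged_at": datetime.now(timezone.utc).isoformat(),
        })
        return data
    # Nothing is passed downstream; a person picks this up instead.
    exception_inbox.append({"candidate_id": candidate_id, "error": last_error})
    return None


def flaky_model() -> Callable[[str], str]:
    """Stand-in model: times out once, then returns valid structured output."""
    calls = {"n": 0}

    def call(candidate_id: str) -> str:
        calls["n"] += 1
        if calls["n"] == 1:
            raise ConnectionError("vendor API timed out")
        return json.dumps(
            {"candidate_id": candidate_id, "score": 72, "summary": "Meets 4 of 5 criteria."}
        )

    return call


def broken_model(candidate_id: str) -> str:
    """Stand-in model: returns a non-numeric score and no summary."""
    return json.dumps({"candidate_id": candidate_id, "score": "high"})
```

The design choice worth copying is that a failed validation never reaches the next stage. The candidate lands in the exception inbox with the error attached, which is one way to keep a silent integration failure from being amplified downstream. Because each audit entry carries both version fields, a sudden shift in scores can be checked against a vendor model update or a criteria card change.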

Where we talk about this

On AI with Michal live sessions, the AI in recruiting track covers AI-powered hiring end to end: sourcing flows, screening criteria cards, scheduling integration, interview summary patterns, and the GDPR questions that come up the moment candidate data touches a model. The sourcing automation track goes deeper on the ATS webhook and API layer. Start at Workshops and bring your current stack and a real job brief so feedback is grounded, not theoretical.

Around the web (opinions and rabbit holes)

Third-party creators move fast. Treat these as starting points, not endorsements, and double-check anything before you wire candidate data.

YouTube

- Search "AI-powered hiring" and "AI recruiting workflow" on YouTube filtered to the past year to find practitioners building end-to-end flows in Make, n8n, or direct API integrations. Prefer channels that show the error handling and the calibration step, not only the happy path demo.

- Recruiting Brainfood (Hung Lee) covers AI adoption across hiring stages through practitioner interviews and honest assessments of where the integration story falls apart versus where it holds up in production.

- HR Tech channels increasingly cover AI-powered ATS and sourcing tools with live demos. Watch for whether the demo shows the human review gate or skips straight from AI score to candidate communication.

Reddit

- r/recruiting has active threads on what AI-powered hiring looks like in practice: what tools connect well, what breaks after the first month, and what hiring managers think when they see AI-sorted shortlists.

- r/humanresources surfaces HRBP and HR leader perspectives on the compliance and governance obligations that arrive with any AI-powered process.

Quora

- Search "AI-powered hiring" or "AI hiring process" on Quora for practitioner answers about implementation. Read critically; vendor-authored answers tend to skip the bias, failure mode, and GDPR sections.

Manual process versus AI-powered hiring

| Stage | Manual | AI-powered |
|---|---|---|
| Sourcing | Boolean and directory search | Semantic search, enrichment, AI-ranked shortlists |
| Screening | Recruiter reads every application | AI scores and ranks, recruiter reviews top tier |
| Scheduling | Email back-and-forth | AI suggests slots, candidate self-books |
| Interview notes | Typed after each call | AI transcript summary, recruiter edits |
| Reporting | Manual spreadsheet tracking | ATS analytics, real-time funnel metrics |

Related on this site

- Glossary: AI in hiring, AI hiring, AI-powered recruiting, AI job screening, Human-in-the-loop (HITL), AI bias audit, Adverse impact, Workflow automation, Structured output

- Blog: AI sourcing tools for recruiters

- Guides: Sourcers

- Live cohort: Workshops

- Course: Starting with AI: the foundations in recruiting

- Membership: Become a member