Applicant tracking system (ATS)

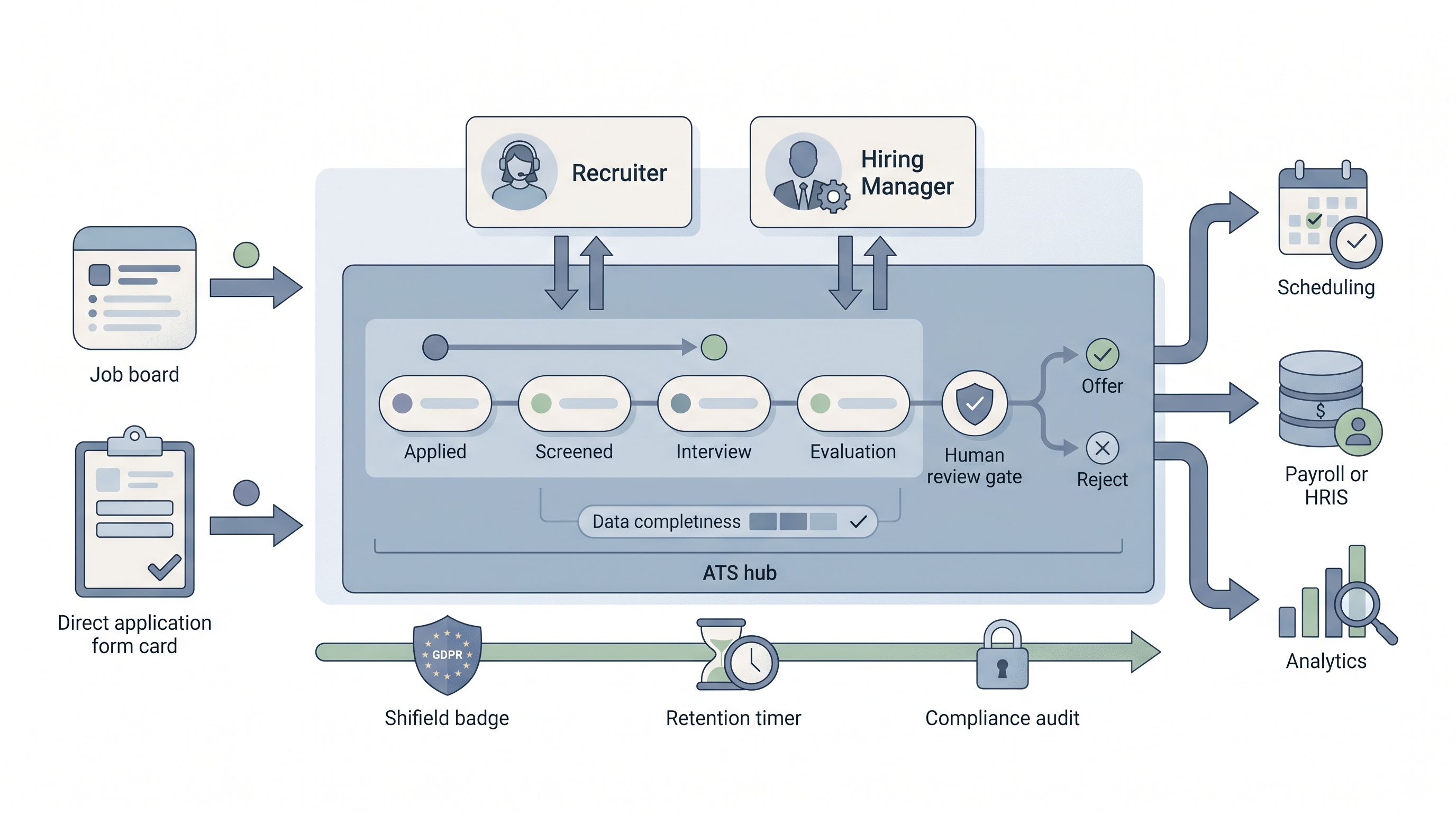

A system that records every candidate who applies, moves them through defined pipeline stages, and coordinates the recruiter, hiring manager, and interviewer activity that turns an open req into a hire.

Michal Juhas · Last reviewed May 15, 2026

What is an applicant tracking system?

An applicant tracking system is the database and workflow layer that sits at the center of every recruiting operation. It holds the candidate record, owns the pipeline stages from application to hire or reject, and routes work between recruiters, hiring managers, and interviewers. Every report, AI feature, and integration downstream draws from what the ATS contains.

The phrase "system of record" means the ATS is where the authoritative state lives: if the ATS says a candidate is at the offer stage, every connected tool and stakeholder should see that same state. When that single-source property breaks, decisions get made on stale data nobody trusts.

In practice

- When a recruiter says "move them to debrief," they are changing an ATS stage. That stage change is what triggers scheduling tools, updates pipeline reports, and can fire a webhook to a downstream system. The debrief on the calendar invite and the debrief stage in the ATS describe the same event.

- Hiring managers who complain that "the pipeline report never looks right" are usually describing an ATS data problem: recruiters advancing stages before feedback is in, or leaving candidates parked in an old stage because none of the available options fits.

- TA ops teams use ATS configuration audits to find where fields are blank, which stages get skipped, and which reqs have zero interviewer feedback attached. This is routine hygiene work, not a one-time setup task.
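One piece of that hygiene work, finding skipped stages, can be sketched in a few lines. This is a minimal illustration, not any vendor's API: the `PIPELINE` order, the `find_skipped_stages` helper, and the sample history are all hypothetical, and it assumes you can export each candidate's stage history as stage names with ISO timestamps.

```python
from datetime import datetime

# Hypothetical stage order; every ATS defines its own pipeline.
PIPELINE = ["applied", "screen", "interview", "debrief", "offer", "hired"]


def find_skipped_stages(history):
    """Return pipeline stages that were jumped over in one candidate's history.

    history: list of (stage, iso_timestamp) tuples, in any order.
    """
    # Sort by timestamp so moves are compared in the order they happened.
    ordered = sorted(history, key=lambda entry: datetime.fromisoformat(entry[1]))
    skipped = []
    for (prev, _), (curr, _) in zip(ordered, ordered[1:]):
        if prev not in PIPELINE or curr not in PIPELINE:
            continue  # e.g. "rejected" can follow any stage and is not a skip
        i, j = PIPELINE.index(prev), PIPELINE.index(curr)
        if j > i + 1:
            # Everything strictly between the two stages has no timestamp.
            skipped.extend(PIPELINE[i + 1:j])
    return skipped


# Made-up history: the candidate jumps from screen straight to debrief.
history = [
    ("applied", "2026-03-02T09:00:00"),
    ("screen", "2026-03-04T14:30:00"),
    ("debrief", "2026-03-11T16:00:00"),
]
print(find_skipped_stages(history))  # ['interview']
```

Running the same check across every candidate on a req shows whether skips are one-off mistakes or a sign that a stage does not match how the team actually works.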

Quick read, then how hiring teams use it

This is for recruiters, sourcers, TA, and HR partners who need the same vocabulary in debriefs, vendor calls, and policy reviews. Skim the first section when you need a fast shared picture. Use the second when you are deciding how the ATS connects to your stack, your data, or your compliance obligations.

Plain-language summary

- What it means for you: An ATS is the shared scoreboard for hiring. Every candidate, every stage move, and every piece of feedback lives there so the team sees the same picture without chasing emails.

- How you would use it: You open a req, move candidates through stages as decisions are made, and collect structured feedback from each interviewer in the fields the platform provides. The ATS records the timestamps and outcomes.

- How to get started: Audit one closed req from last quarter. Check whether the key fields are filled, the stage history makes sense, and the interviewer feedback matches the outcome. That one req tells you more about your ATS health than any vendor demo.

- When it is a good time: An ATS investment pays off when the same workflow runs across every req, not when each recruiter manages their pipeline differently and the ATS is just a backup for spreadsheets.

When you are running live reqs and tools

- What it means for you: The ATS is the event source for every integration. Job boards, HRIS, scheduling tools, and AI sourcing platforms all subscribe to or poll ATS state. If the ATS stage logic is inconsistent, every connected tool gets inconsistent signals.

- When it is a good time: Add integrations after stage logic is stable and field fill rates are above 80 percent on key fields. A recruiting webhooks setup that fires on every stage move is only useful if stage moves mean something consistent.

- How to use it: Define stages as decisions, not tasks. Set required fields per stage so feedback is collected before the next gate opens. Use the ATS event log to catch integration failures early, not after the HRIS receives wrong data. Layer in workflow automation once the pipeline is stable.

- How to get started: Pull a field-fill audit for the last 30 closed reqs. Any field below 70 percent fill rate is a process gap, not a software gap. Fix the process convention first, then consider whether an automation or required-field setting enforces it.

- What to watch for: Silent stage skips where candidates jump two stages without a timestamp in between, high-volume reqs where feedback fields stay blank because interviewers never log in, and AI shortlists that surface candidates already declined in a prior req because deduplication was never configured.
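The field-fill audit described above comes down to simple arithmetic over an export of closed reqs. The sketch below is one way to do it, under stated assumptions: the field names, the sample records, and the helper functions `is_filled`, `fill_rates`, and `process_gaps` are hypothetical, and a real export will need its own mapping. The 0.70 default mirrors the 70 percent threshold mentioned in the list.

```python
def is_filled(value):
    """Treat None, empty strings, and whitespace-only strings as blank."""
    return value is not None and str(value).strip() != ""


def fill_rates(reqs, fields):
    """Share of reqs (0.0 to 1.0) in which each field has a non-blank value."""
    if not reqs:
        return {field: 0.0 for field in fields}
    return {
        field: sum(is_filled(req.get(field)) for req in reqs) / len(reqs)
        for field in fields
    }


def process_gaps(rates, threshold=0.70):
    """Fields whose fill rate falls below the threshold, sorted by name."""
    return sorted(field for field, rate in rates.items() if rate < threshold)


# Made-up export of four closed reqs; field names are illustrative only.
closed_reqs = [
    {"rejection_reason": "skills gap", "source": "referral", "interviewer_feedback": "strong hire"},
    {"rejection_reason": "", "source": "job board", "interviewer_feedback": None},
    {"rejection_reason": "withdrew", "source": "job board", "interviewer_feedback": "  "},
    {"rejection_reason": None, "source": "sourced", "interviewer_feedback": "no hire"},
]

rates = fill_rates(closed_reqs, ["rejection_reason", "source", "interviewer_feedback"])
print(rates)  # {'rejection_reason': 0.5, 'source': 1.0, 'interviewer_feedback': 0.5}
print(process_gaps(rates))  # ['interviewer_feedback', 'rejection_reason']
```

Calling `process_gaps(rates, threshold=0.80)` applies the stricter bar suggested for key fields before adding integrations.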

Where we talk about this

On AI with Michal live sessions, ATS configuration is a recurring theme in both the AI in recruiting and sourcing automation tracks. Participants bring their actual ATS names, show the stage logic they currently run, and work through where the integration or AI feature they want to add is blocked by an underlying data quality problem. If you want that live conversation rather than only this page, start at Workshops and bring your pipeline report and one field audit.

Around the web (opinions and rabbit holes)

Third-party creators move fast. Treat these as starting points, not endorsements, and double-check anything before you wire candidate data.

YouTube

- What is an Applicant Tracking System (ATS)? (Workable) is a vendor-produced but clear walkthrough of ATS core concepts for recruiters new to the category.

- How to Use an ATS to Hire Better covers practical configuration decisions teams face when setting up pipeline stages and required fields.

- The Dark Side of ATS: Why Your Hiring Process Might Be Broken is a practitioner-perspective critique worth watching before you over-rely on ATS-scored shortlists.

Reddit

- What ATS are you using and what do you love/hate about it? in r/recruiting is a long practitioner thread with unfiltered opinions on real platforms.

- ATS are ruining recruiting is a contrarian thread worth reading for candidate and recruiter frustrations before you add another required field.

- How do you audit ATS data quality? in r/humanresources has practical suggestions from TA ops and HRIS practitioners.

Quora

- What are the benefits and drawbacks of using an ATS in the recruitment process? collects practitioner and candidate perspectives across company sizes.

ATS vs. recruiting CRM

| Dimension | ATS | Recruiting CRM |
|---|---|---|
| Who it tracks | Active applicants on open reqs | Passive and future candidates not yet in process |
| Primary workflow | Stage progression to hire or reject | Relationship nurture, interest signals, talent pooling |
| Data structure | Req-linked records with outcome fields | Candidate-linked records with engagement history |
| AI feature surface | Parsing, shortlist scoring, JD drafting | Outreach personalization, pool segmentation |
| Compliance trigger | GDPR right-to-explanation on rejections | GDPR lawful basis for unsolicited contact |

Many enterprise platforms bundle both modules. Smaller teams often run passive candidate relationships through ATS stages when a dedicated CRM is not in budget. The practical boundary is whether the record is tied to an open req or to a longer-term talent relationship.

Related on this site

- Glossary: Applicant tracking software, Best applicant tracking system, ATS API integration, ATS hiring software, Resume parsing, Recruiting webhooks, Workflow automation, Human-in-the-loop (HITL), Talent acquisition metrics

- Blog: AI sourcing tools for recruiters

- Guides: Sourcers

- Live cohort: Workshops

- Self-paced: Starting with AI: the foundations in recruiting

- Membership: Become a member