Assessment tools for recruitment and selection

Software and structured methods used in hiring to evaluate candidate skills, cognitive ability, personality, and job fit before a hire decision is made. Types include cognitive tests, work sample exercises, situational judgment tests, skills assignments, and personality inventories.

Michal Juhas · Last reviewed May 10, 2026

What are assessment tools for recruitment and selection?

Assessment tools are structured methods used in hiring to evaluate candidates before a hire decision is made. They include cognitive ability tests, work sample exercises, situational judgment tests (SJTs), personality inventories, and skills assignments. When chosen for job relevance and validated against performance data, they replace gut-feel impressions with repeatable evidence. When chosen for speed or brand recognition alone, they introduce a compliance liability without reliably predicting job performance.

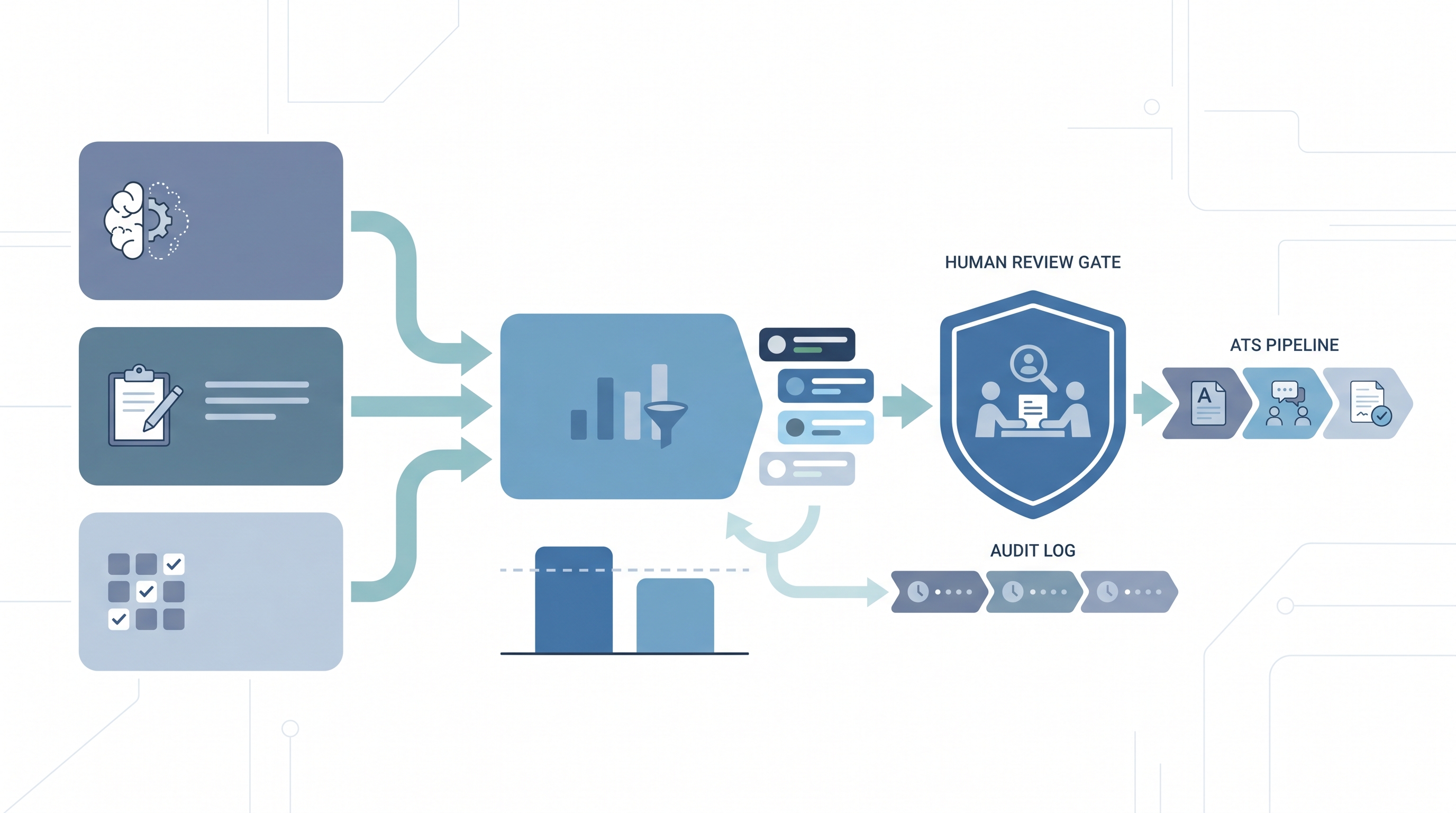

The term covers both traditional instruments and modern software platforms that deliver tests online, score results automatically, and integrate with an ATS. AI has added a third category: platforms that score video responses by analyzing tone, language, and nonverbal cues. That scoring method carries the highest compliance risk because it directly influences who advances or is rejected, and group-level bias is not always visible without a formal AI bias audit.

In practice

- A recruiter hiring for a high-volume contact centre role sends a 20-minute situational judgment test to 200 applicants after the initial screen. The tool narrows the pool to 40 before phone screens, so the recruiter schedules 40 calls on that req instead of 200. The team runs quarterly adverse impact checks to verify that pass rates stay consistent across gender and age groups.

- A TA leader reviewing a vendor demo hears "our AI scores video interviews with over 90% accuracy." The right follow-up is: accurate at predicting what, for which roles, and what do group pass rates look like across race and gender? Accuracy on a general benchmark does not equal fairness on your specific candidate population.

- An HRBP is asked by a rejected candidate why they did not advance after completing an online assessment. The answer requires the documented scoring criteria, the name of the human reviewer who confirmed the decision, and evidence that the assessment was validated for this role. "The system scored you lower" is not a compliant response under GDPR Article 22 if the score was the sole basis for rejection.
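The adverse impact check in the first example can be sketched in a few lines. This is a minimal illustration, not the method any particular team or vendor uses: it assumes the four-fifths (80%) rule of thumb as the flagging threshold, and the function names, group labels, and sample numbers are all made up for the example.

```python
from collections import Counter

# Assumption: the four-fifths (80%) rule of thumb as the flagging threshold.
FOUR_FIFTHS = 0.8

def pass_rates(results):
    """results: iterable of (group, passed) pairs -> {group: pass rate}."""
    totals, passes = Counter(), Counter()
    for group, passed in results:
        totals[group] += 1
        if passed:
            passes[group] += 1
    return {g: passes[g] / totals[g] for g in totals}

def impact_ratios(rates):
    """Each group's pass rate divided by the highest group's pass rate."""
    top = max(rates.values())
    if top == 0:
        # Nobody passed in any group, so there is no disparity to measure.
        return {g: 1.0 for g in rates}
    return {g: r / top for g, r in rates.items()}

def flagged_groups(results, threshold=FOUR_FIFTHS):
    """Groups whose impact ratio falls below the threshold."""
    ratios = impact_ratios(pass_rates(results))
    return sorted(g for g, ratio in ratios.items() if ratio < threshold)

# Made-up data: 200 applicants, 40 advance (hypothetical group split).
sample = (
    [("group_a", True)] * 25 + [("group_a", False)] * 75
    + [("group_b", True)] * 15 + [("group_b", False)] * 85
)

print(pass_rates(sample))
print(flagged_groups(sample))
```

A flag here is a prompt for a closer look rather than a verdict. Small groups produce noisy ratios, so a real review would likely add a statistical significance test and legal input.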

Quick read, then how hiring teams use it

This is for recruiters, sourcers, TA leads, and HRBPs who need a shared vocabulary before choosing a tool, running a pilot, or writing an assessment policy. Skim the first section for a fast shared picture. Use the second when you are deciding where an assessment fits in your pipeline and what review gates to set up.

Plain-language summary

- What it means for you: Assessment tools give every candidate the same task under the same conditions, so hiring decisions rest on evidence rather than impressions from an interview that may have gone better for a confident speaker than a careful thinker.

- How you would use it: Pick one stage in your funnel where gut feel currently drives a high-volume decision, such as who gets a phone screen after applying. Replace or supplement that call with a validated assessment, review the results with a human gate, and compare quality-of-hire data after six months.

- How to get started: Ask your vendor for validity evidence specific to your role type, and run a group pass-rate check before going live. Pilot on a closed, already-hired role first so you can compare the assessment output against the hire decision retrospectively.

- When it is a good time: After your scorecard criteria are agreed on, after a data processing agreement (DPA) is signed with the vendor, and after at least one recruiter and one hiring manager have reviewed sample results together on a real role.
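The retrospective pilot on a closed role can be tallied with a small cross-tabulation. The sketch below assumes each pilot record carries two booleans, `assessment_pass` and `hired`. Both field names and the sample records are hypothetical.

```python
def pilot_agreement(records):
    """Cross-tabulate assessment outcome against the historical hire decision.

    records: list of dicts with boolean 'assessment_pass' and 'hired' keys
    (hypothetical field names chosen for this example).
    """
    counts = {
        "pass_hired": 0,
        "pass_not_hired": 0,
        "fail_hired": 0,       # people you hired whom the tool would have screened out
        "fail_not_hired": 0,
    }
    for r in records:
        key = ("pass" if r["assessment_pass"] else "fail") + (
            "_hired" if r["hired"] else "_not_hired"
        )
        counts[key] += 1
    agree = counts["pass_hired"] + counts["fail_not_hired"]
    counts["agreement_rate"] = agree / len(records) if records else 0.0
    return counts

# Made-up pilot data for illustration only.
pilot = [
    {"assessment_pass": True, "hired": True},
    {"assessment_pass": True, "hired": False},
    {"assessment_pass": False, "hired": True},
    {"assessment_pass": False, "hired": False},
    {"assessment_pass": False, "hired": False},
]

print(pilot_agreement(pilot))
```

Agreement with a past decision is not validity evidence on its own, since the original decision may have been the gut-feel call you are trying to replace. The `fail_hired` cell is the one worth discussing with the hiring manager, alongside how those hires actually performed.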

When you are running live reqs and tools

- What it means for you: Assessment outputs that influence who advances or is rejected require audit trails: the scoring criteria, the model version if AI-scored, and the name of the human reviewer who confirmed the decision before the ATS stage was updated.

- When it is a good time: After an AI bias audit has been run on any AI-scored tool, after legal has reviewed the vendor's conformity documentation if you operate in the EU, and after you have a re-evaluation process for candidates who flag a scoring error.

- How to use it: Treat assessment scores as one input, not a filter that eliminates without review. Use structured output to write scores back to ATS fields so the data is parseable for quarterly adverse impact analysis. Keep the raw assessment report alongside the ATS stage note, not only the summary score.

- How to get started: Map your selection process and identify the one step with the highest volume and least structured evaluation criteria. Add the assessment there first. Connect it to a named reviewer who reads the result before a stage advance or reject fires.

- What to watch for: AI-scored video interviews that return a numeric fit score with no explanation of what was measured; completion drop-off rates above 20%, which often indicate a candidate experience problem or an accessibility gap; and adverse impact signals that show up in aggregate data but are invisible in individual file reviews.
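The audit trail and named-reviewer gate described in this list could look something like the following. It is a sketch under stated assumptions: the record fields, the function names, and the JSON payload shape are all hypothetical and do not correspond to any specific ATS or vendor API.

```python
import json
from dataclasses import asdict, dataclass, replace
from typing import Optional

@dataclass(frozen=True)
class AssessmentRecord:
    """One assessment result plus the audit fields named in the list above."""
    candidate_id: str
    requisition_id: str
    tool_name: str
    score: float
    scoring_criteria: str                 # what the score measured
    raw_report_ref: str                   # pointer to the full report, not just the summary
    model_version: Optional[str] = None   # set only for AI-scored tools
    reviewer_name: Optional[str] = None   # human who confirmed the decision

def can_update_stage(record):
    """Allow an advance or reject only after a named human has reviewed."""
    return bool(record.reviewer_name and record.reviewer_name.strip())

def to_ats_payload(record):
    """Serialize to JSON for write-back; refuse if no reviewer is recorded."""
    if not can_update_stage(record):
        raise ValueError("No named reviewer: stage change blocked")
    return json.dumps(asdict(record), sort_keys=True)

# Hypothetical values throughout.
draft = AssessmentRecord(
    candidate_id="c-001",
    requisition_id="req-042",
    tool_name="example-sjt",
    score=71.0,
    scoring_criteria="SJT rubric v2",
    raw_report_ref="reports/c-001.pdf",
)
reviewed = replace(draft, reviewer_name="J. Doe")

print(can_update_stage(draft))
print(to_ats_payload(reviewed))
```

Keeping the record immutable and producing a new copy at review time means the unreviewed and reviewed states can both be retained, which helps when a candidate later flags a scoring error.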

Where we talk about this

On AI with Michal live sessions, the AI in recruiting track covers how assessment tools fit into a structured selection process and what happens when AI scores a video or a written response without a human check. The sourcing automation track connects assessment placement to workflow automation patterns for high-volume roles. Start at Workshops and bring your current assessment stack, the roles where you use it, and your biggest compliance question so the conversation is grounded in your actual situation.

Around the web (opinions and rabbit holes)

Third-party creators move fast. Treat these as starting points, not endorsements, and double-check anything before you wire candidate data to a new tool.

YouTube

- Search "pre-employment assessment tools" on YouTube filtered to the past year for practitioner comparisons and vendor demos. Prefer videos that show what happens when results are challenged or when adverse impact surfaces, not only the happy-path onboarding walkthrough.

- SHRM (Society for Human Resource Management) publishes compliance-focused sessions covering assessment validity, adverse impact methodology, and what the EU AI Act means for TA teams using European candidate data.

- Search "cognitive ability test hiring bias" for research-backed perspectives on why general mental ability (GMA) tests remain strong predictors but require adverse impact monitoring before deployment at scale.

Reddit

- r/recruiting has practitioner threads on which assessment tools actually get used day to day, how hiring managers react to structured test results, and when a vendor's "bias audited" claim turned out to mean less than expected.

- r/humanresources surfaces HRBP and HR leader perspectives on compliance obligations when an assessment result is challenged, and how teams handle candidate requests for score explanations under GDPR.

Quora

- Search "recruitment assessment tools comparison" or "pre-employment testing compliance" on Quora for practitioner and legal perspectives. Vendor-authored answers often skip the adverse impact sections, so read critically.

Assessment categories compared

| Tool type | Predicts | Best stage | Key risk |
|---|---|---|---|
| Cognitive ability test | General reasoning, learning speed | Early screen | Adverse impact on race, age |
| Work sample | Job-specific task performance | Before interview | Accessibility, device requirements |
| Situational judgment | Judgment in complex situations | Before HM interview | Construct validity per role |
| Personality inventory | Behavioural tendencies | Debrief input | Adverse impact, fake-good responses |
| AI video scoring | Vendor-defined fit signals | Mid-funnel | EU AI Act high-risk, group bias |
