Candidate evaluation software

Platforms and tools that help hiring teams assess, score, and compare candidates through structured evaluations, so that selection decisions rest on documented evidence rather than memory or gut feel.

Michal Juhas · Last reviewed May 10, 2026

What is candidate evaluation software?

Candidate evaluation software helps hiring teams collect, score, and compare structured evidence about applicants so that selection decisions rest on documented criteria rather than memory and informal impressions.

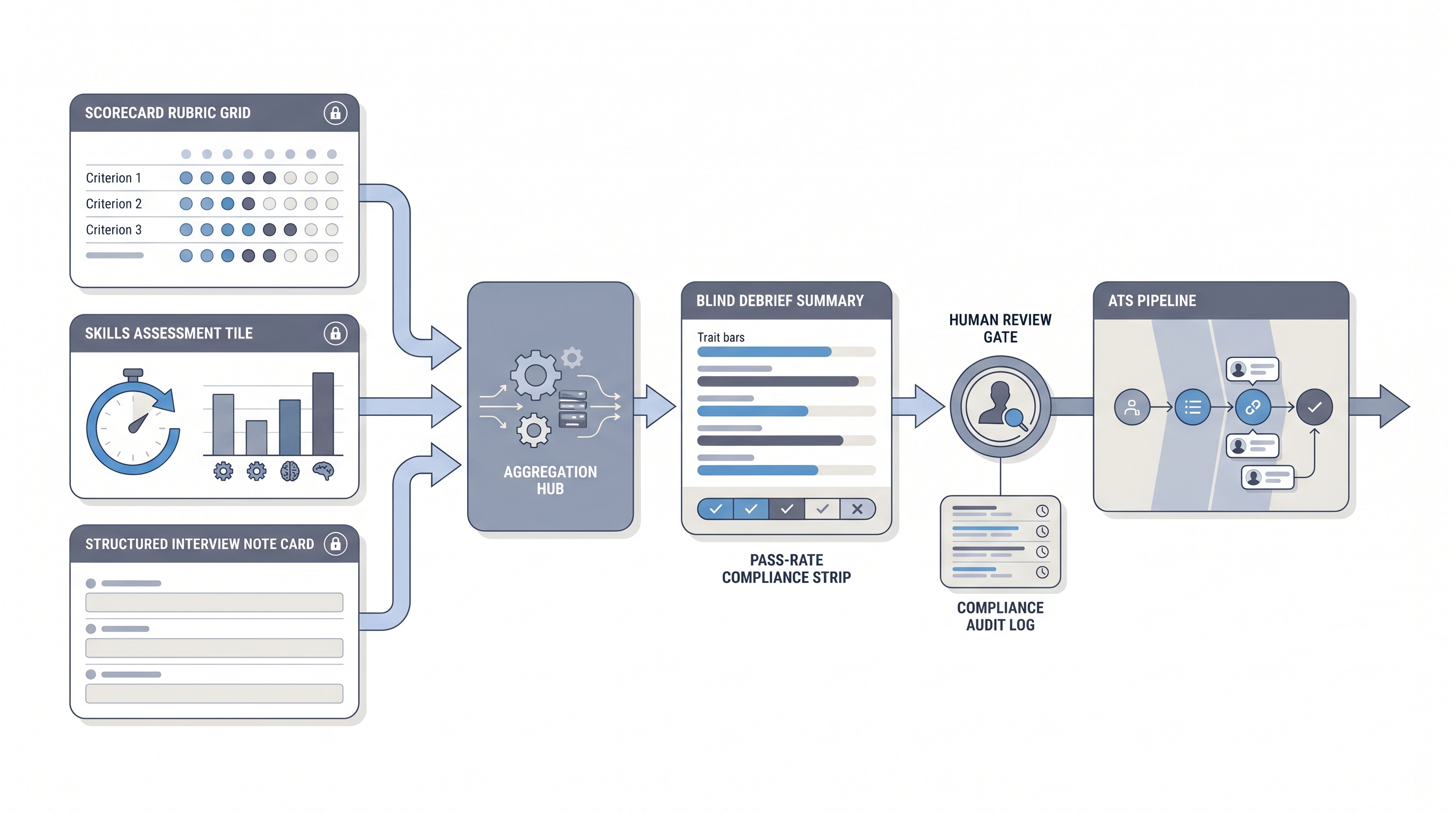

The category covers scorecard tools, skills and cognitive assessments, interview scoring modules, and AI-assisted note summarisation. What ties them together is consistency: every candidate in the same requisition is evaluated against the same rubric in a documented, auditable format.

In practice

- A TA team building an evaluation workflow for a volume hiring campaign sets up a scored work-sample exercise and a structured interviewer scorecard inside a single platform, so every hiring manager submits blind ratings before the debrief call.

- A recruiter at a 50-person startup uses a standalone evaluation tool to add rubric-based scoring to video interviews, because the ATS can move candidates through stages but cannot store criterion-by-criterion scores.

- A TA ops lead reviewing candidate ghosting rates discovers that the spike in application drop-off lines up with a long, unvalidated assessment placed too early in the funnel, and moves it to after the phone screen to recover conversion.

Quick read, then how hiring teams use it

This is for recruiters, sourcers, TA, and HR partners who need the same vocabulary in debriefs, vendor calls, and compliance reviews. Skim the first section when you need a fast shared picture. Use the second when you are deciding how evaluation software shows up in your ATS, interviewer workflow, or candidate communications.

Plain-language summary

- What it means for you: Software that turns interviewer opinions into structured, documented scores so that two interviewers in the same debrief are comparing the same evidence rather than two different gut feels.

- How you would use it: Set up a rubric before the first interview is scheduled. Map each question to a competency on the scorecard. Close submissions before any interviewer sees another person's notes.

- How to get started: Audit one recent hire. Count how many numeric scores or rubric ratings you have versus freeform written notes. If most of the evidence is unstructured prose, that is the gap candidate evaluation software is built to close.

- When it is a good time: When you have more than two interviewers per req, when GDPR or audit requirements demand documented evidence, or when debrief discussions routinely feel like whoever speaks first sets the outcome.
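The rubric-and-blind-submission steps above can be sketched in a few lines. This is a minimal illustration, not any vendor's data model: the `RUBRIC` questions, the competency names, the 1 to 5 scale, and the `BlindScorecard` class are all hypothetical.

```python
from dataclasses import dataclass, field

# Hypothetical rubric: each interview question maps to one competency.
RUBRIC = {
    "Describe a time you resolved a conflict": "collaboration",
    "Walk through how you would debug this outage": "problem_solving",
}


@dataclass
class BlindScorecard:
    interviewers: set
    _submissions: dict = field(default_factory=dict)

    def submit(self, interviewer, ratings):
        """Record one interviewer's 1-5 rating for every rubric competency."""
        if interviewer not in self.interviewers:
            raise ValueError(f"{interviewer} is not on this panel")
        if interviewer in self._submissions:
            raise ValueError(f"{interviewer} has already submitted")
        expected = set(RUBRIC.values())
        if set(ratings) != expected:
            raise ValueError(f"ratings must cover exactly {sorted(expected)}")
        if any(not 1 <= r <= 5 for r in ratings.values()):
            raise ValueError("ratings must be between 1 and 5")
        self._submissions[interviewer] = dict(ratings)

    def reveal(self):
        """Return all ratings, but only once every interviewer has submitted."""
        missing = self.interviewers - set(self._submissions)
        if missing:
            raise PermissionError(f"waiting on: {sorted(missing)}")
        return {name: dict(r) for name, r in self._submissions.items()}
```

Calling `reveal()` before the whole panel has submitted raises an error, which is the code equivalent of closing submissions before anyone sees another person's notes.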

When you are running live reqs and tools

- What it means for you: Every assessment instrument or scorecard module that touches a hire decision needs to be mapped to the ATS stage and tested for group pass-rate differences before it goes live, not after the first cohort is already scored.

- When it is a good time: After the scorecard is stable and agreed by the hiring manager, when pass rates are documented, and when a named compliance owner has reviewed the vendor data processing agreement.

- How to use it: Connect the evaluation tool to your ATS via API or webhook so scores flow into the candidate record automatically. Log which model version ran each AI-assisted scoring step. Build a human-in-the-loop review into any flow where an AI score influences a stage advance or reject.

- How to get started: Run one structured debrief pilot using blind rubric submission before the group call. Compare score variance before and after the call. If post-call scores collapse toward a single interviewer's rating, the debrief process is the problem, not the tool.

- What to watch for: Scorecard inflation, panelist anchoring, model drift between vendor updates, and GDPR Article 22 exposure for AI-scored selections without a named human reviewer. Read adverse impact documentation requirements before setting a cut score.
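One way to test for group pass-rate differences before an instrument goes live is to compare each group's pass rate with the highest group's rate. The sketch below assumes a 0.8 threshold, borrowed from the commonly cited four-fifths screening heuristic; this page does not prescribe a threshold, the function names and sample data are hypothetical, and a ratio check on small samples is a prompt for review rather than a legal or statistical conclusion.

```python
def pass_rates(outcomes):
    """outcomes: iterable of (group, passed) pairs -> {group: pass rate}."""
    totals, passes = {}, {}
    for group, passed in outcomes:
        totals[group] = totals.get(group, 0) + 1
        passes[group] = passes.get(group, 0) + (1 if passed else 0)
    return {g: passes[g] / totals[g] for g in totals}


def impact_ratios(rates):
    """Each group's pass rate divided by the highest group's pass rate."""
    top = max(rates.values())
    if top == 0:
        raise ValueError("no group has any passes, so the ratio is undefined")
    return {g: r / top for g, r in rates.items()}


def flagged_groups(rates, threshold=0.8):
    """Groups whose ratio falls below the assumed threshold (default 0.8)."""
    ratios = impact_ratios(rates)
    return sorted(g for g, ratio in ratios.items() if ratio < threshold)


# Hypothetical pilot cohort: 6 of 10 pass in group A, 4 of 10 in group B.
sample = [("A", True)] * 6 + [("A", False)] * 4 + [("B", True)] * 4 + [("B", False)] * 6
rates = pass_rates(sample)
print(flagged_groups(rates))  # prints ['B']
```

Running this on pilot data before a cut score is set keeps the check ahead of the first scored cohort, as the first bullet above recommends.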

Where we talk about this

On AI with Michal live sessions we step through candidate evaluation design in the AI in recruiting track: building rubrics that survive a compliance review, briefing hiring managers on structured debriefs, and wiring assessment invites to ATS stage changes. If you want the full room conversation, not only this page, start at Workshops and bring your real stack and rubric questions.

Around the web (opinions and rabbit holes)

Third-party creators move fast. Treat these as starting points, not endorsements, and double-check anything before you wire candidate data.

YouTube

- How to Build a Structured Interview Process covers the rubric foundation that evaluation software depends on.

- Pre-Employment Testing: What HR Needs to Know walks compliance basics before any scored instrument goes live.

- AI in Hiring: What Works and What Backfires explores where AI-scored evaluations create adverse impact risk.

Reddit

- What assessment software does your team use? in r/recruiting collects candid vendor reviews from practitioners.

- Structured interviews vs vibes-based hiring in r/humanresources shows how teams actually run debriefs.

- GDPR and candidate assessments in r/gdpr surfaces real compliance questions around AI-scored hiring tools.

Quora

- What is the best candidate evaluation software? collects practitioner answers on tool selection criteria (quality varies, so read critically).

Scorecard-led versus tool-led evaluation

| Approach | Strengths | Watch for |
|---|---|---|
| Scorecard first | Criteria defined before tool selection | Rubric can be ignored if tool does not enforce it |
| Tool first | Quick to launch | Criteria drift to match what the tool measures |
| AI-assisted scoring | Consistent at volume | Model drift, Article 22 GDPR, and pass-rate bias |
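For the AI-assisted scoring row, the two safeguards mentioned on this page, logging the model version and requiring a named human reviewer, can be combined in one gate. The sketch below is illustrative only: `AIScore`, `StageDecision`, and `decide_stage` are hypothetical names, not part of any ATS or vendor API, and the code is not a compliance guarantee.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class AIScore:
    candidate_id: str
    score: float
    model_version: str  # kept so scores can be traced across vendor updates


@dataclass(frozen=True)
class StageDecision:
    candidate_id: str
    action: str  # "advance" or "reject", chosen by the human reviewer
    ai_score: float
    model_version: str
    reviewer: str
    decided_at: str


def decide_stage(ai: AIScore, reviewer: Optional[str], action: str) -> StageDecision:
    """Record a stage change only when a named human has chosen the action."""
    if action not in {"advance", "reject"}:
        raise ValueError("action must be 'advance' or 'reject'")
    if not reviewer or not reviewer.strip():
        raise PermissionError("a named human reviewer must confirm the decision")
    return StageDecision(
        candidate_id=ai.candidate_id,
        action=action,
        ai_score=ai.score,
        model_version=ai.model_version,
        reviewer=reviewer.strip(),
        decided_at=datetime.now(timezone.utc).isoformat(),
    )
```

In this design the AI score is stored as evidence next to the decision, but the action itself always comes from the reviewer, so an unreviewed score can never move a candidate.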

Related on this site

- Glossary: Scorecard, Candidate assessment test, Adverse impact, Human-in-the-loop (HITL), AI bias audit, Pre-employment assessment test, Async screening, Applicant tracking software

- Glossary: Hiring assessment tools, Employment assessment tools, AI in hiring

- Live cohort: Workshops

- Membership: Become a member