Virtual interview platforms

Virtual interview platforms are tools and systems that host any non-in-person candidate conversation, from phone screens and live video calls to structured async recordings, giving hiring teams a way to evaluate candidates without requiring travel or a shared physical space.

Michal Juhas · Last reviewed May 9, 2026

What are virtual interview platforms?

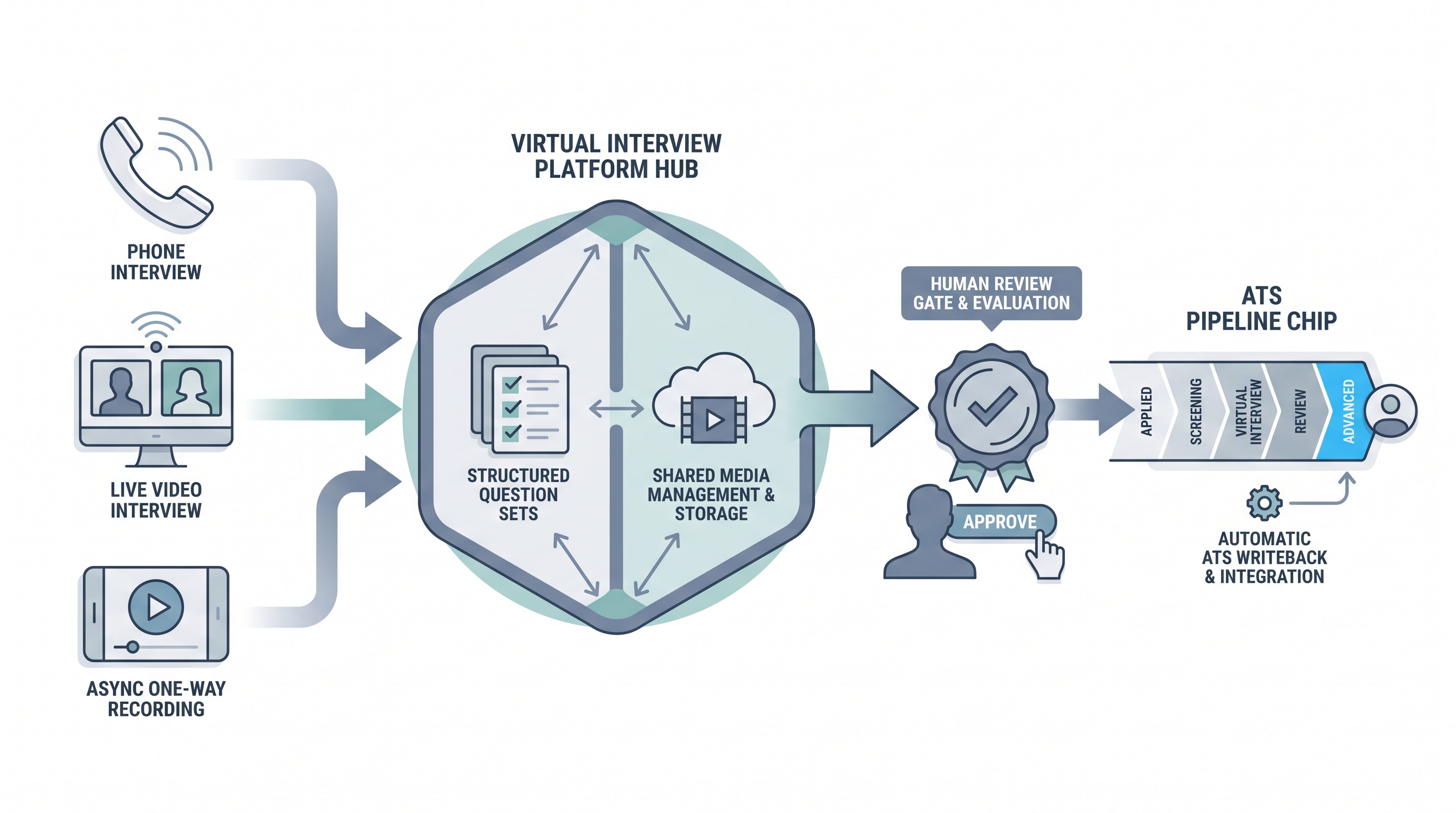

Virtual interview platforms are tools that host any non-in-person candidate conversation: phone screens logged in an ATS, live video calls on Zoom, Microsoft Teams, or Google Meet, one-way async platforms where candidates record answers to preset questions, and multi-panel tools that coordinate several reviewers across geographies.

The defining feature is that geography stops being a barrier to the interview step. What separates a purpose-built platform from a general video call tool is the hiring-specific layer: consent management, structured question sets with time limits, a rubric and scoring system, clip or call storage with access controls, and an ATS push when a stage moves. General tools leave all of that as manual work for a coordinator.

In practice

- A TA team handling 80 applications per week for a customer support role replaces its phone screen queue with a three-question async platform. Two reviewers watch clips in batches twice a week. Scheduling time for the first screen drops to near zero, but the team discovers that 55 percent of invited candidates never submit, which prompts them to add a plain-text explainer and a named sender to the invite email.

- A technical sourcer at a scale-up uses live video for senior engineering roles and async for junior roles on the same req pipeline, splitting the two modalities at the funnel stage where real-time follow-up actually changes the outcome.

- A hiring manager asks to see the AI sentiment scores after reviewing a batch of clips. The conversation reveals the model scored a non-native English speaker as low confidence on clips where the hiring manager rated them as thoughtful and precise. The team disables automated scoring before the next batch.
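The third scenario is a single anecdote. An adverse impact check looks for the same pattern across groups. Below is a minimal Python sketch of the four-fifths rule of thumb, where a group whose selection rate falls below 80 percent of the highest group's rate is flagged for closer review. The function names, the `(group, advanced)` record shape, and the sample group labels are assumptions for illustration and do not come from any platform's API. A flag here is a prompt for a proper audit with legal, not a conclusion.

```python
from collections import Counter


def selection_rates(records):
    """records: iterable of (group, advanced) pairs. Returns {group: rate}."""
    totals, advanced_counts = Counter(), Counter()
    for group, advanced in records:
        totals[group] += 1
        if advanced:
            advanced_counts[group] += 1
    return {g: advanced_counts[g] / totals[g] for g in totals}


def impact_ratios(rates):
    """Each group's selection rate divided by the highest group's rate."""
    top = max(rates.values())
    if top == 0:
        # Nobody advanced in any group, so no disparity can be measured.
        return {g: 1.0 for g in rates}
    return {g: rate / top for g, rate in rates.items()}


def flagged_groups(records, threshold=0.8):
    """Groups whose impact ratio falls below the threshold (four-fifths by default)."""
    ratios = impact_ratios(selection_rates(records))
    return sorted(g for g, ratio in ratios.items() if ratio < threshold)


# Hypothetical batch: 6 of 10 advance in group_a, 3 of 10 in group_b.
sample = (
    [("group_a", True)] * 6 + [("group_a", False)] * 4
    + [("group_b", True)] * 3 + [("group_b", False)] * 7
)
print(flagged_groups(sample))
```

With small batches the ratio swings widely on a handful of candidates, so treat it as a screening signal and rerun it as volume accumulates.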

Quick read, then how hiring teams use it

This is for recruiters, sourcers, TA, and HR partners who need the same vocabulary in debriefs, vendor calls, and policy reviews. Skim the first section for the fast shared picture. Use the second when you are choosing which modality to wire into each funnel stage.

Plain-language summary

- What it means for you: Instead of flying or booking a room, you and the candidate connect over a screen or recording. The platform handles the logistics: scheduling link, recording consent, question set, and where the notes land after.

- How you would use it: Use it for any role where candidates are geographically distributed, or where scheduling across time zones, not the quality of the conversation itself, is the actual bottleneck.

- How to get started: Pick one stable role. Write the three questions you ask on every first screen. Choose a modality that fits the stage (async for volume, live for senior). Confirm consent language with legal before sending the first invite.

- When it is a good time: When geographic distribution is real, when scheduling is the constraint, and when you can staff a human review gate within five business days of completion.

When you are running live reqs and tools

- What it means for you: Virtual interview platforms move interview logistics from calendar back-and-forth to a link. The trade-off is that async gives you scale at the cost of real-time follow-up. Pair either modality with a rubric and a reply SLA, or you scale the evaluation pattern, not the quality.

- When it is a good time: When intake spikes from programmatic sourcing, when hiring managers decline to take early-screen calls, or when the same five questions appear on every first call for a stable high-volume role.

- How to use it: Wire the platform into your ATS so completed interviews trigger stage moves automatically. Keep AI-generated scores off the official record until they have passed an adverse impact audit. Use structured output patterns when pushing call notes or transcript excerpts back to the ATS candidate record.

- How to get started: Request the data processing agreement before any demo with real candidates. Confirm mobile and low-bandwidth completion works end to end. Test the consent flow with legal before inviting candidates. Resolve caption and accommodation requirements for async formats before launch.

- What to watch for: Completion drop-off after the invite link goes out, automated scoring overlays legal has not reviewed, vendor subprocessors who receive clip data outside your required data region, and silent ATS integration failures that leave candidates stuck in a stage without the recruiter noticing.
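One way to picture the "structured output" and "keep AI scores off the record" points together is a small Python sketch that builds the note pushed to the ATS. Everything here is hypothetical: the `InterviewNote` fields, the `to_ats_payload` function, and the `ai_scoring_audited` flag are illustrative names, not a real ATS or vendor schema. Your ATS will define its own field names and endpoint.

```python
import json
from dataclasses import asdict, dataclass
from typing import Dict, Optional


@dataclass
class InterviewNote:
    """Hypothetical shape for a note written back to the candidate record."""
    candidate_id: str
    stage: str
    modality: str                  # e.g. "phone", "live_video", "async"
    rubric_scores: Dict[str, int]  # criterion -> human reviewer score
    reviewer: str
    summary: str
    ai_score: Optional[float] = None


def to_ats_payload(note: InterviewNote, ai_scoring_audited: bool = False) -> str:
    """Serialize the note as JSON, dropping the AI score unless it has been audited."""
    data = asdict(note)
    if not ai_scoring_audited:
        # Assumption: unaudited AI scores never reach the official record.
        data.pop("ai_score")
    return json.dumps(data, sort_keys=True)


note = InterviewNote(
    candidate_id="cand-001",
    stage="first_screen",
    modality="async",
    rubric_scores={"clarity": 4, "problem_solving": 3},
    reviewer="reviewer-a",
    summary="Clear, specific answers to all three questions.",
    ai_score=0.42,
)
print(to_ats_payload(note))
```

Making the audit flag default to off means a forgotten argument fails safe: the human rubric scores go through and the automated score stays out.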

Where we talk about this

On AI with Michal live sessions, virtual interview formats come up in both the AI in recruiting and sourcing automation tracks: which modality belongs at which funnel stage, what the rubric needs to say before you hit send, and how you brief candidates so they trust an unfamiliar format. Bring your current screening volume, ATS name, and legal constraints to Workshops and work through them with practitioners who have run both sides of the process.

Around the web (opinions and rabbit holes)

Third-party creators move fast. Treat these as starting points, not endorsements, and double-check anything before you wire candidate data.

YouTube

- Virtual Interview Best Practices for Hiring Teams covers rubric setup and candidate communication patterns across live and async formats.

- Async Video Interview Platform Comparisons has practitioner walk-throughs of major platforms including candidate experience demos.

- AI Video Interview Bias and Compliance surfaces the legal and construct validity conversations worth having before you accept automated scoring.

Reddit

- Virtual interview tools teams actually keep after 6 months in r/recruiting collects honest practitioner answers on which platforms survive past the pilot.

- Async video completion rates and ghosting in r/recruiting surfaces candidate experience problems teams discover only after launch.

- Phone screen vs video screen which is better in r/recruiting is a practical comparison thread where recruiters share what actually changed their funnel metrics.

Quora

- What is the best virtual interview platform for recruiting? collects practitioner perspectives across industries (quality varies, read critically).

Modality comparison

| Factor | Phone screen | Live video | One-way async |
|---|---|---|---|
| Scheduling load | High: mutual time needed | High: mutual time needed | Low: candidate picks own time |
| Real-time follow-up | Available by phone | Available via video | Not available |
| ATS integration effort | Low (notes logged manually) | Minimal (link in invite) | Higher (consent, clip, stage sync) |
| AI scoring risk | Minimal | Lower (no clip capture by default) | Higher if overlays are enabled |
| Candidate drop-off | Near zero (verbal confirm) | Near zero (calendar confirmed) | 30 to 60 percent from invite |
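The async drop-off range in the last row translates directly into reviewer workload. Here is a minimal Python sketch of that arithmetic, using whole-number percentages to keep the results exact. The function names are hypothetical, and the 30 to 60 percent defaults simply restate the range in the table rather than a measured benchmark for your funnel.

```python
def expected_submissions(invites, drop_off_pct):
    """Clips you can expect back if drop_off_pct percent of invitees never submit."""
    if not 0 <= drop_off_pct <= 100:
        raise ValueError("drop_off_pct must be between 0 and 100")
    return invites * (100 - drop_off_pct) / 100


def submission_range(invites, low_drop_pct=30, high_drop_pct=60):
    """(worst case, best case) submissions across the drop-off range."""
    return (
        expected_submissions(invites, high_drop_pct),
        expected_submissions(invites, low_drop_pct),
    )


# 80 invites per week, matching the customer support example earlier on this page.
print(submission_range(80))          # (32.0, 56.0)
print(expected_submissions(80, 55))  # 36.0
```

Comparing your own measured drop-off against this range is a quick way to see whether the invite email, not the platform, is the first thing to fix.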

Related on this site

- Glossary: One-way video interview, Async screening, Video interview software, Video interviewing platforms, Best video interview software, Scorecard, Human-in-the-loop (HITL), Adverse impact (selection), AI bias audit, Workflow automation

- Blog: AI candidate screening

- Guides: Talent acquisition managers

- Course: Starting with AI: the foundations in recruiting

- Live cohort: Workshops

- Membership: Become a member