Video interviewing platforms

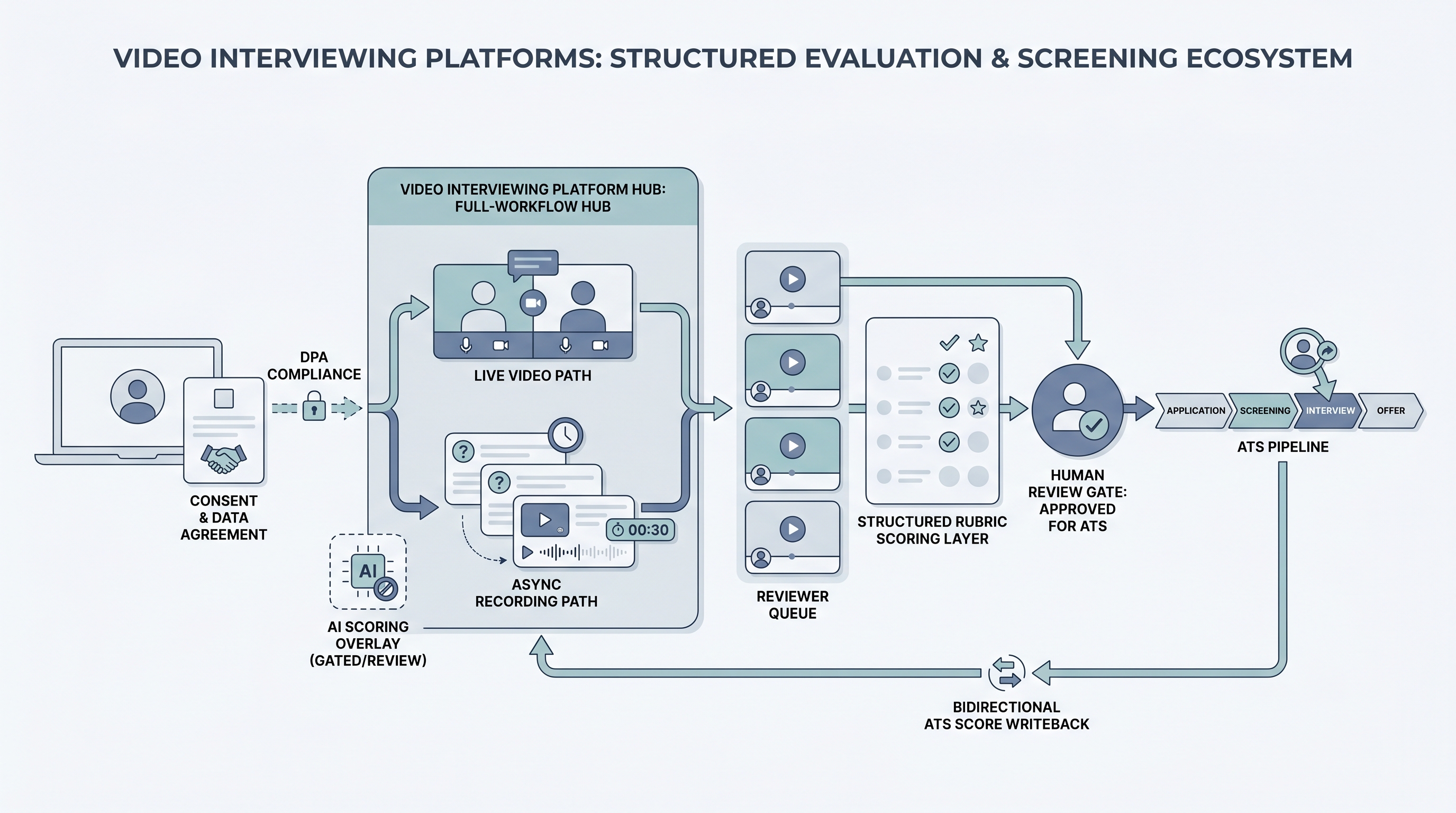

Video interviewing platforms are software systems that host both live and recorded candidate screening sessions, manage reviewer access and scoring, and connect to an ATS so hiring decisions flow without manual data entry between tools.

Michal Juhas · Last reviewed May 9, 2026

What are video interviewing platforms?

Video interviewing platforms are purpose-built systems that host candidate screening sessions over video, manage reviewer access and scoring, and connect to an ATS so results flow without manual data entry. They differ from general video call tools by handling the full workflow: candidate consent, structured question sets with time limits, clip storage, reviewer rubric collection, and stage updates.

Two formats appear across most platforms: live sessions where both parties join at the same time, and one-way async formats where candidates record answers to preset questions that reviewers watch later. Many platforms support both, with the choice driven by role volume, seniority level, and whether scheduling or evaluation quality is the binding constraint.

In practice

- A TA ops lead at a 200-person company piloting async video on a high-volume SDR role discovers the platform's ATS connector only triggers a notification, not a stage move. The coordinator still updates each candidate record by hand, which costs more time than the scheduling gain the tool was purchased to create.

- A recruiter evaluating two platforms side by side finds the cheaper option has a stronger HRM integration but weaker mobile completion rates. The team picks the one with better mobile UX because 60 percent of candidates in their market apply on a phone.

- A hiring manager asks to see AI-generated vocal pace scores alongside clips. The team reviews them for one batch, finds they disagree with the rubric on six of twelve candidates, and disables the overlay before anyone outside TA sees the output.
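The check in the third scenario is a simple agreement count between two sets of decisions. Here is a minimal Python sketch, assuming each candidate has a rubric-based advance decision and an AI overlay recommendation. The candidate IDs and decisions are made-up illustration data, and `disagreement_rate` is a hypothetical helper, not a vendor feature.

```python
def disagreement_rate(rubric: dict[str, bool], overlay: dict[str, bool]) -> float:
    """Share of candidates where the AI overlay and the human rubric disagree."""
    shared = rubric.keys() & overlay.keys()
    if not shared:
        raise ValueError("no candidates were scored by both the rubric and the overlay")
    disagreements = sum(1 for cid in shared if rubric[cid] != overlay[cid])
    return disagreements / len(shared)


# Hypothetical batch of twelve candidates: True means "advance".
rubric_decisions = {f"cand-{i}": i % 2 == 0 for i in range(12)}

# Illustration only: flip the overlay's call for the first six candidates.
overlay_decisions = {
    cid: (not decision if int(cid.split("-")[1]) < 6 else decision)
    for cid, decision in rubric_decisions.items()
}

rate = disagreement_rate(rubric_decisions, overlay_decisions)
print(f"Overlay disagrees with the rubric on {rate:.0%} of the batch")
```

A rate this high on a small batch does not show which side is right. It does show that the overlay is not measuring what the rubric measures, which is reason enough to keep it out of view until someone has investigated.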

Quick read, then how hiring teams use it

This is for recruiters, sourcers, TA, and HR partners who need the same vocabulary in debriefs, vendor calls, and policy reviews. Skim the first section when you need a fast shared picture. Use the second when you are deciding how a video interviewing platform fits into your ATS and screening stack.

Plain-language summary

- What it means for you: Instead of booking 20 to 40 phone screens per week, you send a structured link. Candidates record two to four questions on their own schedule. You and the hiring manager review clips with a shared rubric before deciding who advances.

- How you would use it: Use it for early-funnel roles where the same questions repeat on every first call, volume is high, and the real bottleneck is scheduling rather than the depth of conversation needed.

- How to get started: Write the three questions you ask on every first screen. Build a two-row rubric per question. Pilot on one role with more than 15 weekly applicants. Resolve consent language with legal before sending the first invite.

- When it is a good time: When scheduling is the constraint, when the same five questions appear on every first call for a stable role, and when you can staff a human review queue with a five-business-day turnaround.
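To make the "two-row rubric per question" step concrete, here is a minimal Python sketch. It assumes "two-row" means two scored criteria per question and that reviewers rate each criterion from 1 to 4. The class names, the example question, and the rating scale are all illustrative assumptions, not a standard.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RubricRow:
    criterion: str       # what the reviewer is rating
    strong_answer: str   # what a strong answer looks like


@dataclass(frozen=True)
class ScreeningQuestion:
    prompt: str
    rows: tuple[RubricRow, RubricRow]  # exactly two rows per question


def score_answer(question: ScreeningQuestion, ratings: dict[str, int]) -> float:
    """Average the reviewer's 1-4 ratings across the question's two rubric rows."""
    expected = {row.criterion for row in question.rows}
    if set(ratings) != expected:
        raise ValueError(f"ratings must cover exactly these criteria: {sorted(expected)}")
    if any(not 1 <= value <= 4 for value in ratings.values()):
        raise ValueError("each rating must be between 1 and 4")
    return sum(ratings.values()) / len(ratings)


# Hypothetical first-screen question for an SDR role.
question = ScreeningQuestion(
    prompt="Tell us about a time you turned a cold lead into a meeting.",
    rows=(
        RubricRow("Relevance", "Describes a specific lead and a concrete outcome"),
        RubricRow("Clarity", "Explains the steps in order, within the time limit"),
    ),
)

score = score_answer(question, {"Relevance": 3, "Clarity": 4})
print(score)
```

Writing down what a strong answer looks like for each row gives you and the hiring manager one shared standard to rate against.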

When you are running live reqs and tools

- What it means for you: For async formats, a video interviewing platform is a scheduling trade-off; for live formats, it is a structured collaboration surface. Async gains throughput and loses real-time follow-up. Without a rubric and a reply SLA, you get faster intake with the same evaluation inconsistency, now running at higher scale.

- When it is a good time: When intake spikes from programmatic advertising or automated outreach, when hiring managers decline screen calls but will review clips, or when you need to standardize question delivery across a distributed recruiting team.

- How to use it: Wire the platform to your ATS so submitted clips trigger stage moves and reviewer scores write back to the candidate record. Keep AI-generated scoring off the official record until a third-party AI bias audit clears the vendor. Use structured output patterns when exporting review notes back to the ATS.

- How to get started: Request the data processing agreement before any demo. Confirm data residency matches your GDPR or CCPA obligations. Test the ATS connector in staging with real candidate records before production. Confirm mobile completion works end to end. Set reviewer reply windows before the first invite batch goes out.

- What to watch for: Completion drop-off after the invite goes out (40 to 60 percent is typical), ghosting post-submission, AI scoring overlays legal has not reviewed, vendor subprocessors receiving clip data outside your required data region, and manual stage updates that signal the ATS integration is not working as documented.
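The write-back advice above can be sketched as a small export step: review notes go to the ATS as structured fields, and AI-generated scores stay out of the payload unless an audit has cleared them. This is a hedged Python sketch. The `ReviewNote` fields, the `ai_audit_cleared` flag, and the JSON shape are assumptions for illustration, not any vendor's or ATS's actual API.

```python
import json
from dataclasses import asdict, dataclass
from typing import Optional


@dataclass
class ReviewNote:
    candidate_id: str
    question_id: str
    reviewer: str
    rubric_score: int                 # human rating from the shared rubric
    comment: str
    ai_score: Optional[float] = None  # vendor overlay output, if any


def to_ats_payload(note: ReviewNote, ai_audit_cleared: bool = False) -> str:
    """Serialize a review note as JSON, dropping the AI score unless an audit cleared it."""
    record = asdict(note)
    if not ai_audit_cleared:
        # Keep AI-generated scoring off the official candidate record.
        record.pop("ai_score")
    return json.dumps(record, sort_keys=True)


# Hypothetical note from one reviewer on one clip.
note = ReviewNote(
    candidate_id="cand-042",
    question_id="q1",
    reviewer="recruiter@example.com",
    rubric_score=3,
    comment="Specific example, clear outcome.",
    ai_score=0.71,
)

payload = to_ats_payload(note)
print(payload)
```

Defaulting `ai_audit_cleared` to `False` means the overlay score reaches the record only when someone deliberately switches it on, so a forgotten setting cannot leak it.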

Where we talk about this

On AI with Michal live sessions, video platform choices come up in both the AI in recruiting and sourcing automation tracks: how to set up a rubric that survives a hiring manager review, where the human-in-the-loop gate belongs when AI scoring is on, and how to brief candidates so completion rates hold. Bring your ATS name, current screening volume, and legal questions to Workshops to work through them with practitioners who have run both sides of the process.

Around the web (opinions and rabbit holes)

Third-party creators move fast. Treat these as starting points, not endorsements, and double-check anything before you wire candidate data to an external platform.

YouTube

- Video Interview Platform Comparison for Recruiters has practitioner walk-throughs comparing HireVue, Spark Hire, Willo, and similar platforms on ATS integration and candidate experience.

- One-Way Video Interview Setup and Best Practices covers rubric design and candidate communication patterns that affect completion rates.

- AI Video Interview Bias Risk and Compliance surfaces compliance conversations worth watching before accepting automated scoring from any vendor.

Reddit

- Which video interviewing platforms do teams actually keep after 90 days? in r/recruiting collects honest post-pilot answers on which tools survive past onboarding.

- Async video interview completion rate problems in r/recruiting surfaces candidate experience issues teams find after launch.

- HireVue vs Spark Hire vs Willo comparison in r/recruiting is a useful cross-team comparison thread that covers ATS connector quality.

Quora

- Which video interviewing platform works best for recruiting teams? collects practitioner perspectives across industries and team sizes (quality varies, read critically).

General video call tool versus dedicated platform

| Factor | General video call (Zoom, Teams) | Video interviewing platform |
|---|---|---|
| Async recording | Manual setup required | Built in |
| Consent management | Manual | Managed by platform |
| Rubric and scoring | Separate tool needed | Integrated |
| ATS stage sync | Manual | Automated via connector |
| AI scoring overlays | None | Optional, audit before enabling |
| Setup time | Near zero | Days to weeks |

Related on this site

- Glossary: Video interview software, One-way video interview, Async screening, Best video interview software, Interviewing platform, Scorecard, Human-in-the-loop (HITL), Adverse impact (selection), AI bias audit, Workflow automation

- Blog: AI candidate screening

- Guides: Talent acquisition managers

- Course: Starting with AI: the foundations in recruiting

- Live cohort: Workshops

- Membership: Become a member