AI candidate sourcing

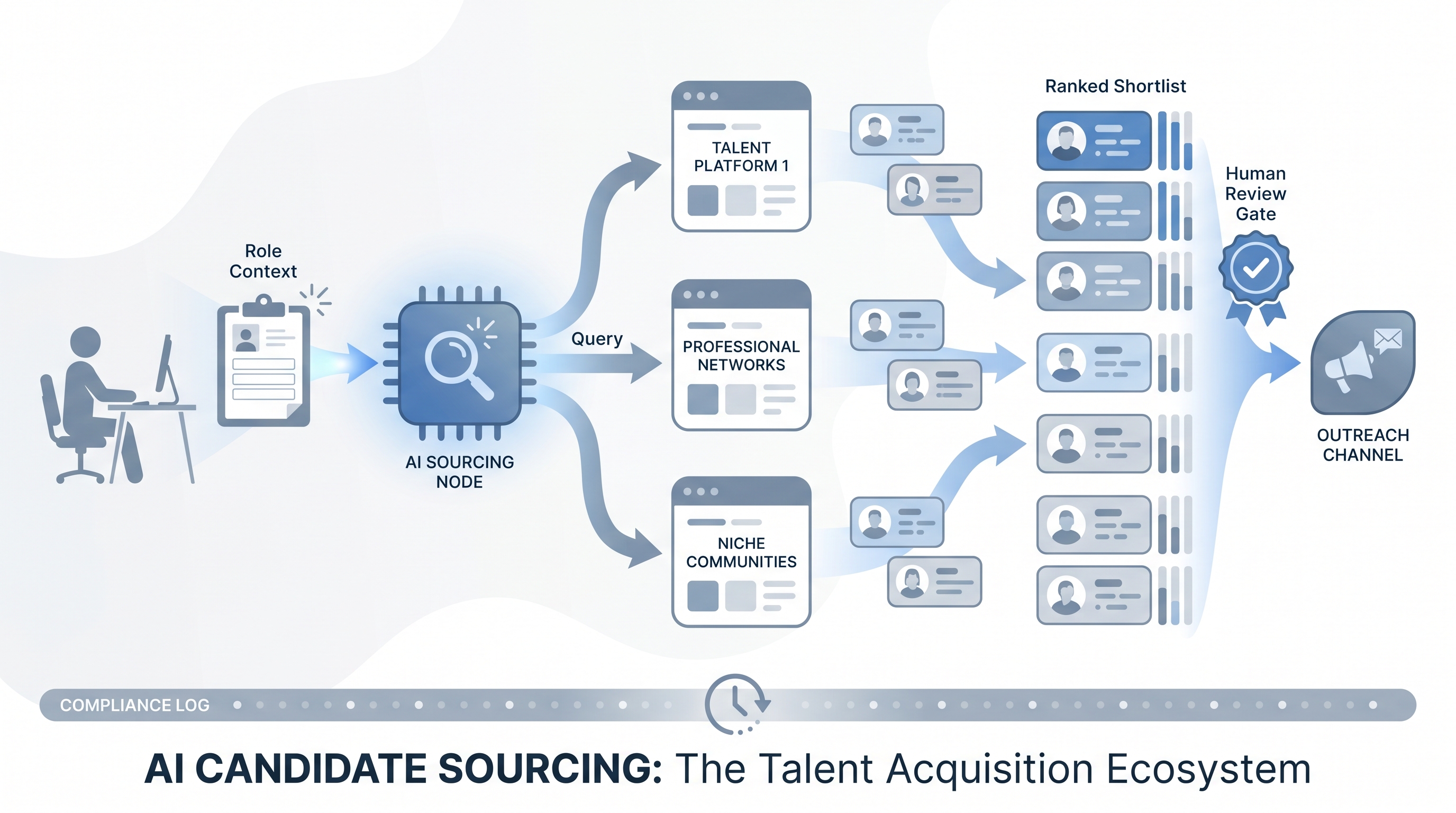

Using machine learning and large language models to discover, rank, and engage passive candidates at speed, from semantic profile matching and Boolean string generation to personalized outreach drafts and talent pool surfacing.

Michal Juhas · Last reviewed May 9, 2026

What is AI candidate sourcing?

AI candidate sourcing is the practice of using machine learning and large language models to find, rank, and engage candidates for open roles. Instead of relying on keyword search alone, an AI layer matches on intent: a sourcer looking for a payment infrastructure engineer gets profiles ranked by skill clusters and career context, not just by who typed the same words on a resume.
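The difference between keyword search and intent-based matching can be sketched with a toy example. This is a minimal illustration, not how any particular sourcing tool works: the `SKILL_CLUSTERS` table is a hand-written, hypothetical stand-in for what a learned embedding model would infer, and the profile data is invented.

```python
from math import sqrt

# Hypothetical skill clusters (assumption): a real tool would learn these
# relationships from data instead of using a hand-written table.
SKILL_CLUSTERS = {
    "payments": {"payments", "ledger", "pci", "card processing", "settlement"},
    "infrastructure": {"kubernetes", "terraform", "sre", "distributed systems"},
}


def cluster_vector(terms):
    """Count how many of the given terms fall into each skill cluster."""
    return [len(terms & members) for members in SKILL_CLUSTERS.values()]


def cosine(a, b):
    """Cosine similarity between two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = sqrt(sum(x * x for x in a)) * sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def keyword_match(brief_terms, profile_terms):
    """Plain keyword search: true only if at least one literal term is shared."""
    return len(brief_terms & profile_terms) > 0


def rank(brief_terms, profiles):
    """Order profile names by cluster-level similarity to the brief, best first."""
    brief_vec = cluster_vector(brief_terms)
    return sorted(
        profiles,
        key=lambda name: cosine(brief_vec, cluster_vector(profiles[name])),
        reverse=True,
    )


brief = {"payments", "kubernetes"}
profiles = {
    "A": {"ledger", "settlement", "terraform"},  # shares no literal keyword with the brief
    "B": {"kubernetes", "frontend", "react"},    # shares one literal keyword
}

print(keyword_match(brief, profiles["A"]))  # False: keyword search misses A
print(rank(brief, profiles))                # ['A', 'B']: cluster matching ranks A first
```

Profile A never uses the brief's words, so keyword search skips it, yet its skills sit in both clusters the brief cares about and it ranks above B.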

In practice it splits into four tasks: discovering passive profiles that match the brief, enriching contact details so outreach actually reaches inboxes, generating personalized first-touch messages, and prioritizing the resulting long-list so human review starts with the strongest candidates. The outputs feed into sourcer judgment, not past it. Every shortlist goes through a human-in-the-loop gate before any message goes out.

The tooling changes faster than the fundamentals. Whether a team uses a standalone AI sourcing platform, a sourcing layer inside an ATS, or a sequence of prompts in a general-purpose model, the workflow logic stays the same: brief the model well, review the output critically, and log what ran so you can audit for demographic drift and improve the brief next cycle.
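One way to "log what ran" is a small structured record per sourcing run. The sketch below is an assumption about what such a record might hold; the class name `SourcingRunRecord` and all of its fields are hypothetical and not tied to any ATS or vendor schema.

```python
import json
from dataclasses import dataclass, asdict, field
from datetime import datetime, timezone


@dataclass
class SourcingRunRecord:
    """One auditable entry per AI sourcing run (hypothetical field names)."""
    req_id: str
    tool_name: str
    tool_version: str
    brief: str
    reviewer: str
    shortlisted_ids: list
    # Timestamp is recorded in UTC when the record is created.
    ran_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def to_log_line(record):
    """Serialize a record as one JSON line, suitable for an append-only log."""
    return json.dumps(asdict(record), sort_keys=True)


record = SourcingRunRecord(
    req_id="REQ-001",                 # illustrative values only
    tool_name="example-sourcer",
    tool_version="1.0.0",
    brief="Payment infrastructure engineer; must-have: ledger systems",
    reviewer="sourcer@example.com",
    shortlisted_ids=["cand-17", "cand-42"],
)
print(to_log_line(record))
```

Keeping the brief and the tool version in the same entry as the reviewer makes it possible to trace a skewed shortlist back to the exact input that produced it.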

In practice

- A sourcer who says "the AI pulled 30 profiles I would never have found with Boolean" is describing intent-based matching: the tool translated their brief into a broader query and surfaced candidates whose skills cluster around the need, even when their titles do not match the req.

- When a TA lead asks "why did the model skip everyone from this background?" they are hitting the adverse impact risk that appears when a ranking model has absorbed historical hiring patterns that skewed narrow.

- A team running AI candidate sourcing in parallel with its usual manual process on two open roles for four weeks is using the standard calibration method before committing to a full subscription or permanently changing the sourcer workflow.
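For the parallel test in the last item, a simple way to compare the two arms is to look at which candidates each method found and how many the hiring manager accepted. This is a rough sketch under assumptions: the function name `compare_arms`, the candidate IDs, and the choice of "accepted by the hiring manager" as the quality signal are all illustrative.

```python
def compare_arms(ai_ids, manual_ids, accepted_ids):
    """Compare AI-sourced and manually sourced shortlists for one role.

    accepted_ids: candidates the hiring manager approved, from either arm.
    """
    ai, manual, accepted = set(ai_ids), set(manual_ids), set(accepted_ids)

    def acceptance(arm):
        # Share of an arm's candidates that were accepted; 0.0 for an empty arm.
        return len(arm & accepted) / len(arm) if arm else 0.0

    return {
        "overlap": sorted(ai & manual),
        "ai_only": sorted(ai - manual),
        "manual_only": sorted(manual - ai),
        "ai_acceptance": acceptance(ai),
        "manual_acceptance": acceptance(manual),
    }


result = compare_arms(
    ai_ids=["c1", "c2", "c3", "c4"],
    manual_ids=["c3", "c4", "c5"],
    accepted_ids=["c1", "c3", "c5"],
)
print(result["ai_only"])        # ['c1', 'c2']
print(result["ai_acceptance"])  # 0.5
```

The `ai_only` list shows profiles the manual process missed, and the acceptance rates show whether those extra profiles were actually worth reviewing.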

Quick read, then how hiring teams use it

This is for sourcers, recruiters, TA leads, and HR partners who need shared vocabulary when evaluating tools, writing briefs, or reviewing AI-sourced shortlists with hiring managers. Skim the first section for a fast shared picture. Use the second when you are running live reqs.

Plain-language summary

- What it means for you: AI candidate sourcing takes your description of who you need and finds candidates who fit that need, even when their resumes do not use the same words as the job posting.

- How you would use it: Write a brief with must-haves, nice-to-haves, and example career paths. Review the first shortlist critically with the hiring manager. Use the feedback loop to improve future results.

- How to get started: Pick one high-volume role type where sourcing takes the most hours per week and run a four-week parallel test against your current process before changing the workflow.

- When it is a good time: When you have enough volume that pattern-matching adds leverage, and when the role type is common enough that the model has seen similar profiles before.

When you are running live reqs and tools

- What it means for you: Every AI-ranked shortlist is a recommendation with a compliance obligation. Document which tool version ran, what brief it received, and who reviewed the output before anyone was advanced or rejected.

- When it is a good time: After you have validated shortlist quality on a sample set, confirmed the data processing agreement with legal, and confirmed your applicant tracking software integration pushes profiles cleanly without manual re-entry.

- How to use it: Pair the discovery layer with contact enrichment sourcing for outreach-ready profiles and workflow automation to push shortlisted profiles into the ATS. Use multi-channel talent sourcing to cover platforms the AI tool does not index.

- How to get started: Map data residency and retention before the first campaign. Run an AI bias audit after the first 200 profiles to check for demographic skew before scaling volume.

- What to watch for: Models that reproduce historical hiring bias, contact enrichment providers outside your approved vendor list, integration errors that create duplicate candidate records on retry, and outreach campaigns that fire before a human has read the draft.
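A first-pass check for demographic skew after the initial batch of profiles can use the four-fifths rule of thumb: a group whose selection rate falls below 80% of the highest group's rate is flagged for closer review. The sketch below is a screening heuristic only, with invented counts and hypothetical function names; it does not replace a full AI bias audit or legal review.

```python
def selection_rates(counts):
    """counts maps group -> (shortlisted, reviewed); returns group -> selection rate."""
    return {
        group: shortlisted / reviewed
        for group, (shortlisted, reviewed) in counts.items()
        if reviewed > 0
    }


def impact_ratios(counts):
    """Each group's selection rate divided by the highest group's rate."""
    rates = selection_rates(counts)
    if not rates:
        return {}
    top = max(rates.values())
    if top == 0:
        # Nobody was shortlisted, so there is no disparity to measure.
        return {group: 1.0 for group in rates}
    return {group: rate / top for group, rate in rates.items()}


def flagged_groups(counts, threshold=0.8):
    """Groups whose impact ratio falls below the four-fifths threshold."""
    return sorted(
        group for group, ratio in impact_ratios(counts).items() if ratio < threshold
    )


# Invented example: 200 reviewed profiles split across two groups.
counts = {"group_x": (30, 100), "group_y": (18, 100)}
print(flagged_groups(counts))  # ['group_y']
```

Here group_y is shortlisted at 18% against group_x's 30%, a ratio of 0.6, so it is flagged before volume scales.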

Where we talk about this

In AI with Michal live sessions, AI candidate sourcing runs through both tracks. Sourcing automation covers brief writing, output review, enrichment wiring, and what happens when a vendor changes an API. AI in recruiting connects sourcing outputs to the wider hiring funnel and covers how to explain AI-assisted decisions to hiring managers and compliance teams. Bring your current stack, the role types giving you the most sourcing friction, and your honest read on where manual effort still wins. Start at Workshops.

Around the web (opinions and rabbit holes)

Third-party creators move fast on this topic. Treat these as starting points, not endorsements. Verify compliance postures and integration claims directly with vendors before purchase or deployment.

YouTube

- How to source candidates using AI covers practitioner walkthroughs of live AI sourcing setups, brief-writing methods, and output review workflows.

- AI candidate sourcing tools comparison shows head-to-head tool comparisons with real role briefs rather than vendor demo scripts.

- Passive candidate sourcing with AI includes step-by-step builds for finding passive candidates outside job boards.

Reddit

- What AI tools do you use for sourcing? in r/recruiting collects candid in-production reports from sourcers with real volume.

- Is AI sourcing actually worth it? in r/recruiting separates tools that held up after renewal from those that looked good in a demo.

- Bias in AI sourcing tools in r/recruiting surfaces honest failure stories and audit approaches from practitioners.

Quora

- How do companies use AI to source candidates? collects practitioner explanations with varying detail; read alongside recent LinkedIn discussions from sourcers in your industry.

AI sourcing method comparison

| Method | Reach | Speed | Explainability | Compliance overhead |
|---|---|---|---|---|
| Manual Boolean search | Limited to keyword matches | Slow | High | Low |
| AI semantic search | Broad (intent-based) | Fast | Low | Medium |
| AI with seed profiles | Broad plus contextual fit | Fast | Medium | Medium |
| Warm network outreach | Narrow but pre-qualified | Varies | High | Low |

Related on this site

- Glossary: AI sourcing tools, Semantic search, Boolean search, Contact enrichment sourcing, Multi-channel talent sourcing, Outbound talent sourcing, Proprietary talent pool, Candidate data enrichment, AI bias audit, Adverse impact, Human-in-the-loop, Workflow automation

- Blog: AI sourcing tools for recruiters

- Guides: Sourcers

- Live cohort: Workshops

- Courses: Starting with AI: the foundations in recruiting

- Membership: Become a member