Selection tools for hiring

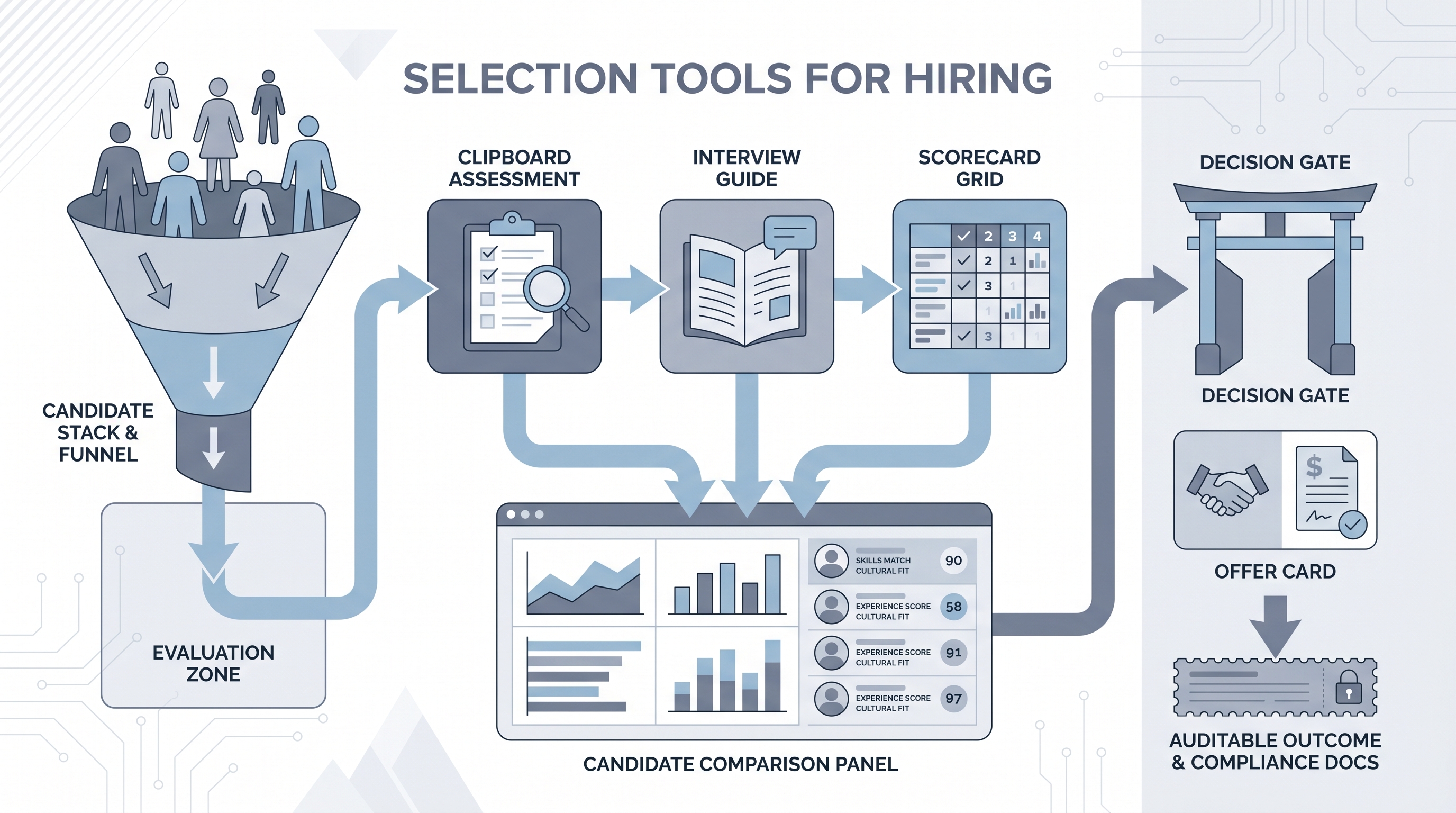

Software and structured processes that help hiring teams evaluate and decide on candidates after sourcing: assessments, scorecards, structured interview guides, video interview platforms, and comparison dashboards that replace informal gut-feel decisions with documented criteria.

Michal Juhas · Last reviewed May 15, 2026

What are selection tools for hiring?

Selection tools for hiring are the software and structured processes that teams use after sourcing to evaluate candidates and make offer decisions. The category includes skills assessments, cognitive and psychometric tests, structured interview guides, scorecards, video interview platforms, reference check tools, and comparison dashboards.

Each tool targets a specific decision point in the funnel: an assessment narrows the shortlist before the first call, a structured guide keeps the panel asking the same questions in sequence, and a scorecard anchors feedback before anyone discusses their favourite. Together they replace informal gut-feel decisions with documented criteria.

The tools only work when criteria are agreed before the search opens. Choosing a selection tool after the preferred candidate has already emerged is retrofitting structure onto a decision already made.

In practice

- A TA lead at a 500-person SaaS company described their selection process as three separate spreadsheets that nobody had agreed to share: a skills test the recruiter sent, a competency grid the hiring manager maintained, and a values rubric from the People team. None were visible to the others until after the debrief. Adding a proper tool meant choosing one shared scorecard, not buying more software.

- Practitioners on recruiting forums often contrast selection tools with sourcing tools by saying "sourcing fills the funnel, selection empties it properly." The distinction shapes where teams invest: high-volume roles need faster screening at the top; specialist or leadership roles need richer evaluation at the bottom.

- An HRBP preparing for an audit discovered their assessment vendor had no signed data processing agreement for EU candidates. The tool produced good scores; the compliance paperwork was missing entirely.

Quick read, then how hiring teams use it

This is for recruiters, sourcers, TA, and HR partners who need the same vocabulary in vendor evaluations, compliance reviews, and hiring manager conversations. Skim the first section for the shared picture. Use the second when you are setting up evaluation workflows or choosing specific tools.

Plain-language summary

- What it means for you: Selection tools are the software and structure you use to evaluate candidates fairly and consistently after they enter the pipeline: assessments, interview guides, scorecards, and comparison views so the panel works from the same criteria.

- How you would use it: Pick one stage in your current process where decisions feel inconsistent or undocumented. Add one tool there first: a shared scorecard, a short assessment, or a structured guide. Expand only after that stage runs cleanly.

- How to get started: Map your current evaluation steps and note where criteria are informal or unwritten. Choose a tool that matches the most inconsistent step. Run a small pilot with one role before rolling it out to the whole team.

- When it is a good time: When multiple interviewers disagree about what a good candidate looks like, when different hiring managers apply different standards to the same role, or when legal asks for a documented selection rationale.
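
As a rough illustration of what one shared scorecard can look like in practice, the sketch below collects each interviewer's ratings per criterion and flags the criteria where the panel diverges, so the debrief starts from the disagreement rather than from anyone's favourite. The 1-5 scale, the criterion names, and the `max_spread` threshold are all assumptions made for the example, not a recommended standard.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class Rating:
    interviewer: str
    criterion: str
    score: int  # hypothetical 1-5 scale agreed before the search opens


def summarize(ratings, max_spread=1):
    """Group ratings by criterion and flag criteria where interviewers diverge."""
    by_criterion = {}
    for r in ratings:
        by_criterion.setdefault(r.criterion, []).append(r.score)

    summary = {}
    for criterion, scores in by_criterion.items():
        spread = max(scores) - min(scores)
        summary[criterion] = {
            "mean": round(mean(scores), 2),
            "spread": spread,
            # A wide spread suggests the panel reads the rubric differently.
            "discuss": spread > max_spread,
        }
    return summary


# Illustrative ratings for one candidate, submitted before the debrief.
ratings = [
    Rating("recruiter", "sql_skills", 4),
    Rating("hiring_manager", "sql_skills", 4),
    Rating("peer", "sql_skills", 3),
    Rating("recruiter", "stakeholder_communication", 5),
    Rating("hiring_manager", "stakeholder_communication", 2),
    Rating("peer", "stakeholder_communication", 4),
]
result = summarize(ratings)
for criterion, stats in result.items():
    print(criterion, stats)
```

In this made-up data the panel agrees on `sql_skills` but splits by three points on `stakeholder_communication`, which is the kind of gap a shared scorecard is meant to surface before the discussion starts.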

When you are running live reqs and tools

- What it means for you: Selection tools expand your data and compliance surface. Each assessment vendor holds candidate data under its own DPA, retention schedule, and deletion mechanism. A right-to-erasure request means acting across every tool, not only the ATS.

- When it is a good time: Before enabling AI scoring features in any assessment or video interview platform. Confirm pass-rate parity by demographic group, log which model version produced each score, and keep human review before candidates advance to offer stage.

- How to use it: Standardize tool conditions: every candidate in the same role takes the same assessment, sees the same instructions, and is scored on the same rubric. Document which tool owns which decision stage and who reviews exceptions.

- How to get started: Audit your current selection tools: does each have a signed DPA, a defined data retention window, and a mechanism for deleting candidate data on request? Fix those before adding new tools.

- What to watch for: AI scoring features that activate by default, assessment libraries that accumulate candidate data indefinitely, rubric drift between hiring managers, and assessment scores used as final decisions rather than one input among several.
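
The pass-rate parity check mentioned above can be sketched in a few lines. This is a minimal example under stated assumptions: the record fields, the group labels, and the `scorer-v2` version string are hypothetical, and the 0.8 threshold follows the four-fifths rule of thumb used in US adverse impact analysis. Treat a flag as a prompt for human review, not as a legal finding.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class ScoreRecord:
    candidate_id: str
    group: str          # demographic group label (hypothetical field)
    passed: bool        # whether the candidate cleared the assessment
    model_version: str  # which scoring model produced the result


def pass_rates(records):
    """Return the share of candidates who passed, per group."""
    totals = defaultdict(int)
    passes = defaultdict(int)
    for r in records:
        totals[r.group] += 1
        passes[r.group] += int(r.passed)
    return {g: passes[g] / totals[g] for g in totals}


def impact_ratios(rates):
    """Divide each group's pass rate by the highest group's pass rate."""
    top = max(rates.values())
    if top == 0:
        return {g: 1.0 for g in rates}  # nobody passed, so no disparity to measure
    return {g: rate / top for g, rate in rates.items()}


def flag_groups(ratios, threshold=0.8):
    """List groups whose ratio falls below the chosen threshold."""
    return sorted(g for g, ratio in ratios.items() if ratio < threshold)


# Illustrative data: 40 of 80 pass in group_a, 18 of 60 pass in group_b.
records = (
    [ScoreRecord(f"a{i}", "group_a", i < 40, "scorer-v2") for i in range(80)]
    + [ScoreRecord(f"b{i}", "group_b", i < 18, "scorer-v2") for i in range(60)]
)
rates = pass_rates(records)
ratios = impact_ratios(rates)
flagged = flag_groups(ratios)
versions = sorted({r.model_version for r in records})
print(rates, ratios, flagged, versions)
```

With this invented data, group_b passes at 30% against 50% for group_a, a ratio of 0.6, so it is flagged for review. Storing `model_version` on every record means the same check can be re-run per version when the vendor updates its scoring model.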

Where we talk about this

On AI with Michal live sessions, selection tools come up across both tracks. The AI in recruiting track covers structured evaluation design, scorecard calibration, and how AI scoring features interact with fair hiring obligations. The sourcing automation track shows how selection tools connect to the broader pipeline without creating decision bottlenecks. Start at Workshops, and bring your current selection process and your top evaluation consistency pain points.

Around the web (opinions and rabbit holes)

Third-party creators move fast and tooling changes frequently. Treat these as starting points, not endorsements, and check anything before connecting candidate data to a new system.

YouTube

- Search "structured interviewing hiring" on YouTube for practitioner walkthroughs of rubric design and scorecard calibration. Filter by upload date: best-practice guidance changes as legal interpretations evolve.

- Search "pre-employment assessment recruiting" for independent reviews of assessment validity, candidate experience, and what breaks when volume increases.

- Search "hiring panel debrief structured interview" for the panel dynamics that selection tools are meant to support: how disagreements surface and how scoring anchors the conversation.

Reddit

- r/recruiting has recurring threads on which assessment tools hold up in production and where candidates report friction or accessibility issues.

- r/TalentAcquisition surfaces TA leader conversations on selection tool evaluation, rubric standardization, and the compliance gaps teams discover after deployment.

Quora

- "What tools do recruiters use to assess candidates?" collects practitioner answers on specific tools and where they fit in the funnel.

Selection tools versus informal evaluation

| Aspect | Informal evaluation | Selection tools |
|---|---|---|
| Criteria | Decided post-interview | Agreed before search opens |
| Consistency | Varies by interviewer | Same rubric, same conditions |
| Documentation | Notes or memory | Logged, auditable |
| Bias exposure | High | Reduced with calibration |
| Compliance | Gaps common | DPA and retention required |
| AI risk | Implicit assumptions | Explicit model and pass-rate audit |
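
The compliance row can be turned into a simple inventory check. The sketch below assumes a hand-maintained list of tools with three attributes per tool: a signed DPA, a defined retention window, and a way to delete candidate data on request. The tool names, stages, and field names are hypothetical, and a real audit would pull these facts from contracts and vendor documentation rather than from a script.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class SelectionTool:
    name: str
    stage: str                     # decision stage the tool owns
    dpa_signed: bool
    retention_days: Optional[int]  # None means no defined retention window
    deletion_mechanism: bool       # can candidate data be deleted on request?


def compliance_gaps(tool):
    """Return the missing compliance items for one tool."""
    gaps = []
    if not tool.dpa_signed:
        gaps.append("no signed DPA")
    if tool.retention_days is None:
        gaps.append("no defined retention window")
    if not tool.deletion_mechanism:
        gaps.append("no deletion mechanism on request")
    return gaps


def audit(tools):
    """Map each tool that has at least one gap to its list of gaps."""
    report = {}
    for tool in tools:
        gaps = compliance_gaps(tool)
        if gaps:
            report[tool.name] = gaps
    return report


# Illustrative inventory; every value here is invented for the example.
tools = [
    SelectionTool("skills_assessment", "screen", True, 180, True),
    SelectionTool("video_interview", "first interview", False, None, True),
    SelectionTool("shared_scorecard", "debrief", True, 365, False),
]
report = audit(tools)
for name, gaps in report.items():
    print(name, gaps)
```

The same inventory doubles as the list of systems to act on when a right-to-erasure request arrives, since each entry names a place where candidate data lives outside the ATS.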

Related on this site

- Glossary: Scorecard, Async screening, One-way video interview, Pre-employment assessment software, Adverse impact, AI bias audit, Human-in-the-loop (HITL), Applicant tracking software, Remote hiring tools, Recruitment tracking system

- Blog: AI sourcing tools for recruiters

- Guides: Sourcers

- Live cohort: Workshops

- Membership: Become a member