Skills assessment test for employment

A structured exercise that measures whether a job candidate can perform specific tasks the role requires, producing documented, job-relevant evidence before hiring decisions are made.

Michal Juhas · Last reviewed May 15, 2026

What is a skills assessment test for employment?

A skills assessment test for employment is a structured exercise that measures whether a candidate can perform specific tasks the role requires, before hiring decisions are made. The category covers timed online modules, practical work samples, take-home projects, and scenario-based exercises, each designed to produce observable, job-relevant evidence rather than a recruiter's impression from a resume or a brief call.

The distinction from personality tests and cognitive ability measures matters. Personality tests estimate behavioral tendencies; cognitive tests measure reasoning capacity; skills tests show what a candidate can actually produce in a defined scenario: write a brief, analyze a dataset, debug code, handle a customer objection, or build a small spreadsheet model. That difference in what they measure shapes when they belong in the hiring process and how to interpret the results alongside interview notes and scorecard ratings.

Skills tests only improve hiring consistency when the criteria they measure are agreed before the search opens and applied the same way to every candidate in the role. Adding a work sample after a preferred candidate has already emerged does not make the decision fairer; it gives the appearance of rigor without the substance.

In practice

- A sourcing agency added a short writing exercise to a client services role after noticing that candidates who interviewed well but wrote inconsistently were churning within three months. The exercise caught communication gaps the phone screen missed, and a pass-rate review after six cohorts showed no significant difference across demographic groups.

- On recruiting forums, sourcers distinguish skills tests from personality or psychometric tools by outcome: a skills test has a right answer or a quality judgment grounded in the actual work; a personality test does not. That distinction shapes which tests attract legal scrutiny and how debrief conversations run.

- A compliance team discovered that their third-party coding assessment vendor had no signed data processing agreement (DPA) covering EU candidate data. The test was producing useful scores, but the paperwork was missing entirely, and a single subject access request would have exposed the gap.
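
A gap like the one in the last example can be caught with a simple checklist run over the assessment stack. The sketch below is illustrative only: the `Vendor` record, its field names, and the sample vendors are hypothetical and do not come from any real platform or contract.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Vendor:
    """Hypothetical record for one assessment vendor; field names are assumptions."""
    name: str
    dpa_signed: bool                 # signed data processing agreement on file
    retention_days: Optional[int]    # defined retention window, None if undefined
    deletion_path_confirmed: bool    # vendor confirmed how candidate data is deleted on request


def audit_gaps(vendor: Vendor) -> list[str]:
    """Return the compliance gaps for one vendor (an empty list means none found)."""
    gaps = []
    if not vendor.dpa_signed:
        gaps.append("no signed DPA")
    if vendor.retention_days is None:
        gaps.append("no defined retention window")
    if not vendor.deletion_path_confirmed:
        gaps.append("no confirmed deletion path")
    return gaps


# Made-up vendors for illustration only.
stack = [
    Vendor("coding-test-vendor", dpa_signed=False, retention_days=365, deletion_path_confirmed=True),
    Vendor("writing-test-vendor", dpa_signed=True, retention_days=180, deletion_path_confirmed=True),
]

for v in stack:
    gaps = audit_gaps(v)
    print(f"{v.name}: {', '.join(gaps) if gaps else 'ok'}")
```

A real audit would pull these fields from contract records rather than a hand-written list, but the three checks stay the same.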

Quick read, then how hiring teams use it

This is for recruiters, TA partners, and HR leads who need a shared vocabulary across tool evaluations, hiring manager briefings, and compliance reviews. Skim the plain-language section for the shared picture. Use the second section when setting up assessment workflows or choosing specific tools.

Plain-language summary

- What it means for you: A skills assessment test shows what a candidate can actually do, not just what they claim. You give them a task, they complete it, and the output becomes documented evidence alongside interview notes and scorecard ratings.

- How you would use it: Identify the one competency that most consistently separates your strong hires from your early exits. Build or license a short test for that competency. Use it for every candidate at the same stage in the same role, scored against a rubric you agreed on before the first submission arrives.

- How to get started: Choose one current open role and draft a brief task that takes 20-40 minutes and mirrors real work. Score a few past submissions from recent hires to confirm the rubric is workable before you send it to live candidates.

- When it is a good time: When interview feedback consistently disagrees about candidate quality, when early attrition is linked to skill gaps the interview did not surface, or when legal or compliance has asked for documented selection rationale.
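
One way to confirm a rubric is workable before it goes live is to have two reviewers score the same pilot submissions independently and compare the results. The sketch below assumes a 1 to 5 scale, made-up scores, and an arbitrary one-point tolerance; none of these come from a specific tool or standard.

```python
def agreement_summary(scores_a, scores_b, tolerance=1):
    """Compare two reviewers' rubric scores for the same submissions.

    Returns the share of submissions scored identically, the share scored
    within `tolerance` points, and the indexes of submissions that differ
    by more than `tolerance` (candidates for a calibration discussion).
    """
    if len(scores_a) != len(scores_b) or not scores_a:
        raise ValueError("both reviewers must score the same non-empty set of submissions")
    diffs = [abs(a - b) for a, b in zip(scores_a, scores_b)]
    n = len(diffs)
    return {
        "exact": sum(d == 0 for d in diffs) / n,
        "within_tolerance": sum(d <= tolerance for d in diffs) / n,
        "to_discuss": [i for i, d in enumerate(diffs) if d > tolerance],
    }


# Hypothetical pilot: five submissions, each scored 1-5 by two reviewers.
reviewer_a = [4, 3, 5, 2, 4]
reviewer_b = [4, 2, 5, 4, 3]

summary = agreement_summary(reviewer_a, reviewer_b)
print(summary)
```

Submissions listed under `to_discuss` are a reasonable starting point for a calibration conversation, since a large gap can point to a rubric criterion that needs a clearer description.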

When you are running live reqs and tools

- What it means for you: Every skills assessment vendor holds candidate data under its own DPA, retention schedule, and deletion mechanism. A right-to-erasure request means acting across every connected system, not only the applicant tracking system (ATS), and the response clock starts when the candidate makes the request, not at your next quarterly review.

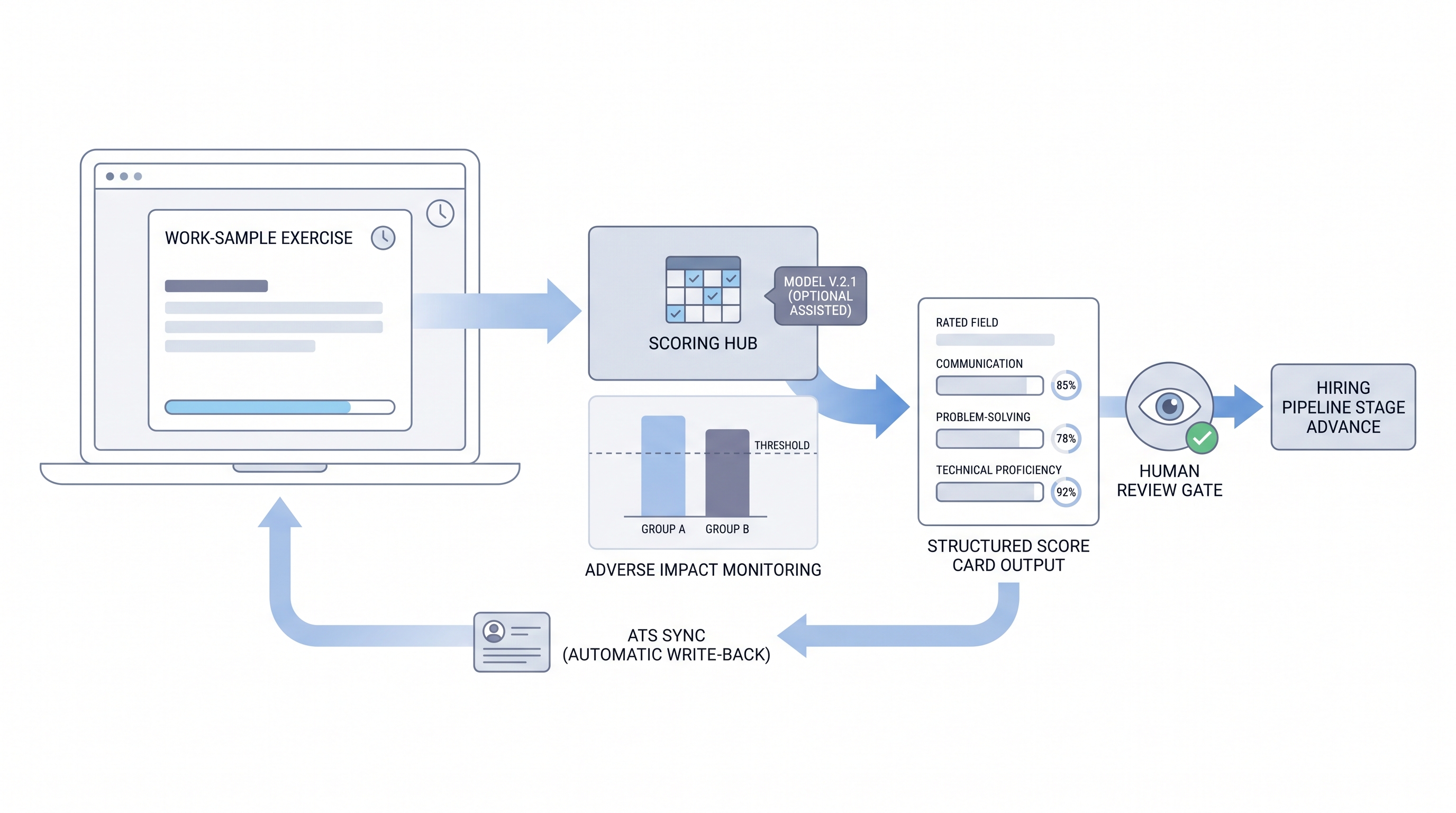

- When it is a good time: Before enabling any AI scoring feature in an assessment platform. Confirm pass-rate parity by demographic group, log which model version produced each score, and confirm a human reviewer sees the output before a decline decision is made.

- How to use it: Standardize conditions: same task, same instructions, same rubric, same time window for every candidate in the same role. Score before reviewing the resume to avoid anchoring. Document which tool owns which decision stage and who reviews exceptions before a candidate is declined.

- How to get started: Audit your current assessment stack: does each vendor have a signed DPA, a defined data retention window, and a confirmed deletion path for candidate data on request? Fix those gaps before adding new tools.

- What to watch for: Vendors activating AI scoring by default on platform updates, test libraries accumulating candidate data past the retention window, rubric drift when different hiring managers score the same submission differently, and scores treated as final rather than as one input alongside scorecard ratings and interview notes.
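
The pass-rate parity check mentioned above can be sketched as a selection-rate comparison. The example uses the four-fifths rule of thumb from the US Uniform Guidelines on Employee Selection Procedures, under which a group's pass rate below 80% of the highest group's rate is treated as a signal of possible adverse impact. The group labels and counts are made up, and a flag here is a prompt for closer review, not a legal conclusion.

```python
def impact_ratios(results, threshold=0.8):
    """Compute each group's pass rate and its ratio to the highest pass rate.

    `results` maps a group label to (passed, total). A ratio below
    `threshold` is flagged for review under the four-fifths rule of thumb.
    """
    rates = {}
    for group, (passed, total) in results.items():
        if total <= 0:
            raise ValueError(f"group {group!r} has no candidates")
        rates[group] = passed / total
    best = max(rates.values())
    if best == 0:
        raise ValueError("no group has any passing candidates")
    return {
        group: {
            "pass_rate": rate,
            "ratio": rate / best,
            "flag": rate / best < threshold,
        }
        for group, rate in rates.items()
    }


# Made-up counts for illustration: (passed, total) per group.
results = {"group_a": (30, 50), "group_b": (21, 50)}

report = impact_ratios(results)
for group, row in report.items():
    status = "REVIEW" if row["flag"] else "ok"
    print(group, f"pass_rate={row['pass_rate']:.2f}", f"ratio={row['ratio']:.2f}", status)
```

With small cohorts the ratio swings widely from one hire to the next, so it is worth pairing this check with a minimum sample size before drawing conclusions.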

Where we talk about this

On AI with Michal live sessions, skills assessment tests for employment come up in both tracks. The AI in recruiting track covers how to evaluate AI scoring features before enabling them and how structured assessments connect to scorecard design and debrief conversations. The sourcing automation track covers how assessments integrate with ATS pipelines without creating parallel records. Start at Workshops, and bring your current assessment setup and the competency you most need to measure reliably.

Around the web (opinions and rabbit holes)

Third-party creators move fast and tooling changes frequently. Treat these as starting points, not endorsements, and check any tool before connecting candidate data to a new system.

YouTube

- Search "work sample test hiring" on YouTube for practitioner walkthroughs of exercise design, rubric calibration, and what breaks when volume scales. Filter by upload date, because legal interpretations change regularly.

- Search "skills based hiring assessment" for independent perspectives on replacing degree requirements with demonstrated competency and the compliance considerations that follow.

- Search "pre-employment skills test design" for the test development side: how to validate a task, set time windows, and confirm the rubric holds up across different scorers.

Reddit

- r/recruiting has recurring threads on which skills tests hold up in production, where candidates report friction or accessibility issues, and which tasks generate disputes about scoring.

- r/TalentAcquisition surfaces TA leader discussions on skills-based hiring, competency definition, and how to manage hiring manager resistance to structured assessment.

Quora

- "What are the best pre-employment skills assessment tests?" collects practitioner answers on tools and task design across industries and role types.

Skills assessment test versus resume screening versus personality test

| Dimension | Resume screening | Skills assessment test | Personality test |
|---|---|---|---|
| What it measures | Claimed history | Demonstrated task ability | Behavioral tendencies |
| Right answer exists | No | Yes (for most tasks) | No |
| Adverse impact risk | High (credential bias) | Moderate (task design matters) | Moderate (norm group bias) |
| Legal defensibility | Low (unstructured) | High (if validated) | Mixed (jurisdiction-dependent) |
| ATS integration | Native | Requires API or native sync | Varies by platform |

Related on this site

- Glossary: Employment assessment test, Employment skills assessment, Pre-employment skills assessment, Hiring assessment test, Candidate assessment tools, Selection tools for hiring, Assessment tools for recruitment and selection, Scorecard, Adverse impact, AI bias audit, Human-in-the-loop (HITL), Async screening, Applicant tracking software, Personality test for employment

- Blog: AI sourcing tools for recruiters

- Guides: Sourcers
